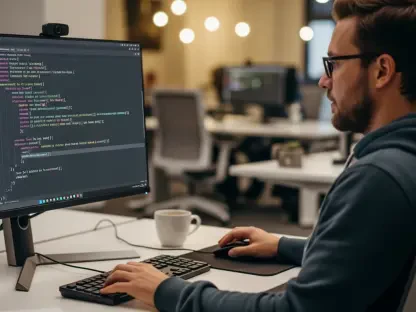

The traditional image of a data engineer hunched over a keyboard, manually debugging thousands of lines of SQL and Python, is rapidly giving way to a reality in which conversational intent drives machine learning workflows. While the technology industry has become accustomed to simple AI assistants that suggest the next line of code, Databricks has introduced something fundamentally different with the launch of Genie Code. This is not just a digital helper; it is an agentic system integrated into the very fabric of the Lakehouse environment. By transforming conversational prompts into executable machine learning workflows, this technology marks a departure from passive tools toward a future where the data platform itself acts as a primary collaborator in the engineering process.

This shift comes at a time when the sheer volume of data and the complexity of modern stacks have reached a breaking point. Organizations no longer just need a place to put their data; they need a system that understands what to do with it. Genie Code represents a move away from the “stitching” era, where humans acted as the glue between disparate tools, toward an era of autonomous orchestration. By embedding intelligence directly into the Lakehouse, Databricks addresses the fundamental friction that has historically slowed down the development of AI applications, paving the way for a more streamlined and intelligent data lifecycle.

Beyond Autocomplete: The Arrival of the Autonomous Data Agent

Genie Code represents a paradigm shift in how developers and data scientists interact with their computational environments. Unlike earlier iterations of AI assistants that functioned primarily as enhanced search engines or autocomplete scripts, this agent operates with a level of situational awareness. It understands the context of the entire data lake, including the relationships between tables, the nuances of existing pipelines, and the specific requirements of the underlying infrastructure. This allows the system to generate entire blocks of functional logic that are immediately ready for execution within the Databricks workspace.

The integration of such an agentic system into the Lakehouse allows for a more fluid movement between conceptualizing a solution and implementing it. Instead of spending hours writing boilerplate code to connect various services, engineers can now describe the desired outcome, such as an optimized feature engineering pipeline for a specific churn model. The agent then reasons through the necessary steps, selects the appropriate libraries, and constructs the workflow. This capability effectively transforms the data platform from a passive storage and compute engine into an active participant that shares the cognitive load of the development team.
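To make the churn example concrete, the following is a minimal sketch of the kind of feature engineering boilerplate an engineer might otherwise write by hand. It is not output from Genie Code and does not use any Databricks API; the function name, field names, and sample records are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from datetime import date


def build_churn_features(events, as_of):
    """Aggregate raw purchase events into one feature row per customer.

    `events` is a list of dicts with hypothetical keys
    `customer_id`, `day` (a date), and `amount`.
    """
    grouped = defaultdict(list)
    for event in events:
        grouped[event["customer_id"]].append(event)

    features = {}
    for customer_id, rows in grouped.items():
        last_seen = max(row["day"] for row in rows)
        features[customer_id] = {
            "order_count": len(rows),
            "total_spend": sum(row["amount"] for row in rows),
            # Recency is a common churn signal: long gaps suggest disengagement.
            "days_since_last_order": (as_of - last_seen).days,
        }
    return features


# Hypothetical sample data, not taken from any real dataset.
events = [
    {"customer_id": "c1", "day": date(2024, 1, 5), "amount": 40.0},
    {"customer_id": "c1", "day": date(2024, 3, 1), "amount": 60.0},
    {"customer_id": "c2", "day": date(2024, 1, 20), "amount": 15.0},
]
features = build_churn_features(events, as_of=date(2024, 3, 31))
```

In a Lakehouse setting the same aggregation would more plausibly run as SQL or Spark over governed tables; the plain-Python form is used here only so the logic is easy to follow.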

Furthermore, this transition signifies the end of fragmented development cycles. By providing a unified interface where natural language can be translated into sophisticated code, Databricks is closing the gap between business requirements and technical execution. The ability for the platform to autonomously suggest and build components based on high-level goals reduces the barrier to entry for complex data science tasks. This ensures that the focus remains on the strategic value of the data rather than the syntax of the programming languages used to manipulate it.

The Evolution from Data Storage to the AI Operating System

For many years, the Data Lakehouse was primarily defined by its ability to unify data warehousing and AI workloads into a single architectural framework. However, as enterprises struggle with the increasing complexity of modern data stacks, the focus has shifted from mere storage to intelligent orchestration. Genie Code emerges as a direct response to this complexity, aiming to bridge the gap between raw data and actionable intelligence. It addresses a critical pain point in the industry known as the “integration tax,” where data teams often spend more time managing infrastructure and connecting disparate tools than they do extracting actual business value.

This evolution signals the transition of Databricks from a processing engine into what experts describe as an “AI runtime.” In this new configuration, the platform functions as a governed environment where AI agents autonomously reason over data and execute business logic without constant human intervention. By centralizing the execution of these agents within the Lakehouse, Databricks provides a consistent layer of control that is often missing in multi-tool environments. This allows organizations to move away from fragile, hand-coded integrations toward a more resilient and self-managing system.

The strategic importance of this shift lies in how it positions the data platform as the central nervous system of the enterprise. When the infrastructure itself can understand and act upon the data it holds, it becomes the foundational layer for all subsequent AI applications. This transformation reduces the need for external orchestration layers, simplifying the overall architecture and allowing for a more cohesive approach to data management. Consequently, the Lakehouse is no longer just a destination for data; it is the engine that powers the next generation of autonomous business processes.

Architecting the Agentic Workflow

The true power of Genie Code lies in its ability to automate the heavy lifting of the data science and engineering lifecycle through specific, high-value functional layers. Instead of manually configuring experiment tracking or tuning computational resources, practitioners can now use the agent to orchestrate the entire MLflow lifecycle. The system handles the deployment and maintenance of models, allowing teams to focus on high-level strategy rather than the minutiae of pipeline health. This level of automation is intended to ensure that models are not only built faster but are also more robust and easier to manage over time.

One of the most significant bottlenecks in data engineering is the time lost to debugging and resolving performance issues. Genie Code proactively identifies model performance regressions and troubleshoots errors in real time. By providing immediate fixes within the SQL Editor or Lakeflow Pipelines, it significantly reduces the time spent manually combing through error logs and complex stack traces. This proactive stance toward system health allows data teams to maintain a higher velocity of development without sacrificing the reliability of their production environments.
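The idea of flagging a model performance regression can be illustrated with a small sketch. This is not how Genie Code detects regressions, which the source does not describe; it simply compares the mean of recent evaluation scores against a baseline and flags a drop larger than a tolerance. The function name, the `tolerance` default, and the scores are assumptions made for the example.

```python
from statistics import mean


def detect_regression(baseline_scores, recent_scores, tolerance=0.02):
    """Flag a regression when the mean score drops by more than `tolerance`."""
    baseline = mean(baseline_scores)
    recent = mean(recent_scores)
    drop = baseline - recent
    return {
        "baseline": baseline,
        "recent": recent,
        "drop": drop,
        "regressed": drop > tolerance,
    }


# Hypothetical accuracy scores from earlier and more recent evaluation runs.
report = detect_regression(
    baseline_scores=[0.90, 0.92, 0.91],
    recent_scores=[0.85, 0.86, 0.87],
)
stable = detect_regression(
    baseline_scores=[0.90, 0.92, 0.91],
    recent_scores=[0.90, 0.91, 0.90],
)
```

A fixed tolerance is the simplest possible rule; a production monitor would more likely account for run-to-run variance before raising an alert.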

Scaling AI in highly regulated industries requires rigorous compliance, and Genie Code addresses this by being built directly on top of Unity Catalog. Every automated workflow and generated script adheres to established access controls and audit standards, so that speed does not come at the expense of security or enterprise policy. This integration provides a unified governance framework that covers everything from raw data ingestion to the final AI-generated insight. By automating the governance layer, the system allows enterprises to innovate rapidly while maintaining the strict oversight required by modern regulatory environments.
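The principle that generated code must pass through access controls and leave an audit trail can be sketched as follows. This is a toy, in-memory model and not the Unity Catalog API: the `GRANTS` table, `AUDIT_LOG`, principals, table name, and both functions are hypothetical and exist only to show the check-then-record pattern.

```python
from datetime import datetime, timezone

# Hypothetical grants: (principal, table) -> set of allowed privileges.
GRANTS = {
    ("analyst", "sales.orders"): {"SELECT"},
    ("engineer", "sales.orders"): {"SELECT", "MODIFY"},
}
AUDIT_LOG = []


def authorize(principal, table, privilege):
    """Check a privilege and record the decision, whether allowed or denied."""
    allowed = privilege in GRANTS.get((principal, table), set())
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "principal": principal,
        "table": table,
        "privilege": privilege,
        "allowed": allowed,
    })
    return allowed


def run_generated_statement(principal, table, privilege, statement):
    """Refuse to run an agent-generated statement the principal is not granted."""
    if not authorize(principal, table, privilege):
        raise PermissionError(f"{principal} lacks {privilege} on {table}")
    # Execution is stubbed out; a real system would submit the statement here.
    return f"executed: {statement}"


result = run_generated_statement(
    "engineer", "sales.orders", "MODIFY",
    "UPDATE sales.orders SET status = 'closed' WHERE id = 1",
)
```

Denied attempts are logged as well as successful ones, since an audit trail that only records approvals would hide exactly the events a compliance review cares about.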

Expert Perspectives on the Strategic Pivot

Industry analysts view the introduction of Genie Code as a definitive move by Databricks to dominate the enterprise software stack. Stephanie Walter of HyperFRAME Research notes that by turning the Lakehouse into an AI runtime, the company is positioning itself as the “AI operating system” for the modern corporation. Unlike traditional Business Intelligence tools that merely visualize data, this new approach allows the platform to control the execution layer where AI applications actually live. This provides a level of platform depth that competitors struggle to match, as it integrates data engineering, ML operations, and generative AI under one roof.

This strategic pivot also changes the competitive landscape for data platforms. While other providers may offer similar code assistance tools, the deep integration of Genie Code into the core Lakehouse architecture offers a unique advantage. Analysts suggest that the ability to reason over the entire data lineage and governance model gives the agent a superior understanding of the enterprise context. This results in code that is not only technically correct but also aligned with the specific business rules and data structures of the organization.

Furthermore, the shift toward an AI-driven runtime is expected to consolidate the number of tools required by data teams. By offering a comprehensive solution that covers the entire lifecycle, from data prep to model deployment, Databricks is reducing the need for specialized third-party orchestration and monitoring services. This consolidation simplifies the procurement and management process for IT departments, making the platform a more attractive option for large-scale enterprise deployments. The expert consensus points toward a future where the ability to manage and execute AI agents becomes the primary differentiator in the data platform market.

Strategies for Integrating Genie Code into Enterprise Workflows

To fully leverage the capabilities of Genie Code, organizations should focus on reducing friction and maximizing the agent’s reach across their existing infrastructure. One of the most effective ways to do this is by utilizing the support for the Model Context Protocol (MCP) to connect the agent with third-party tools like Jira, GitHub, and Slack. This allows developers to trigger model training or pipeline updates directly from their project management software, ensuring that results are automatically synchronized without the need to jump between multiple dashboards.
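For readers unfamiliar with MCP, its messages follow JSON-RPC 2.0, and a client invokes a server-side tool with the `tools/call` method. The sketch below only builds such a request as a Python dictionary; it does not show how Genie Code itself is wired to any service. The tool name `create_issue` and its arguments are hypothetical, and the transport that would carry the message is omitted.

```python
import json
from itertools import count

# JSON-RPC requests carry an id so responses can be matched to them.
_request_ids = count(1)


def build_tool_call(tool_name, arguments):
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments; a real server advertises its own tools.
request = build_tool_call(
    "create_issue",
    {"project": "DATA", "summary": "Retrain churn model after schema change"},
)
payload = json.dumps(request)
```

In practice a client would first ask the server which tools it exposes before calling one, so that the name and argument schema come from the server rather than being hard-coded as they are here.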

Transitioning to an agent-first data culture also requires a shift in how teams approach their daily tasks. Engineers should move away from writing repetitive boilerplate code and instead focus on defining the underlying primitives and business logic. By allowing Genie Code to handle the “stitching” of feature engineering logic and orchestration code, data professionals can transition into roles that prioritize system architecture and observability. This shift not only increases productivity but also allows for the creation of more complex and scalable data systems.

Finally, it is vital to utilize built-in observability tools to maintain production integrity as more AI-generated code enters the environment. Monitoring how these pipelines behave in real time allows enterprises to benefit from the speed of automated development while keeping control over their systems. The integration of Genie Code into the modern enterprise promises to transform data engineering from a manual, labor-intensive process into a role of high-level strategic oversight. Organizations that adapt successfully to this new model can expect to shorten the time required to move from data discovery to production-ready AI applications.