The sheer scale of global investment in artificial intelligence has elevated PyTorch from a specialized academic library into foundational infrastructure for much of modern AI research and deployment. It now acts as a key layer of abstraction between diverse hardware architectures and the software that builds and runs machine learning models around the world. As the industry enters this new era, the PyTorch Foundation plays an increasingly critical role in keeping the software open, neutral, and accessible to developers and enterprises alike. Operating under the governance of the Linux Foundation, the project serves as a safeguard for a multi-billion-dollar ecosystem that depends on its stability. Mark Collier, a prominent figure in open-source infrastructure, noted in recent industry discussions that continued AI innovation depends entirely on maintaining this standard as a shared global public good rather than a proprietary asset.

Bridging the Gap Between Research and Production

The scope of the PyTorch ecosystem has expanded significantly beyond its historical roots in model training to cover the entire lifecycle of artificial intelligence. Training remains the foundational phase, where neural networks are built and learn their parameters from data, but the foundation has identified inference and agentic systems as the next frontiers of development. This broader scope helps developers map high-level mathematical operations efficiently onto the physical capabilities of GPUs and other AI accelerators at every stage. By bringing projects such as vLLM into its ecosystem, the foundation has bridged the gap between a raw trained model and a high-performance application that can serve millions of users. This evolution matters because inference is currently scaling much faster than training, as organizations move from experimental research to large-scale deployment of AI services across every major industry.

A fundamental change is under way in how digital infrastructure is used, driven by autonomous AI agents that operate as loops of repeated model calls. Unlike human-paced interactions, which follow relatively predictable patterns, agentic systems generate high-frequency, automated requests that place unusual stress on inference engines and network architectures. The PyTorch Foundation has positioned itself at the center of this shift by developing infrastructure that can handle these machine-driven workloads efficiently. The focus on agents marks a move away from static digital experiences toward AI systems that carry out multi-step tasks with increasing independence and speed. By supporting agentic systems as the third pillar of the AI stack, alongside training and inference, the foundation gives developers the groundwork to build systems that are not bottlenecked by the latency of manual input, and it helps keep the underlying software stack resilient enough for the next generation of automated intelligence.
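To make "loops of repeated model calls" concrete, here is a minimal sketch in Python. It assumes a hypothetical `call_model` function standing in for any inference endpoint; `run_agent`, `fake_model`, and the `DONE` stop convention are illustrative inventions, not part of PyTorch or of any particular agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stand-in for an inference endpoint: it receives the running
# transcript and returns the model's next message.
ModelFn = Callable[[list[str]], str]


@dataclass
class AgentRun:
    transcript: list[str] = field(default_factory=list)
    model_calls: int = 0


def run_agent(task: str, call_model: ModelFn, max_steps: int = 10) -> AgentRun:
    """Call the model repeatedly, feeding each reply back in, until a reply
    starts with 'DONE' or the step budget is exhausted."""
    run = AgentRun(transcript=[f"TASK: {task}"])
    for _ in range(max_steps):
        reply = call_model(run.transcript)  # one inference request per step
        run.model_calls += 1
        run.transcript.append(reply)
        if reply.startswith("DONE"):
            break
    return run


def fake_model(transcript: list[str]) -> str:
    """Scripted fake model (hypothetical) that finishes on its third call."""
    step = len(transcript)  # 1 task line plus the replies so far
    return "DONE: summary written" if step >= 3 else f"STEP {step}: calling a tool"


run = run_agent("summarize the logs", fake_model)
print(run.model_calls)  # prints 3
```

Even this toy task turns one human request into three back-to-back inference calls with no pause between them; real agents that call tools and re-plan can multiply that count many times over, which is the load pattern described above.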

Cultivating a Diverse and Competitive Hardware Market

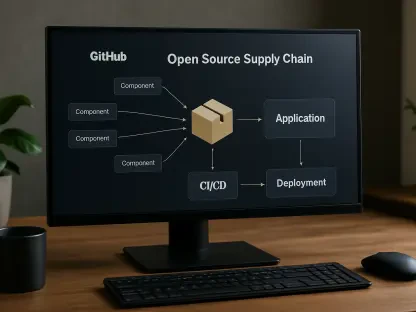

A healthy, innovative AI ecosystem depends on hardware diversity, which prevents market stagnation and helps drive down the high cost of large-scale computing. While a few manufacturers have historically dominated the silicon landscape, a new wave of competitors, ranging from established hyperscalers such as AWS and Google to specialized startups such as Cerebras, is seeking to disrupt the status quo. PyTorch is the enabling layer that makes these alternatives commercially viable by giving developers a common software interface. Without such a neutral abstraction layer, the barriers to entry would be prohibitive, because engineers would have to write custom code for every chip architecture they target. Because PyTorch code remains largely portable across accelerators, it removes much of the friction that would otherwise block adoption of more efficient or cost-effective hardware. This neutrality is the main mechanism that sustains a competitive marketplace in which hardware is judged on its performance.
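The portability described above is visible in everyday PyTorch code: the model is written once, and only the device choice depends on the hardware. The sketch below assumes a recent PyTorch release; `pick_device` is a hypothetical helper, and the small network is purely illustrative. Accelerators outside this list, such as Google TPUs through the separate PyTorch/XLA package, expose their own device types, so the selection helper would grow while the model code stays the same.

```python
import torch


def pick_device() -> torch.device:
    """Hypothetical helper: choose an available accelerator, else the CPU."""
    if torch.cuda.is_available():  # NVIDIA GPUs (ROCm builds of PyTorch also use "cuda")
        return torch.device("cuda")
    if torch.backends.mps.is_available():  # Apple silicon GPUs
        return torch.device("mps")
    return torch.device("cpu")


device = pick_device()

# The model and data definitions are identical whatever hardware is selected.
model = torch.nn.Sequential(
    torch.nn.Linear(4, 16),
    torch.nn.ReLU(),
    torch.nn.Linear(16, 2),
).to(device)

x = torch.randn(8, 4, device=device)  # a batch of 8 examples with 4 features
with torch.no_grad():
    y = model(x)

print(y.shape, y.device.type)
```

Nothing in the model definition refers to a vendor; switching hardware changes only which branch of `pick_device` fires.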

Hardware neutrality is strategically important in an industry where the path to market for new silicon is often blocked by complex software requirements. Once a vendor supports PyTorch on its chip, a new AI accelerator gains immediate access to a large community of developers who can use its specialized capabilities without learning an entirely new framework. This is what allows smaller semiconductor companies to compete with established giants: they can focus on architectural innovation instead of building a massive software ecosystem from scratch. The same standard also helps enterprises avoid being locked into rising costs set by a single vendor's pricing power. By maintaining a common software layer that supports everything from traditional GPUs to tensor processing units, the foundation gives organizations the flexibility to migrate workloads based on cost, power efficiency, and specific performance needs, an agility that is essential in a rapidly changing landscape.

Neutral Governance as a Barrier to Monopolistic Control

The transition of PyTorch from a Meta-managed project to a foundation-governed one was a strategic move to build long-term trust across the global technology community. A central part of this shift was the transfer of the PyTorch trademark to the foundation, which gives the community a structural assurance that the core software will remain open and accessible. This arrangement prevents any single corporation from making sudden licensing changes or introducing restrictive enterprise-only versions that could hinder independent developers. Under a neutral governance model, the ecosystem is also protected from abrupt policy shifts in which a dominant player rewrites the rules to maximize profit at its users' expense. This legal and organizational framework provides the stability that large enterprises require before committing their most critical workloads to an open-source project, and it keeps the foundational tools of AI a public resource for all.

Technical leadership within the PyTorch ecosystem follows a meritocratic model in which authority and contributor status are earned through sustained engineering work rather than financial contributions or membership fees. This keeps the project's technical direction in the hands of the people who understand the code most deeply and can best anticipate the challenges ahead. While the original creators still provide a significant share of the engineering talent, the foundation gives other organizations a structured path to earn leadership roles through high-quality code contributions. This gradual diversification of leadership is critical to building industry-wide confidence, because it demonstrates that no single company can unilaterally control the roadmap or favor its own proprietary interests. Trust accumulates as the community sees the project remain technically objective and focused on problems shared by all developers, which helps keep PyTorch at the forefront of machine learning frameworks.

Redefining Infrastructure for Cloud Native AI

The rapid emergence of autonomous AI agents is changing the requirements for cloud-native infrastructure, pushing it away from legacy systems designed around human interaction. Traditional web and cloud environments were built to handle sequential, relatively predictable requests from human users, whereas agentic workloads can trigger bursts of hundreds of thousands of API calls in rapid succession. This shift calls for new approaches to system monitoring, resource scaling, and network management to prevent bottlenecks and keep performance consistent at scale. The PyTorch Foundation is addressing these challenges by collaborating with other ecosystems on a more robust, automated inference environment that can absorb the volume of agent-driven traffic. Having recognized these patterns early, developers are building specialized orchestration layers that let cloud infrastructure adapt dynamically to machine-to-machine interactions, so that infrastructure facilitates progress rather than limiting it.
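One building block that such orchestration layers commonly rely on is admission control: capping how many requests reach a model replica at once, so that a burst queues instead of overwhelming the server. The sketch below illustrates that general technique with Python's `asyncio.Semaphore`; it is not a description of any specific PyTorch Foundation project. The per-replica limit `MAX_IN_FLIGHT` and the simulated `fake_inference` call are hypothetical.

```python
import asyncio
import random

MAX_IN_FLIGHT = 8  # hypothetical number of concurrent requests one replica can serve


async def fake_inference(request_id: int) -> int:
    """Hypothetical stand-in for a model call; sleeps briefly to simulate latency."""
    await asyncio.sleep(random.uniform(0.001, 0.005))
    return request_id


async def gated_call(sem: asyncio.Semaphore, request_id: int, stats: dict) -> int:
    async with sem:  # waits here while MAX_IN_FLIGHT calls are already running
        stats["in_flight"] += 1
        stats["peak"] = max(stats["peak"], stats["in_flight"])
        try:
            return await fake_inference(request_id)
        finally:
            stats["in_flight"] -= 1


async def handle_burst(n_requests: int) -> tuple[list[int], int]:
    sem = asyncio.Semaphore(MAX_IN_FLIGHT)
    stats = {"in_flight": 0, "peak": 0}
    # Simulate an agent fan-out: every request arrives at the same moment.
    results = await asyncio.gather(
        *(gated_call(sem, i, stats) for i in range(n_requests))
    )
    return list(results), stats["peak"]


results, peak = asyncio.run(handle_burst(1000))
print(len(results), peak)
```

Bounding concurrency keeps latency predictable for the requests that are admitted, but it does not add capacity; in practice it would sit alongside autoscaling of the replicas themselves so that the queue drains.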

Modern enterprises are increasingly adopting a co-design strategy that combines open-source projects from multiple foundations into a unified, sovereign AI stack. A typical combination pairs PyTorch for model training with a distributed computing framework such as Ray and a container orchestration system such as Kubernetes, yielding a resilient and scalable production environment. By reducing their reliance on closed-source, proprietary APIs, companies regain control over their data, costs, and architectural decisions. The trend is especially visible in organizations that train thousands of specialized models for internal use, which need flexible, open infrastructure that can support a wide variety of workloads. Integrating vLLM completes this stack by providing efficient model serving on top of a standard cloud-native foundation. This collaborative design effort is paving the way for high-performance AI that is as accessible and reliable as any other utility in the modern technology stack.

Strategic Imperatives for the Global Intelligence Stack

The industry has come to recognize the health of the open-source ecosystem as the single most important factor in democratizing artificial intelligence worldwide. Its leaders are steering a transition from proprietary silos to shared, neutral infrastructure that prioritizes accessibility and hardware portability above all else. This collective effort has established PyTorch as the de facto standard, allowing innovation at every level of the stack without fear of vendor lock-in or sudden licensing changes. Organizations that adopt this open stack are better positioned to integrate new silicon and to scale their agentic workloads with minimal technical debt. The foundation-led model acts as a safeguard against monopolistic bottlenecks and fosters a culture of collaborative engineering excellence. As the focus shifts toward massive-scale automated inference, integration with distributed computing frameworks is becoming the standard blueprint for high-performance deployments.