In the fast-evolving landscape of software development, a new trend has taken hold, captivating developers with its promise of speed and simplicity: vibe coding. This approach, driven by artificial intelligence (AI) tools built on large language models (LLMs), lets developers generate code from vague prompts or minimal instructions, seemingly slashing the time spent on tedious tasks. Yet beneath this glossy surface lies a minefield. Vibe coding, while alluring, often breeds habits that turn promising projects into frustrating failures: casual reliance on AI without oversight or understanding invites errors, inefficiencies, and unexpected costs. As the tech community embraces these tools with enthusiasm, it becomes crucial to dissect the hidden traps that can sabotage success. This exploration sheds light on the common missteps of vibe coders, offering a cautionary tale for those tempted to lean too heavily on AI without mastering its nuances. The focus is not just on the technology itself but on how developers interact with it, and on the balance between automation and human judgment that this practice needs.

The Seductive Promise and Hidden Dangers of AI Coding

Vibe coding has emerged as a captivating shortcut in software development, where a quick, loosely defined prompt fed into an AI tool can yield lines of code in mere seconds. The appeal is undeniable: developers can bypass hours of manual coding, drawing on models trained on large bodies of existing code to produce functional snippets almost effortlessly. Large language models, the backbone of this trend, promise to streamline workflows, especially for repetitive or boilerplate tasks. This efficiency can feel like a game-changer, particularly for tight deadlines or understaffed teams. However, the very simplicity that makes vibe coding so attractive often masks a critical flaw: over-reliance on AI without scrutiny. Developers, caught up in the excitement of instant results, may skip the vital step of validating outputs, assuming the technology is infallible. This blind trust can let subtle bugs or glaring errors slip into production, where they cost time and resources to fix. The seductive ease of vibe coding, while powerful, sets a trap for those who fail to balance automation with critical thinking, underscoring the need for a more cautious approach to harnessing AI’s potential.

Beyond the allure of speed, a deeper misunderstanding often plagues vibe coders: the belief that AI tools reason the way humans do. In reality, these models generate text by predicting likely continuations from patterns in their training data, which is not the same as understanding a developer’s intent. Expecting an LLM to interpret complex, unstated requirements or to know about things absent from its training data is a recipe for disappointment. For instance, a vague prompt about a niche framework might yield outdated or irrelevant code, as the AI draws from patterns it knows rather than adapting to unique needs. This gap between expectation and capability can frustrate developers who anticipate genius-level insights from a tool whose strength is recombining familiar patterns. Moreover, the conversational tone of many AI interfaces can reinforce this misconception, making interactions feel deceptively personal. Recognizing that these tools are not peers but utilities is essential to avoid misplaced trust. Vibe coders must temper their expectations, viewing AI as a helpful assistant rather than a replacement for domain expertise or problem-solving skills, lest they stumble into preventable setbacks rooted in a fundamental misreading of the technology’s nature.

Habits That Undermine Vibe Coding Effectiveness

One of the most pervasive traps in vibe coding is the tendency to accept AI-generated code at face value, without rigorous verification. Developers, enchanted by the polished output of large language models, often assume correctness, overlooking the potential for errors. Imagine a scenario where an AI provides a list of resource links or API calls, only for every single one to fail upon testing due to inaccuracies. Such oversights can grind projects to a halt, as time spent debugging faulty code piles up. This habit of blind trust stems from the agreeable, helpful demeanor engineered into many AI tools, designed to please rather than challenge. Yet, this very trait can mislead coders into skipping essential checks. Effective vibe coding demands a skeptical mindset, where every line of AI output is cross-checked against reliable sources or tested in context. Without this discipline, developers risk embedding flaws into their work, turning a tool meant to save time into a source of costly delays. The lesson is clear: automation should never eclipse the need for human oversight in ensuring quality and accuracy.
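
As one way to make "tested in context" concrete, the sketch below loads an AI-generated snippet and runs it against a few known input/output pairs before anyone accepts it. The helper name `verify_snippet` and the shape of the test cases are assumptions made for illustration, not part of any particular tool, and because `exec` runs arbitrary code, this should only ever be done in a sandbox or throwaway environment.

```python
from typing import Any


def verify_snippet(source: str, func_name: str,
                   cases: list[tuple[tuple, Any]]) -> list[str]:
    """Return a list of problems found; an empty list means every check passed.

    Hypothetical helper: `cases` is a list of (args, expected_result) pairs.
    """
    namespace: dict[str, Any] = {}
    try:
        # WARNING: exec runs arbitrary code. Use a sandbox for untrusted output.
        exec(compile(source, "<ai-snippet>", "exec"), namespace)
    except Exception as exc:  # includes SyntaxError raised by compile()
        return [f"snippet failed to load: {exc!r}"]

    func = namespace.get(func_name)
    if not callable(func):
        return [f"{func_name!r} is not defined as a callable"]

    problems: list[str] = []
    for args, expected in cases:
        try:
            actual = func(*args)
        except Exception as exc:
            problems.append(f"{func_name}{args} raised {exc!r}")
            continue
        if actual != expected:
            problems.append(
                f"{func_name}{args} returned {actual!r}, expected {expected!r}"
            )
    return problems
```

A caller would treat any non-empty result as a reason to reject or rework the snippet. Passing a handful of cases does not prove the code correct, but it catches the output that fails on first contact.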

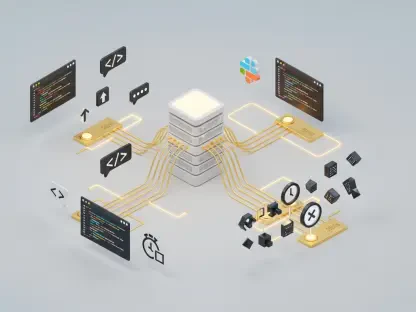

Another critical misstep lies in the assumption that all AI models are interchangeable, functioning identically regardless of the task. In truth, each large language model varies in design, training data, and parameter scale, which directly impacts performance. A model trained on older programming datasets might struggle with modern libraries or frameworks, producing irrelevant or obsolete code. Vibe coders who treat these tools as a one-size-fits-all solution often find themselves mismatched to their project’s needs, leading to suboptimal results. For example, a complex machine learning task might overwhelm a lightweight model better suited for basic scripting. Understanding these differences requires experimentation and research to select the appropriate tool for specific challenges. Ignoring this diversity can waste effort on outputs that fail to meet expectations. Developers must invest time in learning the strengths and limitations of various models to avoid this pitfall, ensuring that their choice aligns with the project’s technical demands rather than relying on a generic approach that undermines effectiveness.

Overlooked Operational and Financial Challenges

A significant yet often ignored aspect of vibe coding is the lack of discipline in managing interactions with AI tools, particularly when it comes to input size and clarity. Some developers, eager to offload entire codebases or sprawling problem statements into a single prompt, expect the AI to sift through the clutter and deliver precise solutions. This approach not only slows down processing times due to the sheer volume of data but also inflates costs, as many AI services bill based on token usage. The result is a double hit—wasted time and unexpected expenses. Moreover, overloading prompts can confuse the model, causing it to latch onto irrelevant details or miss the core intent. Effective vibe coding hinges on crafting concise, targeted inputs that guide the AI toward relevant outputs. By trimming unnecessary information and focusing on specific needs, developers can optimize both performance and budget. This operational discipline is not just a nicety but a necessity to prevent vibe coding from becoming a resource drain, highlighting how seemingly minor habits can snowball into major inefficiencies if left unchecked.
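
A rough sketch of that prompt discipline follows. It assumes a crude heuristic of about four characters per token, which is only an approximation; real tokenizers differ by model, and the price passed to `estimate_cost` is a placeholder the caller must supply from their provider’s actual rates. All function names here are hypothetical.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; assumes ~4 characters per token."""
    if not text:
        return 0
    return max(1, round(len(text) / chars_per_token))


def estimate_cost(tokens: int, price_per_1k_tokens: float) -> float:
    """Cost for a given token count at a caller-supplied price per 1,000 tokens."""
    return tokens / 1000 * price_per_1k_tokens


def select_within_budget(snippets: list[str], budget: int) -> list[str]:
    """Keep snippets, in priority order, until the token budget would be exceeded."""
    kept: list[str] = []
    used = 0
    for snippet in snippets:
        cost = estimate_tokens(snippet)
        if used + cost > budget:
            break
        kept.append(snippet)
        used += cost
    return kept
```

Ordering the snippets by relevance before calling `select_within_budget` means the material most likely to matter is what survives the cut, rather than whatever happened to come first in the file.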

Equally troubling is the financial and operational risk of granting AI excessive autonomy over critical tasks. Vibe coders sometimes relinquish control, allowing models to make decisions or execute actions without thorough oversight, under the assumption that the technology can handle complex responsibilities. This can lead to catastrophic outcomes, such as an AI script inadvertently wiping out a live database due to a misinterpreted command. The randomness inherent in AI outputs amplifies this danger, as even well-intentioned suggestions can veer into destructive territory without human intervention. Maintaining a firm grip on pivotal decisions is non-negotiable, with AI positioned as a supportive tool rather than a standalone decision-maker. Token-based billing further complicates matters, as unchecked usage during automated processes can spiral into significant costs. Developers must establish clear boundaries, ensuring that AI contributions are vetted and aligned with project goals. This balance prevents operational disasters and financial strain, underscoring the importance of vigilance in managing the scope of AI’s role within development workflows.
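
One possible shape for such a boundary is an approval gate: AI-proposed SQL runs freely when it only reads data, but anything that looks destructive is blocked unless a human signs off. The sketch below is deliberately naive (it splits on semicolons and matches on the leading keyword, so it can be fooled by comments or string literals), and the names `requires_approval`, `run_ai_sql`, `execute`, and `approve` are illustrative assumptions rather than any library’s API.

```python
import re
from typing import Any, Callable

# Statements that modify or remove data or schema; extend to suit the project.
DESTRUCTIVE = re.compile(r"^\s*(DROP|TRUNCATE|DELETE|UPDATE|ALTER)\b", re.IGNORECASE)


def requires_approval(sql: str) -> bool:
    """True if any semicolon-separated statement starts with a destructive keyword."""
    return any(DESTRUCTIVE.match(statement) for statement in sql.split(";"))


def run_ai_sql(sql: str,
               execute: Callable[[str], Any],
               approve: Callable[[str], bool]) -> Any:
    """Run AI-proposed SQL, asking a human first if it looks destructive.

    `execute` performs the statement; `approve` asks a person and returns
    True only on explicit consent. Both are supplied by the caller.
    """
    if requires_approval(sql) and not approve(sql):
        raise PermissionError("destructive statement blocked pending human approval")
    return execute(sql)
```

In practice this kind of gate works best alongside least-privilege database credentials, so that a missed pattern still cannot reach production data.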

Navigating Code Quality and AI Limitations

The quality of AI-generated code often suffers from inconsistency, a direct consequence of the inherent randomness in large language model outputs. Vibe coders frequently cobble together disparate snippets without adhering to uniform coding standards, resulting in a fragmented, hard-to-maintain codebase. This patchwork approach can create headaches down the line, as debugging becomes a tangle of mismatched styles and logic. For instance, one AI response might follow a functional programming paradigm while another leans on object-oriented principles, clashing within the same project. Such discrepancies complicate collaboration and future updates, eroding the very efficiency vibe coding aims to achieve. To counter this, developers must take an active role in harmonizing outputs, enforcing consistent style guidelines manually or through additional tools. This hands-on effort ensures that AI contributions integrate seamlessly into the broader project, preserving readability and structure. Without this intervention, the shortcut of vibe coding risks becoming a long-term liability, emphasizing that automation cannot substitute for the deliberate craftsmanship required in software development.
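
As a small illustration of enforcing a style rule through tooling, the sketch below uses Python’s standard `ast` module to flag function names that are not snake_case. It checks a single convention, chosen here as an assumption about the project’s style guide; established linters and formatters cover far more ground, and the function name is hypothetical.

```python
import ast
import re

# Optional leading underscores, then lowercase letters, digits, and underscores.
SNAKE_CASE = re.compile(r"^_*[a-z][a-z0-9_]*$")


def non_snake_case_functions(source: str) -> list[str]:
    """Return names of functions in `source` that break the snake_case rule."""
    tree = ast.parse(source)
    return [
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and not SNAKE_CASE.match(node.name)
    ]
```

Running a check like this on every AI-generated snippet before it is merged keeps stylistic drift from accumulating one paste at a time.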

Another persistent challenge lies in the limitations of AI, particularly its tendency to produce biased outputs or outright fabrications, often termed “hallucinations.” These models draw from training data that may carry biases, such as favoring older, more common solutions over innovative ones, which can skew results in larger projects. Even more frustrating are the instances where AI invents nonexistent elements, like imaginary library functions, complete with plausible documentation that wastes hours of troubleshooting. Vibe coders who fail to question every output may chase these false leads, squandering valuable time. Staying alert to these flaws requires a mindset of constant verification, cross-referencing AI suggestions against trusted resources. Additionally, awareness of potential biases in training data helps developers anticipate and mitigate skewed recommendations. This vigilance is not merely a precaution but a fundamental aspect of using AI responsibly, ensuring that vibe coding does not devolve into a cycle of correcting phantom errors or reinforcing outdated practices. Only through such scrutiny can the true value of AI tools be realized without succumbing to their inherent imperfections.
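
For Python code, one cheap check against invented library functions is to confirm that a dotted name actually resolves in the installed environment before building on it. The sketch below uses the standard library’s `importlib`; the helper name `symbol_exists` is an assumption for illustration. Note that importing a module executes its top-level code, so this belongs in a development environment, not on untrusted package names.

```python
import importlib


def symbol_exists(dotted_name: str) -> bool:
    """True if a name like 'json.dumps' resolves to a real module or attribute."""
    parts = dotted_name.split(".")
    try:
        obj = importlib.import_module(parts[0])
    except (ImportError, ValueError):
        return False

    path = parts[0]
    for part in parts[1:]:
        path = f"{path}.{part}"
        if hasattr(obj, part):
            obj = getattr(obj, part)
            continue
        # Not an attribute yet: it may be a submodule that has not been imported.
        try:
            obj = importlib.import_module(path)
        except ImportError:
            return False
    return True
```

A check like this only confirms that the name exists, not that it behaves as the AI described, so the official documentation remains the reference for signatures and semantics.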

Building a Smarter Approach to Vibe Coding

Taken together, these challenges show how often vibe coding goes wrong through overconfidence and neglect. Blind trust in AI outputs lets unnoticed errors creep into live systems, while misunderstanding the non-human nature of large language models fosters unrealistic expectations. Operational missteps, like overloading prompts or ignoring token costs, quietly drain budgets, and unchecked AI autonomy can trigger severe setbacks in critical environments. Inconsistent code quality and the pursuit of AI hallucinations compound the frustration, turning potential time-savers into sources of delay. The picture is clear: vibe coding, though innovative, is easy to mishandle without proper caution.

Moving forward, the path to effective vibe coding lies in adopting a disciplined, informed mindset. Developers should prioritize rigorous validation of AI outputs, treating every suggestion as a starting point rather than a final solution. Tailoring approaches to the specific strengths of different models can optimize results, while concise prompts and controlled usage help manage costs and efficiency. Maintaining human oversight over critical decisions ensures safety, and enforcing coding standards preserves project integrity. By questioning biases and verifying against reliable sources, the risks of AI limitations can be minimized. Embracing these strategies transforms vibe coding from a risky gamble into a powerful ally, balancing automation with expertise to drive sustainable progress in software development.