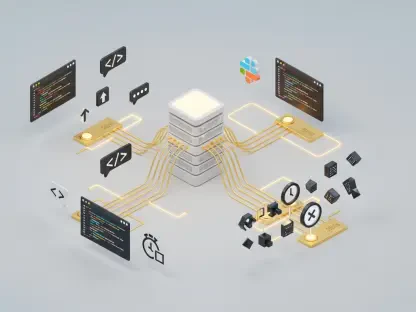

The global demand for high-performance computing has reached a critical bottleneck: a handful of centralized tech giants control the vast majority of the world’s processing power. This concentration of silicon and data-center capacity creates a high barrier to entry for smaller innovators and raises significant concerns about data sovereignty. Decentralized AI computing has emerged as a disruptive alternative, shifting the architectural focus from massive, centralized server farms to a globally distributed network of consumer-grade hardware. By using blockchain technology to orchestrate these resources, this model seeks to turn every connected device into a functional unit of a global supercomputer.

The Convergence: Distributed Ledger Technology and AI

The evolution of Pi Network from a mobile-based blockchain experiment into a robust utility provider marks a significant shift in how we perceive digital ecosystems. Traditional blockchain models often suffer from “useless” computation, where energy is expended solely to secure a network without producing any external value. The decentralized AI model takes a different path, pivoting toward a proof-of-utility framework in which the network’s spare capacity performs work that is valuable outside the chain. This transition allows the network to maintain its security while also directing its vast computational resources toward the heavy numerical workloads that machine learning requires, such as model training and inference.
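
For contrast, the security-only work described above can be sketched as a minimal hash-based proof-of-work loop. This is a generic illustration of proof-of-work, not Pi Network’s consensus mechanism. The function name `proof_of_work` and the difficulty scheme (a required number of leading hex zeros) are assumptions chosen for clarity.

```python
import hashlib


def proof_of_work(block_data: str, difficulty: int) -> tuple[int, str]:
    """Search for a nonce whose SHA-256 digest starts with `difficulty` hex zeros.

    The only output is the nonce itself. It proves that effort was spent,
    but the computation has no use beyond securing the chain.
    """
    target_prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()
        if digest.startswith(target_prefix):
            return nonce, digest
        nonce += 1


# Brute-force a nonce for an illustrative block at a low difficulty.
nonce, digest = proof_of_work("example block", difficulty=4)
print(nonce, digest)
```

Each additional leading hex zero multiplies the expected number of hashes by 16. That exponential cost is why this style of security consumes so much energy while producing nothing reusable.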

This convergence is not merely a technical upgrade but a strategic move away from the centralized cloud dominance of the past decade. By 2026, the case for decentralized alternatives has grown stronger as the cost of traditional cloud services continues to climb. A distributed model provides a democratic alternative, ensuring that the power to train and run AI models is not restricted to those with the deepest pockets. It represents a fundamental restructuring of the internet’s backend, moving from a client-server relationship to a peer-to-peer resource exchange.

Core Architectural Components of Decentralized AI

Distributed Node Infrastructure: The Mesh Network

At the heart of this technological shift is a massive infrastructure of over 421,000 active nodes. These nodes form a global mesh network that operates on a “spare capacity” model, using the idle CPU and GPU cycles of personal computers. Unlike traditional data centers, which require massive cooling systems and dedicated real estate, this distributed approach spreads the environmental and physical load across the globe. Because the work is spread over so many independent machines, the network is also resilient: even if thousands of nodes go offline, the collective processing power remains stable and accessible.
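
The resilience claim rests on redundancy: a task survives an outage as long as at least one node holding it stays online. The sketch below assumes each task is replicated on three randomly chosen nodes. The replication factor, the node and task counts, and the helper names are all illustrative assumptions, not parameters of the actual network. With 20% of nodes offline, the chance that a given task loses all three replicas is roughly 0.2³ ≈ 0.8%.

```python
import random


def assign_replicas(task_ids, node_ids, replication, seed=0):
    """Map each task to `replication` distinct nodes chosen at random."""
    rng = random.Random(seed)
    return {task: rng.sample(node_ids, replication) for task in task_ids}


def surviving_tasks(assignment, offline):
    """Return the tasks that still have at least one replica on an online node."""
    return {
        task
        for task, nodes in assignment.items()
        if any(node not in offline for node in nodes)
    }


# Illustrative scale only: 10,000 nodes, 1,000 tasks, 3 replicas per task.
nodes = [f"node-{i}" for i in range(10_000)]
tasks = [f"task-{i}" for i in range(1_000)]
assignment = assign_replicas(tasks, nodes, replication=3)

# Knock 2,000 nodes (20%) offline at random.
offline = set(random.Random(1).sample(nodes, 2_000))
alive = surviving_tasks(assignment, offline)
print(f"{len(alive)} of {len(tasks)} tasks still have an online replica")
```

Raising the replication factor drives the loss rate down geometrically, at the cost of more duplicated work per task.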

Human-Centric Feedback: Refining the Intelligence

Beyond raw processing, the true innovation lies in the integration of Reinforcement Learning from Human Feedback (RLHF) directly into the blockchain layer. Because these networks are community-driven, they possess an inherent advantage in data tagging and model validation. Users do not just provide electricity; they provide the human intuition necessary to refine AI outputs. This creates a closed-loop system where the community that builds the infrastructure also ensures the quality and ethical alignment of the models being trained upon it.
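
The article does not specify how community feedback is recorded or used. A common RLHF pattern is to have reviewers choose the better of two model responses, aggregate those votes into preference labels, and train a reward model with a pairwise (Bradley-Terry style) loss. The sketch below assumes that pattern. The vote format and the names `aggregate_preferences` and `pairwise_loss` are hypothetical.

```python
import math
from collections import Counter


def aggregate_preferences(votes):
    """Collapse community votes into one label per comparison by majority.

    `votes` maps a comparison id to a list of "A"/"B" choices from
    different reviewers. Ties are dropped rather than guessed.
    """
    labels = {}
    for comparison, choices in votes.items():
        counts = Counter(choices)
        if counts["A"] != counts["B"]:
            labels[comparison] = "A" if counts["A"] > counts["B"] else "B"
    return labels


def pairwise_loss(reward_chosen, reward_rejected):
    """Reward-model loss -log(sigmoid(r_chosen - r_rejected))."""
    margin = reward_chosen - reward_rejected
    # Numerically stable form of log(1 + exp(-margin)).
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


votes = {
    "prompt-1": ["A", "A", "B"],
    "prompt-2": ["B", "B", "B", "A"],
    "prompt-3": ["A", "B"],  # tie: excluded
}
labels = aggregate_preferences(votes)
print(labels)  # {'prompt-1': 'A', 'prompt-2': 'B'}
print(pairwise_loss(2.0, 0.5))  # small when the reward model agrees with the label
```

The loss shrinks as the reward model scores the community-preferred response higher, which is how human judgments steer the model.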

Latest Developments: Decentralized High-Performance Computing

Recent proof-of-concept trials have challenged the skepticism about the efficiency of decentralized hardware. Critics once argued that latency would prevent distributed nodes from handling complex tasks. New protocols, however, have shown that localized AI workloads can be processed fast enough to be practical. This “democratized supercomputing” is no longer a purely theoretical pursuit but a functioning reality. Using sharding, the network breaks large datasets into manageable chunks that can be processed in parallel across thousands of geographically diverse locations.
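
The shard-and-combine pattern can be sketched in a map-reduce style: split the data, let each worker compute a partial result, then merge the partials. In this sketch, threads stand in for remote nodes, and the helper names are hypothetical. Because of Python’s GIL, the threads illustrate the dataflow rather than a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def make_shards(dataset, shard_size):
    """Split a dataset into consecutive chunks of at most `shard_size` items."""
    return [dataset[i:i + shard_size] for i in range(0, len(dataset), shard_size)]


def process_shard(shard):
    """Stand-in for a node's work: a partial sum and count for its chunk."""
    return sum(shard), len(shard)


def sharded_mean(dataset, shard_size, workers=8):
    shards = make_shards(dataset, shard_size)
    # Each thread plays the role of an independent node. A real network
    # would ship each shard over the wire and collect the partial results.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(process_shard, shards))
    total = sum(s for s, _ in partials)
    count = sum(n for _, n in partials)
    return total / count


data = list(range(1, 100_001))
print(sharded_mean(data, shard_size=10_000))  # 50000.5
```

Each shard returns a (sum, count) pair rather than its own mean. Partial results must be combinable, and averaging per-shard means would be wrong whenever shards differ in size.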

Furthermore, the emergence of hybrid models has bridged the gap between blockchain security and the heavy-duty requirements of neural network training. These models use the blockchain as a transparent ledger to verify that the work was performed correctly, preventing malicious actors from submitting “faked” results. This verification layer is crucial for maintaining the integrity of AI models, especially as they become more integrated into critical infrastructure and decision-making processes.
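
The article does not describe the verification protocol itself. One widely used approach in volunteer computing is redundant execution: send the same task to several nodes, compare digests of their results, and accept a result only when a quorum agrees. The sketch below assumes that approach. It uses a simple hash-linked list as a stand-in for the ledger; this has no consensus and is not a blockchain. All names are hypothetical.

```python
import hashlib
import json
from collections import Counter


def digest(obj):
    """Canonical SHA-256 digest of a JSON-serializable object."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def verify_by_quorum(submissions, quorum):
    """Accept a result only if at least `quorum` nodes report the same digest.

    `submissions` maps node id -> result. Returns (accepted_digest, dissenters);
    accepted_digest is None if no digest reaches the quorum.
    """
    if not submissions:
        return None, []
    digests = {node: digest(result) for node, result in submissions.items()}
    top_digest, votes = Counter(digests.values()).most_common(1)[0]
    if votes < quorum:
        return None, []
    dissenters = sorted(node for node, d in digests.items() if d != top_digest)
    return top_digest, dissenters


def append_to_ledger(ledger, task_id, accepted_digest):
    """Append a hash-linked entry so tampering with earlier entries is detectable."""
    prev_hash = ledger[-1]["entry_hash"] if ledger else "0" * 64
    entry = {"task": task_id, "result": accepted_digest, "prev": prev_hash}
    entry["entry_hash"] = digest(entry)  # covers task, result, and the prev link
    ledger.append(entry)
    return entry


submissions = {
    "node-a": {"step": 100, "loss": 0.42},
    "node-b": {"step": 100, "loss": 0.42},
    "node-c": {"step": 100, "loss": 0.01},  # a fabricated result
}
accepted, flagged = verify_by_quorum(submissions, quorum=2)

ledger = []
if accepted is not None:
    append_to_ledger(ledger, "task-17", accepted)
print(flagged)  # ['node-c']
```

Exact digest matching only works for deterministic tasks. Floating-point nondeterminism on GPUs is one reason neural-network workloads often need tolerance-based comparison or the cryptographic proofs discussed below.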

Real-World Applications: From Robotics to Global Startups

AI Model Training: The OpenMind Case Study

Pi Network’s partnership with the robotics startup OpenMind serves as a primary example of this technology in action. By using decentralized nodes, OpenMind was able to process large volumes of sensor data and train autonomous systems without the overhead costs of a proprietary data center. This collaboration showed that decentralized computing could handle the high-concurrency needs of modern robotics. The nodes managed the real-time data ingestion required for autonomous navigation, demonstrating that distributed systems can support physical-world AI applications.

Scalable AI Inference: Empowering the Underdog

For small-to-medium enterprises, the shift toward decentralized inference has been a game-changer. These businesses often struggle with the “cloud tax” imposed by major providers, which can eat into thin margins. By switching to a decentralized infrastructure, startups can run their AI applications at a fraction of the cost while gaining stronger data privacy. Because the data is processed across a fragmented network rather than on a single central server, no single machine holds a complete copy of it. This reduces the risk of one massive breach, provided each node only ever sees a fragment it cannot interpret on its own.
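
Fragmentation only protects data if the fragments themselves reveal nothing. Sending plain chunks to anonymous nodes still exposes those chunks. One established way to get the stronger property is additive secret sharing, swapped in here as an illustration; the article does not say the network uses it. Each value is split into random-looking shares, and linear computations such as sums can be performed directly on the shares. The modulus and all names below are assumptions for the sketch.

```python
import secrets

PRIME = 2**61 - 1  # field modulus; must exceed any total you need to recover


def share(value, n_nodes):
    """Split `value` into `n_nodes` additive shares modulo PRIME.

    Any n_nodes - 1 of the shares are uniformly random and reveal nothing
    about `value`; only the full set sums back to it.
    """
    shares = [secrets.randbelow(PRIME) for _ in range(n_nodes - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares):
    return sum(shares) % PRIME


# Three hypothetical customer records, each split across 3 nodes.
records = [120, 75, 300]
per_record_shares = [share(v, 3) for v in records]

# Each node sums only the shares it holds; it never sees a raw record.
node_totals = [sum(column) % PRIME for column in zip(*per_record_shares)]

print(reconstruct(node_totals))  # 495
```

Sums come almost for free under this scheme. Multiplications and nonlinear model layers require interactive protocols, which is a large part of why private decentralized inference remains costly.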

Challenges: Navigating the Technical Hurdles

Despite the rapid progress, the road to full decentralization is fraught with technical obstacles. Latency remains a primary concern: synchronizing thousands of nodes across different regions, network conditions, and connection speeds requires sophisticated orchestration. Sharding and edge computing help, but the overhead of managing a distributed system can sometimes erode the raw performance gains. In addition, verifying the work of anonymous participants without revealing the underlying data requires advanced cryptographic tools such as zero-knowledge proofs, which are still resource-intensive to implement at scale.
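
The orchestration overhead is easiest to see in a synchronous round, which finishes only when its slowest task does. A common mitigation, similar in spirit to the backup tasks described for MapReduce, is to send each task to more than one node and take whichever copy finishes first. The sketch below assumes that strategy. The latency figures are invented for illustration, and the helper names are hypothetical.

```python
def round_time(task_latencies):
    """Wall-clock time of one synchronous round.

    Each entry lists the latencies (seconds) of the replicas a task was sent to.
    A task finishes when its fastest replica does; the round finishes when the
    slowest task does.
    """
    return max(min(replicas) for replicas in task_latencies)


def replicas_dispatched(task_latencies):
    """How many node assignments the round consumed (the price of redundancy)."""
    return sum(len(replicas) for replicas in task_latencies)


# Illustrative latencies: one node (7.5 s) is a straggler.
single = [[0.8], [0.9], [7.5], [1.1]]
replicated = [[0.8, 1.0], [0.9, 1.2], [7.5, 1.3], [1.1, 0.7]]

print(round_time(single), replicas_dispatched(single))  # 7.5 4
print(round_time(replicated), replicas_dispatched(replicated))  # 1.3 8
```

Duplicating every task cuts the round from 7.5 s to 1.3 s here but doubles the node assignments. This is exactly the trade-off between raw performance and coordination overhead described above.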

Regulatory hurdles also loom on the horizon. As AI models become more powerful, governments are increasingly concerned with how and where these models are trained. A decentralized network, by its very nature, is difficult to regulate or geofence. This creates a tension between the desire for a free, open-access supercomputer and the need for safety protocols. Developers are currently working on governance frameworks that allow for decentralized oversight, but finding the balance between permissionless innovation and responsible deployment remains a work in progress.

The Future Outlook: The Rise of Global Intelligence

The transition of blockchain ecosystems into comprehensive AI service providers suggests a future where the distinction between “fintech” and “deep tech” disappears. We are moving toward a “Global Supercomputer” where computational resources are as fluid and accessible as electricity. This evolution will likely lead to breakthroughs in hardware optimization, where consumer devices are specifically designed to contribute to these networks more efficiently. As the hardware becomes more specialized, the gap between consumer machines and enterprise-grade servers will continue to narrow.

Moreover, the democratization of these resources will likely spark a new wave of localized AI development. Instead of “one-size-fits-all” models trained on general data, we will see a proliferation of niche models tailored to specific languages, cultures, and industries. This move away from a monolithic AI landscape toward a diverse, decentralized ecosystem will redefine the competitive dynamics of the entire technology sector, forcing legacy providers to rethink their centralized business models.

Final Assessment: A Decisive Shift in Computing

The successful transition from security-only computation to a proof-of-utility model has validated the potential of decentralized networks to serve as the backbone of the next industrial revolution. Technical hurdles around latency and data verification still exist, but the foundational proof of concept has been established. The ability to harness hundreds of thousands of individual nodes for high-performance computing at scale represents a significant victory for the democratization of technology. Moving forward, the industry must focus on refining the cryptographic tools used for work verification and on establishing clearer ethical standards for distributed training. This technology is moving beyond the experimental phase and emerging as a viable, scalable alternative to the centralized status quo.