The realization that an AI model can uncover serious flaws in a complex browser engine for roughly the price of a mid-range laptop is reshaping the global cybersecurity sector. Vulnerability management is in the middle of a major transition as Large Language Models (LLMs) move from simple chat interfaces to capable security agents. Historically, deep code analysis was the exclusive domain of human researchers who spent years honing their intuition for finding flaws. Autonomous scanners now offer a level of efficiency that traditional manual methods struggle to match.

Key market participants, including Anthropic and Mozilla, have demonstrated that specialized AI agents can help secure widely used software. Tools that work at machine speed are now challenging the historical reliance on human expertise. These agents are no longer just assistants; they are becoming frontline guardians of the software supply chain. As the technology matures, every sector must prepare for a shift in how digital assets are protected and maintained.

The Paradigm Shift: Automated Intelligence in Modern Cybersecurity

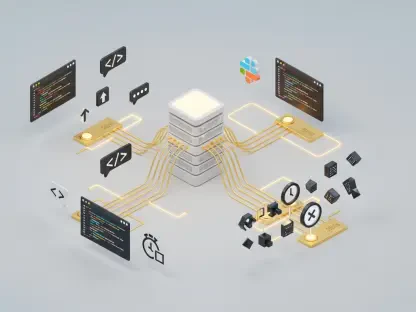

The current landscape of vulnerability management is defined by the rapid integration of LLMs into software security workflows. Automated scanners have existed for decades, but the new generation of AI brings a level of context-aware analysis that earlier tools could not provide. This shift is reducing the time required to audit massive codebases from months to hours. Consequently, organizations are relying less on manual, human-led reviews as they seek the speed and scale of autonomous analysis.

Specialized AI agents now play a critical role in protecting digital infrastructure by identifying patterns that escape conventional testing. Anthropic, for example, has shown that these models can navigate complex codebases to find deep-seated flaws. The future of security, then, is not just about automation but about applying sophisticated reasoning to the most sensitive parts of the software stack.

Technological Evolution and the Economic Impact of AI Audits

Breakthrough Trends: From Manual Fuzzing to Autonomous Reasoning

The transition from traditional automated testing to autonomous reasoning marks a significant milestone in software engineering. Traditional fuzzing feeds a program large volumes of semi-random inputs and watches for crashes; modern AI, by contrast, can follow the logic within codebases such as JavaScript engines to target specific weaknesses. This allows it to discover zero-day vulnerabilities that had escaped even experienced security researchers. The trend is accelerating as models become better at interpreting high-level architectural intent alongside low-level code.
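To make the contrast concrete, here is a minimal sketch of mutation-based fuzzing in Python. The parser `parse_record`, its magic byte, and its planted length bug are hypothetical, invented purely for illustration; production fuzzers (for example, coverage-guided ones) are considerably more sophisticated than this random byte-flipper.

```python
import random


def parse_record(data: bytes) -> int:
    """Hypothetical toy parser: byte 0 is a magic '{', byte 1 a declared payload length."""
    if len(data) < 2 or data[0] != 0x7B:
        return 0  # reject inputs without the magic byte
    length = data[1]
    payload = data[2:2 + length]
    # Planted bug (illustrative): the declared length is trusted without a bounds check.
    if length > 0 and len(payload) < length:
        raise IndexError("declared length exceeds buffer")
    return len(payload)


def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Overwrite 1 to 3 randomly chosen bytes with random values."""
    buf = bytearray(seed)
    for _ in range(rng.randint(1, 3)):
        buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)


def fuzz(seed: bytes, iterations: int, rng_seed: int = 0) -> list[bytes]:
    """Feed semi-random mutations of a valid seed to the parser and collect crashing inputs."""
    rng = random.Random(rng_seed)
    crashes = []
    for _ in range(iterations):
        candidate = mutate(seed, rng)
        try:
            parse_record(candidate)
        except IndexError:
            crashes.append(candidate)
    return crashes


if __name__ == "__main__":
    found = fuzz(b"{\x03abc", iterations=2000)
    print(f"{len(found)} crashing inputs found")
```

The fuzzer finds this bug only because a single random byte change is enough to trigger it. A flaw guarded by a checksum or a multi-step state machine would rarely be reached this way, which is the gap that reasoning-based analysis aims to close.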

To keep pace with these advancements, companies are shifting toward continuous, autonomous security monitoring. Because such tools are increasingly accessible, even smaller organizations can defend themselves against sophisticated threats without a large internal team. The ability of machines to reason through code paths shortens the window of opportunity for attackers. Moreover, integrating these tools into the development pipeline makes security a continuous process rather than an afterthought.

Market Projections: The Cost-Efficiency Gap Between Silicon and Human Capital

For most IT departments, the cost gap between AI tooling and human specialists is becoming impossible to ignore. An AI-led audit costing about $4,000 can now produce results that once required a team of specialists earning six-figure salaries. As a result, corporate budgets are being reshaped to prioritize scalable software over a larger security headcount. This financial reality is turning adoption from an experiment into an operational necessity.

Growth projections for the AI-driven security market indicate a steady climb from 2026 to 2028, alongside an anticipated decline in spending on manual quality assurance. Forecasts suggest that a growing share of software patches will trace back to machine-led discoveries. As the cost per vulnerability found keeps dropping, the industry must reevaluate how it measures value in the technical workforce. The shift is especially visible in the bug bounty sector, where human researchers now compete with tireless automated agents.

Navigating the Technical and Ethical Hurdles of AI Supremacy

Despite the speed of these tools, the false positive dilemma remains a significant barrier to total automation. Human verification is still necessary to prevent developers from wasting resources on non-existent flaws or minor logic glitches. Without this oversight, the volume of data generated by AI could overwhelm the very teams it was designed to support. The focus must remain on the quality of the findings rather than just the quantity to maintain an efficient development lifecycle.

Adversarial AI presents another daunting challenge, as bad actors use the same autonomous tools to exploit vulnerabilities before they can be patched. The result is a high-stakes arms race in which defenders must find and fix flaws faster than attackers can find and exploit them. Furthermore, the black-box nature of many AI findings makes it difficult for developers to understand the root cause of an identified flaw. Overcoming this lack of transparency is essential if developers are to learn from these mistakes and avoid repeating them in future projects.

The Regulatory Framework and Security Standards in the Machine Era

Compliance frameworks such as SOC 2 and ISO 27001 are evolving to incorporate AI-driven security validation as a standard practice. These standards must now account for how machine-generated audits are verified and documented for legal and insurance purposes. As autonomous agents become more common, regulation must adapt so that safety does not come at the cost of transparency. This transition requires a global consensus on what constitutes a valid machine-led audit.

Ethics regulations are also beginning to impact the deployment of bug-hunting agents to ensure they are used responsibly. Accountability is a primary concern, especially when determining legal liability if an AI fails to identify a critical vulnerability or causes unexpected system instability during a scan. Establishing clear boundaries for autonomous action is essential for maintaining trust in the digital infrastructure that powers the modern economy. Regulatory bodies are focusing on ensuring that human oversight remains a mandatory component of high-stakes security decisions.

The Future of Work: Hybrid Workflows and the Evolution of the Security Professional

The AI security orchestrator is emerging as a prominent senior career path in the field. This role focuses on managing, directing, and verifying the output of autonomous systems rather than performing manual code reviews. Future security workflows will likely combine human strategic thinking with machine-led execution. This shift frees professionals to focus on high-level architecture and on complex social engineering threats that AI still struggles to identify.

Self-healing codebases represent the next frontier, where vulnerabilities are found and patched without any human intervention. This shifts the focus of the security professional from tactical bug hunting to high-level system architecture and strategic risk management. Professionals who embrace these tools will find themselves at the forefront of a more resilient digital ecosystem. The transition toward these hybrid models is expected to increase overall job satisfaction by removing the most repetitive aspects of security work.

Concluding Synthesis: Collaboration Over Replacement

The Firefox case offers a useful blueprint for how modern security audits can be conducted and validated. It shows that while AI handles the heavy lifting of discovery, human expertise remains vital for implementing fixes and making final judgments. IT professionals who pivot toward management and verification roles can extend their career longevity. This division of labor lets organizations address vulnerabilities with a speed and precision that manual efforts alone could not achieve.

The long-term outlook for the cybersecurity industry points toward faster and cheaper security work. Human-AI collaboration fosters a landscape where security is proactive rather than reactive, making the digital world more secure through the complementary strengths of both. Actionable next steps include developing more transparent AI models and standardizing machine-led auditing protocols to ensure long-term stability and trust.