The rapid evolution of web technologies has fundamentally transformed the browser from a simple document viewer into a high-performance execution environment for distributed software. In this modern landscape, a developer might spend weeks optimizing a React component’s render cycle, only to find that the end-user experience remains sluggish due to a misconfigured distribution setting or an overlooked serverless execution delay. This disconnect highlights a critical reality: the front end is no longer an isolated entity but rather the visible manifestation of a complex cloud infrastructure. Within the Amazon Web Services ecosystem, the performance and reliability of an interface are frequently dictated by layers that exist far beyond the local source code. By acknowledging that client-side logic is deeply intertwined with content delivery networks, serverless compute cycles, and distributed database consistency, engineers can move beyond reactive debugging. This holistic perspective allows for the creation of applications that are not only aesthetically pleasing but also technically resilient against the inherent volatility of the modern cloud.

The Role of Global Content Delivery

Amazon CloudFront is widely used to reduce latency by caching static assets at edge locations close to the user. While this drastically improves load times for global audiences, it also introduces a logistical challenge that can be called the deployment paradox. An engineer might push a critical bug fix to an S3 bucket, yet users continue to report the original issue because the CDN keeps serving the cached copy of the previous file until its time-to-live expires or the object is invalidated. This behavior is not a failure of the deployment pipeline; it is the infrastructure working as intended, prioritizing speed over immediate consistency. Developers who do not account for this mechanism often waste hours investigating why a deployed change is not appearing in production, leading to frustration and delayed release cycles.

To navigate these delivery challenges effectively, front-end engineers must take an active role in managing cache policies and asset versioning strategies. Automated cache busting through content-addressed filenames, where each build embeds a hash of the file’s contents in its name, ensures that the CDN treats a changed asset as a distinct object rather than an update to an existing one. The entry document that references those assets, typically index.html, keeps a stable name, so it needs a short lifetime or revalidation on every request; otherwise users keep loading a cached page that points at the old filenames. A working understanding of HTTP headers such as Cache-Control and ETag allows developers to control how long specific resources stay at the edge before being revalidated. With these infrastructure-level considerations built into the development workflow, teams can be confident that a release reaches users promptly instead of waiting on stale caches. This level of technical oversight transforms the network from an unpredictable variable into a reliable tool for high-speed delivery.
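
The naming and header strategy described above can be sketched roughly as follows. This is a minimal illustration, not a build tool: the function names (`contentHash`, `hashedAssetName`, `cacheControlFor`) are hypothetical, the eight-character hash format is an assumption, and the simple djb2-style hash is used only to keep the example dependency-free. In practice the bundler's own content hashing would normally do this job.

```typescript
// Hypothetical helpers illustrating content-addressed filenames and a
// matching Cache-Control policy. None of these names come from a real library.

// djb2-style (XOR variant) non-cryptographic hash, rendered as 8 hex characters.
// Assumption: good enough for a sketch; real builds use the bundler's hashing.
function contentHash(content: string): string {
  let hash = 5381;
  for (let i = 0; i < content.length; i++) {
    // Multiply by 33, mix in the next UTF-16 code unit, keep 32 unsigned bits.
    hash = ((hash * 33) ^ content.charCodeAt(i)) >>> 0;
  }
  return ("00000000" + hash.toString(16)).slice(-8);
}

// "app.js" + file contents -> "app.<hash>.js"
function hashedAssetName(fileName: string, content: string): string {
  const dot = fileName.lastIndexOf(".");
  const stem = dot > 0 ? fileName.slice(0, dot) : fileName;
  const ext = dot > 0 ? fileName.slice(dot) : "";
  return `${stem}.${contentHash(content)}${ext}`;
}

// Fingerprinted files never change under the same name, so they can be cached
// for a long time. Everything else (e.g. index.html) is revalidated on each use.
function cacheControlFor(path: string): string {
  const isFingerprinted = /\.[0-9a-f]{8}\.[a-z0-9]+$/i.test(path);
  return isFingerprinted
    ? "public, max-age=31536000, immutable"
    : "no-cache";
}
```

A deploy script could call `cacheControlFor` for each uploaded object and set the result as that object's Cache-Control metadata, so that the stable entry document is always revalidated while hashed assets stay at the edge indefinitely.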

Serverless Compute and Execution Latency

The transition toward serverless architecture, specifically using AWS Lambda to power application programming interfaces, offers automatic scaling and pay-per-use pricing for modern web applications. However, this architectural choice introduces the “cold start” phenomenon, where a function that has been idle, or that must scale out to a new instance, first has to initialize its execution environment. Depending on the runtime, the package size, and the initialization code, this can add anywhere from a fraction of a second to several seconds to a request. To a front-end developer unaware of this infrastructure behavior, a sudden delay in data fetching might be incorrectly attributed to inefficient database queries or excessive JavaScript bundle sizes. Without this context, optimization efforts are often misdirected toward refactoring client-side state management when the actual bottleneck resides in the cloud provider’s resource allocation logic. This lack of alignment between the UI and the compute layer can lead to perceived performance issues that diminish user trust.

Recognizing the reality of serverless execution allows engineers to build more empathetic and transparent user interfaces that manage these technical constraints gracefully. Instead of utilizing a generic loading indicator that might cause the user to suspect a system crash, the UI can be designed with skeleton screens or progressive disclosure patterns that acknowledge the longer initial wait time. Moreover, developers can implement “warming” strategies or use provisioned concurrency for critical paths to ensure that the most important features are always responsive. By aligning the front-end feedback loop with the specific characteristics of the serverless environment, developers create a more stable experience. This proactive approach ensures that the application remains functional and informative even during the standard architectural warm-ups that define cloud-native systems.
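
One way to express this staged feedback is to derive the loading state from how long a request has been pending. The sketch below is only an illustration: the phase names, the threshold values, and the message text are assumptions chosen for the example and would need tuning against real latency measurements.

```typescript
// Hypothetical loading-state model for requests that may hit a cold start.

type LoadingPhase = "silent" | "skeleton" | "slow-notice";

interface PhaseThresholds {
  skeletonAfterMs: number;   // before this, show nothing to avoid flicker
  slowNoticeAfterMs: number; // after this, tell the user the wait is expected
}

// Assumed defaults; not derived from any AWS guidance.
const DEFAULT_THRESHOLDS: PhaseThresholds = {
  skeletonAfterMs: 150,
  slowNoticeAfterMs: 2000,
};

// Maps elapsed time since the request started to a UI phase.
function loadingPhase(
  elapsedMs: number,
  thresholds: PhaseThresholds = DEFAULT_THRESHOLDS
): LoadingPhase {
  if (elapsedMs < thresholds.skeletonAfterMs) return "silent";
  if (elapsedMs < thresholds.slowNoticeAfterMs) return "skeleton";
  return "slow-notice";
}

// Example copy for each phase; an empty string means no text is shown.
function loadingMessage(phase: LoadingPhase): string {
  switch (phase) {
    case "silent":
      return "";
    case "skeleton":
      return "Loading…";
    case "slow-notice":
      return "Still working. The first request after a quiet period can take a little longer.";
  }
}
```

A component could re-evaluate `loadingPhase(Date.now() - startedAt)` on a short timer while the request is pending and render a skeleton screen or the notice accordingly.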

Navigating Distributed Inconsistency

Modern applications are rarely hosted on a single server; instead, they are distributed across multiple AWS Availability Zones and sit behind Elastic Load Balancers to ensure high availability. This distributed nature produces intermittent errors, often loosely labeled “Heisenbugs,” that appear for some users while remaining completely invisible to others depending on which node receives their request. For instance, during a rolling deployment, a user might hit a server running the new version of an API while their subsequent request hits a node that has not yet been updated. Front-end engineers who treat the back end as a monolithic, perfectly consistent entity will find these issues nearly impossible to replicate in a local environment. These discrepancies are frequently the result of version skew between nodes or of eventual consistency models rather than flaws in the application logic itself.

To address the volatility inherent in distributed systems, the modern developer must prioritize building resilient interfaces that employ intelligent retries and graceful degradation. Implementing exponential backoff within the client-side data fetching layer, ideally with random jitter and limited to requests that are safe to repeat, allows the application to recover from transient failures without requiring user intervention. Furthermore, adopting optimistic UI updates, where the interface reflects a successful action before the server confirms it, can mask minor delays in data synchronization, provided the change is rolled back if the server ultimately rejects it. By designing with the assumption that the underlying infrastructure is a shifting sea of services, developers create a more robust product that maintains its integrity under diverse conditions. This shift in mindset moves the focus from chasing non-existent logic errors to building a front end that anticipates and absorbs the tremors of a global cloud environment.
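
A retry helper with exponential backoff might look roughly like the sketch below. It uses the "full jitter" variant, in which each delay is a random value between zero and an exponentially growing ceiling; that choice, the option names, and the helper names (`backoffDelayMs`, `retryWithBackoff`) are assumptions made for illustration rather than a prescribed implementation.

```typescript
// Hypothetical retry helper using exponential backoff with full jitter.

interface BackoffOptions {
  baseMs: number;      // ceiling for the first retry delay
  capMs: number;       // upper bound on any single delay
  maxAttempts: number; // total tries, including the first; must be >= 1
}

// Delay before the retry that follows failed attempt number `attempt` (0-based).
// `random` is injectable so the schedule can be tested deterministically.
function backoffDelayMs(
  attempt: number,
  opts: BackoffOptions,
  random: () => number = Math.random
): number {
  const ceiling = Math.min(opts.capMs, opts.baseMs * Math.pow(2, attempt));
  return Math.floor(random() * ceiling);
}

// Runs `operation` until it succeeds or the attempts are exhausted.
// Only use this for requests that are safe to repeat (idempotent).
async function retryWithBackoff<T>(
  operation: () => Promise<T>,
  opts: BackoffOptions,
  sleep: (ms: number) => Promise<void> = (ms) =>
    new Promise<void>((resolve) => setTimeout(resolve, ms))
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 0; attempt < opts.maxAttempts; attempt++) {
    try {
      return await operation();
    } catch (error) {
      lastError = error;
      // Wait before the next try, but not after the final failure.
      if (attempt < opts.maxAttempts - 1) {
        await sleep(backoffDelayMs(attempt, opts));
      }
    }
  }
  throw lastError;
}
```

A data-fetching layer could wrap a read request as `retryWithBackoff(() => loadProfile(), { baseMs: 200, capMs: 5000, maxAttempts: 4 })`, where `loadProfile` stands in for any hypothetical function that returns a promise and rejects on failure.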

The Shift Toward Full-Stack Awareness

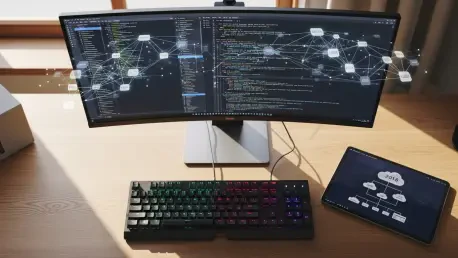

The industry is currently witnessing a significant shift where the traditional “endpoint contract,” which limited the front-end developer’s responsibility to sending a request and receiving JSON, is no longer sufficient. Senior engineers now recognize that the cloud is not merely a distant hosting platform but the very environment that defines the operational constraints of the software. This has led to the emergence of “infrastructure-aware” front-end engineering, where the goal is to observe the entire system rather than just debugging a local codebase. The current trend emphasizes the importance of understanding how API Gateways, routing rules, and authentication layers influence the final delivery of the user interface. This systemic view enables developers to identify the root cause of performance regressions that might otherwise remain hidden within the layers of the delivery pipeline.

This evolution does not suggest that front-end specialists must suddenly become DevOps experts or spend their days configuring Virtual Private Clouds. Instead, it advocates for an expanded mental model where infrastructure knowledge is viewed as a foundational part of the developer’s toolkit. By understanding the path that data takes from an S3 bucket or a Lambda function to the user’s screen, an engineer can make more informed decisions about state management, caching, and error handling. This cross-disciplinary expertise serves as a major differentiator in the professional landscape, separating those who simply follow design specs from those who build production-grade systems. Ultimately, the integration of cloud fundamentals into the development process ensures that the front end is not just a facade, but a highly optimized layer of a cohesive, distributed machine.

Future Considerations and Strategic Implementation

AWS infrastructure knowledge was once viewed as an optional skill for front-end engineers, but it is increasingly a practical requirement for building scalable web systems. Moving forward, the most effective teams will be those that break down the silos between infrastructure and interface, fostering a culture of shared responsibility for the end-to-end performance of the application. Engineers should begin by auditing their existing deployment pipelines to ensure that cache-busting strategies are robust and that serverless cold starts are accounted for in the UI’s loading states. Furthermore, adopting observability tools that track requests through the entire stack allows for faster identification of bottlenecks, whether they reside in the browser or at the network edge. This data-driven approach removes much of the guesswork from performance optimization and provides a clear roadmap for architectural improvements.

In conclusion, the modern front-end landscape has been significantly reshaped by the complexities of the cloud, necessitating a transition from purely code-centric development to a more comprehensive systems-thinking approach. The “buggy” behaviors that often plague production environments frequently turn out to be the predictable outcomes of AWS infrastructure choices, such as CDN caching or serverless initialization. By mastering the mechanics of these invisible layers, developers can move beyond the limitations of the browser to create applications that are both faster and more reliable. This strategic alignment between the client-side logic and the hosting environment makes the user experience resilient against the fluctuations of distributed systems. The ultimate takeaway is that the more an engineer understands the pipes through which their code flows, the better that code performs when it finally reaches the end user.