The foundational architecture of the Linux kernel is currently undergoing its most transformative structural reorganization since its inception three decades ago, shifting from a reliance on manual memory oversight toward automated, compiler-enforced safety. For years, the kernel remained a monolith of high-performance code written almost exclusively in the C programming language, a language prized for its proximity to hardware but notorious for its unforgiving nature regarding memory management. Today, the landscape is pivoting as the global developer community acknowledges that the sheer complexity of modern workloads requires more than just human vigilance. This shift signifies a departure from the traditional mindset that prioritized execution speed above all else, marking instead the beginning of an era where the structural integrity of the system is the primary benchmark of operational success.

The ongoing transformation is a response to the inherent fragility of legacy systems that struggle to keep pace with the hyper-scale demands of the current digital environment. By integrating a language that enforces safety at the source, Linux is effectively neutralizing the most common vectors for system failure and security breaches. This is not a superficial update but a core re-engineering project that seeks to bridge the gap between low-level performance and high-level safety. As the industry moves further away from the acceptance of preventable system crashes, the adoption of Rust stands as a strategic pivot toward a more resilient and predictable computing foundation that can support the next generation of technological innovation.

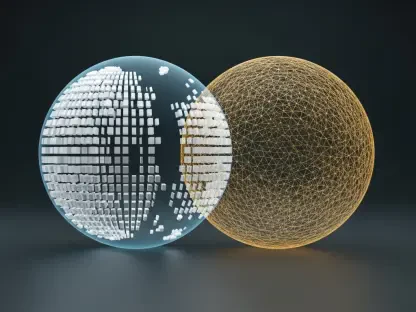

The Paradigm Shift: From Raw Performance to Structural Integrity

The history of operating system development has long been a tale of trading safety for speed. In the early days of the Linux kernel, the priority was to extract every possible ounce of performance from the limited hardware available at the time. C was the perfect tool for this task because it allowed developers to manage hardware resources directly with minimal abstraction. However, this power came with a significant cost: the system provided no safety net. If a developer made a mistake in memory allocation, the entire system could fail or become vulnerable to external exploitation. This trust-based model, while efficient in a simpler era, has become a liability in a world where a single kernel panic can disrupt global infrastructure.

Moving forward, the industry is redefining what it means for a kernel to be “performant.” In the current market, performance is increasingly synonymous with availability and uptime. A system that runs five percent faster but requires frequent reboots or security patches is no longer considered superior to one that is slightly more resource-intensive but demonstrably more stable. The integration of Rust into the kernel architecture addresses this by automating much of the validation that was once the sole responsibility of the programmer. This evolution lets the kernel keep its high-speed execution while the compiler rules out whole classes of memory errors, such as use-after-free bugs and data races, within safe code. Code explicitly marked unsafe still relies on the programmer to uphold those guarantees.

The Rise of Memory Safety and Proactive Error Prevention

Transitioning from Reactive Patching: The Move to Compiler-Enforced Validation

One of the most significant shifts in the Linux ecosystem is the transition from a reactive security posture to one defined by security by design. For decades, the primary method for improving kernel stability was a cycle of deployment, failure, and patching. When a vulnerability like a buffer overflow was discovered, developers would rush to release a fix, often after the exploit had already been used in the wild. This “smoke alarm” approach served the community for a time, but the increasing sophistication of cyberattacks has made it inadequate on its own. Rust changes this dynamic by acting as a “fireproof wall” through its compiler, which checks the memory safety of all code outside explicitly marked unsafe blocks before that code is ever executed.
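To make the buffer overflow case concrete, here is a minimal sketch in ordinary userspace Rust, not kernel code. The function name `read_at` and the sample buffer are hypothetical and chosen only for illustration. Note that slice bounds are checked at run time rather than at compile time: an out-of-range access in safe Rust yields `None` (or a controlled panic when plain indexing is used) instead of silently reading adjacent memory.

```rust
/// Returns the byte at `index`, or `None` when the index is out of range.
/// (Hypothetical helper for illustration; not part of any kernel API.)
fn read_at(buf: &[u8], index: usize) -> Option<u8> {
    // `get` performs the bounds check and never reads past the slice.
    buf.get(index).copied()
}

fn main() {
    let buf = [10u8, 20, 30, 40];

    // In-range access returns the stored value.
    assert_eq!(read_at(&buf, 2), Some(30));

    // One past the end is rejected instead of reading neighboring memory.
    assert_eq!(read_at(&buf, 4), None);

    println!("in range: {:?}, out of range: {:?}", read_at(&buf, 2), read_at(&buf, 4));
}
```

The equivalent C code, indexing a four-byte array at position 4, would compile and run with undefined behavior, which is the class of defect the paragraph above describes.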

This shift toward compiler-enforced validation means that entire categories of bugs are being eliminated at the source. The Rust compiler uses an ownership and borrowing system that tracks which part of the program may read or modify each piece of data at any given time. If a developer attempts to write safe code that could lead to a data race or memory corruption, the compiler reports the error and refuses to build the software. This proactive prevention alters the development lifecycle, reducing the time spent on debugging and allowing engineers to focus on building new features. By moving the validation phase from the production environment to the development environment, the Linux kernel is becoming a much harder target for attackers and a more stable platform for enterprises.
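The ownership rules described above can be sketched with a small userspace example using only the Rust standard library. The function `parallel_count` is a hypothetical name introduced for illustration. Several threads increment a shared counter, and the compiler accepts the program only because the counter is wrapped in `Arc<Mutex<_>>`. Handing each thread a plain mutable reference to the same integer would be rejected at build time.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Spawns `workers` threads that each add 1 to a shared counter
/// `increments` times, then returns the final total.
/// (Hypothetical helper for illustration only.)
fn parallel_count(workers: usize, increments: usize) -> u64 {
    // Arc provides shared ownership across threads; Mutex serializes writes.
    let counter = Arc::new(Mutex::new(0u64));

    let handles: Vec<_> = (0..workers)
        .map(|_| {
            // Each thread owns its own handle to the same counter.
            let counter = Arc::clone(&counter);
            thread::spawn(move || {
                for _ in 0..increments {
                    *counter.lock().unwrap() += 1;
                }
            })
        })
        .collect();

    for handle in handles {
        handle.join().unwrap();
    }

    // Copy the value out so the lock guard is released before returning.
    let total = *counter.lock().unwrap();
    total
}

fn main() {
    // Every increment is accounted for because no two threads
    // can modify the counter at the same time.
    println!("total = {}", parallel_count(4, 1000));
}
```

Removing the `Mutex` and mutating the integer directly from each closure does not produce a subtle runtime bug. It produces a compile error, which is the behavior the paragraph above refers to.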

Measuring the Momentum: Rust Adoption Within the Kernel Ecosystem

The adoption of Rust within the Linux kernel is no longer a theoretical exercise but a measurable trend with significant momentum. Since the initial integration efforts began, the focus has been on high-risk areas where memory safety is most critical, particularly within device drivers and newly developed subsystems. Data from the current year indicates that a growing percentage of new driver submissions are being written in Rust, reflecting a deliberate strategy to phase out memory-unsafe code in the most volatile parts of the kernel. This targeted approach allows the community to gain the benefits of Rust without the logistical nightmare of a full rewrite of millions of lines of legacy C code.

Projections for the coming years suggest that this trajectory will only accelerate as the necessary toolchains and documentation continue to mature. The success of early Rust modules has provided a blueprint for other subsystems to follow, leading to a measurable decline in kernel panics related to memory mismanagement in the areas where Rust has been implemented. This trend is also being mirrored by a cultural shift among kernel maintainers, who are increasingly prioritizing memory-safe languages for new projects. As the ecosystem of Rust-based tools and libraries expands, the barrier to entry for new contributors is lowering, ensuring a steady influx of talent dedicated to maintaining the kernel’s new, hardened architecture.

Navigating the Technical Hurdles of a Dual-Language Architecture

Integrating a modern, memory-safe language into a massive codebase that has been built on C for over thirty years is a task of immense technical complexity. The primary challenge lies in the interoperability between the two languages, often managed through complex foreign function interfaces. These interfaces must allow Rust and C to communicate seamlessly without introducing any performance overhead or new security vulnerabilities. Developers must carefully map C data structures to Rust and ensure that the strict safety rules of Rust are not compromised when interacting with legacy code. This creates a hybrid environment that requires a high level of expertise in both paradigms, making the learning curve for veteran maintainers particularly steep.
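The mapping between C data structures and Rust can be illustrated with a small, self-contained sketch. Everything named here (`DeviceInfo`, `legacy_fill_info`, `query_device`, and the values written) is hypothetical and does not correspond to any real kernel interface. To keep the example runnable without a C compiler, the "legacy" routine is written in Rust but uses the C calling convention and a raw pointer, as a real C function would.

```rust
use std::os::raw::c_int;

/// `repr(C)` gives the struct the field order and layout a C compiler
/// would use, so both languages agree on where each field lives.
#[repr(C)]
#[derive(Debug, Clone, Copy, PartialEq)]
struct DeviceInfo {
    id: c_int,
    irq: c_int,
}

/// Stand-in for a legacy C routine: C ABI, raw pointer argument,
/// integer status code (0 on success, negative on failure).
unsafe extern "C" fn legacy_fill_info(out: *mut DeviceInfo) -> c_int {
    if out.is_null() {
        return -1;
    }
    // Hypothetical values, chosen only for the example.
    unsafe { out.write(DeviceInfo { id: 7, irq: 42 }) };
    0
}

/// Safe wrapper: callers never see the raw pointer or the status code.
fn query_device() -> Result<DeviceInfo, c_int> {
    let mut info = DeviceInfo { id: 0, irq: 0 };
    // SAFETY: `info` is a valid, properly aligned DeviceInfo that
    // outlives the call, and the callee writes at most one struct.
    let status = unsafe { legacy_fill_info(&mut info) };
    if status == 0 {
        Ok(info)
    } else {
        Err(status)
    }
}

fn main() {
    match query_device() {
        Ok(info) => println!("device id={} irq={}", info.id, info.irq),
        Err(code) => println!("query failed with status {}", code),
    }
}
```

The design point is that the `unsafe` block is confined to one small wrapper with a documented justification, while the rest of the Rust code works with an ordinary `Result` and keeps the compiler's safety checks intact.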

Beyond the code itself, there are significant logistical hurdles related to the build systems and infrastructure required to support two distinct languages. Maintaining a unified development environment that can handle both the C-based tools and the Rust compiler necessitates a substantial investment in continuous integration and testing. This dual-language approach also complicates the debugging process, as developers must be able to trace errors across language boundaries. Despite these challenges, the Linux community has shown a remarkable ability to adapt, implementing incremental updates and fostering a culture of continuous learning. The goal is to reach a state where the two languages coexist in a way that feels natural to the developer, providing the best of both worlds.

Hardening the Kernel Against Sophisticated Regulatory and Security Demands

The push for Rust integration is also being driven by a changing regulatory environment that is increasingly demanding higher standards for critical infrastructure. Security agencies, such as CISA and the NSA, have begun advocating for the use of memory-safe languages in software that underpins essential public and private services. For Linux, which powers everything from cloud servers to medical devices, meeting these standards is not just a technical goal but a necessity for long-term viability. By adopting Rust, the Linux community is demonstrating a commitment to global security mandates, positioning the kernel as a reliable foundation for the world’s most sensitive data and processes.

This architectural hardening is particularly important in the context of privilege escalation exploits, which often rely on memory vulnerabilities to gain unauthorized access to system resources. By securing the kernel at its most fundamental level, developers are making it significantly more difficult for attackers to find and exploit weaknesses. This proactive defense is a critical component of a modern security strategy, providing a level of resilience that cannot be achieved through external firewalls or monitoring tools alone. As organizations face more frequent and sophisticated threats, the demand for a naturally secure operating system continues to grow, further cementing Rust’s place in the future of Linux.

Engineering a Predictable and Invisible Computing Environment

The ultimate goal of redefining the Linux architecture is to create a computing environment that is so stable and responsive that it becomes essentially invisible to the user. Historically, users have had to tolerate a certain level of unpredictability in their operating systems, from sudden application crashes to mysterious system slowdowns caused by memory leaks. However, as Rust moves from the kernel into userland utilities and background services, these issues are becoming increasingly rare. The efficiency of the language allows for high-performance utilities that do not suffer from the bloat or instability often found in older software, leading to a much more “drama-free” user experience.

This shift toward predictability is particularly beneficial for enterprises that manage large-scale deployments where reliability is the most important factor. In these environments, the cost of a system failure can be measured in millions of dollars, making the stability offered by Rust a significant competitive advantage. As the platform becomes more resilient under heavy loads, users can expect a level of consistency that was previously out of reach. This evolution suggests that the future of Linux will be defined not by how fast it can execute a single task, but by how reliably it can manage thousands of tasks simultaneously without any degradation in performance or security.

Solidifying the Future of the Open-Source Foundation

The strategic transition to a memory-safe kernel architecture is laying the groundwork for a more secure and sustainable open-source future. By addressing the deep-seated vulnerabilities of the C language through the rigorous implementation of Rust, the Linux community is moving past the era of reactive patching and entering a period of proactive structural integrity. This transformation provides a stable platform for emerging technologies and helps ensure that the kernel remains the gold standard for critical infrastructure. The integration process, while technically demanding, is fostering a culture of safety-first engineering that attracts a diverse range of contributors and strengthens the ecosystem as a whole.

For developers and organizations looking to navigate this new landscape, the next steps involve a deeper commitment to mastering memory-safe paradigms and contributing to the growing library of Rust-based drivers and tools. Future efforts must focus on streamlining the interoperability between legacy code and modern modules, ensuring that the transition remains seamless and efficient. As the industry continues to evolve, the focus will likely shift toward expanding these safety principles into every corner of the operating system, from the lowest levels of hardware interaction to the highest levels of user application logic. The path forward is clear: by valuing stability and security as core metrics of performance, the Linux community is securing its foundation for the decades to come.