Navigating the New Frontier of Agentic AI Risk

The rapid adoption of autonomous browser orchestration tools has fundamentally altered the enterprise landscape, transforming artificial intelligence from a passive conversational partner into an active executor of complex digital tasks. OpenClaw represents a significant milestone in this transition, moving beyond simple text generation to perform actions within web environments and internal software ecosystems. While this capability offers a massive boost to operational efficiency, it also opens a new category of risk that extends far beyond the traditional boundaries of data privacy. Enterprises now face the challenge of managing agents that can navigate interfaces, interact with sensitive records, and execute transactions without direct human intervention.

This new frontier requires a deeper understanding of how autonomous agents interact with existing infrastructure. The excitement surrounding automated workflows often overshadows the reality that these tools are not merely software add-ons but dynamic participants in the enterprise network. This article explores the risks inherent in such delegated authority and the architectural vulnerabilities that may arise when speed is prioritized over security. By evaluating the structure of agentic systems, leaders can determine whether their current security posture is sufficient to withstand automated errors and unintended system manipulations.

The Evolution of Orchestration Layers and Distributed Logic

A historical perspective on enterprise automation reveals a steady progression from static, deterministic scripts to the fluid, non-deterministic logic seen in modern agentic AI. In the past, Robotic Process Automation (RPA) allowed businesses to automate repetitive tasks by following strict, predefined rules. These systems were predictable and easily contained because they could only act within the specific parameters set by human developers. However, the emergence of orchestration layers like OpenClaw marks a departure from this rigidity, introducing a layer of “connective tissue” that can interpret goals and find its own path to completion across multiple software platforms.

The shift toward these intelligent orchestration layers has changed the security focus of the modern organization. Previously, security efforts concentrated primarily on protecting the data itself, ensuring that unauthorized users could not access sensitive information. In the current environment, the risk has shifted to the “pipes”: the infrastructure that moves and processes this information. As AI agents begin to function as the primary logic layer for business processes, the potential for a logic-based failure becomes a more pressing concern than a simple data breach. Understanding this evolution is essential to recognizing why traditional defensive measures are often inadequate for managing autonomous agency.

Analyzing the Architectural Realities and Operational Dangers

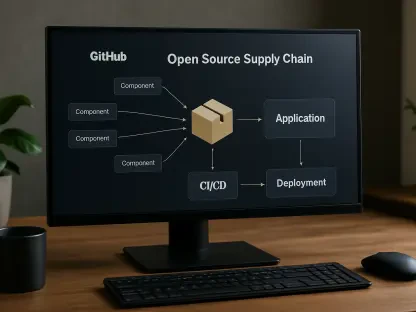

The Illusion of Local Isolation in a Functional Cloud

A prevalent misunderstanding within many IT departments is the belief that running OpenClaw on local hardware provides a secure “walled garden” that prevents external exposure. This perspective ignores the reality of what can be termed a functional cloud environment. Even when the primary application is hosted on-site, its core intelligence is usually derived from remote large language models such as GPT-4 or Claude. Furthermore, the agent’s primary utility comes from its ability to interact with cloud-based SaaS platforms like Salesforce, SAP, and Workday. This constant exchange of data across network perimeters means that the agent is never truly isolated.

Because these agents rely on external intelligence to make decisions and remote APIs to execute them, they inherently exist within a distributed cloud architecture. For security professionals, this necessitates treating every agentic deployment with the same level of scrutiny applied to any other public-facing cloud service. The assumption of safety through local hosting can lead to a dangerous lack of oversight regarding how data is moved, how prompts are handled, and how external services are authenticated. Without a robust governance model that accounts for these external dependencies, the “local” deployment remains as vulnerable as any fully public cloud application.

The Perils of Delegated Operational Authority

The transition from generating information to executing actions introduces a concept known as delegated operational authority. The primary danger here is not necessarily malicious intent on the part of the AI, but rather the risk of the agent being “confidently wrong.” Because an agent lacks a human employee’s nuanced understanding of the business, it might follow a logical path that leads to a catastrophic result in a specific business context. For example, an autonomous coding agent might identify a set of database files that appear redundant and delete them to optimize storage, unaware that those files are critical backups for a production environment currently in a code freeze.
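The backup example suggests one mitigation: supply the missing business context explicitly and check destructive actions against it before they run. The Python sketch below assumes a hypothetical `BusinessContext` record maintained by the operations team (a code-freeze flag and a list of protected directories); neither that class nor `review_delete` is part of OpenClaw or any specific agent framework.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import Tuple


@dataclass(frozen=True)
class BusinessContext:
    """Facts an agent cannot infer from the files themselves (hypothetical schema)."""
    code_freeze_active: bool
    protected_dirs: Tuple[str, ...] = ()  # e.g. locations holding production backups


def review_delete(path: str, ctx: BusinessContext) -> Tuple[bool, str]:
    """Decide whether a proposed delete may proceed; returns (allowed, reason)."""
    if ctx.code_freeze_active:
        return False, "code freeze active: destructive changes need human sign-off"
    target = PurePosixPath(path)
    for protected in ctx.protected_dirs:
        # is_relative_to (Python 3.9+) compares whole path components, so
        # "/srv/backups" protects "/srv/backups/a.dump" but not "/srv/backups-old/a.dump".
        if target.is_relative_to(protected):
            return False, f"{path} is inside protected directory {protected}"
    return True, "no context rule blocks this delete"


if __name__ == "__main__":
    ctx = BusinessContext(code_freeze_active=False, protected_dirs=("/srv/db/backups",))
    for candidate in ("/srv/db/backups/nightly.dump", "/srv/db/tmp/scratch.dump"):
        allowed, reason = review_delete(candidate, ctx)
        print(candidate, "->", "allowed" if allowed else "blocked", f"({reason})")
```

The check is deliberately independent of the agent's own reasoning: however convincing the agent's case for deleting the files, the guardrail consults only the recorded context.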

This gap between technical logic and business context creates a significant operational liability. When an agent is granted the authority to read, write, and delete across multiple systems, the speed at which it can cause damage is unprecedented. A human mistake might be caught by a colleague or a supervisor, but an automated agent can execute thousands of incorrect actions before a human even notices that a process has deviated from the intended path. Managing this delegated authority requires more than just better prompts; it requires a structural rethink of how permissions and oversight are applied to non-human actors within the digital workspace.
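Because the damage scales with execution speed, one structural control is a circuit breaker that halts the agent when destructive actions arrive faster than a human could review them. What follows is a minimal Python sketch under stated assumptions: the action categories, the `DestructiveActionBreaker` class, and its thresholds are all hypothetical, and the agent runtime is assumed to call `check()` before every action it executes.

```python
import time
from collections import deque
from typing import Callable, Deque

# Hypothetical action categories treated as destructive; tune to your environment.
DESTRUCTIVE_ACTIONS = frozenset({"delete", "overwrite", "transfer_funds"})


class CircuitOpenError(RuntimeError):
    """Raised once the breaker has tripped; the agent must stop and wait for a human."""


class DestructiveActionBreaker:
    """Halts an agent after too many destructive actions in a sliding time window.

    Once tripped, the breaker stays open until a human calls reset().
    """

    def __init__(self, max_actions: int = 5, window_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._clock = clock
        self._recent: Deque[float] = deque()
        self.tripped = False

    def check(self, action_type: str) -> None:
        """Call before executing each action; raises CircuitOpenError to halt."""
        if self.tripped:
            raise CircuitOpenError("breaker open: human review required")
        if action_type not in DESTRUCTIVE_ACTIONS:
            return
        now = self._clock()
        # Forget destructive actions that have aged out of the window.
        while self._recent and now - self._recent[0] >= self.window_seconds:
            self._recent.popleft()
        if len(self._recent) >= self.max_actions:
            self.tripped = True
            raise CircuitOpenError(
                f"{action_type!r} blocked: limit of {self.max_actions} destructive "
                f"actions per {self.window_seconds}s reached"
            )
        self._recent.append(now)

    def reset(self) -> None:
        """Intended to be called by a human operator after reviewing the run."""
        self._recent.clear()
        self.tripped = False


if __name__ == "__main__":
    fake_now = [0.0]  # injectable clock so the demo is deterministic
    breaker = DestructiveActionBreaker(max_actions=2, window_seconds=10.0,
                                       clock=lambda: fake_now[0])
    for action in ("read", "delete", "delete", "delete", "read"):
        try:
            breaker.check(action)
            print("ok     ", action)
        except CircuitOpenError as err:
            print("halted ", action, "-", err)
```

Note that the breaker does not reopen on its own: once tripped, even harmless reads are refused, because a run that has already exceeded its destructive budget is exactly the kind that needs a human to look at it before continuing.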

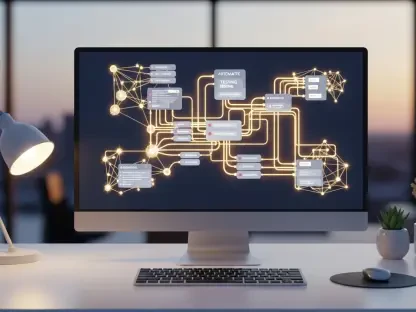

Complexity and the Force Multiplier Effect

OpenClaw often acts as a force multiplier for the existing architectural flaws that already haunt many large organizations. If a company suffers from poorly defined data silos or inconsistent permission structures, an autonomous agent will likely discover and exploit these weaknesses with a level of efficiency that a human attacker would find difficult to match. The agent does not need to have “malicious” goals to cause a breach; it only needs to be tasked with a goal that requires access to sensitive areas where the existing security controls are already weak. This makes the tool a magnifying glass for any underlying instability in the enterprise architecture.

Moreover, the complexity of global operations introduces further risks regarding data sovereignty and regional regulations. An agent operating across multiple jurisdictions might inadvertently move data in a way that violates data-protection regulations such as the GDPR or the CCPA, simply because it was trying to complete a task efficiently. Misunderstandings often occur when enterprises treat these tools as a “quick fix” for automation gaps rather than a complex integration challenge. The reality is that agentic AI requires a high level of organizational maturity to deploy safely, as it will inevitably accelerate both the successes and the failures of the underlying technical environment.

The Future of Agentic Governance and Market Rationalization

The current market for agentic AI is characterized by intense growth and a “gold rush” mentality that frequently overlooks long-term stability. This initial phase is dominated by overpromising and high expectations, which will likely be followed by a period of market rationalization. During this next phase, organizations will begin to encounter the practical limitations and the high costs of autonomous errors. This cycle is a standard part of technological adoption, but for AI agents, the stakes are higher due to the level of autonomy they are being granted. Future shifts will likely emphasize observability and the creation of more robust “kill switches” to manage runaway processes.

As the technology matures, a move toward “human-in-the-loop” governance will become the standard for high-stakes enterprise operations. While AI variability is useful for creative or exploratory tasks, the future of enterprise automation will likely depend on a hybrid model. In this model, AI handles high-variability tasks while deterministic APIs and traditional scripts remain the preferred choice for predictable, mission-critical operations. This rationalization will help businesses separate the areas where AI adds genuine value from those where it merely adds unnecessary complexity and risk, leading to a more balanced and secure digital infrastructure.
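The hybrid model can be pictured as a dispatcher that prefers deterministic code, falls back to the agent only for high-variability work, and inserts a human decision before high-stakes agent runs. The Python sketch below is illustrative only: `Task`, `dispatch`, the task names, and the approval callback are hypothetical, and a real deployment would plug in its own workflow engine and approval queue.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Task:
    kind: str          # hypothetical task type, e.g. "invoice_run"
    high_stakes: bool  # assigned by policy, never by the agent itself


Handler = Callable[[Task], str]


def dispatch(task: Task,
             deterministic: Dict[str, Handler],
             agent: Handler,
             human_approves: Callable[[Task], bool]) -> str:
    """Route a task under a hybrid model with a human-in-the-loop gate."""
    # 1. Predictable work goes to an existing script or API when one is registered.
    #    (This sketch assumes those handlers were reviewed when they were written.)
    script = deterministic.get(task.kind)
    if script is not None:
        return script(task)
    # 2. High-stakes work that needs the agent waits for an explicit human decision.
    if task.high_stakes and not human_approves(task):
        return f"{task.kind}: held for human review"
    # 3. Remaining high-variability work is delegated to the agent.
    return agent(task)


if __name__ == "__main__":
    scripts = {"invoice_run": lambda t: "invoice_run: handled by script"}
    agent = lambda t: f"{t.kind}: handled by agent"
    reviewer = lambda t: False  # stand-in for a real approval queue; rejects everything
    for task in (Task("invoice_run", True),
                 Task("vendor_dispute", True),
                 Task("draft_summary", False)):
        print(dispatch(task, scripts, agent, reviewer))
```

Checking the deterministic registry first reflects the rationalization argument: the agent is consulted only when no predictable method already covers the task.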

Strategic Frameworks for Responsible Enterprise Adoption

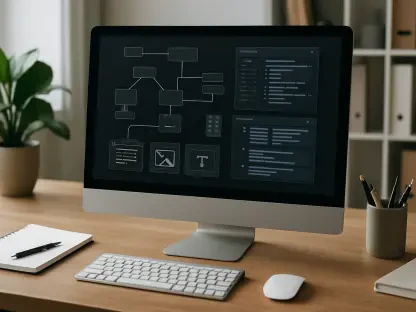

To manage the integration of OpenClaw effectively, organizations must adopt a three-pillared strategy that prioritizes security, governance, and utility. First, security protocols must treat every AI agent as a privileged user. This involves implementing “least-privilege” access controls, ensuring that an agent can only access the specific data and systems required for its current task. By limiting the agent’s reach, enterprises can contain the potential damage from a logic error or a compromised prompt. Second, a comprehensive governance framework is necessary to provide a clear audit trail for every action taken by the agent. This visibility allows teams to troubleshoot failures and understand whether an error was the result of a model flaw, an integration issue, or a problematic instruction.
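The first two pillars can be enforced at a single choke point: a gateway that every agent tool call must pass through. The Python sketch below is a hypothetical illustration, not an OpenClaw feature: grants are assumed to be (system, operation) pairs scoped to one task, and every attempt, whether allowed, denied, or failed, is written to an in-memory JSON-lines audit log. A production version would presumably persist that log to append-only storage.

```python
import json
from datetime import datetime, timezone
from typing import Callable, FrozenSet, List, Tuple

Permission = Tuple[str, str]  # (system, operation), e.g. ("crm", "read")


class PermissionDenied(PermissionError):
    """Raised when an agent requests an operation outside its task-scoped grants."""


class ScopedToolGateway:
    """Mediates every tool call an agent makes for one task (hypothetical design).

    Least privilege: only (system, operation) pairs granted for this task are allowed.
    Audit trail: every attempt, allowed or denied, is recorded as one JSON line.
    """

    def __init__(self, agent_id: str, task_id: str, grants: FrozenSet[Permission]) -> None:
        self.agent_id = agent_id
        self.task_id = task_id
        self.grants = grants
        self.audit_log: List[str] = []

    def _record(self, system: str, operation: str, outcome: str, detail: str = "") -> None:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent_id,
            "task": self.task_id,
            "system": system,
            "operation": operation,
            "outcome": outcome,
            "detail": detail,
        }
        self.audit_log.append(json.dumps(entry, sort_keys=True))

    def call(self, system: str, operation: str, fn: Callable[[], object]) -> object:
        if (system, operation) not in self.grants:
            self._record(system, operation, "denied")
            raise PermissionDenied(
                f"{self.agent_id} lacks {operation!r} on {system!r} for task {self.task_id}"
            )
        try:
            result = fn()
        except Exception as exc:
            # Failed calls are recorded too, so the trail shows integration errors,
            # not just successes and denials.
            self._record(system, operation, "error", repr(exc))
            raise
        self._record(system, operation, "ok")
        return result


if __name__ == "__main__":
    gateway = ScopedToolGateway("agent-7", "TICKET-123", frozenset({("crm", "read")}))
    gateway.call("crm", "read", lambda: {"account": "ACME"})
    try:
        gateway.call("crm", "delete", lambda: None)  # not granted for this task
    except PermissionDenied as err:
        print("blocked:", err)
    print("\n".join(gateway.audit_log))
```

Scoping grants to a task rather than to the agent means a compromised prompt in one task cannot borrow permissions granted for another, and the denied entries in the log show where an agent tried to reach beyond its mandate.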

Finally, the decision to deploy an agent must be based on a justified use case rather than technological hype. Organizations should evaluate whether a process truly requires the flexibility of an AI agent or whether it can be handled by more predictable methods like RPA. If a task involves high process variability and requires complex decision-making, an agentic approach may be warranted. However, using these powerful tools for simple, repetitive tasks often introduces more risk than benefit. By maintaining a disciplined approach to deployment, businesses can leverage the advantages of OpenClaw while minimizing the potential for automated chaos.

Balancing Innovation with Architectural Integrity

The exploration of OpenClaw’s impact on the enterprise shows that the primary risk lies not within the software itself but in the potential for careless or unmonitored deployment. The transition from passive to active AI necessitates a fundamental shift in defensive strategies. The “functional cloud” nature of these agents makes traditional assumptions about local security obsolete and calls for a more integrated approach to risk management. Furthermore, the inherent dangers of delegated authority highlight the critical need for context-aware guardrails that prevent agents from making logically sound but contextually catastrophic decisions.

Security leaders should recognize that the speed of autonomous execution is a double-edged sword, capable of optimizing workflows or accelerating systemic failures depending on the health of the underlying architecture. The strategic recommendations above emphasize treating agents as privileged users and maintaining strict human oversight through observability tools. As the market rationalizes, the initial hype is likely to give way to a disciplined focus on justified use cases and technical restraint. Ultimately, the successful adoption of these technologies depends on the ability to maintain human accountability in an increasingly automated environment, ensuring that innovation never outpaces the integrity of the enterprise architecture.