The distance between a viral demonstration of an autonomous agent and a stable, revenue-generating enterprise application is often measured in thousands of hours of rigorous engineering rather than the cleverness of a single prompt. While the early phase of generative technology relied heavily on the novelty of “magic” outputs, the focus has shifted toward the grounded reality of corporate implementation. The initial trap of prompt-driven curiosity, where clever queries were mistaken for a business strategy, has given way to a more disciplined approach.

Professional engineering demands a transition from viewing artificial intelligence as a standalone miracle to treating it as a standard, albeit complex, software challenge. In a production-ready environment, the ultimate goal is predictability, which often makes the most successful systems appear “boring” to the casual observer. This lack of drama is actually the hallmark of excellence; a system that behaves consistently under the pressure of real-world variables is far more valuable than one that offers flashes of brilliance followed by catastrophic failures.

The Illusion: Moving Beyond the Magic Button

Many early adopters viewed large language models as a “magic button” that could bypass traditional software development lifecycles. This perspective led to a surge in prototypes that looked impressive in controlled settings but crumbled when faced with the nuances of enterprise requirements. Shifting the perspective away from this illusion requires a commitment to engineering discipline, where the goal is to create systems that are as stable and measurable as any legacy database or application server.

The industry has entered a phase where the novelty of a chat interface no longer suffices to justify the investment. Instead, stakeholders are looking for integrated solutions that can perform specific, repeatable tasks without constant human intervention. By stripping away the mysticism surrounding these models, developers can concentrate on the architectural work that keeps a system production-ready, prioritizing reliability over the dopamine hit of a surprising conversational response.

Bridging the Gap: Transitioning From Hype to Utility

The “Prerequisite Era” is now a defining period for corporate technology, where foundational work must precede the pursuit of autonomous agents. Because the democratization of large language models means that model access is no longer a competitive advantage, the true differentiator has become engineering capability. The ability to wrap a model in a functional, secure, and data-aware ecosystem is what separates market leaders from those merely running expensive experiments.

When sophisticated models lack structural grounding, they often become nothing more than “polished autocomplete,” offering linguistically sound but factually hollow responses. This gap between hype and utility can only be bridged by focusing on the plumbing of the system. Success in the current landscape depends on how well a team can integrate these models into the existing software stack, so that the model is supplied with the business rules and constraints that govern daily operations.

The Pillars: Establishing Practical AI Engineering

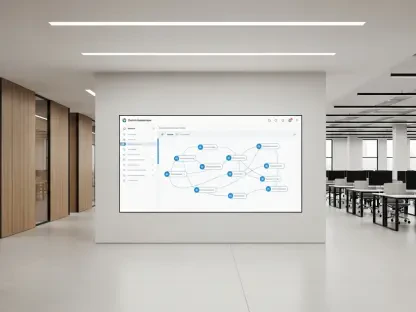

Data modeling remains a critical hurdle because enterprise data is notoriously untidy, living in fragmented silos and legacy systems. Managing the chaos of heterogeneous sources requires a rigorous approach to data engineering that ensures the model receives high-quality, relevant information. Retrieval-Augmented Generation (RAG) has evolved from a simple concept into a complex discipline involving optimized chunking, metadata design, and sophisticated indexing to ensure precision and recall.
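To make the chunking and metadata point concrete, here is a minimal sketch of one common approach: splitting a document into overlapping word windows and attaching metadata to each piece. The names `Chunk` and `chunk_document`, the window sizes, and the metadata keys are illustrative assumptions, not a prescribed design.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)


def chunk_document(text: str, source: str, max_words: int = 50, overlap: int = 10) -> list[Chunk]:
    """Split text into overlapping word windows and tag each with metadata."""
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("require max_words > 0 and 0 <= overlap < max_words")
    words = text.split()
    step = max_words - overlap  # how far each new window advances
    chunks: list[Chunk] = []
    for start in range(0, len(words), step):
        window = words[start:start + max_words]
        chunks.append(
            Chunk(
                text=" ".join(window),
                # Hypothetical metadata keys; real systems often add dates, owners, or ACLs.
                metadata={"source": source, "start_word": start, "chunk_index": len(chunks)},
            )
        )
        if start + max_words >= len(words):
            break  # this window already reached the end of the document
    return chunks
```

The overlap keeps a sentence that straddles a boundary retrievable from either side, and the `source` and `start_word` fields let an answer be traced back to where it came from. Production pipelines often split on sentences or headings instead of raw word counts.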

Beyond retrieval, the necessity of observability and security has taken center stage. Systems must include robust feedback loops to monitor accuracy and relevance, while also enforcing strict row-level security policies. Data lineage and authorization are now considered more critical than the specific tone of a model’s voice. Furthermore, the transition from stateless interactions to systems with memory allows for a deeper understanding of business history, transforming a tool into a collaborator that understands context.
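One way to picture row-level security in a retrieval pipeline is as a filter applied to retrieved records before any of them reach the prompt. The sketch below assumes each record carries a set of groups allowed to read it. `Record`, `allowed_groups`, and `authorized_records` are hypothetical names, and a real deployment would usually enforce the same policy in the database or search index as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this record (assumed field)


def authorized_records(records, user_groups):
    """Keep only records that share at least one group with the requesting user."""
    groups = frozenset(user_groups)
    return [r for r in records if r.allowed_groups & groups]
```

Filtering before prompt assembly means the model never sees text the user is not entitled to, so it cannot leak that text regardless of how the question is phrased.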

Front Lines: Insights From Professional Implementation

Consensus among experts indicates that the quality of the retriever is the single most important factor in system performance. If the data pipeline is flawed, even the most advanced model will produce subpar results, which is why the engineering of the pipeline often matters more than the choice of model. This reality is often discovered during the transition from a clean experimental notebook to a messy, integrated operational environment where data is rarely as perfect as it appears in a demo.
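Retriever quality can be measured directly instead of being inferred from the final answers. A minimal sketch, assuming a hand-labeled set of relevant document IDs per query and a ranked list of unique retrieved IDs, computes precision and recall over the top `k` results. The function name and argument layout are illustrative.

```python
def precision_recall_at_k(retrieved, relevant, k):
    """Return (precision@k, recall@k) for one query.

    retrieved: ranked list of unique document IDs returned by the retriever.
    relevant:  set of document IDs labeled as relevant for the query.
    """
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall
```

Averaging these numbers over a fixed set of labeled queries gives a score that can be tracked as chunking, metadata, or indexing choices change, independently of which model consumes the results.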

Observations from professional workshops highlight an uneven distribution of AI fluency, where many developers are still building the “muscle memory” required for these complex systems. There is a growing realization that an unconstrained agent is a security liability rather than an asset. Professional implementation now prioritizes constraints and guardrails over cleverness, ensuring that the system operates within a defined sandbox that protects the organization from unintended consequences or data leaks.
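A simple form of such a guardrail is an explicit allowlist that sits between the agent and the tools it can call. The sketch below is an assumption-laden illustration: `run_tool`, `ToolNotAllowedError`, and the two example tools are hypothetical and do not come from any particular agent framework.

```python
class ToolNotAllowedError(Exception):
    """Raised when the agent requests a tool outside its allowlist."""


def run_tool(name, arguments, registry, allowlist):
    """Execute a tool only if it is both explicitly allowed and registered."""
    if name not in allowlist:
        raise ToolNotAllowedError(f"tool {name!r} is not permitted")
    if name not in registry:
        raise KeyError(f"tool {name!r} is not registered")
    return registry[name](**arguments)


# Hypothetical tools, for illustration only.
def lookup_order(order_id: str) -> str:
    return f"status for {order_id}: shipped"


def delete_customer(customer_id: str) -> str:
    return f"deleted {customer_id}"


REGISTRY = {"lookup_order": lookup_order, "delete_customer": delete_customer}
READ_ONLY_ALLOWLIST = {"lookup_order"}  # the agent may read but never delete
```

With this arrangement, a call such as `run_tool("delete_customer", {"customer_id": "C9"}, REGISTRY, READ_ONLY_ALLOWLIST)` raises `ToolNotAllowedError` no matter what the model generated. The boundary is enforced in ordinary code instead of relying on the prompt.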

The Framework: Transitioning From Demo to Production

Organizations that successfully navigated the shift to production-ready AI established rigorous evaluation benchmarks to measure performance over time. These pioneers prioritized the “boring” infrastructure, such as stable data pipelines and governed repositories, which provided a repeatable framework for deployment. They recognized that mapping fragmented PDFs and unstructured corporate data was a necessary first step that could not be skipped if the goal was a reliable, grounded system.

By integrating these new tools into existing software engineering workflows, companies moved away from isolated experiments toward integrated platforms. This transition involved a step-by-step approach to building governed systems that respected the existing security and operational standards of the enterprise. This disciplined focus on the underlying architecture ensured that the technology remained a stable asset, allowing for a future where artificial intelligence functioned as a dependable component of the broader corporate infrastructure.