The traditional concept of a secure software perimeter has essentially evaporated as modern engineering teams now prioritize deployment velocity over the meticulous, manual scrutiny of every line of code. This shift has created a systemic vulnerability where the sheer volume of daily releases far outpaces the capacity of human security researchers to validate them. While traditional scanners provide a superficial layer of defense, they often fail to grasp the complex, multi-step logic that sophisticated attackers exploit. The emergence of autonomous security testing represents a fundamental pivot from these static, reactive methods toward a model of continuous, intelligent oversight that functions at the same speed as the development pipeline itself. This review examines how these autonomous systems are redefining the “deployment-to-validation gap” and whether they can truly replace the intuition of a seasoned penetration tester.

The Evolution of Autonomous Security Validation

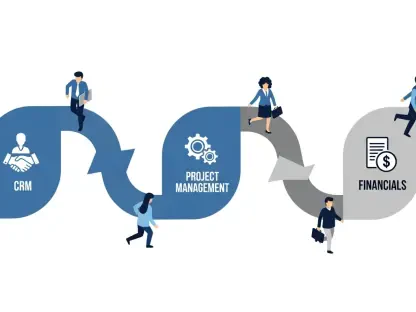

At its core, autonomous security testing is the industry’s response to the failure of periodic pentesting. In the past, a company might hire an external firm once a year to find vulnerabilities, resulting in a static report that was often obsolete by the time the next feature was pushed. The new paradigm of self-securing software moves away from these “point-in-time” snapshots toward a state of constant, automated vigilance. By integrating directly into the development workflow, the technology ensures that security is not a final hurdle before launch but an intrinsic part of the coding process. This evolution is driven by the realization that as cyber threats become more automated and AI-driven, human defenders need equally sophisticated tools to maintain parity.

The architecture of these systems focuses on closing the window of opportunity that exists between a code commit and its eventual security audit. In a landscape where nearly 80% of organizations deploy updates weekly but only a fraction validate them, the risks of “shadow” vulnerabilities are immense. Autonomous validation addresses this by creating a framework where the software environment is capable of probing its own weaknesses. This involves a shift from simple signature matching—where a tool looks for known “bad” patterns—to a more holistic understanding of application behavior. It is no longer just about finding a bug; it is about understanding how that bug interacts with the broader ecosystem of APIs, cloud infrastructure, and user permissions.

Architecture and Core Functionalities

Autonomous Offensive Agent Modeling

Unlike standard automation, which follows a rigid script, autonomous agents utilize reasoning engines to simulate the thought processes of a human attacker. These agents do not merely look for a single flaw; they chain together multiple minor issues to uncover a significant breach path. For instance, an agent might identify a seemingly harmless information leak, use that data to bypass an authentication check, and eventually escalate its privileges within the system. This ability to reason about the “seams” of an application—where different components interact—allows the technology to discover complex logic flaws that traditional scanners consistently miss. Because these agents operate with high-level context, they can navigate undocumented API endpoints and understand deep application logic that was previously only accessible to human experts.
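The chaining behavior described above can be pictured as a search over attacker capabilities. The following is a minimal sketch, not the method of any particular product: it assumes each minor issue can be modeled as a step that requires one capability and grants another, and it uses a plain breadth-first search in place of a reasoning engine. The `Finding` class, the capability names, and the sample findings are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One minor issue, modeled as a step between attacker capabilities."""
    name: str
    requires: str  # capability the attacker must already hold
    grants: str    # capability gained by exploiting the issue


def find_attack_chain(findings, start, goal):
    """Breadth-first search for the shortest chain of findings from start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        capability, chain = queue.popleft()
        if capability == goal:
            return chain
        for finding in findings:
            if finding.requires == capability and finding.grants not in seen:
                seen.add(finding.grants)
                queue.append((finding.grants, chain + [finding]))
    return None  # no combination of findings reaches the goal


# Hypothetical findings that mirror the example in the text:
# information leak -> authentication bypass -> privilege escalation.
FINDINGS = [
    Finding("verbose error leaks internal user IDs", "anonymous", "knows_user_id"),
    Finding("password reset accepts any known user ID", "knows_user_id", "authenticated"),
    Finding("role field is writable by the profile owner", "authenticated", "admin"),
    Finding("open redirect on login page", "anonymous", "phishing_link"),
]

if __name__ == "__main__":
    chain = find_attack_chain(FINDINGS, "anonymous", "admin")
    for step, finding in enumerate(chain, start=1):
        print(f"{step}. {finding.name}")
```

None of the three chained findings would rate as critical on its own, and the open redirect never enters the path. The value lies in the composition, which is what a pattern-matching scanner that scores findings individually would not surface.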

Continuous Feedback and Remediation Loops

The most significant architectural leap in this technology is the integration of “AutoFix” capabilities, which move beyond mere detection to active resolution. When a vulnerability is identified and verified through exploit simulation, the system does not just send an alert to a cluttered dashboard; it generates a merge-ready Pull Request containing the specific code fix. This reduces the friction between security and engineering teams, as developers are provided with a solution rather than just a problem. Moreover, the system performs an automated retest immediately after a fix is merged to ensure that the vulnerability has been neutralized without introducing new regressions. This closed-loop process ensures that the lifecycle of a vulnerability is measured in minutes or hours rather than weeks or months.
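The closed loop described above can be sketched as a small state machine. This is an illustration under stated assumptions rather than a real integration: `exploit_succeeds` stands in for the exploit simulation, `open_pull_request` stands in for generating a fix and having it merged, and the status names and `max_attempts` limit are invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Vulnerability:
    identifier: str
    status: str = "detected"
    history: List[str] = field(default_factory=list)


def run_remediation_loop(
    vuln: Vulnerability,
    exploit_succeeds: Callable[[], bool],   # hypothetical exploit simulation
    open_pull_request: Callable[[], None],  # hypothetical: proposes a fix that gets merged
    max_attempts: int = 3,
) -> Vulnerability:
    """Verify a finding, propose fixes, and retest until the exploit stops working."""

    def move(status: str) -> None:
        vuln.status = status
        vuln.history.append(status)

    if not exploit_succeeds():
        move("dismissed")  # could not be reproduced, so nothing is reported
        return vuln
    move("verified")

    for _ in range(max_attempts):
        open_pull_request()
        move("fix_proposed")
        if not exploit_succeeds():  # automated retest after the fix lands
            move("resolved")
            return vuln
        move("retest_failed")

    move("escalated")  # repeated failures are handed to a human
    return vuln


if __name__ == "__main__":
    app = {"patched": False}  # toy stand-in for the application under test
    result = run_remediation_loop(
        Vulnerability("demo-1"),
        exploit_succeeds=lambda: not app["patched"],
        open_pull_request=lambda: app.update(patched=True),
    )
    print(result.history)
```

The retest reuses the same exploit check that verified the finding in the first place, so a fix only counts as resolved when the original attack demonstrably stops working.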

Modern Trends in Security Automation

The current trajectory of the industry shows a decisive transition from traditional Dynamic Application Security Testing (DAST) toward context-aware, intelligent agents. Old-school DAST tools were notorious for producing “noise”—a high volume of false positives that required manual triaging, which often led to developers ignoring the results entirely. Modern autonomous tools mitigate this by prioritizing high-signal validation. By verifying every finding against a live target before reporting it, these systems ensure that only legitimate, exploitable threats reach the development team. This trend toward “security-as-code” reflects a broader cultural shift where security is treated as a functional requirement of the software rather than an external compliance check.

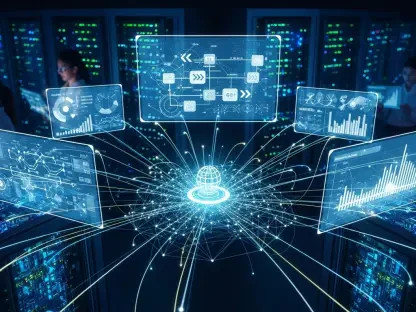

Furthermore, there is an increasing demand for tools that can see through the complexity of modern, fragmented architectures. As applications move toward microservices and serverless functions, the attack surface becomes highly distributed. The latest autonomous agents are designed to be environment-agnostic, pulling data from source code, cloud configurations, and runtime telemetry simultaneously. This unified view allows the system to identify cross-component vulnerabilities, such as a misconfigured S3 bucket that is reachable via a specific API flaw. This level of synchronization is becoming the gold standard for organizations that need to maintain a rigorous security posture without sacrificing the agility of their DevOps practices.
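As a rough illustration of that unified view, the sketch below joins two independent data sources, cloud configuration and API analysis, and reports only the combinations that matter. The record types, field names, and sample data are assumptions made for the example. A real system would populate them from its own scanners rather than from hand-written lists.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class BucketConfig:
    """A storage bucket as seen in the cloud configuration (hypothetical shape)."""
    name: str
    misconfigured: bool


@dataclass(frozen=True)
class ApiFinding:
    """An API flaw found in source or at runtime (hypothetical shape)."""
    endpoint: str
    flaw: str
    reachable_bucket: str  # bucket the flawed endpoint can be made to access


def correlate(buckets: List[BucketConfig], findings: List[ApiFinding]) -> List[str]:
    """Report only the API flaws that lead to a misconfigured bucket."""
    exposed = {bucket.name for bucket in buckets if bucket.misconfigured}
    return [
        f"{f.endpoint} ({f.flaw}) reaches misconfigured bucket '{f.reachable_bucket}'"
        for f in findings
        if f.reachable_bucket in exposed
    ]


if __name__ == "__main__":
    buckets = [
        BucketConfig("invoices", misconfigured=True),
        BucketConfig("static-assets", misconfigured=False),
    ]
    findings = [
        ApiFinding("/api/export", "path traversal", "invoices"),
        ApiFinding("/api/avatar", "missing size limit", "static-assets"),
    ]
    for line in correlate(buckets, findings):
        print(line)
```

Viewed separately, the bucket setting and the API flaw might each be triaged as low priority. The join is what turns them into a single, concrete breach path.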

Real-World Implementations and Efficacy

The practical value of autonomous security testing has been validated by its ability to uncover high-impact vulnerabilities in widely adopted frameworks. For example, recent implementations of this technology led to the discovery of critical CVEs in platforms like Coolify and SvelteKit. In the case of Coolify, autonomous agents identified Remote Code Execution (RCE) flaws that could have compromised thousands of servers. These were not simple syntax errors but complex privilege escalation paths that required an understanding of how the platform handled root access. By identifying these flaws, the technology not only protected individual users but also forced platform-wide security improvements that benefited the entire ecosystem.

In comparative tests, these autonomous systems have occasionally outperformed senior human penetration testers, particularly in identifying workflow integrity issues. While a human tester might suffer from fatigue or time constraints, an autonomous agent can exhaustively test every possible path in a document signing or payment workflow without oversight. This has been particularly evident in identifying Cross-Site Scripting (XSS) and signature forgery flaws that had remained undetected for years. These real-world successes demonstrate that while human intuition remains valuable for high-level architectural strategy, the “grunt work” of exhaustive vulnerability hunting is increasingly better handled by machine-led systems.

Technical and Operational Challenges

Despite its rapid advancement, autonomous testing faces significant hurdles, particularly when dealing with undocumented or highly unconventional application logic. Mapping the full extent of a complex enterprise application remains a technical challenge, as agents must infer the purpose of certain endpoints without the benefit of clear documentation. There is also the risk of “over-automation,” where a system might attempt a fix that, while technically secure, breaks a critical business function or violates a specific architectural constraint. Ensuring that the AI understands the “business intent” of a piece of code is a much more difficult task than identifying a technical vulnerability.

Another operational challenge lies in the human-in-the-loop requirement for sensitive decisions. While automated remediation is efficient for standard flaws like SQL injection, more nuanced issues, such as those involving complex authorization logic, still require a human to sign off on the proposed changes. There is a delicate balance to be struck between the speed of automation and the safety of manual oversight. Ongoing development is focused on assigning each proposed fix a “confidence score” and refining how those scores are calculated, so that the system can act autonomously on low-risk fixes while flagging more intrusive changes for expert review. This hybrid approach aims to maximize efficiency without introducing instability into the production environment.
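One way to picture this hybrid approach is a routing rule applied to each proposed fix. The sketch below is hypothetical throughout: the threshold, the list of sensitive areas, the size limit, and the `ProposedFix` fields are assumptions chosen for illustration, not values taken from any tool.

```python
from dataclasses import dataclass

# Assumed policy values; a real deployment would tune these.
AUTO_MERGE_THRESHOLD = 0.9
MAX_AUTO_MERGE_LINES = 20
SENSITIVE_AREAS = {"authorization", "payments"}


@dataclass(frozen=True)
class ProposedFix:
    vulnerability_class: str
    area: str            # part of the application the fix touches
    confidence: float    # score between 0.0 and 1.0 assigned by the system
    lines_changed: int


def route_fix(fix: ProposedFix) -> str:
    """Decide whether a fix may be merged automatically or needs a human."""
    if fix.area in SENSITIVE_AREAS:
        return "human_review"  # nuanced logic always gets a sign-off
    if fix.confidence >= AUTO_MERGE_THRESHOLD and fix.lines_changed <= MAX_AUTO_MERGE_LINES:
        return "auto_merge"    # small, high-confidence, low-risk change
    return "human_review"


if __name__ == "__main__":
    fixes = [
        ProposedFix("sql_injection", "search", confidence=0.97, lines_changed=4),
        ProposedFix("broken_access_control", "authorization", confidence=0.99, lines_changed=6),
        ProposedFix("xss", "comments", confidence=0.62, lines_changed=12),
    ]
    for fix in fixes:
        print(fix.vulnerability_class, "->", route_fix(fix))
```

Checking the sensitive-area rule first means that a high score can never override the human sign-off for authorization changes, which keeps the automation on the safe side of the balance described above.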

The Future of Self-Securing Software

The long-term vision for this technology is a world where security is an inseparable, intrinsic part of the software development lifecycle. We are moving toward environments where the code, the cloud infrastructure, and the runtime telemetry are all part of a single, self-correcting organism. In this future, the very concept of a “vulnerability” may change, as flaws are identified and patched in the milliseconds between a code commit and a production deployment. This would effectively close the window of opportunity for attackers, forcing them to find entirely new methods of exploitation that do not rely on unpatched software.

Future developments will likely involve deeper integration with AI-enhanced development environments, where security agents provide real-time feedback to developers as they type. Imagine a scenario where a developer is prevented from writing insecure code in the first place because an autonomous agent recognizes the dangerous pattern and suggests a secure alternative instantly. As the boundary between the “builder” and the “defender” continues to blur, the role of the security professional will evolve from a gatekeeper to an orchestrator of these autonomous systems. The ultimate impact will be a significant reduction in the global “cyber-debt” that currently plagues the industry.

Final Assessment of Autonomous Testing

The transition from reactive security triage to proactive, verified protection is not just a luxury but a necessity for the modern digital era. Autonomous security testing has proved itself to be an essential force multiplier for security teams, allowing them to scale their efforts alongside the rapid growth of their codebases. By automating the most repetitive and time-consuming aspects of vulnerability discovery and remediation, the technology frees human experts to focus on the creative and strategic challenges that machines cannot yet master. The verdict on this technology is clear: it is no longer an optional add-on but a foundational requirement for any organization serious about resilience in an automated threat landscape.

Organizations should prioritize the integration of these autonomous loops into their existing CI/CD pipelines as a primary defense mechanism. The focus must shift from simply finding bugs to establishing a verified baseline of security that is maintained automatically with every update. As the technology matures, the emphasis will move toward refining the intelligence of these agents to handle even more abstract logic flaws. Ultimately, the adoption of autonomous testing represents the moment the industry stops playing catch-up with attackers and starts building a future where software is inherently resilient by design. This shift means that the pace of innovation no longer has to be hampered by the slow, manual processes of the past.