The digital interface has grown from a simple delivery mechanism for static documents into a distributed ecosystem that demands both fast response and deep interactivity. This evolution eases a long-standing tension between server-side reliability and client-side fluidity. Modern web application architecture is no longer about choosing a single platform or language; it is an exercise in strategic orchestration across global networks. This review examines the maturity of these architectural frameworks and how they have moved beyond the limitations of early scripts to become resilient, multi-runtime systems capable of powering demanding enterprise tools and consumer experiences.

The Evolution of Web Architectural Frameworks

The journey of web architecture reflects a decades-long struggle to balance the heavy lifting of the server with the nimble nature of the browser. In the early stages of the web, the server was the sole source of intelligence, generating finished HTML pages and delivering them to relatively passive clients. While this provided a stable foundation for SEO and accessibility, it lacked the responsiveness required for modern software. The subsequent rise of Single-Page Applications (SPAs) attempted to solve this by moving most of the application logic to the browser. However, this shift introduced a new set of problems, primarily the “blank screen” effect, in which users waited for large JavaScript bundles to download and execute before seeing any content.

Today, the industry has arrived at a sophisticated middle ground. Contemporary frameworks have evolved from simple server-side scripts into complex distributed systems that leverage the strengths of both environments. This context is vital because it explains why we no longer see a “one size fits all” approach. The modern framework is designed to be environment-aware, executing code on the server, at the network edge, or in the browser based on what will provide the fastest and most reliable user experience. This shift represents a transition from building “sites” to engineering long-lived software systems that survive across diverse hardware and network conditions.

Core Components of Modern Hybrid Architectures

Enhanced Server-Side Rendering and Hydration

Modern server-side rendering (SSR) has been reimagined as a performance foundation rather than a final destination. Unlike the static HTML generation of the past, current SSR serves as a bridge to interactivity through a process known as hydration. The server delivers a fully formed visual representation of the page, allowing the user to begin reading almost instantly. Behind the scenes, the client-side framework then “hydrates” this static content, attaching event listeners and state management logic without requiring a page refresh. This dual approach keeps the initial render fast, even on low-powered mobile devices that struggle with heavy script execution, although the page does not fully respond to input until hydration completes.

The significance of this evolution lies in the granular control it offers developers. Hydrating an entire page at once can lock up the browser’s main thread, so modern architectures pair “streaming SSR,” which sends HTML to the browser in chunks as each part becomes ready, with selective or progressive hydration. Together, these techniques allow critical components like the navigation bar or a product image to become interactive first, while less essential elements load in the background. By breaking the hydration process into staged intervals, frameworks reduce the “Time to Interactive” metric, so the interface feels responsive early in the user’s visit.
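
The staged hydration described above can be sketched as a small scheduler. This is a minimal illustration under stated assumptions, not any framework's actual API: `HydrationTask`, `planHydration`, and `runHydration` are hypothetical names, and the priorities are assumed to be assigned by the developer.

```typescript
// Hypothetical sketch: these names do not come from a real framework.
type HydrationTask = {
  id: string;          // component identifier, e.g. "nav"
  priority: number;    // lower number = hydrate sooner
  hydrate: () => void; // attaches listeners and state for this component
};

// Order tasks by priority and split them into batches, so each batch
// can run in its own slice of main-thread time.
function planHydration(tasks: HydrationTask[], batchSize: number): HydrationTask[][] {
  if (batchSize < 1) throw new Error("batchSize must be at least 1");
  const ordered = [...tasks].sort((a, b) => a.priority - b.priority);
  const batches: HydrationTask[][] = [];
  for (let i = 0; i < ordered.length; i += batchSize) {
    batches.push(ordered.slice(i, i + batchSize));
  }
  return batches;
}

// Run the batches in order, yielding between them. The default yield only
// defers to the microtask queue; in a browser the caller would pass a
// function that waits on a timer or an idle callback instead.
async function runHydration(
  batches: HydrationTask[][],
  yieldToBrowser: () => Promise<void> = () => Promise.resolve(),
): Promise<string[]> {
  const hydratedOrder: string[] = [];
  for (const batch of batches) {
    for (const task of batch) {
      task.hydrate();
      hydratedOrder.push(task.id);
    }
    await yieldToBrowser();
  }
  return hydratedOrder;
}
```

With a batch size of 2, a navigation bar at priority 1 and a product image at priority 2 would hydrate together in the first batch, and a footer at priority 3 would wait for the second.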

The Server as a UI State Manager

A fundamental shift is occurring where the server is reclaiming its role as the primary manager of the user interface state. In previous years, the trend was to send raw JSON data from an API and let the browser transform it into a viewable format. This often resulted in “janky” experiences as the client struggled to filter, sort, and map complex data sets. Modern architectures now favor “UI-ready view models” generated on the server. By shifting data transformation logic away from the client, developers reduce the processing burden on end-user hardware, ensuring a consistent experience regardless of whether a user is on a high-end desktop or a budget smartphone.

This approach effectively turns the server into a high-performance engine for UI preparation. When the server shapes the state, it can aggregate data from multiple microservices and databases with minimal latency, thanks to its proximity to those data sources. This reduces the number of network requests the browser has to make, which is a major win for users on unstable mobile networks. Furthermore, by keeping complex business logic on the server, the client-side bundle remains slim, focusing entirely on user interaction rather than heavy data processing.
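
A server-side view model builder might look like the following sketch. The record shapes, the `buildProductListViewModel` name, and the low-stock threshold are all assumptions made for illustration; the point is only that filtering, sorting, and formatting happen before anything is sent to the client.

```typescript
// Raw records as two separate backend services might return them
// (hypothetical shapes, not taken from any real API).
interface ProductRecord { id: string; name: string; priceCents: number; }
interface StockRecord { productId: string; quantity: number; }

// What the client receives: already filtered, sorted, and formatted.
interface ProductCardViewModel {
  id: string;
  title: string;
  priceLabel: string;
  availability: "In stock" | "Low stock";
}

function buildProductListViewModel(
  products: ProductRecord[],
  stock: StockRecord[],
  lowStockThreshold: number = 5, // illustrative default
): ProductCardViewModel[] {
  // Join the two data sources on the server, close to where they live.
  const quantityById = new Map<string, number>();
  for (const entry of stock) quantityById.set(entry.productId, entry.quantity);

  return products
    .filter((p) => (quantityById.get(p.id) ?? 0) > 0) // hide sold-out items
    .sort((a, b) => a.priceCents - b.priceCents)      // cheapest first
    .map((p): ProductCardViewModel => {
      const quantity = quantityById.get(p.id) ?? 0;
      return {
        id: p.id,
        title: p.name,
        priceLabel: "$" + (p.priceCents / 100).toFixed(2),
        availability: quantity <= lowStockThreshold ? "Low stock" : "In stock",
      };
    });
}
```

The browser then only has to render the returned cards; it never sees the stock service or the raw price in cents.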

Emerging Trends in Distributed System Design

The architectural map is expanding toward the “edge,” moving logic closer to the user than ever before. Edge computing platforms and global content delivery networks (CDNs) have evolved from simple caches for static assets into “multi-runtime” environments capable of executing code. This means a request can be intercepted by a server located near the user, often in the same city or region, which can then personalize content or verify authentication headers before the request even reaches the main data center. This decentralization minimizes the physical distance data must travel, sharply reducing the latency that has traditionally plagued global applications.
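
The interception step can be sketched without tying it to a specific edge platform. Everything here is hypothetical: the `EdgeRequest` and `EdgeDecision` shapes, the `x-content-language` header, and the assumption that header names arrive lowercased. Token validation is passed in as a function because the source does not describe how it is done.

```typescript
// Platform-neutral shapes (assumptions for this sketch only).
type HeaderMap = Readonly<Record<string, string | undefined>>;

interface EdgeRequest {
  path: string;
  headers: HeaderMap; // header names assumed to be lowercase
}

type EdgeDecision =
  | { kind: "reject"; status: number; body: string }
  | { kind: "forward"; headers: HeaderMap };

function handleAtEdge(
  request: EdgeRequest,
  isTokenValid: (token: string) => boolean,
): EdgeDecision {
  // 1. Verify the authentication header before touching the origin.
  const authorization = request.headers["authorization"] ?? "";
  const prefix = "Bearer ";
  const token = authorization.startsWith(prefix)
    ? authorization.slice(prefix.length)
    : "";
  if (token === "" || !isTokenValid(token)) {
    return { kind: "reject", status: 401, body: "Unauthorized" };
  }

  // 2. Personalize: tag the request with the visitor's first preferred
  //    language so the origin can pick matching content.
  const language = (request.headers["accept-language"] ?? "en").split(",")[0];
  return {
    kind: "forward",
    headers: { ...request.headers, "x-content-language": language },
  };
}
```

Rejected requests never leave the edge location, which is where the latency saving for unauthenticated traffic would come from.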

Moreover, we are seeing the rise of “isomorphic” codebases that can run across these different environments. A single piece of logic might run at the edge to check a cookie, on the server to fetch data, and in the browser to update a form. This fluidity allows for a more resilient system; if a specific runtime fails or experiences high load, the architecture can fall back or shift responsibilities to another layer. This trend indicates a future where the distinction between “frontend” and “backend” continues to blur into a single, cohesive execution stream.
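
As a rough sketch of both ideas, the cookie reader below uses only language built-ins, so the same function could in principle run in any of the three environments, and `runWithFallback` tries each runtime in order until one succeeds. The function names and the synchronous, in-process model of a "runtime" are simplifying assumptions; a real system would dispatch across the network.

```typescript
// Isomorphic helper: no DOM, Node, or edge-specific APIs are used.
function readCookie(cookieHeader: string, name: string): string | undefined {
  for (const part of cookieHeader.split(";")) {
    const [key, ...rest] = part.trim().split("=");
    if (key === name) return rest.join("="); // values may contain "="
  }
  return undefined;
}

type RuntimeName = "edge" | "server" | "browser";

interface RuntimeAttempt<T> {
  runtime: RuntimeName;
  run: () => T; // hypothetical: stands in for executing logic in that layer
}

// Try each layer in the given order; move to the next one on failure.
function runWithFallback<T>(
  attempts: RuntimeAttempt<T>[],
): { runtime: RuntimeName; value: T } {
  const failures: string[] = [];
  for (const attempt of attempts) {
    try {
      return { runtime: attempt.runtime, value: attempt.run() };
    } catch (err) {
      const reason = err instanceof Error ? err.message : String(err);
      failures.push(attempt.runtime + ": " + reason);
    }
  }
  throw new Error("all runtimes failed (" + failures.join("; ") + ")");
}
```

If the edge attempt throws, the same `readCookie` call can be retried in the server attempt, and the result records which layer actually answered.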

Real-World Implementations and Sector Impact

In the e-commerce sector, these hybrid strategies have become a competitive necessity. For a retail giant, a delay of even a few hundred milliseconds can lead to a measurable drop in conversion rates. By utilizing a “staged” architecture, these platforms can deliver SEO-optimized, static content for landing pages while using highly interactive client-side logic for the shopping cart and checkout flows. This allows them to maintain a strict performance budget without sacrificing the dynamic features that modern shoppers expect, such as real-time inventory updates and personalized recommendations.

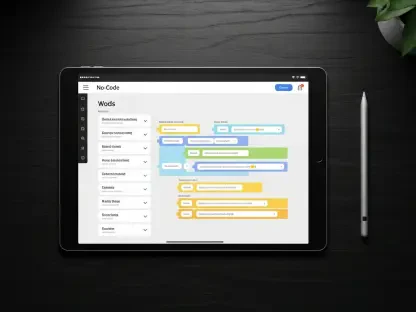

Software-as-a-Service (SaaS) platforms also benefit significantly from this architectural nuance. Managing a complex authenticated dashboard requires a delicate balance of security and speed. While internal tools and administrative panels require high interactivity and real-time state management, the initial entry points and documentation must remain lightweight. Hybrid architectures allow these platforms to maintain “SEO-friendly” public faces while their internal tools function as robust, desktop-like applications. This flexibility ensures that the complexity of the product does not hinder its discoverability or the user’s initial onboarding experience.
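
One way to express this split is a per-route rendering table. The sketch below is illustrative only: the paths, the three strategy names, and the longest-prefix matching rule are assumptions, not a description of how any particular framework configures routes.

```typescript
type RenderStrategy = "static" | "server-rendered" | "client-interactive";

interface RouteRule {
  prefix: string;
  strategy: RenderStrategy;
}

// Hypothetical routes covering the retail and SaaS cases discussed above.
const routeRules: RouteRule[] = [
  { prefix: "/", strategy: "static" },                    // landing pages
  { prefix: "/docs", strategy: "static" },                // public documentation
  { prefix: "/cart", strategy: "client-interactive" },
  { prefix: "/checkout", strategy: "client-interactive" },
  { prefix: "/dashboard", strategy: "client-interactive" },
];

// Pick the rule with the longest prefix that matches on a path-segment
// boundary, so "/cartoon" does not match the "/cart" rule.
function strategyFor(path: string, rules: RouteRule[] = routeRules): RenderStrategy {
  let best: RouteRule | undefined;
  for (const rule of rules) {
    const boundary = rule.prefix.endsWith("/") ? rule.prefix : rule.prefix + "/";
    const matches = path === rule.prefix || path.startsWith(boundary);
    if (matches && (best === undefined || rule.prefix.length > best.prefix.length)) {
      best = rule;
    }
  }
  // Assumed default when nothing matches.
  return best ? best.strategy : "server-rendered";
}
```

Keeping the decision in one table makes the trade-off explicit for each part of the product instead of forcing a single rendering model onto all of it.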

Technical Constraints and Operational Hurdles

Despite these advancements, web application architecture faces significant “architectural friction.” This often stems from a dogmatic adherence to single-stack solutions where teams attempt to force one rendering model onto every part of a complex application. For instance, using a heavy client-side framework for a simple content-heavy blog is as inefficient as using a static site generator for a real-time trading platform. The complexity of debugging distributed logic also remains a major hurdle; when code runs across the edge, the server, and the client, identifying the exact point of failure requires sophisticated observability tools that many teams are still struggling to implement.

Operational overhead is another concern, as maintaining a multi-runtime system requires a broader range of expertise than traditional monolithic development. Developers must now understand network protocols, cache invalidation strategies, and the nuances of different execution environments. To mitigate these limitations, the industry is moving toward more transparent framework abstractions. These tools aim to hide the underlying complexity of data fetching and state synchronization, allowing developers to focus on features while the framework handles the staged orchestration of the architecture.

Future Trajectory of Web Systems

The horizon of web architecture is defined by fine-grained reactivity and automated optimization. We are moving toward a state where frameworks will no longer require full page hydration; instead, they will only “wake up” the specific components a user interacts with. This concept of “resumability” ensures that the work done on the server is preserved and continued in the browser, rather than being discarded and rebuilt. Such a shift will drastically reduce the execution time required on the client side, making the web more accessible to users in regions with limited hardware capabilities or expensive data plans.
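
The idea can be illustrated with a toy model in which the server serializes each component's state and the name of its handler, and the client resumes from that snapshot without re-running anything until an interaction occurs. This is a conceptual sketch, not how any specific resumable framework is implemented; `serializeSnapshot`, `resumeApp`, and the numeric state are assumptions chosen to keep the example small.

```typescript
type Handler = (state: number) => number;

interface ComponentSnapshot {
  id: string;
  handler: string; // name of the handler to load when the user interacts
  state: number;   // state computed on the server
}

// Server side: capture the finished state alongside the rendered HTML.
function serializeSnapshot(components: ComponentSnapshot[]): string {
  return JSON.stringify(components);
}

// Client side: read the snapshot, but do no component work up front.
function resumeApp(
  serialized: string,
  handlers: Record<string, Handler | undefined>,
) {
  const snapshots = new Map<string, ComponentSnapshot>();
  for (const snapshot of JSON.parse(serialized) as ComponentSnapshot[]) {
    snapshots.set(snapshot.id, snapshot);
  }
  const awake = new Set<string>();

  return {
    // Only the component the user touches is "woken up".
    interact(id: string): number {
      const snapshot = snapshots.get(id);
      if (snapshot === undefined) throw new Error("unknown component: " + id);
      const handler = handlers[snapshot.handler];
      if (handler === undefined) throw new Error("unknown handler: " + snapshot.handler);
      awake.add(id);
      snapshot.state = handler(snapshot.state); // continue from server state
      return snapshot.state;
    },
    awakeIds(): string[] {
      return Array.from(awake);
    },
  };
}
```

A counter that the server rendered at 41 continues to 42 on the first click, while components the user never touches stay dormant.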

Strategic flexibility will also be driven by automated systems that adjust an application’s behavior based on real-time network conditions. Imagine an architecture that automatically serves a lightweight, text-heavy version of a page when it detects a weak 3G connection, but switches to a rich, media-heavy interactive experience on a high-speed fiber line. This level of responsiveness will mark the next phase of digital accessibility, where the web system is not just a static target but a living entity that adapts its own architecture to meet the user where they are.
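
A minimal sketch of such a decision follows. The connection categories mirror the `effectiveType` values and the `saveData` flag exposed by the browser Network Information API, but how those hints reach the server, the variant names, and the 10 Mbps threshold are all assumptions made for illustration.

```typescript
type EffectiveConnection = "slow-2g" | "2g" | "3g" | "4g";

interface NetworkHints {
  effectiveType: EffectiveConnection;
  downlinkMbps: number; // estimated bandwidth
  saveData: boolean;    // user has asked for reduced data usage
}

type PageVariant = "text-first" | "standard" | "rich-media";

// Arbitrary illustrative threshold, not a recommended value.
const RICH_MEDIA_MIN_MBPS = 10;

function choosePageVariant(hints: NetworkHints): PageVariant {
  // An explicit user preference or a weak connection wins outright.
  if (hints.saveData || hints.effectiveType !== "4g") return "text-first";
  // On a good connection, use bandwidth to decide how much media to send.
  return hints.downlinkMbps >= RICH_MEDIA_MIN_MBPS ? "rich-media" : "standard";
}
```

A visitor on a weak 3G connection would receive the text-first variant, while the same page on a fast line would be served with its full media.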

Final Assessment and Review Summary

The review of modern web application architecture revealed a definitive shift away from ideological extremes toward a more practical, nuanced engineering philosophy. The industry successfully transitioned from the simplicity of server-side scripts to the complexity of distributed systems, ultimately landing on a hybrid model that prioritizes the user experience above all else. This evolution proved that neither the server nor the client can solve every challenge in isolation; instead, their combined strengths form the backbone of the modern digital landscape.

Development teams began to embrace the reality that performance and interactivity are not mutually exclusive, provided that the architecture is built with explicit trade-offs in mind. The move toward UI view models and edge computing effectively reduced the processing burden on users, while frameworks provided the necessary tools to manage this complexity. Ultimately, the maturity of these systems demonstrated that the most resilient web applications were those that rejected architectural dogmatism in favor of strategic flexibility and a deep understanding of operational constraints.