The architectural foundation of the global software economy is undergoing a tectonic shift as generative AI moves from an experimental novelty to a primary engine of enterprise value. This transformation is the most significant departure from the traditional cloud subscription model since the industry moved away from on-premise installations decades ago. The software market is no longer defined by remote accessibility alone but by the depth of its integrated AI-native ecosystems. Businesses are increasingly moving away from static toolsets toward dynamic environments where the software itself participates in the creation and refinement of data rather than merely serving as a repository for it.

The fundamental shift in the SaaS paradigm is redefining how data is organized and how digital tools deliver value to the end user. In the past, the value proposition centered on efficiency and centralized record-keeping; however, the integration of generative models has turned software into a predictive and generative partner. Monetization strategies are consequently evolving to capture this new layer of intelligence, moving past the rigid structures of the previous era. This evolution is not happening in a vacuum, as the current market is shaped by a complex intersection of hyperscalers providing the raw compute power and nimble model providers offering the underlying linguistic and cognitive frameworks.

Technological infrastructure serves as the critical backbone for this new software architecture, requiring a fundamental rethink of hardware and delivery systems. Specialized chips and large language models have become as essential to the modern stack as the database or the user interface once were. Software providers are now tasked with managing complex cloud delivery systems that can handle the massive inference requirements of generative tasks without sacrificing the latency expectations of enterprise users. As these systems become more sophisticated, the distinction between the application layer and the underlying model is blurring, creating a highly integrated technical environment.

Disruptive Forces and Emerging Growth Pathways

The Coding Paradox and the Rise of Intelligent Agents

The democratization of development has created a peculiar paradox within the engineering landscape where the actual production of code is becoming a commodity. As AI-generated code reduces the scarcity of technical talent and lowers the cost of building complex features, the competitive advantage of having a large engineering team is diminishing. This shift allows smaller teams to build robust, feature-rich applications that would have previously required hundreds of developers. Consequently, the industry is seeing a surge of innovation from new entrants who can prototype and deploy at speeds that were once considered impossible for any startup.

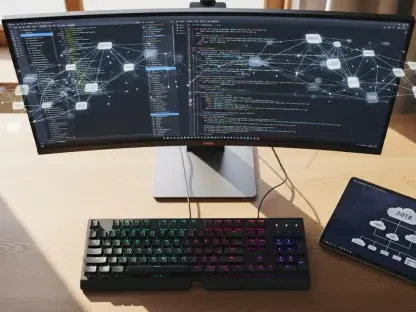

Beyond simple execution, software is evolving from a passive environment into a network of autonomous, context-aware agents. These intelligent partners do not wait for a user to click a button but instead monitor workflows and suggest or execute actions based on real-time business logic. This transition represents a shift from tools that a human operates to partners that operate on behalf of the human. Furthermore, these agents enable hyper-personalization at a scale never before seen, allowing for bespoke user experiences where every interface and automation sequence is tailored to the specific needs of an individual worker or industry vertical.

Projecting Market Trajectories and Performance Metrics

The total addressable market for software is expanding as AI integration allows digital tools to capture a larger share of enterprise spending by automating high-value cognitive tasks. Previously, software was largely limited to administrative or organizational functions, but today it is taking on roles in creative design, legal analysis, and strategic planning. This expansion suggests that while the cost per unit of work may drop, the volume of work handled by software will increase substantially. This creates fertile ground for growth as companies reallocate human labor budgets toward sophisticated AI-driven software solutions.

Predicting revenue has become a central challenge for analysts as the industry transitions from predictable subscription models to more variable consumption-based frameworks. This shift affects long-term valuations, as recurring revenue now depends more on actual usage and the success of AI-driven outcomes than on the number of seats sold. To maintain profitability, SaaS providers are leaning into an efficiency dividend, using internal AI tools to automate their own support, sales, and development functions. This internal adoption helps offset the rising costs associated with delivering high-compute intelligence to their customers.

Structural Obstacles and Competitive Complexities

The erosion of per-seat economics represents one of the most significant risks to the legacy software model as AI augmentation multiplies worker productivity. If a single employee can now perform the tasks that previously required three people, the total number of software licenses needed by a corporation may contract significantly. This creates survival pressure for vendors who have historically relied on growing their user base to drive revenue growth. To survive, these providers must find ways to charge for the value and output produced by their AI, rather than the headcount of the people using the interface.
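
The seat-contraction arithmetic above can be made concrete with a short sketch. Every figure here (300 seats, a 3x productivity multiplier, a $50 seat price, 30,000 tasks per month) is a hypothetical value chosen only for illustration, and the function names are assumptions rather than any vendor's actual pricing logic.

```python
import math


def seats_needed(baseline_seats: int, productivity_multiplier: float) -> int:
    """Seats required to cover the same workload once each worker is
    `productivity_multiplier` times as productive (rounded up)."""
    if productivity_multiplier <= 0:
        raise ValueError("productivity_multiplier must be positive")
    return math.ceil(baseline_seats / productivity_multiplier)


def monthly_seat_revenue(seats: int, price_per_seat: int) -> int:
    """Revenue under a plain per-seat subscription."""
    return seats * price_per_seat


def break_even_price_per_task(target_revenue: int, tasks_per_month: int) -> float:
    """Per-task price that would recover `target_revenue` if the vendor
    billed for output instead of headcount."""
    return target_revenue / tasks_per_month


if __name__ == "__main__":
    # Hypothetical customer: 300 seats at $50, then a 3x productivity gain.
    before = monthly_seat_revenue(300, 50)
    after = monthly_seat_revenue(seats_needed(300, 3), 50)
    print("per-seat revenue before:", before)
    print("per-seat revenue after:", after)
    # Hypothetical workload of 30,000 tasks per month, unchanged by the gain.
    print("break-even price per task:", break_even_price_per_task(before, 30_000))
```

The point of the sketch is that the workload stays constant while the billable headcount shrinks, so a vendor pricing on output needs only a per-task rate that recovers the original revenue.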

Gross margin compression is another looming threat as the industry moves from near-zero incremental distribution costs to token-based delivery models. Every generative response carries a real compute cost, which behaves more like a manufacturing expense than a traditional software distribution cost. Navigating this shift requires a sophisticated approach to model routing and cost optimization so that delivering high-quality intelligence does not erode the entire margin. Additionally, the rise of AI-first competitors and automated data migration tools is lowering the barriers to entry and reducing the switching costs that once kept customers locked into a specific ecosystem.
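
A minimal sketch of how model routing might protect gross margin follows. The two models, their per-token prices, the quality tiers, and the request mix are all hypothetical assumptions for illustration; real routing systems weigh many more signals than a single tier number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    usd_per_1k_tokens: float  # hypothetical price, not a real vendor rate
    quality_tier: int         # hypothetical capability level (higher = stronger)


# Hypothetical catalog: one cheap model, one expensive model.
MODELS = [Model("small", 0.002, 1), Model("large", 0.03, 3)]


def route(required_tier: int, models: list[Model]) -> Model:
    """Pick the cheapest model whose tier meets the request's requirement."""
    eligible = [m for m in models if m.quality_tier >= required_tier]
    if not eligible:
        raise ValueError(f"no model satisfies tier {required_tier}")
    return min(eligible, key=lambda m: m.usd_per_1k_tokens)


def serving_cost(requests: list[tuple[int, int]], models: list[Model],
                 use_routing: bool) -> float:
    """Total compute cost for (tokens, required_tier) requests.
    Without routing, every request goes to the strongest model."""
    strongest = max(models, key=lambda m: m.quality_tier)
    total = 0.0
    for tokens, tier in requests:
        model = route(tier, models) if use_routing else strongest
        total += tokens / 1000 * model.usd_per_1k_tokens
    return total


def gross_margin(revenue: float, cogs: float) -> float:
    """Gross margin as a fraction of revenue."""
    return (revenue - cogs) / revenue


# Hypothetical mix: 900 simple requests and 100 demanding ones.
REQUESTS = [(1000, 1)] * 900 + [(2000, 3)] * 100

if __name__ == "__main__":
    revenue = 100.0  # hypothetical revenue attributed to these requests
    for routed in (False, True):
        cost = serving_cost(REQUESTS, MODELS, use_routing=routed)
        print(f"routing={routed}: cost=${cost:.2f}, "
              f"margin={gross_margin(revenue, cost):.1%}")
```

Under these assumed numbers, sending only the demanding requests to the expensive model cuts compute cost substantially, which is the kind of effect the paragraph above attributes to routing and cost optimization.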

The Regulatory Framework and Security Imperatives

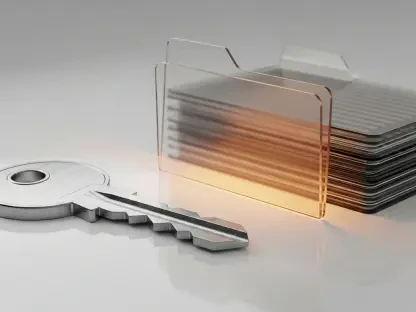

Managing data governance in the age of inference has introduced a layer of complexity regarding proprietary information and privacy laws. As software models are trained and refined on user data, the legal boundaries of intellectual property and consent are being tested in jurisdictions worldwide. Companies must now implement rigorous systems to ensure that their models do not inadvertently leak sensitive corporate secrets or violate international privacy standards. Enterprise-grade security has become a primary competitive moat, as customers are only willing to integrate AI into their core workflows if they trust the provider to handle their data with absolute integrity.

Evolving global standards are forcing software providers to navigate a patchwork of international regulations that impact how and where data can be processed. Cross-border data flows are no longer a simple matter of server location but involve the legal status of where an AI model was trained and where its inference takes place. Compliance is moving from a back-office necessity to a core product feature that distinguishes established players from unregulated startups. This regulatory landscape ensures that trust remains a central component of the software value proposition, as clients prioritize stability and legal safety over raw performance.

The Horizon of Innovation and Market Disruption

Competition is rapidly moving away from the sheer number of features toward the quality and exclusivity of proprietary datasets. In an era where any developer can generate code, the true differentiator is the ability to train models on high-quality, industry-specific data that others cannot access. This shift favors incumbents who have spent years accumulating deeply nested data within specialized workflows. However, there is a constant threat of model disintermediation, where foundational model providers might build specialized workflows directly into their core systems, potentially bypassing the need for separate SaaS applications altogether.

The future of the industry points toward value-based pricing where customers pay for specific outcomes rather than just access. This might mean paying for a successful lead generated, a legal contract reviewed, or a bug fixed by an AI agent. At the same time, the resurgence of bespoke internal solutions is a growing trend, as low-code and no-code AI tools empower non-technical staff to build their own software alternatives. These custom internal tools could eventually replace many general-purpose SaaS subscriptions, forcing vendors to focus on highly complex or regulated niches where DIY solutions are insufficient.
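
The outcome-based pricing described at the start of the paragraph above can be sketched as a simple invoice calculation. The outcome names mirror the examples in the text, but the prices and the event format are hypothetical assumptions, not a description of any real billing system.

```python
from collections import Counter

# Hypothetical price list, in cents per successful outcome.
OUTCOME_PRICES_CENTS = {
    "lead_generated": 500,
    "contract_reviewed": 2500,
    "bug_fixed": 1500,
}


def invoice_cents(events: list[tuple[str, bool]]) -> int:
    """Bill only successful outcomes.

    `events` is a list of (outcome_type, succeeded) pairs. Failed attempts
    cost the customer nothing; an unknown outcome type raises KeyError.
    """
    billable = Counter(kind for kind, succeeded in events if succeeded)
    return sum(OUTCOME_PRICES_CENTS[kind] * count
               for kind, count in billable.items())


if __name__ == "__main__":
    events = [
        ("lead_generated", True),
        ("lead_generated", False),  # failed attempt, not billed
        ("bug_fixed", True),
    ]
    print("invoice (cents):", invoice_cents(events))
```

Note that under this scheme the vendor absorbs the compute cost of failed attempts, which is one reason outcome pricing ties revenue so closely to the quality of the underlying AI.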

Strategic Imperatives for the Future of SaaS

The strategic evolution of the software industry suggests that the narrative regarding the imminent demise of the SaaS model is largely exaggerated. While the fundamental ways in which software is built and sold are changing, the indispensable value of industry context and distribution networks remains intact. Successful firms are pivoting by integrating intelligence as a core service rather than a peripheral feature. These organizations are moving beyond the limitations of per-seat pricing and embracing usage-based models that more accurately reflect the productivity gains their tools provide to the enterprise.

Resilience in this new era belongs to those who leverage deep domain expertise to maintain high switching costs through superior workflow integration. Investors and analysts are focused on identifying the winners that successfully transition from providing passive tools to delivering active intelligence. By prioritizing the quality of proprietary data and the security of their AI deployments, these companies can solidify their positions as essential partners in the modern economy. The most successful strategies combine the agility of AI-native development with the reliability of established enterprise software standards, supporting long-term growth in a transformed technological landscape.