The shift in open-source dynamics from a grassroots movement to a strategic corporate necessity marks a new era in cloud-native infrastructure. Anand Naidu, a seasoned development expert with deep proficiency in both frontend and backend systems, joins us to discuss how the “plumbing” of the digital world—Kubernetes, OpenTelemetry, and Cilium—has become the ultimate control plane for the AI revolution. In this conversation, we explore the strategic motivations of industry giants like Red Hat, Microsoft, and Google, the evolving role of independent contributors, and why the “boring” layers of infrastructure are now the most hard-fought territories in technology.

If open source is moving away from being a developer-led “morality play” toward a strategic control plane, how does this shift change the way vendors prioritize their upstream contributions? What specific business advantages do companies gain by shaping the “dull” plumbing of the AI stack?

The romantic era of contributing to open source purely for “civic virtue” has largely been replaced by cold, hard product strategy. Today, vendors prioritize upstream contributions because they want to set the defaults and normalize the interfaces that everyone else has to live with. When a company like Red Hat leads CNCF activity with 194,699 contributions, it isn’t just being helpful; it is ensuring that its application platforms remain the standard for the 82% of container users running Kubernetes in production. By shaping this “dull” plumbing, businesses gain massive leverage over the entire ecosystem built on top of it, essentially turning infrastructure into a strategic moat. It’s about ensuring that when an organization scales its AI workloads, the underlying substrate is already optimized for that vendor’s specific products and services.

With Red Hat, Microsoft, and Google leading contribution activity, the center of gravity has shifted toward corporate interests. How does this heavy institutional investment affect the long-term neutrality of these projects, and what roles do independent contributors still play in an increasingly corporate landscape?

While the “center of gravity” is undeniably corporate, the sheer diversity of these giants often acts as a check and balance against any single entity seizing total control. Microsoft, for instance, has moved from open-source hostility to being the second-largest contributor with 107,645 contributions, creating a competitive tension that actually helps harden these projects into universal standards. Independent contributors still represent a vital force, holding the fourth-place spot in CNCF rankings with 52,404 contributions, which serves as a crucial “sanity check” for the community. This mix ensures that while the “plumbing” is professionally maintained by those with the deepest pockets, the code remains inspectable and influenced by a wider range of developers who care about the technical integrity of the tools.

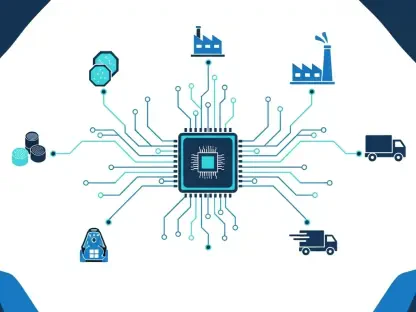

OpenTelemetry and Cilium have seen massive growth in contributors as organizations scale their distributed workloads. Why are these specific layers becoming mission-critical for AI production, and how do standardized observability and networking interfaces help teams manage the high costs and latencies of AI systems?

As AI moves into production, the “boring” infrastructure layers like Cilium and OpenTelemetry become the difference between a successful deployment and a costly failure. OpenTelemetry saw a 39% rise in commits in 2025 because teams are desperate for standardized observability to track the opaque behavior of distributed AI models. Cilium has seen its contributing companies jump 90% to over 1,000 because it sits at the intersection of networking and security—the very place where latency-sensitive AI workloads are won or lost. These tools provide the visibility needed to manage the high costs of compute, ensuring that developers aren’t just throwing money at GPUs without understanding where the bottlenecks are occurring.

While many AI models remain closed, the underlying infrastructure relies heavily on Kubernetes for inference and training. How is the integration of GPU-native schedulers and orchestration tools changing the operational assumptions of data scientists, and what steps should teams take to ensure their infrastructure remains inspectable?

We are seeing a fundamental shift where Kubernetes is becoming the “de facto operating system” for AI, with 66% of organizations already using it for generative AI inference. This integration forces data scientists to move away from isolated notebooks and toward a world where GPU-native schedulers, like those open-sourced by Nvidia, manage the complex orchestration of training jobs. To maintain inspectability, teams must lean into open standards rather than proprietary wrappers, ensuring they can peek under the hood of their clusters to see how resources are being allocated. The goal is to move away from “black box” infrastructure, as few organizations want to build their future on a foundation they cannot audit or influence when performance issues inevitably arise.

Nvidia has become a key contributor to Kubernetes and Kubeflow despite its dominant hardware position. Why is a chip manufacturer investing so heavily in software orchestration communities, and what metrics should organizations track to ensure their software layers are actually optimizing their expensive hardware investments?

Nvidia understands that selling the world’s most powerful chips is only half the battle; if the software layer can’t coordinate those chips efficiently, the hardware’s value plummets. This is why they ranked 14th in Kubernetes contributions with nearly 6,000 commits and are heavily involved in the Kubeflow community. They aren’t just selling silicon; they are investing in the workflow layers that determine how effectively those chips are utilized in a production environment. Organizations should track metrics like GPU utilization rates and scheduling latency within their orchestration tools to ensure that their software “plumbing” isn’t leaving expensive hardware idle or under-optimized.
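To make those two metrics concrete, here is a minimal Python sketch of how a team might summarize them once the raw numbers have been exported from a cluster. Everything in it is an assumption for illustration: the `PodTiming` record, the 10% idle threshold, and the sample values are hypothetical, and the code does not call any Kubernetes or Nvidia API.

```python
import math
from dataclasses import dataclass
from statistics import mean


@dataclass
class PodTiming:
    """Hypothetical record: when a GPU pod was created and when it was scheduled."""
    created_s: float
    scheduled_s: float


def scheduling_latencies(pods):
    """Seconds each pod waited between creation and being placed on a node."""
    return [p.scheduled_s - p.created_s for p in pods]


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not values:
        raise ValueError("percentile() needs at least one value")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def idle_fraction(utilization_pct, idle_below=10.0):
    """Share of samples below an assumed 'idle' threshold (10% by default)."""
    if not utilization_pct:
        raise ValueError("idle_fraction() needs at least one sample")
    idle = sum(1 for u in utilization_pct if u < idle_below)
    return idle / len(utilization_pct)


if __name__ == "__main__":
    # Illustrative data only: GPU utilization samples (percent) and pod timings.
    samples = [95.0, 80.0, 5.0, 0.0, 60.0]
    pods = [PodTiming(0.0, 2.0), PodTiming(10.0, 11.0),
            PodTiming(20.0, 28.0), PodTiming(30.0, 30.5)]

    waits = scheduling_latencies(pods)
    print("mean GPU utilization (%):", mean(samples))
    print("idle fraction:", idle_fraction(samples))
    print("p95 scheduling latency (s):", percentile(waits, 95))
```

A high idle fraction alongside a long tail in scheduling latency would suggest that jobs are waiting on the scheduler while GPUs sit unused, which is the pattern the answer above warns about.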

What is your forecast for the future of open source in AI?

My forecast is that open source will stop being a “choice” and become the mandatory foundation for all governable AI. We will see the “romance” of the developer-led morality play continue to fade, replaced by a hyper-efficient, corporate-backed ecosystem where the most important innovations happen in the invisible layers of observability and platform engineering. Within the next few years, the gap between “closed” models and “open” infrastructure will narrow, as organizations realize that an open model is useless if it runs on an opaque, proprietary control plane. Open source won’t just be better; it will be the only way to manage the sheer complexity and cost of the AI-driven future.