Anand Naidu has spent years building and shipping tools across frontend and backend stacks, and he’s worked shoulder-to-shoulder with content, art, and engineering leads as AI moved from curiosity to daily companion. In this conversation, he separates hype from reality: why one survey says “roughly nine out of ten” developers use AI while another lands closer to 52%, how teams ringfence “no AI in final assets” policies, and what actually accelerates pre-production beyond trees and pebbles. We cover transparent measurement, legal guardrails, portfolio-level ROI, and how to keep juniors learning craft in an AI-augmented pipeline.

Some executives claim nine out of ten developers use AI, while other surveys show around half. What explains this gap in reported adoption, and how would you design a transparent measurement framework? Please share specific sampling methods, definitions of “use,” and any metrics you’d track over time.

The gap between “roughly nine out of ten” and the 40–50% band—plus the 52% figure you see in public reports—usually comes down to definitions, sampling frames, and social desirability bias. If a survey is run at a venue like Gamescom 2026, you’ll over-index on teams already trialing tools; if another canvasses a broader cross-section, you’ll hear from more holdouts. I’d define “use” with tiers: exploration (sandbox prompts and spikes), production-assist (reference, draft code, temp audio), and production-critical (workflow blockers depend on AI), and require respondents to self-identify per tier. For transparency, I’d stratify samples by studio size and discipline, publish the instrument, and track longitudinal metrics quarterly—share of staff at each tier, percent of milestones touching AI, and variance between self-reported usage and audited tool logs—so readers can reconcile a nine-out-of-ten claim with a 52% adoption line in a comparable frame.
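As a minimal sketch of how the tiered definition changes the headline number, the Python below computes per-stratum tier shares, an adoption rate for a chosen minimum tier, and a simple mismatch rate between self-reports and audited logs. The `Response` fields, the stratum labels, and the sample rows are hypothetical and made up for illustration, and the mismatch rate is a simplified stand-in for the variance metric described above.

```python
from collections import Counter
from dataclasses import dataclass

# Ordered from least to most dependence on AI; "none" is added for holdouts.
TIERS = ("none", "exploration", "production_assist", "production_critical")


@dataclass(frozen=True)
class Response:
    studio_size: str         # sampling stratum, e.g. "small" or "large" (hypothetical labels)
    discipline: str          # e.g. "art", "engineering"
    self_reported_tier: str  # what the respondent says
    logged_tier: str         # what audited tool logs suggest


def tier_shares(responses, tier_field="self_reported_tier"):
    """Share of respondents at each tier, broken out by studio-size stratum."""
    by_stratum = {}
    for r in responses:
        by_stratum.setdefault(r.studio_size, Counter())[getattr(r, tier_field)] += 1
    return {
        stratum: {t: counts[t] / sum(counts.values()) for t in TIERS}
        for stratum, counts in by_stratum.items()
    }


def adoption_rate(responses, minimum_tier, tier_field="self_reported_tier"):
    """Fraction of respondents at or above minimum_tier."""
    threshold = TIERS.index(minimum_tier)
    using = sum(1 for r in responses if TIERS.index(getattr(r, tier_field)) >= threshold)
    return using / len(responses)


def report_gap(responses):
    """Fraction of respondents whose self-report differs from the audited logs."""
    return sum(r.self_reported_tier != r.logged_tier for r in responses) / len(responses)


# Made-up sample, for illustration only.
SAMPLE = [
    Response("small", "art", "exploration", "none"),
    Response("small", "engineering", "production_assist", "production_assist"),
    Response("large", "art", "none", "none"),
    Response("large", "engineering", "production_critical", "production_assist"),
]

print(adoption_rate(SAMPLE, "exploration"))        # counts any use at all
print(adoption_rate(SAMPLE, "production_assist"))  # counts production use only
print(report_gap(SAMPLE))
```

On this toy sample, counting any use gives 0.75 while counting only production use gives 0.5, which is the same kind of definitional spread that separates the two survey figures.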

Many teams say they use AI in pre-production for ideation and curation. Can you walk us through a step-by-step workflow—from prompt to selection to greenlight—and name concrete checkpoints, roles, and quality gates that keep output aligned with art direction?

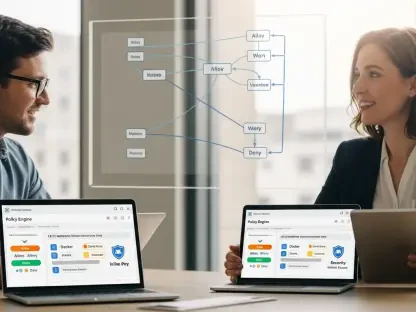

A practical loop starts with a brief written by design and art leads: pillars, mood, constraints, and no-go topics. Prompting begins in a sandbox (e.g., Gemini or an internal tool), and we generate “thousands” of coarse variations only where breadth helps—visual beats, encounter stubs, sonic palettes—never final assets. A curation pass tags outputs against the style guide; the art director scores a short list and kicks off a reference pack. Quality gates include: style compliance check, originality scan, and a narrative cohesion pass; at each gate we log keep/kill rationales so by greenlight we can show why a concept advanced. The greenlight packet is human-authored, with AI artifacts demoted to reference only; sign-off requires the art director, design lead, and production to agree it meets the pillars without violating the “no final AI assets” policy some studios state.

Some studios publicly state they’ll use generative tools to boost efficiency in graphics, sound, and programming, but won’t ship AI-generated final assets. How do you operationalize that boundary in practice? What tools, logs, and audits prevent leakage of AI artifacts into shipping builds?

You implement a provenance-first pipeline. Every artifact gets a manifest tag (source, tools touched, and human owner), and we fail builds if a tag is missing. We keep an immutable audit trail of prompt IDs, model versions, and edit diffs, so if a texture or line of code was ever AI-touched, reviewers see it instantly. CI enforces policy by scanning for watermarks and metadata; if a studio has declared no AI in finals, as some have in investor briefings, we gate merges until an art or audio lead signs a “humanized and transformed” attestation. Periodic audits compare the tool logs with the usage teams self-report internally, whether that lands in the 40–50% band or nearer 52%, protecting trust and ensuring the boundary holds under schedule pressure.
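The manifest check described here might look something like the following sketch. It assumes each artifact carries a dictionary manifest with `source`, `tools`, and `owner` keys and an optional attestation signer; those field names, the `Artifact` class, and the tool name in the usage note are all hypothetical, and watermark or metadata scanning is out of scope.

```python
from dataclasses import dataclass, field
from typing import Optional

REQUIRED_FIELDS = ("source", "tools", "owner")  # assumed manifest schema


@dataclass
class Artifact:
    path: str
    manifest: dict = field(default_factory=dict)
    attested_by: Optional[str] = None  # lead who signed the attestation, if any


def policy_violations(artifact, ai_tools):
    """Return human-readable reasons this artifact should fail the build."""
    problems = []
    for key in REQUIRED_FIELDS:
        if artifact.manifest.get(key) is None:
            problems.append(f"{artifact.path}: missing manifest field '{key}'")
    # Any overlap between the tools touched and the known AI tools needs sign-off.
    touched = set(artifact.manifest.get("tools") or []) & set(ai_tools)
    if touched and not artifact.attested_by:
        names = ", ".join(sorted(touched))
        problems.append(f"{artifact.path}: AI-touched ({names}) without lead attestation")
    return problems


def gate_build(artifacts, ai_tools):
    """Return (passed, violations) for a candidate shipping build."""
    violations = [p for a in artifacts for p in policy_violations(a, ai_tools)]
    return (not violations, violations)
```

A CI step could call `gate_build(artifacts, ai_tools={"image-gen-x"})` and exit non-zero when the first element of the result is `False`, printing the violations so the owner knows which tag or attestation is missing.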

Players often worry AI will change the feel of beloved games. What have you seen work when communicating AI usage without triggering backlash? Please share message testing approaches, wording examples, and any before/after sentiment metrics or community moderation tactics.

The best results come from candor with constraints: message that AI helps with exploration and organization, not with final creative authorship. We A/B test language like “we used AI to explore thousands of rough ideas, then our artists hand-crafted what ships” against vaguer statements; the former outperforms in sentiment checks. We benchmark community sentiment before and after disclosures, noting that when people see specific limits, like “no AI-generated final assets,” their expectations track the more modest 40–50% adoption numbers instead of a nine-out-of-ten world of auto-made content. Moderation teams pin FAQs, respond within hours to misconceptions, and highlight behind-the-scenes human work to keep the “feel” anchored in craft.

Claims that AI saves time on “pebbles and trees” face pushback since foliage and props are already highly automated. Where does AI actually beat existing tools today? Provide concrete task categories, baseline vs. improved cycle times, and examples where the delta justified adoption.

You don’t win on pebbles; you win on curation debt and cross-discipline iteration. AI accelerates reference gathering, beat-variation generation, and triaging thousands of options into a dozen worth human time. Compared to traditional kitbash and procedural tools, the delta is most obvious in early alignment: moving from sprawling boards to a short, fully style-compliant set of choices that an art director can greenlight in one meeting. The payoff is fewer rework loops later, especially in teams facing timelines stretching toward half a decade, because we compress discovery while preserving human authorship on the things that actually matter.

Teams building massive worlds must prioritize what to handcraft versus generate. How do you decide which elements deserve artisanal attention? Describe the rubric, cost-of-change thresholds, and any heuristics your art and design leads use during pre-production.

We grade by narrative salience, player proximity, and reuse potential. Anything in the main path—protagonists, “big enemies,” hero props, signature vistas—gets handcrafted, as the Google Cloud example emphasized when culling thousands of ideas down to high-value items. Background clutter, systemic variations, and one-off ambient moments can be AI-assisted for exploration but finalized by humans, since cost-of-change is lower and reuse is higher. Our heuristic: if removing it would alter player memory of the game, it’s artisanal; if it’s invisible unless you stop and stare at a pebble by the road, exploratory AI can help you find options but the shipped result still passes a human’s brush.

If models like Gemini or internal tools handle ideation and triage, how do you measure creative uplift versus noise? Please detail acceptance rates, variation reduction, cross-discipline review times, and any A/B tests comparing AI-assisted concepts with human-only sprints.

We compare sprints with and without AI on three axes: acceptance rate of first-pass concepts, the reduction in off-style variations, and time-to-consensus in art/design syncs. If a human-only sprint produces too many off-pillar ideas, AI can prune “thousands” of stray branches down to a coherent dozen; the win is fewer meetings to converge. We also benchmark against public adoption narratives: if a team reports usage like the 52% seen in some surveys, we expect measurable gains in review time to justify the practice. Finally, we log hit rates per model version to ensure the uplift persists and isn’t just novelty, keeping a clean audit so leadership can reconcile uplift claims with actual throughput.
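One way to put the three axes side by side is sketched below. The `Sprint` record and its field names are hypothetical, rates are pooled across sprints in each arm, and the result is a raw difference with no significance test, so it would need many sprints before the deltas mean much.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Sprint:
    ai_assisted: bool
    concepts_submitted: int
    concepts_accepted_first_pass: int
    off_style_concepts: int
    hours_to_consensus: float  # time spent in art/design syncs before agreement


def summarize(sprints):
    """Pooled acceptance and off-style rates plus mean time-to-consensus."""
    submitted = sum(s.concepts_submitted for s in sprints)
    return {
        "acceptance_rate": sum(s.concepts_accepted_first_pass for s in sprints) / submitted,
        "off_style_rate": sum(s.off_style_concepts for s in sprints) / submitted,
        "mean_hours_to_consensus": mean(s.hours_to_consensus for s in sprints),
    }


def compare(sprints):
    """AI-assisted minus human-only, per metric; needs at least one sprint in each arm."""
    ai = summarize([s for s in sprints if s.ai_assisted])
    human = summarize([s for s in sprints if not s.ai_assisted])
    return {metric: ai[metric] - human[metric] for metric in ai}
```

A positive `acceptance_rate` delta alongside negative `off_style_rate` and `mean_hours_to_consensus` deltas would be the pattern the answer describes as uplift; logging the model version next to each sprint would let the same comparison be repeated per version.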

Legal and IP risk remains a top concern. What safeguards do you require around dataset provenance, opt-outs, and style cloning? Outline contract clauses, technical controls (e.g., filters, watermarks), and incident response steps if contested content slips into a build.

Contracts must state dataset provenance, honor opt-outs, and forbid style cloning that targets living artists or proprietary IP. Technically, we use filters that block prompts attempting to mimic named styles, watermark any AI-originated outputs, and record model and prompt lineage. If contested content appears, we freeze the asset, swap to a clean human-made or licensed alternative, and disclose internally with a full log—aligning public statements with practices like “no AI in final assets” where promised. The response pack includes manifests and review notes, so if a regulator or partner asks, we can show precisely where AI entered and exited the chain.

Engineering teams report productivity gains from AI code assistants, but also worry about regressions and license contamination. How do you structure code review, gating tests, and SBOM tracking to keep velocity up without sacrificing quality or compliance?

We treat AI as a junior pair who never merges alone. Every diff tagged as AI-assisted gets mandatory review, unit tests, and policy checks; CI blocks if SBOM entries or licenses aren’t declared. We also record prompt-to-patch lineage, so if someone alleges contamination, we can trace it—mirroring the provenance stance used in content workflows. Velocity stays healthy because we focus AI on triage, boilerplate, and refactors, while humans own architecture and performance-critical paths that define the “feel,” just as art owns hero assets.
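The merge rule can be expressed as a small gate function. This is a sketch under assumptions: the `Diff` and `Dependency` records and their fields are hypothetical, and a real pipeline would pull this data from its review system and SBOM tooling instead of hand-built objects.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Dependency:
    name: str
    license: Optional[str] = None  # None means not declared in the SBOM


@dataclass
class Diff:
    ai_assisted: bool
    human_reviewers: list = field(default_factory=list)
    tests_passed: bool = False
    prompt_ids: list = field(default_factory=list)       # prompt-to-patch lineage
    new_dependencies: list = field(default_factory=list)


def merge_blockers(diff):
    """Return the reasons CI should block this diff; an empty list means mergeable."""
    blockers = []
    if not diff.tests_passed:
        blockers.append("unit tests have not passed")
    for dep in diff.new_dependencies:
        if dep.license is None:
            blockers.append(f"SBOM entry for {dep.name} has no declared license")
    if diff.ai_assisted:
        # The "junior pair who never merges alone" rule.
        if not diff.human_reviewers:
            blockers.append("AI-assisted diff needs at least one human reviewer")
        if not diff.prompt_ids:
            blockers.append("AI-assisted diff is missing prompt-to-patch lineage")
    return blockers
```

Returning reasons instead of a bare boolean keeps the gate explainable: the same strings can be posted back to the pull request so the author knows exactly what to fix.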

For audio and localization, where does AI deliver dependable wins right now? Please share concrete turnaround times, cost-per-minute changes, quality bars you enforce (e.g., MOS, linguistic QA passes), and how you handle performance direction and cultural nuance.

AI is useful for scratch VO, alt line exploration, and early timing tests in multiple locales, but we keep final performance human—consistent with studios that separate efficiency from shipped content. We maintain quality bars with structured linguistic QA passes and director notes, and we archive prompt logs so we can justify choices if challenged. For music and sound, AI can sketch palettes quickly so the composer can focus on the themes players remember, not the background filler that no one hears unless they pause on a roadside pebble. Cultural nuance still comes from native reviewers; AI helps assemble options faster, while the final voice remains human.

Studio leaders want to see ROI beyond anecdotes. What portfolio-level metrics best capture impact—milestone slip reduction, asset throughput, bug burn-down, or content-per-FTE? Walk us through a dashboard you’d present at greenlight or quarterly reviews.

My dashboard aligns with public adoption narratives but stays auditable. Top line: percent of teams using AI at each tier (explore, assist, critical), trended over quarters, so leaders can place themselves on a continuum from 40–50% to nine-out-of-ten. Delivery: milestone slip deltas, first-pass acceptance rates out of “thousands” of candidates, and content-per-FTE for pre-production without blurring into final assets. Quality: bug burn-down and rework loops, with annotations where AI pruned noise. Compliance: provenance pass rates and incident count. It’s all tied to logs so we can defend each claim.

Many worry about workforce effects. How are roles, ladders, and training evolving so juniors still build craft? Provide examples of apprenticeship models, task rebalancing, and progression milestones that remain achievable in AI-augmented pipelines.

We keep juniors on real, human-made finals and use AI to create safe sandboxes. Apprentices begin with clean-up, reference synthesis, and style-guide enforcement; then they move to shipping assets under mentor review, ensuring they learn the tactile choices that define a studio’s look and feel. Production buffers protect time for handcrafting hero work, echoing the principle that what players notice—main characters, big enemies—stays artisanal. The ladder documents competencies across both worlds: mastery of prompts for exploration and mastery of brush, chisel, and code for what ships.

Vendor lock-in and cloud costs can spike unexpectedly. How do you de-risk dependency on a single AI stack? Discuss model abstraction layers, data portability, cost controls, and criteria for when to switch providers or bring models on-prem.

Build a thin abstraction layer so prompts, safety filters, and audit hooks remain consistent across providers. Keep datasets portable with clear rights and export formats, and store your own embeddings and style guides rather than entrusting them to a black box. Cost controls include budget caps and auto-throttling; if usage creeps from a 40–50% assist posture toward near-ubiquity, revisit your mix to avoid runaway bills. We switch or go on-prem when policy drift, provenance opacity, or SLAs threaten delivery—especially if leadership has made public commitments about where AI can and can’t touch the final product.
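A thin abstraction layer of this kind could be sketched as follows. Everything here is illustrative: `Provider`, `ModelGateway`, and `EchoProvider` are hypothetical names, not any vendor's SDK, and the budget control is a hard cap, which is simpler than the auto-throttling mentioned above.

```python
from typing import Callable, Protocol


class Provider(Protocol):
    """Minimal interface each vendor adapter is assumed to implement."""
    name: str

    def generate(self, prompt: str) -> str: ...
    def estimate_cost(self, prompt: str) -> float: ...


class BudgetExceeded(RuntimeError):
    pass


class ModelGateway:
    """Keeps the safety filter, audit log, and budget cap outside any one provider."""

    def __init__(self, provider: Provider, budget_cap: float,
                 safety_filter: Callable[[str], bool] = lambda prompt: True):
        self.provider = provider
        self.budget_cap = budget_cap
        self.safety_filter = safety_filter
        self.spent = 0.0
        self.audit_log = []

    def _log(self, prompt, status, cost=0.0):
        self.audit_log.append(
            {"provider": self.provider.name, "prompt": prompt, "status": status, "cost": cost}
        )

    def generate(self, prompt: str) -> str:
        if not self.safety_filter(prompt):
            self._log(prompt, "blocked_by_filter")
            raise ValueError("prompt rejected by safety filter")
        cost = self.provider.estimate_cost(prompt)
        if self.spent + cost > self.budget_cap:
            self._log(prompt, "blocked_by_budget")
            raise BudgetExceeded(f"cap of {self.budget_cap} would be exceeded")
        output = self.provider.generate(prompt)
        self.spent += cost
        self._log(prompt, "ok", cost)
        return output


class EchoProvider:
    """Stand-in provider for local testing; it only echoes the prompt."""

    def __init__(self, name: str, cost_per_char: float):
        self.name = name
        self.cost_per_char = cost_per_char

    def generate(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"

    def estimate_cost(self, prompt: str) -> float:
        return len(prompt) * self.cost_per_char
```

Because the filter, log, and spend tracking live in the gateway, switching vendors is a matter of assigning a different adapter to `gateway.provider`; the audit trail and running spend carry over unchanged.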

Quality failures with hallucinations or inconsistent style can waste time. What are your most effective guardrails—prompt libraries, fine-tuning with studio style guides, human-in-the-loop checkpoints—and which metrics tell you the system is “ready for production”?

A curated prompt library and fine-tuning against the studio’s style guide reduce off-brand drift dramatically. We freeze model versions per project phase and require human-in-the-loop checks at every gate where AI touches concept exploration. Readiness shows up when acceptance rates stabilize, off-style variations drop even as you generate “thousands” of options, and review meetings shrink without sacrificing rigor. We also correlate internal adoption—moving from the 40–50% exploratory band toward broader usage—with stable outcomes, not just increased volume.

What is your forecast for AI in game development?

In the near term, AI will keep compressing discovery and triage while leaving the soul of games—what players feel and remember—firmly in human hands. Public figures will keep diverging—everything from 52% to nine out of ten—until the industry normalizes transparent logging and shared definitions. Studios that pledge “no AI-generated final assets” will still harvest gains in graphics, sound, and programming workflows, proving that efficiency and authorship can coexist. The long game is cultural: the teams that win will be the ones that pair relentless provenance with a clear message about what’s assisted and what’s handcrafted, so players never have to wonder why their favorite games feel different.