Banking’s AI Moment Meets the Compliance Reality

Banks accelerated AI across underwriting, fraud, and service at record pace, yet the real go-live decision hinged less on dazzling performance than on hard proof that every output could be traced, reviewed, and explained end to end. That pivot reshaped the core question from “Can it work?” to “Can it stand up in an audit, an incident, or a supervisory exam?”

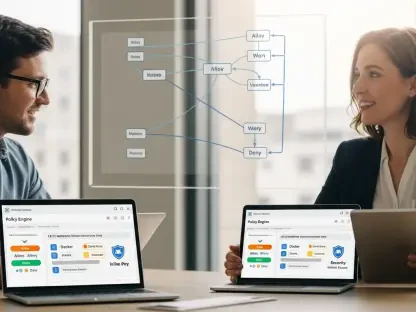

As AI moved from labs into customer journeys, quality assurance shifted from catching defects to demonstrating defensibility. Chris Faraglia of TestRail and Ranorex framed QA as the operational backbone for governance: the place where prompts, datasets, model identifiers, human reviews, and approvals become auditable evidence rather than tribal knowledge. The stakes are plain—customer trust, operational resilience, supervisory confidence, and competitive speed now ride on the reliability of that evidence.

Moreover, defensibility emerged as the throttle on adoption velocity. Institutions that embedded AI into structured test management advanced faster precisely because they could answer, with documentation, who did what, when, and why for every AI-assisted change.

What’s Driving the Shift: Forces Reshaping QA’s Mandate

The drivers behind QA’s elevation came from both technology diffusion and governance pressure. Pilots gave way to production, making standardization and repeatable control as important as model accuracy. As a result, QA inherited a mandate to convert policy into proof, connecting engineering activity to risk and compliance checkpoints.

At the same time, approval bottlenecks produced shadow AI. Where sanctioned pathways lagged, teams reached for unapproved tools, increasing exposure around data leakage and uncontrolled model behavior. QA, positioned between delivery and oversight, became the practical gate that channels usage into compliant workflows without stalling momentum.

From Demos to Defensibility: Trends That Put Governance Ahead of Functionality

Experimentation matured into enterprise rollout, which replaced ad hoc wins with uniform processes. Governance eclipsed raw performance as leaders prioritized lineage, oversight, and retention over benchmark scores alone.

Shadow AI signaled unmet demand; slow, opaque approvals nudged teams outside policy. Test management vendors responded by embedding auditability, making traceability a built-in feature rather than an afterthought. In turn, QA rose from support role to strategic bridge across engineering, risk, and compliance.

Signals in the Data: Adoption Metrics, Risk Posture, and Forecasts for Scaled AI

Budget patterns tilted toward governance tooling and model risk management, reinforcing that documented evidence counted for more than stated intent. The performance metrics that mattered shifted accordingly, to evidence density, review cycle time, and release predictability.

Forecasts pointed to a plateau in unsanctioned usage as compliant workflows matured, alongside growth in AI-assisted testing volume. Early wins showed faster approvals via compliance-by-design and lower remediation costs, while leading indicators included regulator guidance cadence, internal policy refresh rates, and audit exception trends.

Friction Points and Failure Modes: Where AI Initiatives Stall

The most common stall came from documentation gaps: missing prompt histories, unclear model versions, and absent reviewer sign-offs. Without a unified view linking artifacts to releases, audits fragmented across tools and teams.

Ownership ambiguity slowed decisions. When engineering, security, and compliance lacked clear roles, releases lingered in limbo. Data risks multiplied through unmanaged tools, weak retention, and fuzzy access controls, while model risk blind spots appeared around drift, bias, and explainability.

Practical mitigations focused on role-based reviews, standardized test artifacts, automated evidence capture, and SLA-backed approvals. By predefining what counts as sufficient proof, banks converted discretionary debate into predictable, auditable steps.
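The idea of predefining sufficient proof can be illustrated with a minimal sketch. It assumes a hypothetical checklist built from the documentation gaps named above (prompt history, model version, reviewer sign-off); the artifact names and function names are illustrative and do not come from any specific test management tool.

```python
from typing import Mapping, Sequence

# Hypothetical checklist: the artifact names are illustrative, not a standard.
REQUIRED_ARTIFACTS: Sequence[str] = (
    "prompt_history",
    "model_version",
    "reviewer_signoff",
)


def missing_artifacts(evidence: Mapping[str, object],
                      required: Sequence[str] = REQUIRED_ARTIFACTS) -> list[str]:
    """Return required artifacts that are absent or empty, in checklist order."""
    return [name for name in required if not evidence.get(name)]


def release_is_defensible(evidence: Mapping[str, object]) -> bool:
    """A release passes only when every required artifact is present."""
    return not missing_artifacts(evidence)
```

Because the checklist is fixed before the release is reviewed, the outcome is a list of named gaps rather than a discretionary judgment call.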

The Rulebook Rewritten: Regulations and Standards Steering AI in Banks

Supervisory expectations coalesced around five areas: model risk controls akin to SR 11-7, AI governance legislation, privacy laws such as GLBA and GDPR, operational resilience, and third-party risk. The through-line was demonstrable control with human-in-the-loop oversight and end-to-end traceability.

Standards alignment with ISO/IEC 27001, 27701, and 23894, along with SOC 2, offered a map from QA artifacts to control objectives. Internal frameworks—policy, risk tiers, change management, and exception handling—translated these requirements into concrete, testable workflows.

Regulators signaled a preference for evidence over aspiration. Every release decision needed a clear record of who did what, when, and why, backed by verifiable artifacts. In this context, QA became the venue where intent turned into proof.

Building the New Gate: How QA Becomes the Operational Control Layer

Embedding AI into existing QA workflows let AI outputs inherit governance controls without inventing parallel processes. Test management became the source of truth, capturing prompts, inputs, datasets, model IDs, validations, reviewers, exceptions, approvals, and their linkage to releases.
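As a rough illustration of what such a source-of-truth record might hold, the sketch below models one evidence entry. The class name, field names, and the fingerprint helper are assumptions made for this example; they do not represent the schema of TestRail or any other product.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EvidenceRecord:
    """One AI-assisted test artifact, linked to the release it supports."""
    release_id: str
    model_id: str
    prompt: str
    dataset_ids: tuple[str, ...]
    validations: tuple[str, ...] = ()
    reviewers: tuple[str, ...] = ()
    approvals: tuple[str, ...] = ()
    exceptions: tuple[str, ...] = ()

    def fingerprint(self) -> str:
        """SHA-256 over a canonical JSON form, so later edits are detectable."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Storing the fingerprint alongside the release lets an auditor confirm that the record reviewed at approval time is the same one presented later.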

Automation with accountability proved decisive. Tools such as TestRail and Ranorex generated consistent, reviewable artifacts while enforcing role-based checkpoints and separation of duties. That structure preserved human judgment where it mattered and codified routine steps for scale.
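Role-based checkpoints and separation of duties can be expressed as a simple rule check. The following is a minimal sketch under stated assumptions: the `SignOff` type, the role names, and the violation messages are hypothetical and are not taken from TestRail or Ranorex.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignOff:
    reviewer: str
    role: str  # e.g. "qa", "risk", "compliance" (illustrative role names)


def checkpoint_violations(author: str,
                          signoffs: list[SignOff],
                          required_roles: set[str]) -> list[str]:
    """List the reasons a change fails its role-based checkpoint."""
    violations = []
    # Separation of duties: the author may not approve their own change.
    if any(s.reviewer == author for s in signoffs):
        violations.append("author approved own change")
    # Role-based checkpoint: each required role needs an independent sign-off.
    covered = {s.role for s in signoffs if s.reviewer != author}
    for role in sorted(required_roles - covered):
        violations.append(f"missing sign-off from role: {role}")
    return violations
```

An empty list means the change cleared the checkpoint; a non-empty list gives reviewers the specific reasons it did not.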

Preventing shadow AI required sanctioned tools, fast-track pathways, and clear guidance that met teams where they worked. Success showed up as fewer audit findings, faster compliant releases, and higher evidence completeness—measures that resonated from delivery rooms to boardrooms.

The Road Ahead: What’s Next for AI Governance and QA in Banking

Explainability-by-design, prompt governance, model cards, and lineage tooling are converging with QA platforms, making provenance part of the build flow. Foundation models tailored for regulated use, policy-as-code, and continuous control monitoring are poised to compress review cycles further.
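Policy-as-code, in its simplest form, means declaring the required controls as data and evaluating releases against them automatically. The sketch below assumes a hypothetical three-tier policy; the tier names, control names, and the `evaluate` function are illustrative only.

```python
# Hypothetical policy: tier names and control names are illustrative only.
POLICY = {
    "low": {"automated_tests"},
    "medium": {"automated_tests", "human_review"},
    "high": {"automated_tests", "human_review", "model_card", "lineage_record"},
}


def evaluate(risk_tier: str, completed_controls: set[str]) -> dict:
    """Compare completed controls with the policy for the given risk tier."""
    if risk_tier not in POLICY:
        raise ValueError(f"unknown risk tier: {risk_tier}")
    outstanding = sorted(POLICY[risk_tier] - completed_controls)
    return {"tier": risk_tier, "allowed": not outstanding, "outstanding": outstanding}
```

Keeping the policy in a versioned data structure means a rule change is itself a reviewable, auditable change.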

Consumer expectations around transparency, fairness, and recourse are shaping QA acceptance criteria, while cross-border data rules and vendor concentration risk complicate design choices. Organizationally, converged teams across engineering, risk, and security are establishing QA as the coordination hub for safe scale.

Growth areas include AI-assisted testing at scale, automated control testing, and real-time audit readiness. The trajectory favors institutions that treat compliance as a product capability rather than a checkpoint.

From Gatekeeper to Accelerator: Conclusions and Actionable Recommendations

This report concluded that defensibility determined deployability, and QA served as the practical engine of defensibility. The most effective programs defined approved AI usage by risk tier, embedded AI into test management to auto-capture evidence, and standardized human-in-the-loop reviews with clear SLAs.

They also monitored data flows and third-party exposure continuously, created rapid evaluation pathways to deter shadow AI, trained teams on compliant prompts and documentation, and audited iteratively as models and rules evolved. Investment priorities favored platforms and processes that maximized traceability and reduced audit friction.

Taken together, the findings indicated that banks scaled AI safely and faster when compliance lived inside QA. By making proof part of the path to production, the gate became a green light.