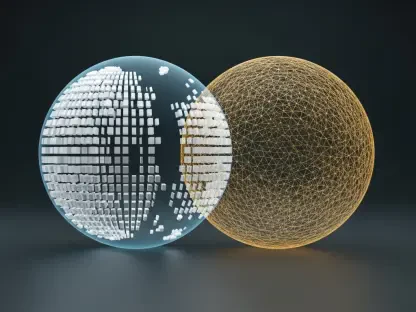

The transition of the data warehouse from a static repository to a dynamic reasoning engine is one of the most significant shifts in corporate computing since the rise of the cloud. Snowflake no longer positions itself as just a place to store massive datasets; it is carving out a role as the central brain of what is now called the “agentic enterprise.” By bringing together its two AI platforms, Snowflake Intelligence and Cortex Code, the company aims to provide a unified environment in which enterprise systems, diverse data sources, and advanced AI models interact seamlessly. The result is a control plane where AI agents do far more than retrieve information or answer basic queries: they are designed to execute complex, multi-step workflows and to reason across data spread throughout the organization. This approach lets businesses move beyond isolated chatbots toward an integrated system of automation that spans the entire company.

Empowering Users and Developers

Enhancing the User Experience: Automation for Every Department

To make the agentic enterprise accessible to everyone, Snowflake is reimagining its Intelligence platform as an adaptable personal work assistant for the average business user. Instead of navigating complex dashboards or writing SQL queries, employees describe what they need in plain English and the system automates the rest. This lowers the barrier to high-level data analysis for every department, not just the analytics team. When a marketing manager asks for a lead conversion analysis, for example, the agent does not just pull a report; it identifies trends, suggests budget reallocations, and prepares the necessary documentation for review. Connectors built on the Model Context Protocol (MCP), an open standard for linking AI applications to external tools and data sources, give the agent access to relevant context that lives outside Snowflake. By integrating these tools, the platform moves away from static responses toward a dynamic, iterative partnership between humans and digital agents.
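For readers unfamiliar with MCP: clients and servers exchange JSON-RPC 2.0 messages, and an agent invokes a connector's capability with a `tools/call` request. The sketch below only builds and parses such messages, without any transport; the tool name `lead_conversion_report` and its arguments are hypothetical and are not a real Snowflake connector.

```python
import json
from itertools import count

# Request ids let a JSON-RPC 2.0 client match each response to its request.
_request_ids = count(1)

def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })

def read_tool_result(raw_response: str) -> list[str]:
    """Return the text blocks from a `tools/call` response, raising on errors."""
    response = json.loads(raw_response)
    if "error" in response:  # protocol-level error (e.g. unknown tool)
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError("tool reported an error")
    return [b["text"] for b in result["content"] if b.get("type") == "text"]

# Hypothetical tool name and arguments, for illustration only.
request = make_tool_call("lead_conversion_report", {"quarter": "Q3", "region": "EMEA"})
print(request)
```

A connector would send `request` over stdio or HTTP and pass the server's reply to `read_tool_result`; an agent normally discovers which tools exist first with a `tools/list` request.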

Advanced reasoning and deep research features, which are now rolling out more broadly, let these agents tackle problems that previously required hours of manual investigation. The agents perform multi-step reasoning, digging into unstructured data and cross-referencing it with historical records to produce comprehensive answers. A new iOS application extends these capabilities to mobile devices, so executives can trigger complex workflows and receive synthesized insights whether they are in the office or traveling. Because analyses can be saved and shared as reusable artifacts, successful workflows are not lost but become institutional knowledge across the company. Over time, this builds a shared library of analyses that the organization's employees and agents can keep refining together.

Providing Robust Tools: Infrastructure for AI Builders

While the user layer focuses on accessibility, the technical foundation is built upon the Cortex Code platform, which provides the necessary infrastructure for developers to create governed, data-native applications. In a significant departure from the siloed traditions of the past, Snowflake has expanded its data access capabilities to include external sources such as AWS Glue, Databricks, and Postgres. This means that developers are no longer restricted to the data stored directly within the Snowflake environment; they can build specialized agents that reach across cloud providers to pull information from wherever it resides. This interoperability is a critical step toward creating a true enterprise control plane, as it acknowledges the reality of modern multi-cloud architectures. By providing a bridge between disparate data lakes and warehouses, Cortex Code allows for the creation of agents that have a holistic view of the corporate data estate, regardless of the underlying storage technology.
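The practical benefit of reaching across sources is that an agent can combine records without first copying them into one warehouse. The following is a minimal sketch of that idea under stated assumptions, not Cortex Code's actual connector API: two in-memory SQLite databases stand in for, say, a Postgres operational store and a lakehouse table, and the hypothetical `make_source` helper and `FederatedView` class route each query to the source that owns the data before joining the results in Python.

```python
import sqlite3
from typing import Callable

Source = Callable[[str], list[tuple]]

def make_source(setup_sql: str) -> Source:
    """Hypothetical connector: a function that runs SQL against one source.

    Real connectors (Postgres, Databricks, Glue-cataloged tables) would sit
    behind the same interface; in-memory SQLite is only a stand-in.
    """
    conn = sqlite3.connect(":memory:")
    conn.executescript(setup_sql)
    return lambda query: conn.execute(query).fetchall()

crm = make_source("""
    CREATE TABLE customers (id INTEGER, name TEXT);
    INSERT INTO customers VALUES (1, 'Acme'), (2, 'Globex');
""")
lake = make_source("""
    CREATE TABLE orders (customer_id INTEGER, amount REAL);
    INSERT INTO orders VALUES (1, 120.0), (1, 80.0), (2, 50.0);
""")

class FederatedView:
    """Hypothetical router: query each source where it lives, join locally."""

    def __init__(self, sources: dict[str, Source]):
        self.sources = sources

    def revenue_by_customer(self) -> dict[str, float]:
        names = dict(self.sources["crm"]("SELECT id, name FROM customers"))
        totals = self.sources["lake"](
            "SELECT customer_id, SUM(amount) FROM orders "
            "GROUP BY customer_id ORDER BY customer_id"
        )
        return {names[cid]: total for cid, total in totals}

view = FederatedView({"crm": crm, "lake": lake})
print(view.revenue_by_customer())  # {'Acme': 200.0, 'Globex': 50.0}
```

Aggregating inside each source and joining only the small results keeps data movement low, which is the same trade-off a real federated engine makes when it pushes work down to the remote system.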

To support the engineering effort these systems require, Snowflake has introduced new software development kits for popular languages such as Python and TypeScript, along with a specialized Claude Code plugin. With these tools, engineers can write, test, and deploy AI agents in a dedicated, zero-setup cloud sandbox, which removes much of the friction of setting up complex development pipelines and shortens iteration and deployment cycles. Support for the Agent Communication Protocol (ACP) lets different AI agents communicate with one another, creating a networked ecosystem of specialized tools. For example, a procurement agent could coordinate with a financial forecasting agent to determine how a new contract would affect the quarterly budget. Through “Plan Mode” in the management interface, developers can also keep a human in the loop, so that every proposed action is reviewed before it is executed.
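The procurement example can be sketched as two agents exchanging structured messages, with a plan-mode gate that holds the resulting action until a human approves it. This is an illustration under assumptions: the `Message` shape, both agent functions, and the `approve` callback are hypothetical and do not reproduce ACP's wire format or Cortex Code's Plan Mode implementation.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Message:
    """Hypothetical message envelope passed between agents."""
    sender: str
    recipient: str
    intent: str
    payload: dict = field(default_factory=dict)

def forecasting_agent(msg: Message) -> Message:
    """Hypothetical agent: estimate a contract's quarterly budget impact."""
    remaining = msg.payload["quarterly_budget"] - msg.payload["contract_cost"]
    return Message("forecasting", msg.sender, "budget_impact",
                   {"remaining_budget": remaining, "within_budget": remaining >= 0})

def procurement_agent(contract_cost: float, quarterly_budget: float,
                      approve: Callable[[dict], bool]) -> str:
    # Delegate the budget question to the forecasting agent.
    request = Message("procurement", "forecasting", "assess_contract",
                      {"contract_cost": contract_cost,
                       "quarterly_budget": quarterly_budget})
    reply = forecasting_agent(request)

    # Plan mode: assemble a proposed action instead of acting immediately.
    plan = {"action": "sign_contract", "cost": contract_cost, **reply.payload}
    if not plan["within_budget"]:
        return "rejected: over budget"  # never proposed to the reviewer
    if not approve(plan):
        return "not executed: reviewer declined"
    return "executed: contract signed"

# Each lambda stands in for a human reviewer's decision.
print(procurement_agent(40_000, 100_000, approve=lambda plan: True))
print(procurement_agent(40_000, 100_000, approve=lambda plan: False))
print(procurement_agent(150_000, 100_000, approve=lambda plan: True))
```

Passing the reviewer in as a callback keeps the approval step explicit in the control flow, so no code path reaches the side effect without it.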

Strategic Shifts and Security

Moving From Generative to Agentic AI: The Orchestration Era

This strategic evolution signals a broader shift within the technology industry, moving away from simple generative AI that creates text or images toward agentic AI that focuses on orchestration and execution. The primary goal is no longer just to generate a plausible answer to a question, but to coordinate a sequence of actions that achieves a specific business objective. This requires a level of logic and planning that goes far beyond the capabilities of early large language models. By positioning itself as the orchestration layer, Snowflake is attempting to solve the problem of how to make AI actually useful in a production environment. This involves managing the interactions between various tools, data sets, and external APIs to ensure that the final outcome is both accurate and actionable. This focus on “doing” rather than just “saying” is what defines the next phase of the digital transformation, turning the data platform into an active participant in the daily operations of the modern enterprise.
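To make the difference between generating an answer and orchestrating actions concrete, here is a minimal sketch of an orchestrator that runs a fixed sequence of tool calls, feeds each result into the shared context for later steps, and validates every output before continuing. The `run_workflow` function, the step names, and the lambda tools are hypothetical; a production orchestrator would also plan the sequence dynamically, which this sketch deliberately omits.

```python
from typing import Any, Callable

# A step is (name, tool, validator); all names here are hypothetical.
Step = tuple[str, Callable[[dict], Any], Callable[[Any], bool]]

def run_workflow(steps: list[Step], context: dict) -> dict:
    """Run steps in order, storing each result in the shared context.

    Stops at the first step whose output fails validation, so later steps
    never act on bad data.
    """
    for name, tool, is_valid in steps:
        result = tool(context)
        if not is_valid(result):
            context["status"] = "failed"
            context["failed_step"] = name
            return context
        context[name] = result
    context["status"] = "completed"
    return context

# Hypothetical tools for a refund-eligibility check.
steps: list[Step] = [
    ("order_total", lambda ctx: 250.0, lambda r: r > 0),
    ("days_since_purchase", lambda ctx: 12, lambda r: r >= 0),
    ("refund_eligible",
     lambda ctx: ctx["days_since_purchase"] <= 30,
     lambda r: isinstance(r, bool)),
]

print(run_workflow(steps, {"order_id": "A-1001"}))
```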

One of the most interesting aspects of this transition is Snowflake's willingness to embrace data that lives outside its own ecosystem, which addresses long-standing concerns about vendor lock-in. By letting AI tools operate on data residing in competing platforms, the company signals that the value of its services lies in the intelligence and orchestration layers rather than the storage layer. This flexibility is a strategic necessity in a world where most large organizations use multiple cloud providers and a diverse array of database technologies. A unified control plane that spans these environments makes Snowflake a more attractive partner for enterprises that want to avoid being tied to a single stack. The approach prioritizes how an AI agent functions and interacts with the broader digital landscape over where the bits and bytes are stored, pointing toward a more open, interoperable future in which intelligence is the primary currency.

Maintaining the Governance Advantage: Security and Compliance

A cornerstone of this strategy remains Snowflake's long-standing commitment to security and governance, a major differentiator in an increasingly crowded market. While many AI vendors race to release features as quickly as possible, Snowflake is building its agentic capabilities directly on its existing governed data foundation. Every action an AI agent takes, whether accessing a sensitive customer record or calculating financial projections, is therefore subject to the same rigorous compliance and security frameworks that have long protected enterprise data. This “governed-by-design” approach is essential for moving AI projects from the experimental stage into full-scale production, especially in highly regulated industries such as finance, healthcare, and government. Because data privacy and access controls are built into the architecture, the platform offers a level of trust that is often missing from ad-hoc AI implementations, which struggle with visibility and auditing.
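“Governed by design” means an agent's data access passes through the same permission checks as a human's. A minimal sketch of that principle, assuming a hypothetical in-process policy model rather than Snowflake's actual role-based access control or masking policies: the agent acts under the requesting user's role, each table requires an explicit grant, and protected columns are masked unless the role is entitled to see them. `GRANTS`, `MASKED_COLUMNS`, `AgentContext`, and `governed_read` are all illustrative names, and the `rows` argument stands in for query results.

```python
from dataclasses import dataclass

# Hypothetical policy tables; a real platform keeps these in its governance layer.
GRANTS: dict[str, set[str]] = {
    "analyst": {"orders", "customers"},
    "support": {"customers"},
}
# Columns masked for every role not listed in the allowed set.
MASKED_COLUMNS: dict[tuple[str, str], set[str]] = {
    ("customers", "email"): {"support"},
}

@dataclass(frozen=True)
class AgentContext:
    user: str
    role: str  # the agent acts with the requesting user's role, not its own

def can_see(role: str, table: str, column: str) -> bool:
    allowed = MASKED_COLUMNS.get((table, column))
    return allowed is None or role in allowed

def governed_read(ctx: AgentContext, table: str, rows: list[dict]) -> list[dict]:
    """Return rows only if the role holds a grant, masking protected columns."""
    if table not in GRANTS.get(ctx.role, set()):
        raise PermissionError(f"role {ctx.role!r} has no grant on {table!r}")
    return [
        {col: (val if can_see(ctx.role, table, col) else "***")
         for col, val in row.items()}
        for row in rows
    ]

rows = [{"id": 1, "email": "pat@example.com"}]
print(governed_read(AgentContext("dana", "analyst"), "customers", rows))
# [{'id': 1, 'email': '***'}]
print(governed_read(AgentContext("sam", "support"), "customers", rows))
# [{'id': 1, 'email': 'pat@example.com'}]
```

Tying the agent to the caller's role, rather than giving it a privileged service identity, is what prevents an agent from becoming a path around existing controls.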

The ability to track and audit every interaction within the platform also gives organizations full visibility into how their AI agents behave. As agents become more autonomous, transparency becomes paramount: businesses must be able to verify why a certain decision was made or why a specific workflow was triggered. Detailed logging and monitoring of agent activity provide the oversight needed to catch and correct problems such as hallucinated outputs or unauthorized data access. This focus on governance ensures that the move to an agentic enterprise does not come at the expense of security or operational stability. By offering a safe path for scaling AI, the platform lets organizations realize the benefits of automation while minimizing the potential for costly errors or compliance violations. This disciplined approach is what ultimately allows AI workers to be deployed reliably at scale.
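Audit trails are easiest to guarantee when logging wraps every tool an agent can call, rather than being left to each tool's author. The decorator below is a hypothetical sketch of that pattern: it writes one structured JSON record per invocation (timestamp, agent, tool, arguments, outcome), including failed calls, to an in-memory list that stands in for an append-only audit table. `audited`, `AUDIT_LOG`, and `project_revenue` are illustrative names.

```python
import functools
import json
from datetime import datetime, timezone

AUDIT_LOG: list[str] = []  # stand-in for an append-only audit table

def audited(agent: str):
    """Record every call to the wrapped tool, including failures."""
    def decorator(tool):
        @functools.wraps(tool)
        def wrapper(*args, **kwargs):
            record = {
                "ts": datetime.now(timezone.utc).isoformat(),
                "agent": agent,
                "tool": tool.__name__,
                "args": args,
                "kwargs": kwargs,
            }
            try:
                result = tool(*args, **kwargs)
                record["outcome"] = "ok"
                return result
            except Exception as exc:
                record["outcome"] = f"error: {exc}"
                raise
            finally:
                # Runs on success and failure alike, so no call goes unlogged.
                AUDIT_LOG.append(json.dumps(record, default=str))
        return wrapper
    return decorator

BASELINE = {"Q3": 1_000_000}  # hypothetical revenue baseline per quarter

@audited(agent="finance-agent")
def project_revenue(quarter: str, growth: float) -> float:
    return round(BASELINE[quarter] * (1 + growth), 2)

project_revenue("Q3", growth=0.05)
print(AUDIT_LOG[-1])
```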

Future Challenges and Implementation

Navigating the Hurdles: The Reality of Enterprise Execution

Despite the significant technological advancements presented, the journey toward becoming a true enterprise control plane is not without its substantial challenges. One of the primary hurdles involves the fact that while a system can reason through data with high efficiency, it does not own the external systems where many critical business tasks are finalized. For instance, an AI agent may determine that a customer is entitled to a refund based on data within the warehouse, but it must still interact with an external CRM or ERP system to process that transaction. Managing these dependencies across different platforms introduces layers of complexity and potential failure points that are outside of the direct control of the central brain. Ensuring that agents remain reliable when they step outside the initial environment and into third-party applications requires robust integration standards and constant monitoring. The success of this vision will depend heavily on the ability to standardize how these disparate systems communicate.
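One common way to contain the failure points of stepping into a third-party system is to make each external write idempotent and to retry only transient errors. The sketch below assumes a hypothetical CRM client that deduplicates requests by idempotency key, a convention some payment APIs (Stripe, for example) expose, but not one the article attributes to any specific product. `FakeCRM` simulates the worst case: it commits the refund, then loses the response twice.

```python
import time
import uuid

class TransientError(Exception):
    """An error worth retrying, such as a timeout or HTTP 503."""

class FakeCRM:
    """Hypothetical CRM client: commits the refund, then loses the response twice."""

    def __init__(self) -> None:
        self.failures_left = 2
        self.processed: dict[str, dict] = {}  # idempotency key -> refund

    def create_refund(self, key: str, customer_id: str, amount: float) -> dict:
        if key not in self.processed:  # a replayed key never creates a second refund
            self.processed[key] = {"customer_id": customer_id,
                                   "amount": amount, "status": "issued"}
        if self.failures_left > 0:
            self.failures_left -= 1
            raise TransientError("response lost after commit")
        return self.processed[key]

def issue_refund(crm: FakeCRM, customer_id: str, amount: float,
                 attempts: int = 4, base_delay: float = 0.01) -> dict:
    key = str(uuid.uuid4())  # same key on every retry so the CRM can deduplicate
    for attempt in range(attempts - 1):
        try:
            return crm.create_refund(key, customer_id, amount)
        except TransientError:
            time.sleep(base_delay * 2 ** attempt)  # exponential backoff
    return crm.create_refund(key, customer_id, amount)  # last try: errors propagate

crm = FakeCRM()
print(issue_refund(crm, "C-42", 19.99))
print(len(crm.processed))  # 1: three attempts, one refund
```

Without the shared key, each retry after a lost response would issue another refund; with it, the agent can retry safely even though it cannot see inside the external system.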

Another major obstacle lies in the underlying structure of corporate data itself, where much of the business logic is often buried in legacy database code or poorly documented stored procedures. For an AI agent to truly understand the rules of a business, it must be able to interpret these complex semantics accurately. If the AI misinterprets a discount rule or a shipping policy because the logic was hidden in an obscure SQL trigger, the resulting actions could be detrimental to the company. Bridging this gap is critical, but the process of mapping human-readable business rules to technical data structures is an ongoing struggle for the entire industry. Additionally, as the volume of AI-driven interactions grows, the computational cost of maintaining these reasoning agents will need to be carefully managed to ensure the return on investment remains positive. Overcoming these technical and economic hurdles will be the next great challenge for organizations aiming to fully embrace the agentic model of operations.
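One way to narrow this gap is to lift rules out of triggers and stored procedures into explicit, documented, tested definitions that both application code and agents can read. The sketch below encodes a hypothetical tiered volume discount as data plus a single evaluation function; the rule name, tiers, and thresholds are invented for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DiscountTier:
    min_order_total: float  # inclusive lower bound, in the order's currency
    rate: float             # fraction off, e.g. 0.05 for 5%

# Hypothetical rule, formerly hidden in a SQL trigger, now versioned and reviewable.
DISCOUNT_RULE = {
    "name": "volume_discount",
    "version": 3,
    "description": "Apply the highest tier whose threshold the order total meets; "
                   "orders below the lowest tier get no discount.",
    "tiers": [DiscountTier(1_000, 0.05), DiscountTier(5_000, 0.10)],
}

def apply_discount(order_total: float, rule: dict = DISCOUNT_RULE) -> float:
    """Return the discounted total according to the explicit rule."""
    eligible = [t for t in rule["tiers"] if order_total >= t.min_order_total]
    rate = max((t.rate for t in eligible), default=0.0)
    return round(order_total * (1 - rate), 2)

print(apply_discount(800))    # 800.0
print(apply_discount(2_000))  # 1900.0
print(apply_discount(6_000))  # 5400.0
```

The `description` field can be handed to an agent verbatim while production code calls the same `apply_discount` function, so the natural-language rule and the executed logic are maintained in one place.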

Strategic Pathways: Implementing the Agentic Model

The evolution of the data cloud into a reasoning engine calls for a fundamental rethink of how organizations manage their digital assets and human workflows. To prepare, enterprises should start by cleaning their core datasets and documenting the complex business logic that often lives only in the minds of senior developers. Leaders should define clear governance policies that set the boundaries of agentic autonomy, so that every AI-driven action remains traceable and compliant. Cross-functional teams, in which data scientists and business analysts build agents together, are better placed to solve specific, high-value problems than efforts that chase generic automation. Adopting standardized communication protocols such as MCP and ACP then makes it possible to integrate AI workers into the existing tech stack and move information smoothly across clouds. Ultimately, the transition to an agentic enterprise is not just a technical upgrade but a strategic commitment to a future in which intelligence is the primary driver of growth.