The central challenge of protecting the American homeland has shifted from the physical resilience of interceptors to the integrity of the code that guides them across fragmented domains. As the Golden Dome Initiative matures into a comprehensive protective layer, the defensive perimeter has moved beyond physical silos toward a hyper-connected system of systems. This architectural transformation requires a radical evolution in how software is developed, secured, and deployed. Modern missile defense no longer relies solely on isolated hardware; instead, it functions as a technical defense fabric where data from space, air, ground, and sea must converge in real time. This interconnectedness introduces a vast landscape of software dependencies that calls for a modernized approach to federal software delivery.

The Golden Dome Initiative involves a multi-domain defense architecture that transcends traditional service branches. This framework integrates sensing and interceptor capabilities, creating a massive web of software interdependencies. For the Defense Industrial Base, this means that a vulnerability in a single low-level library could compromise the efficacy of an entire regional defense net. Key stakeholders across the Department of Defense and private sector contractors now recognize that federal software modernization is not just an operational preference but a core requirement for national security. The transition from legacy hardware-centric models to software-defined defense has altered the mission of security professionals within the defense ecosystem.

Securing the New Perimeter of National Missile Defense

The current era of defense emphasizes a move away from the rigid, standalone systems of the past toward a fluid technical defense fabric. This evolution is driven by the need for rapid response times and the ability to process very large volumes of sensor data across distributed networks. The Golden Dome Initiative serves as the cornerstone of this effort, linking disparate platforms into a unified command and control structure. Consequently, the security of this initiative depends on the collective integrity of thousands of software components, many of which are sourced from the global supply chain. This shift necessitates a DevSecOps model that can account for the massive scale of interdependencies across various operational domains.

As the technical landscape becomes more interconnected, the role of the Defense Industrial Base has shifted from being a mere provider of equipment to becoming a critical guardian of the software supply chain. Federal software delivery modernization efforts now prioritize the creation of a seamless pipeline that can push secure updates to the edge in record time. This is especially vital when considering the speed at which modern threats evolve. The stakeholders involved must align their security protocols to ensure that every artifact, from the initial line of code to the final deployment on a satellite or ground station, remains untainted by adversarial influence.

Shifting Strategies in an Era of Hypersonic Threats

Hyper-Connected Architecture and the Distributed Risk Landscape

Adversaries of the state have recognized that the most effective way to disable a sophisticated missile defense system is not through brute force, but through the exploitation of software vulnerabilities. These nation-state actors frequently target agency boundaries, searching for the seams between different organizational jurisdictions where security might be less stringent. The rise of sophisticated supply chain attacks has demonstrated that traditional perimeter defenses are no longer sufficient. Tactics such as compromising software artifacts, stealing cryptographic signing keys, and initiating dependency confusion attacks have become commonplace. These methods allow attackers to infiltrate the defense architecture long before a missile is even launched.
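As one illustration of the tactics named above, a dependency confusion attack relies on an internal package name also being resolvable from a public registry. The sketch below is a minimal check under stated assumptions: the function name, the package names, and the idea of comparing against a pre-fetched list of public names are all hypothetical, and a real program would pair a check like this with registry scoping and pinned sources.

```python
def find_confusable(internal_names, public_names):
    """Return internal package names that also exist on a public registry.

    Names are compared after a simple normalization (lowercase, with
    underscores and dots treated as hyphens), since many registries
    treat such variants as the same package.
    """
    def normalize(name):
        return name.lower().replace("_", "-").replace(".", "-")

    public = {normalize(n) for n in public_names}
    return sorted(n for n in internal_names if normalize(n) in public)


# Hypothetical names, for illustration only.
internal = ["gd-telemetry", "GD_Tracking"]
public = ["gd-tracking", "requests"]
collisions = find_confusable(internal, public)
```

Any name returned here is a candidate for reservation on the public registry or for stricter resolver configuration.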

Addressing these risks requires moving beyond the siloed security mentalities that have characterized federal programs for decades. Risk today propagates through federated identities and shared APIs, meaning a compromise in one administrative domain can quickly spread to others. To counter this, the defense community is adopting strategies that treat identity and integration points as the new front lines. By analyzing how risks traverse these interconnected nodes, security teams can implement more granular controls that prevent lateral movement by attackers. This distributed risk landscape demands a high level of coordination and a shared understanding of threat intelligence across the entire defense network.

Market Projections for Integrated Federal Security Frameworks

Data-driven outlooks for the period from 2026 to 2028 indicate a significant shift toward automated technical defense platforms. There is a growing consensus that manual security reviews are unable to keep pace with the volume of code required for the Golden Dome Initiative. Growth expectations for software supply chain security tools within the Department of Defense remain high, as programs seek to automate the identification and remediation of vulnerabilities. The market is increasingly favoring integrated frameworks that provide a unified view of risk across various contractor environments. This trend reflects a broader move toward operationalizing security through advanced technology rather than through administrative oversight alone.

A forward-looking perspective on federal security also highlights the burgeoning role of artificial intelligence in the DevSecOps lifecycle. AI-driven coding assistants and automated remediation tools are expected to become standard components of the defense development toolkit. These technologies offer the potential to significantly reduce the time required to identify and fix security flaws, which is critical in an era of hypersonic threats. However, the integration of these tools must be handled carefully to ensure they do not introduce new vulnerabilities. The market is responding by developing specialized security protocols designed to validate the outputs of these automated systems, ensuring they align with the rigorous standards required for national defense.

Overcoming Fragmented Tooling and Technical Inconsistency

One of the primary obstacles to achieving the Golden Dome standard is the legacy of a tool-first approach to security. Different contractors and agencies often use divergent sets of tools, leading to inconsistent Software Bill of Materials (SBOM) formats and conflicting risk scoring methodologies. This fragmentation creates significant gaps in the overall security posture, because it becomes difficult to establish a single source of truth about the health of the software ecosystem. When one program measures risk with one metric and another program uses a different one, the ability to coordinate a unified response to a new threat is severely hindered.
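To make the risk scoring problem concrete, the sketch below maps scores from differently scaled tools onto a single 0 to 10 scale. The three scheme names and the letter-grade mapping are assumptions invented for illustration; they do not describe any particular vendor's output.

```python
# Hypothetical letter-grade mapping; real tools define their own scales.
LETTER_TO_SCORE = {"A": 0.0, "B": 2.5, "C": 5.0, "D": 7.5, "F": 10.0}


def to_common_scale(scheme, value):
    """Convert a tool-specific risk value to a shared 0-10 scale."""
    if scheme == "cvss":      # already 0-10
        return float(value)
    if scheme == "percent":   # 0-100 scaled down to 0-10
        return float(value) / 10.0
    if scheme == "letter":    # A-F grades via the lookup table above
        return LETTER_TO_SCORE[value.upper()]
    raise ValueError(f"unknown scoring scheme: {scheme}")


# Three reports on the same component from three hypothetical tools.
reports = [("cvss", 7.5), ("percent", 75), ("letter", "d")]
normalized = [to_common_scale(s, v) for s, v in reports]
```

Once scores share a scale, programs can compare findings and set a single remediation threshold across contractor environments.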

Managing patch service level agreements and supply chain verification becomes a logistical nightmare in such a distributed environment. To eliminate these security gaps, a unified operating model must be established across all program silos. This involves standardizing the way vulnerabilities are reported, tracked, and remediated. Establishing such a model ensures that all stakeholders are operating from the same playbook, which is essential for maintaining the integrity of the Golden Dome. Furthermore, specialized solutions are required to maintain operational integrity in air-gapped or disconnected mission environments where traditional cloud-based security updates may not be feasible.
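A shared patch service level agreement can be expressed as a small rule that every program evaluates the same way. The following is a minimal sketch; the day counts per severity are illustrative assumptions, not values taken from any mandate.

```python
from datetime import date, timedelta

# Illustrative remediation windows in days (assumed, not mandated).
SLA_DAYS = {"critical": 15, "high": 30, "medium": 90}


def patch_due_date(severity, disclosed):
    """Return the date by which a finding must be remediated."""
    return disclosed + timedelta(days=SLA_DAYS[severity])


def is_overdue(severity, disclosed, today):
    """True once today is past the due date for this severity."""
    return today > patch_due_date(severity, disclosed)


overdue = is_overdue("critical", date(2026, 1, 1), date(2026, 1, 17))
```

Because the rule is data rather than prose, the same table can be distributed to disconnected environments and evaluated locally.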

Navigating the Regulatory Floor and the Golden Dome Ceiling

The regulatory environment for federal software has become increasingly rigorous, with mandates like Executive Order 14028 and NIST SP 800-53 Rev 5 setting new benchmarks for supply chain risk management. These regulations provide a necessary floor for security, ensuring that all contributors to federal projects adhere to a baseline of protective measures. The Cybersecurity Maturity Model Certification (CMMC) and the Secure Software Development Framework (SSDF, NIST SP 800-218) further reinforce these standards by requiring demonstrable proof of security practices. While these regulations are vital, the high-stakes nature of the Golden Dome Initiative requires contractors to aim significantly higher than these baseline requirements.

To reach the Golden Dome standard, programs must leverage advanced concepts like Continuous Authorization to Operate (cATO). This approach replaces static, periodic security assessments with automated policy enforcement and continuous monitoring. By integrating compliance checks directly into the development pipeline, programs can ensure that every update remains within the boundaries of acceptable risk without slowing down the delivery process. This proactive stance on compliance allows the defense community to maintain a state of constant readiness, which is a prerequisite for defending against sophisticated and fast-moving threats.

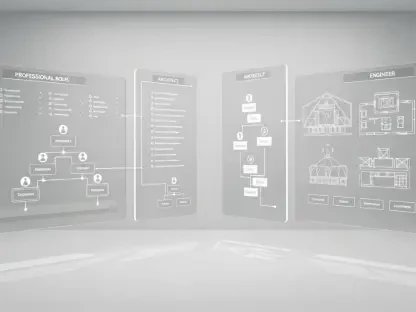

The Three Pillars of Modernized Federal DevSecOps

Prevent: Securing the Boundary Against Malicious Inputs

The first pillar of a modernized DevSecOps strategy focuses on preventing malicious or non-compliant components from entering the development environment. This is achieved by transitioning to controlled proxy endpoints that intercept all incoming code and dependencies. Instead of allowing developers to pull directly from public repositories, these proxies subject every artifact to a rigorous automated evaluation. This ensures that only components that meet predefined security criteria are allowed into the internal workflow. By stopping threats at the boundary, organizations can significantly reduce the potential for downstream compromises and costly remediation efforts.
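The boundary evaluation described above can be sketched as an admission function that a proxy might run on each incoming artifact. This is a simplified illustration: the `Artifact` fields, the `admit` function, and the CVSS threshold of 7.0 are assumptions, and the vulnerability score is presumed to come from a scanner that is not shown.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    name: str
    version: str
    content: bytes
    expected_sha256: str  # digest published by the trusted source
    max_cvss: float       # highest known CVSS score (from a scanner, assumed)


def admit(artifact, cvss_threshold=7.0):
    """Return (allowed, reasons); an artifact is blocked if any check fails."""
    reasons = []
    actual = hashlib.sha256(artifact.content).hexdigest()
    if actual != artifact.expected_sha256:
        reasons.append("checksum mismatch")
    if artifact.max_cvss >= cvss_threshold:
        reasons.append("known vulnerability at or above threshold")
    return (not reasons, reasons)


payload = b"example package bytes"
good = Artifact("libnav", "2.1.0", payload,
                hashlib.sha256(payload).hexdigest(), 3.1)
allowed, why = admit(good)
```

Returning the list of reasons alongside the decision keeps each rejection auditable, which matters when developers need to know why a dependency was blocked.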

In the current technological landscape, prevention also involves the careful management of AI coding assistants. While these tools can enhance productivity, they often suggest dependencies that may be insecure or may not exist at all. Grounding these AI assistants requires validating their recommendations against live registry intelligence in real time. This ensures that any dependency suggested by an automated system is confirmed to exist and checked for known vulnerabilities before it is integrated into a mission-critical system. This layer of automated gatekeeping is essential for maintaining the integrity of the software that powers national missile defense.
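A minimal sketch of this grounding step follows. It assumes registry intelligence is available as a dictionary mapping each package name to its versions and their advisory identifiers; the package names and advisory IDs are invented for illustration, and a live system would query a registry service instead of a static snapshot.

```python
def vet_suggestion(name, version, registry):
    """Check an AI-suggested dependency against a registry snapshot.

    registry maps package name -> {version: [advisory ids]}.
    """
    versions = registry.get(name)
    if versions is None:
        # Covers suggestions of packages that do not exist at all.
        return "reject: package not found in registry"
    if version not in versions:
        return "reject: version not found in registry"
    if versions[version]:
        return "reject: known advisories " + ", ".join(versions[version])
    return "allow"


# Hypothetical snapshot; names and advisory IDs are made up.
snapshot = {"geo-utils": {"1.2.0": [], "1.1.0": ["ADV-0001"]}}
verdict = vet_suggestion("geo-utils", "1.2.0", snapshot)
```

Rejecting unknown names outright is the important design choice here, since a nonexistent package name is exactly what an attacker could later register.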

Govern: Automating Policy-as-Code Across the Workflow

Effective governance in a modern DevSecOps environment is achieved through the implementation of automated policy-as-code. This involves integrating security requirements directly into the continuous integration and deployment pipelines, creating automated gates at every stage of the lifecycle. By aligning these gates with the Department of Defense Reference Architecture, programs can ensure that all software meets the necessary standards before it moves toward production. This automation removes the subjectivity and potential for human error associated with manual reviews, providing a more consistent and reliable security posture across the entire enterprise.
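The idea of an automated gate can be sketched as a list of named checks evaluated against build metadata. The policy names and metadata keys below are hypothetical, and production pipelines typically use a dedicated policy engine; the sketch only shows the shape of the technique.

```python
# Each policy is a (name, predicate) pair over a build metadata dict.
# Keys such as "sbom" and "signed" are assumed for illustration.
POLICIES = [
    ("sbom_present", lambda b: b.get("sbom") is not None),
    ("artifact_signed", lambda b: b.get("signed") is True),
    # Missing scan results fail the gate instead of passing silently.
    ("no_critical_findings", lambda b: b.get("critical_findings") == 0),
]


def evaluate_gate(build):
    """Return the names of failed policies; an empty list means pass."""
    return [name for name, check in POLICIES if not check(build)]


build = {"sbom": {"components": []}, "signed": True, "critical_findings": 0}
failures = evaluate_gate(build)
```

Keeping policies as data in version control means a change to the gate is itself reviewed and auditable, like any other code change.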

Governance also entails the active management of hidden debt, which often takes the form of security exceptions or unpatched vulnerabilities. In a modernized framework, exceptions are not merely granted and forgotten; they are time-bound, auditable, and tied to specific remediation plans. This approach ensures that every risk is consciously accepted and that there is a clear path toward closing any security gaps. By treating governance as a continuous process rather than a one-time hurdle, federal programs can maintain better control over their distributed software assets and ensure long-term mission success.
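A time-bound, auditable exception can be modeled as a record that stops being valid on its own. The field names in this sketch are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RiskException:
    finding_id: str
    approved_by: str       # who accepted the risk (audit trail)
    expires: date          # last day the exception is honored
    remediation_plan: str  # reference to the plan for closing the gap


def is_valid(exc, today):
    """An exception counts only if approved, planned, and unexpired."""
    has_approver = bool(exc.approved_by.strip())
    has_plan = bool(exc.remediation_plan.strip())
    return has_approver and has_plan and today <= exc.expires


exc = RiskException("FND-42", "program-isso", date(2026, 3, 31),
                    "upgrade to patched release")
still_valid = is_valid(exc, date(2026, 3, 1))
```

Because expiry is checked at evaluation time, an exception that nobody renews simply lapses and the underlying finding blocks the pipeline again.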

Prove: Maintaining Continuous Evidence of Mission Readiness

The final pillar is the ability to provide continuous, verifiable evidence of security posture and mission readiness. This represents a departure from static, paper-based documentation toward living, searchable software inventories. By maintaining a real-time record of all software components in use, programs can perform rapid impact analysis whenever a new vulnerability is disclosed. This allows for a much faster response than traditional methods, which often involve manual and time-consuming efforts to locate affected systems. Continuous evidence collection ensures that leadership always has an accurate picture of the risks facing the initiative.
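Rapid impact analysis over a living inventory reduces to a lookup. The sketch below assumes the inventory is a mapping from system name to its (component, version) pairs; the system and component names are invented.

```python
def affected_systems(inventory, component, bad_versions):
    """Return systems that include the component at a vulnerable version.

    inventory maps system name -> list of (component, version) tuples.
    """
    return sorted(
        system
        for system, components in inventory.items()
        if any(name == component and version in bad_versions
               for name, version in components)
    )


# Hypothetical inventory for illustration.
inventory = {
    "ground-station-a": [("libcrypto-x", "1.0.2"), ("parser", "3.1")],
    "sensor-gateway": [("libcrypto-x", "1.1.0")],
    "ops-dashboard": [("parser", "3.1")],
}
hits = affected_systems(inventory, "libcrypto-x", {"1.0.2", "1.0.3"})
```

When a new vulnerability is disclosed, the query runs against data that already exists, so the answer does not depend on a manual search of each system.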

Managing the lifecycle of software components is also a critical part of proving readiness. Identifying end-of-life components that no longer receive security patches is vital for eliminating permanent vulnerabilities within the system. Modern DevSecOps tools can automate this process, flagging aging components and helping teams plan for their replacement. Furthermore, by automating the evidence collection required for the Risk Management Framework, programs can streamline compliance and focus more of their resources on actual security improvements. This transformation from reactive reporting to continuous monitoring is the hallmark of a mature defense security program.
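Flagging aging components can be sketched as a date comparison against published end-of-life dates. The 180-day warning window and the status labels are assumptions for illustration; the end-of-life dates themselves would come from vendor or project announcements.

```python
from datetime import date


def lifecycle_status(eol, today, warn_days=180):
    """Classify a component by its end-of-life date (None if unknown)."""
    if eol is None:
        return "unknown"
    if today >= eol:
        return "end-of-life"
    if (eol - today).days <= warn_days:
        return "approaching end-of-life"
    return "supported"


status = lifecycle_status(date(2026, 12, 1), date(2026, 9, 1))
```

Treating a missing date as "unknown" instead of "supported" keeps components without lifecycle data visible to the team planning replacements.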

Building a Resilient Technical Fabric for Future Defense

The strategic shift from reactive document generation toward proactive, continuous enforcement is redefining the landscape of national defense. By adopting the integrated framework of preventing malicious inputs, governing through automated policy, and proving readiness with continuous evidence, the Defense Industrial Base can establish a more resilient technical fabric. The initiative makes clear that the reliability of American homeland defense is inseparable from the integrity of its software supply chain. Contractors who embrace these modernized principles will be better equipped to counter the sophisticated tactics of nation-state adversaries and to keep the Golden Dome a dependable shield.

Successful implementation of this shared automated operating model provides the long-term stability needed to support increasingly complex defense missions. The transition toward standardized SBOMs and unified risk scoring addresses the fragmentation that has long plagued federal programs, creating a more cohesive and transparent security environment. This evolution in DevSecOps allows the defense community to move with the speed and agility required to stay ahead of evolving threats. Ultimately, this transformation underscores the necessity of automation and shared standards in safeguarding the nation’s most critical infrastructure.