The traditional image of a software engineer meticulously crafting lines of code is giving way to one in which natural-language commands define entire system architectures. This shift from manual syntax to prompt-driven logic is often termed vibe coding. In this paradigm, the primary programming interface is no longer a specific language but human intent, conveyed through high-level descriptions. The abstract “vibe” of a project increasingly dictates its development path, loosening the rigid constraints of traditional coding environments.

The emergence of this trend is closely tied to aggressive competition between major infrastructure players. Cloudflare’s recent effort to replicate the popular Next.js framework using artificial intelligence highlights the shift. Using AI agents, the company produced a functional alternative in a very short timeframe, challenging the dominance of established ecosystems such as Vercel’s. The episode underscores how framework development is being transformed by tools that prioritize rapid output over hand-written logic. AI agents are sharply reducing the capital and time required for high-level software engineering, making complex systems more accessible than before.

The Evolution of Software Engineering from Manual Syntax to Prompt-Driven Logic

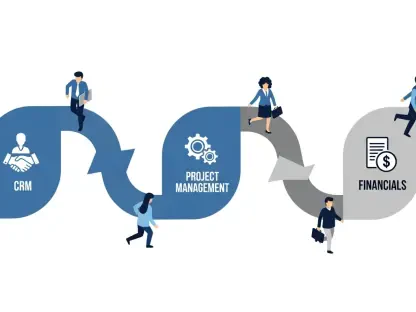

The historical reliance on deep technical expertise to build scalable software faces a direct challenge from the democratization of complex engineering. As prompt-based architectures become more sophisticated, a single developer can now replicate enterprise-grade frameworks that previously required entire teams of engineers. This is possible because AI can interpret high-level functional requirements and generate the underlying code without the developer managing every micro-optimization. The focus has moved from the technical “how” to the conceptual “what”, signaling a significant evolution in the professional identity of the software engineer.

The shift toward outcome-oriented development relies heavily on the maturation of tools like Cursor and Claude Code. These platforms let developers maintain a creative flow, focusing on the broader architecture and user experience while the AI handles the repetitive logic. This reduction in technical friction enables rapid prototyping, where ideas can be tested and iterated on in real time. As a result, the traditional moats that protected software companies, specifically their complex proprietary codebases, are being eroded by the speed and efficiency of AI-assisted generation.

The Rise of Prompt-Based Architectures and the Democratization of Complex Engineering

Performance indicators in this new era show how sharply the economics of the development cycle are shifting. In landmark projects like vinext, Cloudflare’s reimplementation of Next.js, the cost-to-output ratio reveals a dramatic decrease in financial overhead. Projects that once cost hundreds of thousands of dollars in human labor are now being executed for a fraction of that amount using automated agents. Market data suggests that the growth of these coding agents in professional environments is not a passing trend but a structural change in how production-grade infrastructure is built and maintained.

The viability of this model is increasingly supported by automated testing frameworks that provide a layer of validation for AI-generated code. High unit-test coverage and end-to-end browser tests are becoming the standard way to check that the vibe translates into a stable product. While human oversight remains necessary, the economic argument for AI-driven development is becoming hard to ignore. Organizations are finding that the ability to deploy complex features in days rather than months provides a competitive advantage that outweighs the initial learning curve of these new tools.

Performance Indicators and the Economic Projections of AI-Generated Infrastructure

However, the rapid adoption of AI-generated logic brings significant security risks. Unverified outputs often contain subtle flaws, such as broken authentication flows or server-side request forgery (SSRF) vulnerabilities, that can expose sensitive data. The review gap, the mismatch between the speed of code generation and the speed of manual security audits, is a critical vulnerability for modern enterprises. When code is produced faster than human teams can verify it, the risk of deploying compromised infrastructure rises sharply.
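As one illustration of the kind of flaw described here, the sketch below shows a minimal SSRF guard of the sort a reviewer might expect around any server-side fetch of a user-supplied URL. It is a hedged example, not a complete defense: the function name `is_safe_outbound_url` and the `ALLOWED_HOSTS` allowlist are hypothetical, and the check assumes the service only ever needs to call a small set of known hostnames.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical allowlist for illustration; a real service would load this from config.
ALLOWED_HOSTS = {"api.example.com"}


def is_safe_outbound_url(url: str) -> bool:
    """Return True only for http(s) URLs whose host is explicitly allowlisted."""
    try:
        parsed = urlparse(url)
        host = parsed.hostname
    except ValueError:
        # Malformed URLs (for example, a broken IPv6 literal) are rejected.
        return False

    if parsed.scheme not in ("http", "https"):
        return False
    if host is None:
        return False

    try:
        # Literal IP addresses are rejected outright, which covers loopback,
        # private ranges, and cloud metadata endpoints such as 169.254.169.254.
        ipaddress.ip_address(host)
        return False
    except ValueError:
        pass  # Not an IP literal, so fall through to the hostname allowlist.

    return host in ALLOWED_HOSTS
```

An allowlist is used here instead of a blocklist because it fails closed: anything not explicitly permitted is refused. This sketch does not resolve DNS, so it does not by itself protect against an allowlisted name being pointed at an internal address; that would need a separate check at connection time.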

To address these obstacles, organizations are beginning to integrate rigorous guardrails into their automated pipelines. Mandatory continuous integration and delivery (CI/CD) checks and vulnerability scanning are essential for managing the output of AI agents. It is no longer sufficient to rely on the perceived quality of the prompt; instead, the industry is moving toward a model where every line of AI-generated code must pass a gauntlet of automated checks. The aim is to preserve the fundamental integrity of the software while keeping development fast.
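The “gauntlet of automated checks” can be pictured as a merge gate that runs every check and blocks the change unless all of them pass. The sketch below is a minimal illustration under stated assumptions: the names `run_gate`, `gate_passes`, and the three example checks are hypothetical stand-ins, not the interface of any particular CI product, and the coverage figures are made up for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def run_gate(checks: Dict[str, Callable[[], bool]]) -> List[CheckResult]:
    """Run every check; a check that raises is recorded as a failure."""
    results = []
    for name, check in checks.items():
        try:
            passed = bool(check())
            detail = "ok" if passed else "check returned False"
        except Exception as exc:
            # Fail closed: a crashed check blocks the merge instead of passing silently.
            passed, detail = False, f"check crashed: {exc!r}"
        results.append(CheckResult(name, passed, detail))
    return results


def gate_passes(results: List[CheckResult]) -> bool:
    """Allow the merge only if at least one check ran and all checks passed."""
    return bool(results) and all(r.passed for r in results)


# Hypothetical stand-ins for real pipeline steps.
def unit_tests_pass() -> bool:
    return True


def coverage_at_least(measured: float, threshold: float) -> bool:
    return measured >= threshold


def vulnerability_scan_clean() -> bool:
    raise RuntimeError("scanner unavailable")


example_checks = {
    "unit-tests": unit_tests_pass,
    "coverage": lambda: coverage_at_least(0.91, 0.90),  # illustrative numbers
    "vuln-scan": vulnerability_scan_clean,
}
example_results = run_gate(example_checks)
```

In this example the gate refuses the change because the scanner could not run, even though the tests and coverage checks passed. Treating an unavailable or crashed check as a failure is a deliberate choice: when generation outpaces review, a missing result should not be read as a clean one.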

Confronting the Vibe Coding Paradigm: Security Risks and Technical Obstacles

The necessity of standardized security protocols for AI-built software has prompted a new discussion regarding regulatory landscapes and accountability. As critical infrastructure becomes increasingly dependent on AI-generated components, the demand for industry-wide validation standards has intensified. Government compliance and standardized audits are becoming necessary tools for ensuring that software built with minimal human intervention remains safe for public use. This push for regulation aims to create a framework where innovation does not come at the expense of public safety or data privacy.

Legal and ethical questions arise when AI-generated code leads to significant security breaches. Determining who is accountable (the developer who provided the prompt, the company that built the AI, or the organization that deployed the software) remains a complex challenge. Furthermore, security disclosures are being used as strategic instruments in corporate competition, with companies highlighting the flaws in an AI-generated competitor to improve their own market position. This environment calls for a transparent approach to software accountability to maintain trust in an increasingly automated world.

Navigating the Regulatory Landscape and Accountability Standards

The next shift in web architecture is likely to be a move toward a hybrid engineering model. This approach blends the speed of AI generation with the architectural judgment that comes from human experience. Rather than replacing the engineer, AI is becoming a sophisticated partner that handles the heavy lifting of implementation while the human focuses on strategy and security. This partnership is likely to produce more flexible, platform-agnostic deployment models that challenge the dominance of tightly coupled hosting ecosystems.

Market disruptors are already moving toward these flexible architectures, allowing developers to escape vendor lock-in and adopt more versatile infrastructure. Consumer and developer preferences are evolving toward systems that offer both speed and reliability without forcing a choice between the two. Global economic conditions are also fueling demand for this low-cost, high-efficiency engineering. As companies look to optimize their budgets, hybrid AI-human teams will likely become the standard for any organization seeking to remain relevant in a fast-paced digital economy.

Predicting the Next Shift: Hybrid Engineering and the Future of Web Architecture

The tension between rapid AI-led innovation and the core requirement for security defines the industry’s progression through this era. It is becoming evident that vibe coding is not a temporary curiosity but a lasting shift in how software maturity is measured. Organizations that successfully leverage AI agents without compromising stability are providing the blueprint for future development. These leaders recognize that automated security validation has to evolve at the same pace as generation tools to prevent a systemic collapse of technical integrity.

Investment in AI-assisted engineering is shifting toward advanced verification and architectural oversight. While the initial excitement centered on speed, the lasting value lies in the combination of human creativity and machine precision. Companies are prioritizing robust testing suites that aim to verify the intent of a prompt with something close to mathematical certainty. Ultimately, the industry is moving toward a future where the initial vibe of a project serves as the spark for a process that remains grounded in rigorous engineering principles and unyielding security standards.