Modern software engineering is moving toward a future in which the boundary between human intent and machine execution is nearly invisible. Microsoft’s release of .NET 11 Preview 2 stakes out a clear position: rather than shipping only incremental updates, it pursues a deeper, more systemic optimization of the development stack. This roundup covers the technical shifts and architectural decisions that define the release and shows how the ecosystem is evolving to meet the demands of a high-speed, cloud-native world.

The Evolution Toward a Leaner and More Intelligent Development Ecosystem

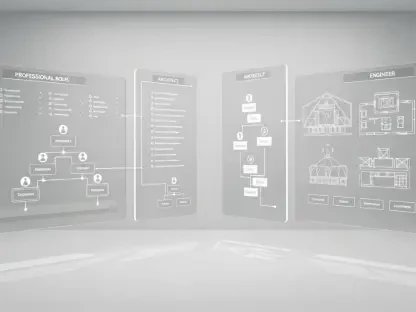

Recent updates to the Microsoft development roadmap point to a move away from traditional compiler-heavy logic and toward runtime-native integration. The transition is pivotal because it shifts complexity out of the build phase and into the runtime itself. With these processes internalized, the platform has more direct control over how code maps onto the underlying hardware, which reduces the friction that has historically slowed large-scale deployments.

The Preview 2 release specifically balances the reduction of infrastructure overhead with aggressive execution speeds across the entire stack. Engineers have noted that this “leaner and meaner” philosophy is not just about raw power; it is about smarter resource allocation. Instead of forcing developers to manually tune their environments, the system now takes a more proactive role in managing performance, ensuring that applications remain responsive even as they scale in complexity.

Deep Dive Into Architectural Refinements and Runtime Innovations

Redefining the Asynchronous Frontier: Runtime-Native Management

One of the most significant changes is the shift toward runtime-native async state machines, which fundamentally alters how developers interact with asynchronous code. Previously, the compiler generated the boilerplate logic required to track the state of each operation. Moving that work into the runtime removes a layer of “noise” that often cluttered stack traces during debugging sessions. Troubleshooting becomes more efficient because reported errors align more closely with the developer’s original logic than with the compiler’s generated artifacts.
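To make the compiler's traditional role concrete, here is a minimal C# sketch of an ordinary async method. The names `OrderTotals`, `LoadPriceAsync`, and `SumPricesAsync` are hypothetical and exist only for illustration. Under the compiler-driven model, each `async` method is rewritten into a generated state-machine type whose `MoveNext` method resumes execution after every `await`, and those generated names are the artifacts that can surface while debugging. The sketch assumes that source code like this stays the same under the runtime-native model and that only the bookkeeping moves.

```csharp
using System;
using System.Threading.Tasks;

public static class OrderTotals
{
    // Hypothetical helper standing in for real I/O such as a database call.
    private static async Task<int> LoadPriceAsync(int itemId)
    {
        await Task.Delay(10);   // suspension point: the method's state must be saved here
        return itemId * 100;
    }

    // Two awaits mean two points at which the method can pause and resume.
    // With compiler-generated state machines, the locals below are hoisted
    // into fields of a generated type so they survive each suspension.
    public static async Task<int> SumPricesAsync(int firstId, int secondId)
    {
        int first = await LoadPriceAsync(firstId);
        int second = await LoadPriceAsync(secondId);
        return first + second;
    }
}
```

A caller simply writes `int total = await OrderTotals.SumPricesAsync(1, 2);` and never sees the state tracking directly, which is why moving it into the runtime is largely invisible at the source level.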

However, moving logic from the compiler into the core execution engine involves a delicate set of trade-offs. While it simplifies the developer experience and potentially reduces the size of compiled binaries, it places a heavier burden on the runtime to manage these transitions flawlessly. The consensus among early testers is that the gain in transparency and the reduction in debugging “noise” far outweigh the architectural complexity of this shift, signaling a new era of runtime intelligence.

Advanced JIT Optimizations: Intelligence Without Manual Intervention

The Just-In-Time (JIT) compiler has evolved to automate the elimination of redundant bounds and arithmetic checks, which are common performance bottlenecks. In high-performance cloud environments, even a micro-optimization in a “hot code path” can lead to significant savings in compute costs. While compiling a method, the JIT can now prove in more cases that an operation is safe, which lets it omit checks it previously had to emit to guard against memory corruption or overflow errors.
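The kind of check involved can be illustrated with a short C# sketch. `BoundsCheckDemo` and its methods are hypothetical names, and the comments describe long-standing, general JIT behavior rather than the specific patterns newly recognized in this preview, which the release summary above does not enumerate.

```csharp
public static class BoundsCheckDemo
{
    // The loop bound is the array's own Length, so the JIT can typically
    // prove that every index is in range and omit the per-access check.
    public static long SumAll(int[] values)
    {
        long total = 0;
        for (int i = 0; i < values.Length; i++)
        {
            total += values[i];
        }
        return total;
    }

    // Here the bound is an unrelated parameter. Each access stays
    // range-checked unless the JIT can relate count to values.Length;
    // an out-of-range count still throws IndexOutOfRangeException.
    public static long SumFirst(int[] values, int count)
    {
        long total = 0;
        for (int i = 0; i < count; i++)
        {
            total += values[i];
        }
        return total;
    }
}
```

Both methods remain fully safe C#. The point of the JIT work is that code in this style gets faster without the developer resorting to `unsafe` blocks or pointer arithmetic.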

These improvements provide a competitive advantage for enterprise applications that process massive streams of data. By optimizing code on the fly without requiring the developer to write complex, unsafe code blocks, .NET 11 maintains its commitment to safety while chasing maximum throughput. This automated approach ensures that even legacy codebases can see performance boosts simply by migrating to the new runtime, democratizing high-level performance across the ecosystem.

Slashing the SDK Footprint: Efficiency in Cross-Platform Tooling

Deduplication strategies in the latest SDK have significantly reduced installer sizes for Linux and macOS users. By utilizing content hashing and symbolic links, the installation process avoids storing multiple copies of identical files across different workloads. This is particularly beneficial for CI/CD pipelines and containerized environments where storage space and download speeds directly impact the agility of the deployment cycle.
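The general technique of content hashing plus symbolic links can be sketched in a few lines of C#. This is an illustration of the idea only, not the SDK installer's actual implementation: `FileDeduplicator` and its methods are hypothetical, and the choice of SHA-256 and absolute link targets is an assumption made for simplicity.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Linq;
using System.Security.Cryptography;

public static class FileDeduplicator
{
    // Groups regular files under root by the SHA-256 hash of their contents
    // and returns only the groups that contain more than one file.
    public static List<List<string>> FindDuplicateGroups(string root)
    {
        var byHash = new Dictionary<string, List<string>>();
        foreach (string path in Directory.EnumerateFiles(root, "*", SearchOption.AllDirectories))
        {
            // Skip files that are already symbolic links so a second run
            // never treats a link as the copy to keep.
            if (new FileInfo(path).LinkTarget is not null) continue;

            using FileStream stream = File.OpenRead(path);
            string hash = Convert.ToHexString(SHA256.HashData(stream));
            if (!byHash.TryGetValue(hash, out var paths))
            {
                paths = new List<string>();
                byHash[hash] = paths;
            }
            paths.Add(path);
        }
        return byHash.Values.Where(group => group.Count > 1).ToList();
    }

    // Keeps the first file in each group and replaces the others with
    // symbolic links to it. Returns the number of files replaced.
    public static int ReplaceWithSymlinks(IEnumerable<List<string>> groups)
    {
        int replaced = 0;
        foreach (List<string> group in groups)
        {
            string keeper = Path.GetFullPath(group[0]);
            foreach (string duplicate in group.Skip(1))
            {
                File.Delete(duplicate);
                File.CreateSymbolicLink(duplicate, keeper);
                replaced++;
            }
        }
        return replaced;
    }
}
```

A production tool would more likely use relative link targets so the directory tree stays relocatable, and it would need to handle platforms or file systems where creating symbolic links requires extra privileges.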

Navigating the risks of automated SDK optimizations requires a careful balance between portability and stability. While reducing the footprint is a clear win for efficiency, it necessitates a more robust management system for symbolic links and file dependencies. Developers operating in diverse deployment scenarios have observed that these changes make the environment feel more native to Unix-style systems, aligning the .NET experience more closely with other modern, lightweight dev-tooling.

Specialized Performance Gains: From Kestrel to MAUI and Beyond

In web development, ASP.NET Core now handles malformed requests without the heavy overhead of exception handling, which raises Kestrel throughput by up to 40% in scenarios dominated by such requests. Because invalid traffic follows an ordinary code path rather than an exceptional one, the server stays resilient against the “garbage” traffic typical of the modern internet, and valid user requests are not slowed down by the noise of port scans or malicious probes.
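The underlying pattern, returning a status instead of throwing, can be shown with a small C# sketch. This is not Kestrel's parser: `ParseStatus`, `RequestLineParser`, and `TryParse` are hypothetical names, and the parsing rules are deliberately simplified to a single request line.

```csharp
using System;

public enum ParseStatus { Success, Incomplete, Malformed }

public static class RequestLineParser
{
    // Parses a line shaped like "METHOD target HTTP/x.y\r\n".
    // Bad input produces a status value rather than an exception, so a
    // flood of invalid requests never pays for stack unwinding.
    public static ParseStatus TryParse(ReadOnlySpan<char> buffer, out string method, out string target)
    {
        method = string.Empty;
        target = string.Empty;

        int lineEnd = buffer.IndexOf("\r\n".AsSpan());
        if (lineEnd < 0) return ParseStatus.Incomplete;   // need more bytes

        ReadOnlySpan<char> line = buffer.Slice(0, lineEnd);
        int firstSpace = line.IndexOf(' ');
        if (firstSpace <= 0) return ParseStatus.Malformed;

        ReadOnlySpan<char> rest = line.Slice(firstSpace + 1);
        int secondSpace = rest.IndexOf(' ');
        if (secondSpace <= 0) return ParseStatus.Malformed;

        ReadOnlySpan<char> version = rest.Slice(secondSpace + 1);
        if (!version.StartsWith("HTTP/", StringComparison.Ordinal)) return ParseStatus.Malformed;

        method = line.Slice(0, firstSpace).ToString();
        target = rest.Slice(0, secondSpace).ToString();
        return ParseStatus.Success;
    }
}
```

The caller branches on the returned status, for example by waiting for more data on `Incomplete` and sending an error response on `Malformed`, so rejecting a bad request costs about as much as any other conditional.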

Mobile responsiveness has also seen a boost through .NET MAUI’s binding optimizations, which have slashed memory allocations and improved UI frame rates. At the same time, Entity Framework Core has expanded its LINQ translations to allow more complex data processing to happen directly on the database server. These specialized gains across different frameworks demonstrate a unified effort to ensure that whether a developer is building a web API, a mobile app, or a data-heavy enterprise tool, the underlying platform is working to minimize latency.

Strategic Implementation: Maximizing the Potential of Preview 2

Integrating these early-access performance features into existing workflows requires a disciplined approach to testing and diagnostics. Developers are encouraged to utilize the new diagnostic analyzers to identify areas where their code can take advantage of the latest runtime optimizations. Best practices suggest that while the performance gains are tempting, the focus should remain on maintaining cross-platform compatibility by using the updated archival tools and file-format support included in this release.

Actionable steps for preparing legacy codebases involve auditing existing async patterns and data access logic. Since the runtime is becoming more intelligent, some manual optimizations previously used by developers might now be redundant or even counterproductive. Transitioning to the final production release will be smoother for those who begin phasing out manual bounds checking and complex compiler workarounds in favor of the idiomatic patterns that .NET 11 is designed to accelerate.

Strengthening the Foundation for the Future of .NET Development

The convergence of runtime intelligence and SDK efficiency in this iteration defines a clear path for the ecosystem’s future. By focusing on the “leaner and meaner” philosophy, the platform addresses the practical needs of cloud-native and mobile applications, where every byte of memory and every millisecond of CPU time matters. The strategic reduction of boilerplate and the automation of performance tuning reflect a mature understanding of modern engineering challenges.

Ultimately, the advancements in Preview 2 set a high bar for the official launch and show that the platform can evolve without losing its core identity. The community now has a set of tools that promise more than just speed: they offer a more intuitive and transparent way to build software. Developers who adopt these patterns early will be better positioned to take full advantage of the November release and to ready their applications for the next generation of digital infrastructure.