The long-standing struggle to keep automated tests from shattering during every minor UI update has finally met its match in a sophisticated blend of neural networks and adaptive algorithms. For years, the software industry accepted “flaky tests” as a necessary evil, a tax paid for the privilege of rapid deployment cycles. However, as of 2026, the arrival of autonomous testing ecosystems has shifted the conversation from mere script execution to intelligent quality orchestration. This transition is not just about writing code faster; it is about building a self-sustaining environment where software validates itself with minimal human intervention.

The fundamental tension in modern DevOps has always been speed of delivery versus stability of the release. Traditional frameworks required engineers to define “locators” by hand (specific addresses for buttons or text fields, such as an element ID or an XPath), and these broke whenever a developer renamed an ID or wrapped an element in a new container. AI-driven testing addresses this by replacing static paths with a multi-dimensional understanding of the interface. Instead of looking for a single ID, the system infers the “intent” of a component from several attributes, such as its shape, label, and relative position, creating a resilient layer of abstraction that survives structural changes.
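
To make matching on “intent” concrete, here is a minimal Python sketch, assuming a simplified element model. The `Element` class, the `intent_score` and `find_by_intent` helpers, and the weights are hypothetical illustrations, not any tool’s actual API; real products learn such signals rather than hard-coding them.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    """Hypothetical, simplified snapshot of a UI element (not a real DOM API)."""
    tag: str       # e.g. "button", "input"
    label: str     # visible text or accessible name
    x: float       # centre x in pixels
    y: float       # centre y in pixels
    width: float
    height: float


def intent_score(target: Element, candidate: Element) -> float:
    """Score in [0, 1] for how well `candidate` matches the recorded `target`.

    Combines label, element type, shape (aspect ratio) and relative position.
    The weights below are arbitrary assumptions chosen for illustration.
    """
    label_sim = 1.0 if target.label.strip().lower() == candidate.label.strip().lower() else 0.0
    tag_sim = 1.0 if target.tag == candidate.tag else 0.0
    ratio_t = target.width / target.height
    ratio_c = candidate.width / candidate.height
    shape_sim = min(ratio_t, ratio_c) / max(ratio_t, ratio_c)
    distance = math.hypot(target.x - candidate.x, target.y - candidate.y)
    position_sim = 1.0 / (1.0 + distance / 100.0)  # decays as the element moves further away
    return 0.5 * label_sim + 0.2 * tag_sim + 0.15 * shape_sim + 0.15 * position_sim


def find_by_intent(target: Element, candidates: list[Element]) -> Element:
    """Return the candidate whose attributes best match the recorded target."""
    return max(candidates, key=lambda c: intent_score(target, c))


# Usage: the "Checkout" button moved, and a "Cancel" button now sits at its old position.
recorded = Element("button", "Checkout", 500, 300, 120, 40)
page = [
    Element("button", "Cancel", 500, 300, 120, 40),
    Element("button", "Checkout", 520, 340, 120, 40),
]
match = find_by_intent(recorded, page)
print(match.label)  # Checkout
```

Because the label carries the most weight, the moved “Checkout” button outscores the “Cancel” button that now occupies the old coordinates, which is the behavior a coordinate- or ID-based locator cannot provide.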

The Paradigm Shift in Quality Assurance

The core of this transformation lies in the move away from the “script-first” mentality that dominated the early 2020s. In that era, the quality of a QA team was often measured by the sheer volume of test cases they could maintain. Today, the focus is on the health of the testing ecosystem. AI has turned what was once a reactive process into a proactive one. By integrating directly into the development environment, these intelligent systems anticipate where failures are likely to occur based on historical code changes, allowing teams to shore up defenses before a single line of code reaches production.
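
One simple way to anticipate where failures are likely is to rank the files in a new change by how often past changes to those files broke the build. The sketch below assumes a hypothetical history format of `(changed_files, build_failed)` pairs and uses a plain failure-rate heuristic as a stand-in for the learned models these systems use.

```python
from collections import defaultdict


def failure_rates(history):
    """Per-file share of past changes that broke the build.

    `history` is an iterable of (changed_files, build_failed) pairs; this
    format is a hypothetical assumption, e.g. mined from CI records.
    """
    touched = defaultdict(int)
    failed = defaultdict(int)
    for files, build_failed in history:
        for path in set(files):  # count each file once per change
            touched[path] += 1
            if build_failed:
                failed[path] += 1
    return {path: failed[path] / touched[path] for path in touched}


def rank_by_risk(changed_files, rates, unknown=0.5):
    """Order a new change's files from riskiest to safest.

    Files with no history get a neutral `unknown` score (an assumption).
    """
    return sorted(changed_files, key=lambda f: rates.get(f, unknown), reverse=True)


history = [
    (["cart.py", "styles.css"], True),
    (["cart.py"], True),
    (["styles.css"], False),
    (["auth.py"], False),
]
rates = failure_rates(history)
print(rank_by_risk(["auth.py", "styles.css", "cart.py"], rates))
# ['cart.py', 'styles.css', 'auth.py']
```

A team could then run the tests covering `cart.py` first, or strengthen them before merging, which is the “shore up defenses” step in miniature.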

What sets this approach apart is its departure from rigid, predefined workflows. Traditional automation followed a linear “if-then” logic that struggled to account for the chaotic nature of real-world user interaction. AI-driven ecosystems, by contrast, operate on a probabilistic model. They recognize that a user might navigate a checkout process in twenty different ways, and they generate test permutations that cover these diverse paths automatically. This keeps the testing suite as dynamic as the application it is designed to protect, narrowing the gap between results in a controlled test environment and actual user experience.
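
Covering many navigation paths can be sketched by modeling screens as a directed graph and enumerating every route from entry to goal. This is a minimal, exhaustive depth-first search over a hand-written, hypothetical checkout graph; a probabilistic engine would instead sample or weight paths by how likely users are to take them.

```python
def enumerate_paths(graph, start, goal):
    """Return every simple path (no screen visited twice) from start to goal.

    `graph` maps a screen name to the screens reachable from it.
    """
    paths = []

    def dfs(node, path):
        if node == goal:
            paths.append(list(path))
            return
        for nxt in graph.get(node, []):
            if nxt not in path:  # skip cycles such as shipping -> cart
                path.append(nxt)
                dfs(nxt, path)
                path.pop()

    dfs(start, [start])
    return paths


# Hypothetical checkout flow; screen names are illustrative only.
checkout = {
    "cart": ["login", "guest_checkout"],
    "login": ["shipping"],
    "guest_checkout": ["shipping"],
    "shipping": ["payment", "cart"],
    "payment": ["confirm"],
}
for route in enumerate_paths(checkout, "cart", "confirm"):
    print(" -> ".join(route))
```

Each printed route would become one generated test case, so adding a new branch to the flow (say, a “saved card” screen) grows the suite without anyone writing a new script by hand.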

Core Mechanisms: AI-Enhanced Testing

Adaptive Self-Healing and Visual Stability

The most immediate benefit of AI in automation is a sharp reduction in maintenance overhead through self-healing mechanisms. When a test script encounters a UI change that would typically trigger a failure, the AI engine compares the current DOM (Document Object Model) and visual attributes in real time against those recorded when the test last passed. It selects the most likely candidate for the missing element, continues the test execution, and simultaneously flags the change for human review. This prevents the “suite meltdowns” that historically paralyzed continuous integration pipelines and keeps the development flow moving even during major refactoring.
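
The healing loop described above can be approximated in a few lines. This sketch assumes a page snapshot is a plain dictionary of element IDs to attributes, and it uses `difflib.SequenceMatcher` string similarity as a stand-in for the learned DOM and visual comparison real engines perform; `locate`, `fingerprint`, and the 0.6 threshold are hypothetical.

```python
from difflib import SequenceMatcher

COMPARED_ATTRS = ("class", "name", "tag", "text")  # hypothetical choice of attributes


def fingerprint(attrs):
    """Flatten the compared attributes into one deterministic string."""
    return "|".join(f"{key}={attrs.get(key, '')}" for key in COMPARED_ATTRS)


def locate(page, locator_id, recorded, review_log, threshold=0.6):
    """Find an element by ID, healing to the closest match if the ID is gone.

    `page` maps element IDs to attribute dicts (a simplified DOM snapshot);
    `recorded` holds the attributes captured when the test last passed.
    Every healed lookup is appended to `review_log` for a human to confirm.
    """
    if locator_id in page:
        return locator_id  # original locator still works; nothing to heal
    target = fingerprint(recorded)
    best_id, best_score = None, 0.0
    for element_id, attrs in page.items():
        score = SequenceMatcher(None, target, fingerprint(attrs)).ratio()
        if score > best_score:
            best_id, best_score = element_id, score
    if best_id is None or best_score < threshold:
        raise LookupError(f"no element resembles {locator_id!r}")  # a genuine failure
    review_log.append({"old": locator_id, "new": best_id, "score": round(best_score, 2)})
    return best_id


recorded = {"tag": "button", "text": "Place order", "class": "btn primary", "name": "submit"}
page = {
    "btn-submit-v2": {"tag": "button", "text": "Place order", "class": "btn btn-primary", "name": "submit"},
    "nav-home": {"tag": "a", "text": "Home", "class": "nav", "name": ""},
}
log = []
print(locate(page, "btn-submit", recorded, log))  # btn-submit-v2
print(log)
```

The threshold is the key design choice: set too low, the engine “heals” onto the wrong element and hides a real defect; set too high, it fails as often as a static locator. Logging every heal keeps that trade-off visible to reviewers.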

Visual stability has also evolved from simple pixel comparison to semantic visual analysis. Older tools would flag an error if a font size changed by a single point, leading to thousands of false positives. Modern AI-driven visual testing ignores these trivialities, focusing instead on “functional visual integrity.” It understands that a shift in background color might be an intentional design choice, but a button overlapping a text field is a critical defect. This nuanced interpretation allows QA teams to trust their automated results without having to manually verify every minor visual update.
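
The distinction between a harmless style change and a functional defect can be illustrated with a purely geometric check: instead of diffing pixels, compare the bounding boxes of rendered elements and flag any pair that overlaps. This is a minimal sketch assuming boxes have already been extracted from the page; it ignores color and font entirely, which is why a background change would not trip it.

```python
from itertools import combinations
from typing import NamedTuple


class Box(NamedTuple):
    """Bounding box of a rendered element, in page pixels (origin top-left)."""
    name: str
    left: float
    top: float
    right: float
    bottom: float


def overlaps(a: Box, b: Box) -> bool:
    """True if the two boxes share any area (touching edges do not count)."""
    return a.left < b.right and b.left < a.right and a.top < b.bottom and b.top < a.bottom


def layout_defects(boxes: list[Box]) -> list[tuple[str, str]]:
    """Return every pair of element names whose boxes overlap."""
    return [(a.name, b.name) for a, b in combinations(boxes, 2) if overlaps(a, b)]


rendered = [
    Box("buy_button", 10, 10, 110, 50),
    Box("quantity_field", 100, 40, 200, 70),  # slid under the button after a layout change
    Box("title", 10, 100, 300, 130),
]
print(layout_defects(rendered))  # [('buy_button', 'quantity_field')]
```

A production tool would also need to exempt intentional overlaps, such as a badge placed on an icon, which is where learned models add value over this fixed rule.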

Automated Scenario Generation and Requirement Parsing

The true productivity breakthrough comes from AI’s ability to translate abstract requirements into executable code. Using Large Language Models (LLMs), these systems can ingest natural language documentation or user stories and automatically scaffold the necessary test architecture. This matters because it shrinks the manual translation step where bugs often hide; the generated tests aim to be a literal interpretation of the business logic as written. In effect, the AI acts as a bridge between the product manager’s vision and the technical reality of the codebase.

Furthermore, these systems do not just wait for instructions; they learn from existing user behavior. By analyzing production telemetry and clickstream data, AI-driven tools identify the “golden paths”—the routes most frequently traveled by high-value customers. They then prioritize these paths for deep testing, ensuring that the most critical business functions are always validated first. This data-driven approach to test planning ensures that resources are allocated where they have the most significant impact on revenue and user satisfaction, rather than being spread thin over obscure features.
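
Ranking “golden paths” from clickstream data can be sketched as a weighted count: group sessions by the exact sequence of screens visited and sum the revenue each sequence carried, so paths that are both frequent and valuable rise to the top. The session format and the revenue weighting are assumptions for illustration.

```python
from collections import Counter


def golden_paths(sessions, top_k=3):
    """Return the `top_k` navigation paths carrying the most revenue.

    `sessions` is an iterable of (pages, revenue) pairs, where `pages` is the
    ordered list of screens one user visited (a hypothetical telemetry format).
    Summing revenue rewards paths that are both common and high-value.
    """
    value = Counter()
    for pages, revenue in sessions:
        value[tuple(pages)] += revenue
    return [path for path, _ in value.most_common(top_k)]


sessions = [
    (["home", "search", "product", "checkout"], 120.0),
    (["home", "deals", "product", "checkout"], 40.0),
    (["home", "search", "product", "checkout"], 80.0),
    (["home", "blog"], 0.0),
]
for path in golden_paths(sessions, top_k=2):
    print(" > ".join(path))
```

Weighting by plain visit count instead of revenue would favor popular but low-value paths; the choice of weight is where business priorities enter the test plan.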

Emerging Trends: Technological Innovations

Generative AI has now matured into a reliable “co-pilot” that assists engineers in optimizing their test code. These models do more than write scripts; they analyze the existing test architecture to identify redundancies and suggest more efficient logic. For example, if a test suite spends unnecessary time logging in for every single scenario, the AI can suggest shared-state strategies, such as logging in once and reusing the authenticated session across scenarios, to slash execution times. This level of optimization was previously the work of senior architects, but it is now available at the click of a button for every member of the team.

Moreover, the democratization of testing is reaching a fever pitch through the rise of sophisticated codeless platforms. These are no longer the rudimentary “record and playback” tools of the past. They are intelligent environments that allow domain experts—who may not be coders—to build complex logic using natural language prompts. This is a crucial shift because it allows those who understand the business best to be the ones who verify it. It frees technical engineers to focus on high-level infrastructure and security while the functional validation is handled by those with the deepest context of the product.
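
Under the hood, turning plain-language steps into actions means mapping sentences onto operations. The platforms described here use language models for this; the sketch below swaps in a much simpler keyword-and-regex interpreter to show the idea, with a hypothetical three-sentence vocabulary and a dictionary standing in for the application under test.

```python
import re

# Hypothetical step vocabulary: each pattern maps one plain-English sentence to an action.
ENTER = re.compile(r'^enter "(?P<value>[^"]*)" into (?P<field>\w+)$', re.IGNORECASE)
CLICK = re.compile(r"^click (?P<target>\w+)$", re.IGNORECASE)
EXPECT = re.compile(r'^expect (?P<field>\w+) to be "(?P<value>[^"]*)"$', re.IGNORECASE)


def run_plain_steps(steps: list[str], state: dict) -> dict:
    """Interpret plain-English steps against `state`, a dict standing in for the app."""
    for step in (s.strip() for s in steps):
        if m := ENTER.match(step):
            state[m["field"]] = m["value"]
        elif m := CLICK.match(step):
            state.setdefault("clicked", []).append(m["target"])
        elif m := EXPECT.match(step):
            actual = state.get(m["field"])
            if actual != m["value"]:
                raise AssertionError(f"{m['field']}: expected {m['value']!r}, got {actual!r}")
        else:
            raise ValueError(f"unrecognised step: {step!r}")
    return state


steps = [
    'Enter "2" into quantity',
    "Click add_to_cart",
    'Expect quantity to be "2"',
]
print(run_plain_steps(steps, {}))
```

A fixed vocabulary like this is brittle (“Type 2 in quantity” would be rejected), which is precisely the gap that language-model-based interpretation is meant to close.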

Real-World Applications: Industry Implementation

In sectors where high-frequency releases are the norm, such as e-commerce and fintech, the impact of AI-driven testing is measurable. These organizations have moved from bi-weekly releases to multiple deployments per day without a corresponding spike in defect rates. E-commerce giants, for instance, use visual AI to verify that their storefronts render correctly across thousands of device and browser combinations, a task that would be impractical for a human team and that makes AI an essential component of global scalability.
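
The combinatorial growth behind “thousands of device and browser combinations” is easy to see with `itertools.product`. This sketch uses small, hypothetical device, browser, and locale lists and one assumed compatibility rule (Safari only on Apple platforms); each resulting tuple would be one visual check to schedule.

```python
from itertools import product

# Hypothetical, deliberately small lists; real matrices are far larger.
DEVICES = {"iPhone 15": "ios", "Pixel 8": "android", "MacBook Air": "macos", "Windows laptop": "windows"}
BROWSERS = ["chrome", "firefox", "safari"]
LOCALES = ["en-US", "de-DE", "ja-JP"]
APPLE_PLATFORMS = {"ios", "macos"}


def render_matrix(devices, browsers, locales):
    """Every (device, browser, locale) combination that can actually exist.

    Assumed compatibility rule for illustration: Safari only runs on Apple platforms.
    """
    return [
        (device, browser, locale)
        for (device, platform), browser, locale in product(devices.items(), browsers, locales)
        if browser != "safari" or platform in APPLE_PLATFORMS
    ]


matrix = render_matrix(DEVICES, BROWSERS, LOCALES)
print(len(matrix))  # 30 combinations from just 4 devices, 3 browsers and 3 locales
```

Each added device, browser, or locale multiplies the total again, which is why this verification is handed to automated visual checks rather than people.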

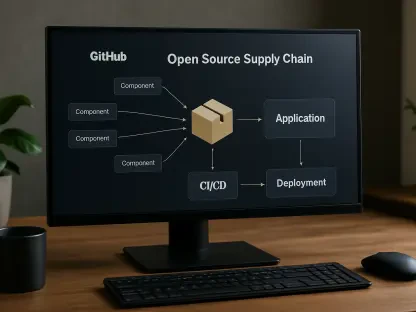

The integration into CI/CD pipelines has also fundamentally changed the nature of the “build” process. In the past, when a build failed, a developer had to dig through logs to find out why. Today, AI-driven systems provide a summarized “root cause analysis” alongside the failure report. They identify the commit most likely to have caused the break and often propose a potential fix. This reduces the “mean time to recovery” (MTTR), allowing teams to stay in a flow state and easing the friction between development and quality assurance.
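
Pointing to the commit that caused a break does not have to involve AI at all; the classic approach is a binary search over the commit history, the same idea behind `git bisect`. The sketch below assumes a hypothetical `is_broken(commit)` callback (for example, one that checks out the commit and reruns the failing test) and that the build stays broken once it breaks.

```python
from typing import Callable, Sequence


def first_bad_commit(commits: Sequence[str], is_broken: Callable[[str], bool]) -> str:
    """Binary-search an oldest-first commit list for the first broken commit.

    Assumes commits[0] is good, commits[-1] is bad, and that once the build
    breaks it stays broken (the same assumption git bisect relies on).
    Needs roughly log2(len(commits)) calls to `is_broken` plus two endpoint checks.
    """
    lo, hi = 0, len(commits) - 1
    if is_broken(commits[lo]) or not is_broken(commits[hi]):
        raise ValueError("expected a good first commit and a bad last commit")
    while hi - lo > 1:  # invariant: commits[lo] is good, commits[hi] is bad
        mid = (lo + hi) // 2
        if is_broken(commits[mid]):
            hi = mid
        else:
            lo = mid
    return commits[hi]


# Hypothetical history: the failing test started failing at commit "c6".
history = [f"c{i}" for i in range(10)]
print(first_bad_commit(history, lambda c: int(c[1:]) >= 6))  # c6
```

An AI layer adds value on top of this by explaining *why* the flagged commit broke the test, but the bisection itself gives a deterministic, auditable answer to *which* commit did.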

Challenges: Technical Hurdles

Despite these advancements, the technology is not without its pitfalls. “AI hallucinations” remain a persistent threat: a model might generate a plausible-looking test script that asserts the wrong behavior or imports libraries that do not exist. This necessitates a “trust but verify” approach, in which humans still sign off on AI-generated assets. There is also the danger of “automation bias,” where teams grow so reliant on the AI that they stop performing the exploratory testing needed to uncover truly unexpected bugs.
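
One cheap guard against hallucinated dependencies is to parse an AI-generated script before running it and check that every module it imports can actually be found. The sketch below uses the standard library’s `ast` module and `importlib.util.find_spec`; it catches non-existent libraries only, so wrong assertions still need human review. The module name in the example is made up on purpose.

```python
import ast
import importlib.util


def missing_imports(source: str) -> list[str]:
    """Return top-level modules imported by `source` that cannot be found.

    Parses the code without executing it; ast.parse raises SyntaxError
    if the generated script is not even valid Python.
    """
    tree = ast.parse(source)
    modules = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            modules.add(node.module.split(".")[0])  # relative imports are skipped
    return sorted(m for m in modules if importlib.util.find_spec(m) is None)


generated = """
import json
import os.path
from hallucinated_testkit_xyz import auto_assert

def test_config_loads():
    auto_assert(json.loads("{}") == {})
"""
print(missing_imports(generated))  # ['hallucinated_testkit_xyz']
```

Running a check like this as a gate in the pipeline turns one class of hallucination into an immediate, readable error instead of a confusing test failure.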

Regulatory and security concerns also present significant obstacles, particularly in data-sensitive fields. Uploading proprietary code or customer data to a third-party AI provider is a non-starter for many organizations. To counter this, the industry is shifting toward “private LLM” deployments: local instances of AI models that run inside a company’s own firewall. This lets organizations reap the benefits of AI without exposing their intellectual property. Managing these local models, however, demands computational resources and expertise that add a new layer of complexity to the IT infrastructure.

Summary of the Technological Evolution

This review of current AI-driven test automation reveals a technology that has moved from experimental novelty to a foundational pillar of modern software engineering. It has mitigated much of the fragility of traditional automation, but it has also introduced a new set of responsibilities centered on model governance and strategic oversight. The primary value proposition has shifted from “saving time on typing” to “providing a higher level of confidence in the final product.” While the tools have become more autonomous, the need for human intuition and strategic thinking has only become more pronounced.

The next steps for organizations involve moving beyond the mere adoption of AI tools toward a unified quality strategy that integrates functional, security, and performance testing into a single autonomous loop. Rather than treating testing as a separate phase that occurs after development, companies should aim for “continuous validation” where the AI monitors the health of the system in real-time, even after deployment. This will require a cultural shift where testers are retrained as AI curators and strategists, focusing on the broader implications of quality rather than the minutiae of script maintenance. The ultimate goal is a self-healing, self-optimizing software lifecycle that anticipates failure before it ever impacts the user.