Breaches of trust no longer stem from classic bugs alone. They also stem from opaque, fast-moving AI behaviors that can misread context, misapply tools, and mislead users at scale before anyone notices. Enterprises, particularly banks, now face a quality mandate that spans model behavior, safety, and governance, which pushes QA into a frontline role. Against this backdrop, Applause sharpened its bet on hybrid AI assurance and named Aatish Salvi as CTO, unifying product and engineering around evaluation, human-in-the-loop validation, and red teaming to keep AI rollouts reliable and auditable.

The company’s stance echoed a broader market shift captured in its State of Digital Quality in Testing AI report: adoption surged, yet production stability lagged. The gap centered on non-determinism, context sensitivity, and agentic autonomy, all of which strain deterministic checks. The industry’s response pointed toward repeatable evaluation frameworks, operationalized oversight, and continuous monitoring as the new baseline for trust.

The AI Assurance Landscape: Scope, Stakes, and Players

Quality now underwrites credibility for both regulated and consumer experiences. Generative and agentic systems shape recommendations, support interactions, and even credit-related workflows; consequently, assurance moved from bug-finding to behavior stewardship. Legacy test scripts proved too brittle when faced with stochastic outputs, prompted reasoning paths, and tool-mediated chains that shift under new data or policies.

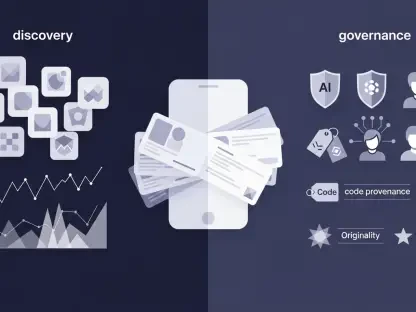

The landscape coalesced into linked segments: model evaluation, safety testing, human-in-the-loop reviews, structured red teaming, and continuous monitoring. Players ranged from specialist QA firms like Applause and pure-play AI safety vendors to cloud platforms and internal platform teams. LLMs, agentic orchestration, tool use, RAG, multimodality, and synthetic data all put pressure on methodology, pushing testing toward scenarios, context, and adversarial probes rather than static coverage.

Financial services illustrated the stakes with high-impact decisioning, stringent auditability, and fraud controls that tolerate little ambiguity. Banks needed documented assurance, runtime controls, and post-incident traceability. Regulatory guidance tightened expectations for explainability, fairness, data privacy, and supervision, setting a higher bar for evaluation depth and for control evidence mapped to policy.

Momentum and Market Signals Shaping AI QA

From Deterministic Testing to Hybrid Assurance: Trends Redefining QA

Deterministic checks gave way to behavior-driven and adversarial evaluations that probe edge cases and stress contextual understanding. Human-in-the-loop review became part of the design rather than an afterthought: domain experts and diverse generalist testers supplied judgment that automation at scale could not reliably replace.
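One way to picture a behavior-driven check for a stochastic system is to sample the same prompt several times and assert on the share of outputs that satisfy a property, rather than on one exact string. The following is a minimal sketch under stated assumptions: `fake_model`, `leaks_no_digits`, the sample count, and the 0.95 threshold are hypothetical stand-ins for illustration, not anything described by Applause or the report.

```python
import random
import re
from typing import Callable


def pass_rate(generate: Callable[[str], str],
              prompt: str,
              check: Callable[[str], bool],
              samples: int = 20) -> float:
    """Call the system `samples` times; return the share of outputs passing `check`."""
    if samples <= 0:
        raise ValueError("samples must be positive")
    passed = sum(1 for _ in range(samples) if check(generate(prompt)))
    return passed / samples


# Hypothetical stand-in for a non-deterministic model (seeded for repeatability).
_rng = random.Random(7)


def fake_model(prompt: str) -> str:
    return _rng.choice([
        "Your balance is available in the app.",
        "Please sign in to view your balance.",
        "I can help with that after you verify your identity.",
    ])


def leaks_no_digits(output: str) -> bool:
    """Property: the reply must not contain a long digit run resembling an account number."""
    return re.search(r"\d{8,}", output) is None


rate = pass_rate(fake_model, "What is my balance?", leaks_no_digits, samples=50)
assert rate >= 0.95  # hypothetical release threshold, chosen for illustration
```

The design choice here is to test a property of the behavior across many samples, which tolerates varied wording while still failing when an unsafe pattern appears too often.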

LLM-as-judge systems emerged as a way to amplify human review when kept under guardrails, while real-world validation across devices, locales, and networks exposed failures masked in labs. Organizations made continuous evaluation and regression testing a production norm. Governance fused with QA via policy enforcement, evaluation pipelines, model cards, and incident response, supporting controlled rollouts with explainability and safety gates.
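A safety gate in a continuous evaluation pipeline can be pictured as a comparison between a candidate release's evaluation scores and a stored baseline. This is a hedged sketch only: the metric names, the scores, and the 0.02 tolerance are assumptions made up for the example, and a real pipeline would define its own metrics and thresholds.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class GateResult:
    passed: bool
    regressions: Dict[str, float]  # metric name -> size of the drop


def regression_gate(baseline: Dict[str, float],
                    candidate: Dict[str, float],
                    tolerance: float = 0.02) -> GateResult:
    """Flag any baseline metric whose candidate score dropped by more than `tolerance`.

    A metric missing from `candidate` is treated as a score of 0.0, so it fails the gate.
    """
    regressions: Dict[str, float] = {}
    for metric, base_score in baseline.items():
        drop = base_score - candidate.get(metric, 0.0)
        if drop > tolerance:
            regressions[metric] = round(drop, 4)
    return GateResult(passed=not regressions, regressions=regressions)


# Hypothetical scores from two evaluation runs.
baseline_scores = {"groundedness": 0.91, "safety": 0.99}
candidate_scores = {"groundedness": 0.85, "safety": 0.99}

result = regression_gate(baseline_scores, candidate_scores)
if not result.passed:
    print("Rollout blocked; regressions:", result.regressions)
```

Treating a missing metric as a failure is a deliberate choice in this sketch, so that a release cannot pass simply because an evaluation was skipped.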

Numbers That Matter: Adoption, Spend, and Forecasts

More than half of organizations shipped AI features, yet over half of AI efforts stalled before stable production under the weight of integration issues, cost, and unresolved quality risks. Human evaluation remained central, with 61% relying on expert judgment to assess performance and safety.

LLM-as-judge adoption rose as a force multiplier but fell short as a stand-alone evaluator. Budgets signaled growing allocation to AI-native QA tools, red teaming, and validation services, especially in banking. Demand concentrated on repeatable frameworks, cross-functional red teams, and monitoring aligned with regulatory audits to accelerate safe scale.

Gaps, Risks, and How Applause Proposes to Close Them

The assurance gap widened as non-determinism, context dependence, and agentic autonomy outran legacy methods. Failures in the wild included hallucinations, unsafe outputs, prompt misreads, bias, and brittle tool-use chains. Organizations also wrestled with speed-to-market pressures, underbuilt evaluation assets, thin scenario coverage, and fragile rollback and guardrails.

Applause advanced a response anchored by leadership and operational rigor. The appointment of Aatish Salvi as CTO signaled tighter alignment of product and technology with AI assurance. Its hybrid approach blended automated tooling, AI-based evaluation, and human testers in real environments to capture emergent behaviors. Expanded services focused on model evaluation, domain expert validation, and structured red teaming, coupled with continuous evaluation pipelines and behavior-driven tests mapped to governance needs.

Compliance at the Core: Evolving Rules Shaping AI Quality

AI-specific laws, privacy mandates, anti-discrimination rules, and sectoral banking oversight reframed quality as control evidence. Banks faced expectations to demonstrate control over behavior, lineage, monitoring, explainability, and remediation, supported by model cards, evaluation protocols, human oversight records, and audit trails.

Security and resilience entered the QA charter: prompt injection defenses, jailbreak resistance, supply chain integrity, and incident playbooks. In practice, QA aligned with risk, compliance, and security functions; red teaming was formalized as a control; and runtime guardrails and policy engines became table stakes for satisfying supervisory expectations without throttling innovation.

What’s Next for AI QA in Financial Services and Beyond

Emerging technologies pointed to agentic testing harnesses, synthetic users, auto-curricula for stress testing, and multimodal evaluators that examine voice, image, and text together. Orchestration frameworks, policy engines, unified eval stores, and safety-as-a-service platforms stood to reshape buying criteria.

Buyers showed preference for measurable risk reduction, auditable pipelines, domain-specific expertise, and global testing coverage. Growth clustered in decisioning systems, customer support agents, onboarding and KYC flows, and personalized experiences under strict controls. Regulatory tightening, budget scrutiny, and accelerating platform innovation pushed organizations toward continuous assurance as the operating norm.

Strategic Takeaways and Recommendations

The report’s throughline was that deployment speed had outpaced assurance maturity, and that hybrid methods with stronger governance formed the new standard. Banks that treated QA as a frontline control improved resilience and compliance, institutionalized human-in-the-loop reviews, and stood up cross-functional red teams on repeatable evaluation frameworks tailored to audits.

Technology leaders paired LLM-as-judge with human oversight and solid ground-truth datasets, shifted to behavior-driven testing with continuous monitoring and real-world validation, and installed feedback loops for prompt and design iteration with safe rollback. Vendors, including Applause, delivered hybrid offerings that combined scale, judgment, and auditability, supplied domain-calibrated evaluation assets and transparent metrics, and invested in agentic evaluation, safety tooling, and integrations with enterprise risk systems.
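Pairing an automated judge with human oversight and ground truth might look like the sketch below: measure how often the judge agrees with human labels, then route low-confidence verdicts to reviewers. Everything named here (`Judged`, `agreement`, `triage`, the 0.7 and 0.8 cut-offs, and the sample data) is hypothetical and illustrative; the judge itself is stubbed as precomputed scores because the source does not describe a specific judge model or API.

```python
from typing import List, NamedTuple, Sequence, Tuple


class Judged(NamedTuple):
    item_id: str
    score: float       # judge's quality score in [0, 1] (precomputed stub)
    confidence: float  # judge's self-reported confidence in [0, 1]


def agreement(judge_labels: Sequence[str], human_labels: Sequence[str]) -> float:
    """Share of items where the judge's label matches the human ground-truth label."""
    if not human_labels or len(judge_labels) != len(human_labels):
        raise ValueError("label lists must be non-empty and the same length")
    matches = sum(j == h for j, h in zip(judge_labels, human_labels))
    return matches / len(human_labels)


def triage(items: Sequence[Judged],
           pass_score: float = 0.7,
           min_confidence: float = 0.8) -> Tuple[List[str], List[str], List[str]]:
    """Split items into auto-pass, auto-fail, and human-review queues."""
    auto_pass: List[str] = []
    auto_fail: List[str] = []
    needs_human: List[str] = []
    for item in items:
        if item.confidence < min_confidence:
            needs_human.append(item.item_id)  # judge is unsure: a person decides
        elif item.score >= pass_score:
            auto_pass.append(item.item_id)
        else:
            auto_fail.append(item.item_id)
    return auto_pass, auto_fail, needs_human


# Hypothetical calibration set and a batch of judged responses.
calibration = agreement(["pass", "fail", "pass", "pass"],
                        ["pass", "fail", "fail", "pass"])
batch = [Judged("a", 0.9, 0.95), Judged("b", 0.4, 0.9), Judged("c", 0.8, 0.5)]
auto_pass, auto_fail, needs_human = triage(batch)
```

A team could use the agreement figure to decide how much of the judge's output to trust before widening the automated path, keeping humans on the cases the judge is least sure about.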

Taken together, these moves positioned organizations to convert promising prototypes into reliable, compliant production assets. The path forward favored automation for speed guided by domain-informed human judgment, and the market’s winners committed to operationalized assurance that documented, monitored, and corrected AI behavior as conditions evolved.