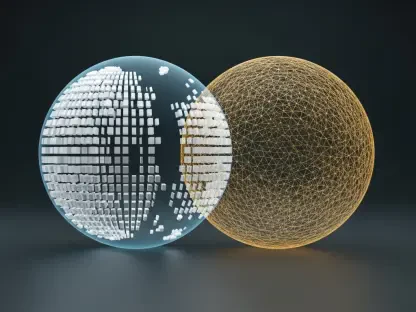

The days of engineering teams waking up at three in the morning to manually scale server clusters are rapidly vanishing as intelligence embeds itself directly into the fabric of the modern data center. This fundamental shift represents more than just a minor upgrade to existing toolsets; it is a total reimagining of how digital systems sustain themselves. While the initial wave of cloud computing focused on the agility of virtual machines and containers, the current phase involves a move toward autonomous, self-optimizing environments where software manages software.

At the heart of this transformation lies the intersection of microservices, Kubernetes, and sophisticated machine learning frameworks. Industry stakeholders, led by organizations like the Cloud Native Computing Foundation, are working toward standardizing how AI models interact with core infrastructure components. These efforts ensure that as automation takes the wheel, it does so within rigorous compliance and governance frameworks. Automated resource allocation and data privacy are no longer afterthoughts but are built into the very logic of the orchestration layer to prevent the chaos of unmonitored algorithmic decisions.

Mapping the Evolution of AI-Driven Cloud Ecosystems

Emerging Technological Drivers and the Rise of Predictive Scaling

Modern infrastructure has moved beyond reactive threshold-based scaling, where a system adds capacity only after a metric such as CPU utilization has already crossed a set limit. Instead, the industry is embracing proactive, model-driven capacity planning that uses historical data to anticipate demand spikes before they happen. This predictive approach aims to have resources ready by the time they are needed, maintaining a seamless experience for the end user. Generative AI has further accelerated this evolution by automating complex security audits and speeding up the development lifecycle through intelligent code assistance.
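The difference between reactive and predictive scaling can be made concrete with a minimal sketch. It assumes a simple linear extrapolation of recent request rates as a stand-in for a trained forecasting model; the function names, the per-replica capacity, and the 20% headroom are all hypothetical values chosen for illustration.

```python
import math


def forecast_next(history, window=3):
    """Extrapolate the next sample from the average step over the last `window` samples.

    A deliberately simple stand-in for a real forecasting model.
    """
    recent = history[-window:]
    if len(recent) < 2:
        return float(recent[-1])
    # Average change between consecutive samples in the window.
    trend = (recent[-1] - recent[0]) / (len(recent) - 1)
    return recent[-1] + trend


def replicas_for(load, capacity_per_replica, headroom=0.2):
    """Replicas needed to serve `load` with a fractional safety margin."""
    return math.ceil(load * (1 + headroom) / capacity_per_replica)


if __name__ == "__main__":
    requests_per_second = [100, 120, 140, 160]  # hypothetical history
    predicted = forecast_next(requests_per_second)
    # Reactive sizing looks only at the current load; predictive sizing
    # provisions for the forecast load before it arrives.
    print("reactive:", replicas_for(requests_per_second[-1], 50, headroom=0.0))
    print("predictive:", replicas_for(predicted, 50))
```

With this sample history the predictive path provisions one more replica than the reactive path, because it sizes for the forecast load rather than the load already observed.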

Consumer and enterprise expectations have concurrently shifted toward a standard of zero downtime and instantaneous service elasticity. As businesses compete in a global market, the ability to scale without friction becomes a significant competitive advantage. This demand for constant availability forces cloud-native systems to become more resilient and adaptive, using machine learning to balance workloads across geographically distributed regions without manual intervention from a DevOps team.

Market Growth Projections and the Expanding Footprint of AIOps

Reported results point to a substantial impact, with early adopters citing a 60% reduction in mean time to resolution (MTTR) through the use of intelligent automation. By filtering out the noise of thousands of irrelevant alerts, AI-driven operations allow engineers to focus on critical issues. This efficiency translates directly into economic gains, with forecasts indicating that global enterprises adopting AI-enhanced Kubernetes clusters can expect a reduction in operational expenditure of between 20% and 40% over the next two years.
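The alert-noise reduction described above can be illustrated with a small sketch. It uses plain rules (a severity cutoff plus deduplication by service and symptom) rather than a learned model, so it is only an approximation of what an AIOps platform would do; the `Alert` fields and the 1-to-5 severity scale are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    service: str
    symptom: str
    severity: int  # assumed scale: 1 = informational, 5 = critical


def reduce_alerts(alerts, min_severity=4):
    """Drop low-severity alerts and collapse duplicates into one entry with a count."""
    grouped = {}
    for alert in alerts:
        if alert.severity < min_severity:
            continue  # treated as noise in this sketch
        key = (alert.service, alert.symptom)
        grouped[key] = grouped.get(key, 0) + 1
    return grouped


if __name__ == "__main__":
    raw = [
        Alert("checkout", "high_latency", 5),
        Alert("checkout", "high_latency", 5),
        Alert("search", "cache_miss", 2),
        Alert("checkout", "high_latency", 4),
        Alert("auth", "error_rate", 4),
    ]
    for (service, symptom), count in reduce_alerts(raw).items():
        print(f"{service}: {symptom} (x{count})")
```

Five raw alerts collapse into two actionable entries here, which is the kind of reduction that lets an on-call engineer see the critical issue first.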

Adoption of these systems is climbing as the technical barriers to entry continue to fall. As more organizations migrate their critical workloads to cloud-native stacks, the integration of AIOps becomes less of a luxury and more of a requirement for survival. This growth is mirrored in the financial and retail sectors, where the volatility of traffic requires the kind of precision that only an automated, data-driven management system can provide.

Overcoming Complexity and Technical Hurdles in Autonomous Systems

One of the most persistent challenges in modern cloud environments is the sheer volume of telemetry data produced by high-velocity systems. Normalizing signals from disparate logs and metrics is essential to prevent data noise from overwhelming the underlying machine learning models. Without clean, standardized data, even the most advanced models can raise false alarms or draw incorrect conclusions, leading to potentially disastrous infrastructure decisions.
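As a rough illustration of what normalizing disparate signals involves, the sketch below maps two hypothetical record shapes (a log line reporting latency in milliseconds and a metric sample reporting it in seconds) onto one schema. The field names `svc`, `service`, `latency_ms`, and `latency_s` are invented for this example and do not come from any particular telemetry standard.

```python
def normalize(record):
    """Map a raw record from either source into one schema.

    Output: {"service": lowercase name, "latency_s": latency in seconds}.
    Returns None for records that carry no usable latency value.
    """
    name = record.get("svc") or record.get("service")
    if name is None:
        return None
    if record.get("latency_ms") is not None:
        latency_s = record["latency_ms"] / 1000.0
    elif record.get("latency_s") is not None:
        latency_s = float(record["latency_s"])
    else:
        return None
    return {"service": name.strip().lower(), "latency_s": latency_s}


def normalize_all(records):
    """Normalize a batch, discarding records that cannot be interpreted."""
    cleaned = (normalize(r) for r in records)
    return [r for r in cleaned if r is not None]


if __name__ == "__main__":
    mixed = [
        {"svc": "Checkout", "latency_ms": 250},       # log-style record
        {"service": "checkout ", "latency_s": 0.5},   # metric-style record
        {"service": "search"},                        # missing value, dropped
    ]
    print(normalize_all(mixed))
```

Dropping unusable records is one possible policy; a production pipeline might instead route them to a quarantine stream so that gaps in the data remain visible.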

Furthermore, the scarcity of GPU resources and specialized hardware orchestration remains a significant bottleneck for companies running heavy AI workloads. Solving this requires a high degree of technical sophistication in how Kubernetes schedules tasks and manages hardware accelerators. Bridging the cultural gap is equally important; traditional DevOps teams must learn to trust automated systems while developing new skills in model oversight and algorithmic auditing to ensure that the automation remains aligned with business goals.

Navigating the Regulatory and Security Landscape of Automated Clouds

Artificial intelligence now plays a pivotal role in real-time threat detection and the automated enforcement of policies within containerized environments. As cyber threats become more sophisticated, the speed of human response is no longer sufficient to protect distributed applications. Automated security layers can identify suspicious traffic patterns and isolate compromised containers in milliseconds, significantly narrowing the window of opportunity for an attacker to move laterally through a network.
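One simple way to flag suspicious traffic, sketched below, is to compare each container's request rate with the fleet median using the median absolute deviation (MAD), which is less distorted by a single extreme value than a mean and standard deviation. This is a statistical stand-in for the learned detectors the paragraph describes; the threshold of 5.0 and the container names are assumptions, and the isolation step itself is left out.

```python
from statistics import median


def find_suspicious(rates, threshold=5.0):
    """Return names whose rate deviates from the median by more than `threshold` MADs.

    `rates` maps a container name to its observed requests per second.
    """
    if not rates:
        return []
    med = median(rates.values())
    mad = median(abs(rate - med) for rate in rates.values())
    if mad == 0:
        return []  # no spread to measure against; nothing is flagged
    return sorted(
        name for name, rate in rates.items()
        if abs(rate - med) / mad > threshold
    )


if __name__ == "__main__":
    observed = {"web-1": 100, "web-2": 110, "web-3": 95, "web-4": 105, "web-5": 900}
    # Containers returned here would be candidates for isolation.
    print(find_suspicious(observed))
```

In this sample only `web-5` is flagged, since its rate sits far outside the spread of the other four containers.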

Managing compliance and data sovereignty remains a complex task, especially as MLOps pipelines often move data across international borders. Organizations must navigate emerging global AI regulations that demand higher levels of transparency and accountability for automated decision-making. Avoiding the black-box problem is critical; cloud-native tools are evolving to provide better explainability, ensuring that every automated resource adjustment or security block can be audited and justified to regulatory bodies.
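Auditability of automated decisions usually comes down to recording what was done, on what evidence, and by which model version. The sketch below shows one possible shape for such a record, serialized as a JSON line; the `DecisionRecord` fields are hypothetical and are not drawn from any specific regulation or tool.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionRecord:
    timestamp: str       # ISO 8601 string supplied by the caller
    action: str          # e.g. "scale" or "block"
    target: str          # resource the action applied to
    inputs: dict         # the signals the decision was based on
    reason: str          # human-readable justification
    model_version: str   # which model produced the decision


def to_audit_line(record):
    """Serialize a decision as one JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = DecisionRecord(
        timestamp="2025-01-01T03:00:00Z",
        action="scale",
        target="deployment/checkout",
        inputs={"forecast_rps": 180.0, "current_replicas": 4},
        reason="forecast load exceeds capacity of current replicas",
        model_version="forecaster-0.1",
    )
    print(to_audit_line(record))
```

Keeping the inputs alongside the action is the design choice that matters here: it lets a reviewer reconstruct why the system acted without rerunning the model.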

The Future of Sovereign Clouds and Fully Autonomous Operations

The industry is moving toward a No-Ops environment where self-healing protocols manage an application's operational lifecycle, from detection and root-cause analysis to remediation. This vision relies on the integration of GitOps with AI, creating a unified and version-controlled source of truth for the entire infrastructure. By combining the transparency of Git-based workflows with the adaptive power of machine learning, organizations can achieve a level of stability that was previously impossible.
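The core of a GitOps workflow is a reconciliation step that compares the desired state held in version control with the state actually running and derives the actions needed to close the gap. The sketch below models both states as plain dictionaries of workload name to replica count, which is a simplification for illustration; in an AI-assisted setup, a model's recommendation would be expressed as a change to the desired state rather than applied directly to the cluster.

```python
def plan(desired, actual):
    """Return the actions that move `actual` toward `desired`.

    Both arguments map a workload name to its replica count.
    Each action is a tuple: (verb, workload name, target replicas).
    """
    actions = []
    for name, replicas in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name, replicas))
        elif actual[name] != replicas:
            actions.append(("scale", name, replicas))
    # Anything running that is absent from the desired state is removed.
    for name in sorted(set(actual) - set(desired)):
        actions.append(("delete", name, 0))
    return actions


if __name__ == "__main__":
    desired_state = {"api": 3, "web": 2}     # as committed to the repository
    running_state = {"api": 1, "worker": 4}  # as observed in the cluster
    for action in plan(desired_state, running_state):
        print(action)
```

Because every change flows through the desired state, each automated adjustment leaves a reviewable, revertible entry in version history.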

Over the long term, AI is transitioning from being a specialized workload that runs on the cloud to becoming the foundational logic that dictates how the cloud itself functions. Sovereign clouds are increasingly utilizing these autonomous protocols to ensure that data localized within specific regions is managed according to local laws without sacrificing the efficiency of global automation. This transition marks the end of the cloud as a passive utility and its emergence as an intelligent partner in the business process.

Summarizing the Paradigm Shift Toward AI-Native Operations

The convergence of AIOps and MLOps has fundamentally redefined the limits of what digital systems can achieve in terms of scalability and resilience. Organizations that recognize the importance of cultural adaptability and foundational infrastructure maturity are the ones most likely to thrive in this new landscape. The shift is not merely about replacing human effort but about elevating it, allowing teams to move away from mundane maintenance and toward the high-value strategic innovations that drive growth.

Forward-thinking enterprises prioritize the implementation of intelligent cloud-native tools as a competitive necessity rather than a speculative experiment. By investing in standardized data pipelines and robust orchestration layers, they build a framework capable of supporting the next generation of autonomous applications. The successful integration of these technologies shows that the future of the cloud lies in its ability to think, learn, and act with a degree of precision that human operators alone could never hope to match.