While raw processing power often grabs the headlines, the true gatekeeper of artificial intelligence today is the silent exhaustion of high-speed memory. As large language models expand their reach into every facet of enterprise operations, they have collided with a physical reality known as the “memory wall,” where the cost of storing data during a conversation exceeds the cost of calculating the response. Google TurboQuant enters this fray not as a faster engine, but as a more efficient fuel system, promising to dismantle the economic barriers that have historically made long-form AI interactions prohibitively expensive. This technology represents a pivot from the pursuit of sheer model size toward a sophisticated refinement of inference architecture.

Understanding Google TurboQuant: The New Frontier of Inference Optimization

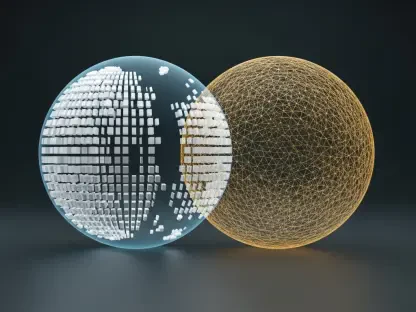

At its core, TurboQuant is an optimization framework designed to maximize the utility of existing AI accelerators by changing how data is handled during the inference phase. When a model generates text, it must store a large amount of temporary information to keep track of the conversation’s context. This bookkeeping is necessary, but it places a heavy drag on system resources. TurboQuant addresses the problem with quantization techniques that shrink the numerical representation of that data while aiming to preserve the nuanced “understanding” the model possesses.
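To make the idea of shrinking a numerical representation concrete, here is a minimal sketch of plain uniform quantization in Python. It is not TurboQuant's actual algorithm, which this article does not describe in detail; the function names, the 4-bit width, and the sample values are all assumptions chosen for illustration.

```python
def quantize(values, bits=4):
    """Map floats to integer codes in [0, 2**bits - 1] using one scale and one offset."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels or 1.0  # fall back to 1.0 when all values are equal
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize(codes, scale, lo):
    """Recover approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]


# Illustrative values only; real cache entries are high-dimensional vectors.
values = [-1.20, -0.35, 0.0, 0.48, 0.91, 1.60]
codes, scale, lo = quantize(values, bits=4)
restored = dequantize(codes, scale, lo)

# Rounding to the nearest level bounds the error at half a quantization step.
max_error = max(abs(a - b) for a, b in zip(values, restored))
print(codes)
print(max_error <= scale / 2 + 1e-9)
```

Each value now needs 4 bits instead of 16 or 32, at the price of a bounded rounding error. Production schemes differ mainly in how they keep that error from distorting the model's output.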

The emergence of this technology is particularly relevant as the industry moves away from simple prompt-and-response interactions toward complex, multi-layered workflows. By addressing the economic challenges of scaling, Google is providing a blueprint for how organizations can deploy high-performance models like Gemma and Mistral on hardware that would otherwise be overwhelmed. It is a strategic response to the reality that simply buying more GPUs is no longer a viable path to growth for most businesses.

Core Technologies and High-Performance Components

KV Cache Compression and Memory Efficiency

The primary innovation within TurboQuant lies in its handling of the Key-Value (KV) cache, the specific memory segment that stores the history of a session. In standard configurations, this cache experiences a “memory blow-up” as the context length increases, effectively capping how many users a single chip can serve. TurboQuant utilizes aggressive compression algorithms to reduce this footprint by up to 6x. This does not just save space; it fundamentally alters the concurrency math for data centers, allowing for more simultaneous “agentic” tasks per accelerator without a drop in performance.
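The concurrency math can be worked through with a back-of-the-envelope calculation. The sketch below uses the standard KV cache size estimate (two tensors per layer, one for keys and one for values). The model shape, context length, and memory budget are hypothetical figures picked for round numbers, and the 6x factor is the best-case figure quoted above.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value):
    """Estimate KV cache size for one session."""
    # Factor of 2: one key vector and one value vector per token, layer, and head.
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


GIB = 1024 ** 3

# Hypothetical model and deployment figures (assumptions, not from any vendor spec).
per_session = kv_cache_bytes(
    layers=32, kv_heads=8, head_dim=128, tokens=32_768, bytes_per_value=2
)
cache_budget = 40 * GIB  # accelerator memory left for the cache after model weights
compression = 6          # best case of the "up to 6x" claim

sessions_before = cache_budget // per_session
sessions_after = cache_budget * compression // per_session

print(per_session / GIB)                # 4.0
print(sessions_before, sessions_after)  # 10 60
```

Under these assumptions a single accelerator goes from 10 concurrent long-context sessions to 60. Real deployments would see smaller gains whenever the achieved compression falls short of the best case.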

Accelerated Vector Search and Attention-Logit Computation

Beyond simple storage, the framework optimizes the actual retrieval process through enhanced vector search capabilities. On specialized hardware like the Nvidia H100, TurboQuant is reported to achieve a speedup of up to 8x in attention-logit computation, which is the mathematical heart of how a model decides which parts of an input are important. For Retrieval-Augmented Generation (RAG) systems, this means the AI can sift through massive external databases and integrate that information into its response with far less delay. This speed is critical for maintaining the illusion of a seamless, human-like interaction in high-stakes professional environments.
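For readers unfamiliar with the term, an attention logit is the scaled dot product between the current query vector and one cached key vector. The sketch below computes logits twice, once from full-precision keys and once from keys stored as 8-bit integers with a per-key scale. It illustrates the general idea of scoring against compressed keys and makes no claim about TurboQuant's kernels or its speedup; every name and value is an assumption.

```python
import math


def attention_logits(query, keys):
    """Scaled dot-product scores: one logit per cached key."""
    d = len(query)
    return [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]


def quantize_row(row, bits=8):
    """Symmetric quantization of one key to signed integer codes plus a float scale."""
    qmax = (1 << (bits - 1)) - 1
    scale = max(abs(x) for x in row) / qmax or 1.0  # 1.0 guards an all-zero row
    return [round(x / scale) for x in row], scale


def quantized_logits(query, quantized_keys):
    """Same scores, computed from integer codes; the scale is applied once per key."""
    d = len(query)
    return [
        scale * sum(q * c for q, c in zip(query, codes)) / math.sqrt(d)
        for codes, scale in quantized_keys
    ]


# Toy 4-dimensional vectors; real models use head dimensions of 64 or more.
query = [0.5, -1.0, 0.25, 2.0]
keys = [[1.0, 0.0, -0.5, 0.75], [-0.25, 1.5, 0.5, -1.0], [0.1, -0.2, 0.3, 0.9]]

exact = attention_logits(query, keys)
approx = quantized_logits(query, [quantize_row(k) for k in keys])

print(exact)  # [0.9375, -1.75, 1.0625]
print(max(abs(a - b) for a, b in zip(exact, approx)))
```

The approximate logits rank the keys in the same order as the exact ones, which is what matters for deciding where attention goes. Reading smaller keys from memory is the usual source of speedups in this kind of computation.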

Emerging Trends: The Shift Toward Inference Economics

The industry is currently witnessing a tectonic shift where “inference economics” has replaced parameter counts as the primary metric of success. The era of building increasingly massive models is giving way to an era of efficiency, where the goal is to extract the maximum possible value from every watt of power and every gigabyte of VRAM. TurboQuant is a direct product of this trend, reflecting a market that values hardware utilization and cost-effective scaling over the raw size of a neural network.

Real-World Applications and Industrial Deployment

In practical terms, this technology transforms the feasibility of complex AI workflows, such as the automated analysis of hundreds of pages of legal or financial documentation. Before such optimizations, keeping that much information “in mind” would have been too costly for a standard enterprise deployment. By applying TurboQuant to open-weight models, developers are now able to create persistent AI agents that remember past interactions over days or weeks, turning a temporary tool into a long-term digital collaborator.

Technical Hurdles and Market Obstacles

Despite its impressive metrics, the path to universal adoption is not without friction. Integrating a high-performance framework like TurboQuant into the messy, fragmented reality of diverse enterprise software stacks remains a significant challenge. Furthermore, while Google claims zero-loss accuracy, the reality of model quantization often involves a delicate balancing act. Ensuring that specialized, domain-specific models maintain their precision after such aggressive compression requires constant tuning and a deep understanding of varied model architectures.

The Future of Lean AI: Long-Term Outlook

The trajectory of this technology suggests a move toward “unlimited” context windows where the physical limits of hardware no longer dictate the depth of an AI’s memory. As these optimization techniques mature, we will likely see the democratization of high-end AI, moving from massive data centers to mobile and edge devices. This shift would allow sophisticated, localized intelligence to run on hardware that currently lacks the capacity for large-scale inference, effectively putting a personal super-assistant in every pocket.

Final Assessment: Solving the Memory Bottleneck

The introduction of TurboQuant signals a turn away from the brute-force era in AI development. By cutting KV cache memory consumption by up to 6x and lowering the entry bar for high-concurrency applications, the framework suggests that architectural ingenuity can ease the physical limitations of silicon. Decision-makers should now look toward hybrid deployment strategies that use these compression gains to reduce operational overhead while expanding the scope of their AI initiatives. Future implementations will likely move toward automated, hardware-aware quantization that self-optimizes based on the specific task at hand, which could make the “memory wall” a relic of early-stage AI development. Investment in talent capable of managing these leaner, more complex stacks will be the next logical step for firms seeking to turn technical efficiency into market advantage.