The Global Pivot Toward Open-Source AI and China’s Strategic Positioning

The global struggle for technological supremacy has moved beyond proprietary software silos toward a decentralized battlefield where open-source accessibility defines the new boundaries of influence. While early iterations of large-scale artificial intelligence were characterized by gated ecosystems and high subscription costs, the current landscape is increasingly defined by democratized access to high-performance models. China has recognized this shift earlier than many of its counterparts, positioning itself as a central hub for open-source development to circumvent trade restrictions and foster a global dependency on its domestic architectures. This strategic pivot allows Chinese firms to leverage the collective intelligence of global developers while simultaneously establishing their own technical standards as the baseline for the next generation of digital tools.

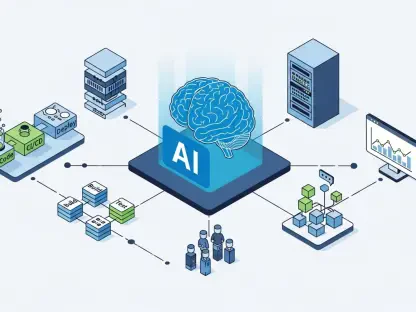

The artificial intelligence industry currently operates across several specialized segments, ranging from natural language processing to computer vision and predictive analytics. Major technological influences now include the rise of small, efficient models that can run on consumer-grade hardware, reducing the need for massive, centralized server farms. While Western market players remain leaders in absolute computational power, Chinese entities such as Alibaba, Baidu, and a host of agile startups are flooding the market with versatile, open-weight models. These developments occur within a complex regulatory environment where data privacy laws and safety standards are constantly evolving, forcing a delicate balance between rapid innovation and national security requirements.

The Dual Engines of Growth: Strategic Accessibility and Market Expansion

Shifting Paradigms from Proprietary Dominance to Open-Source Flexibility

The prevailing trend in the industry is a marked move away from “black box” systems toward transparent, modifiable architectures that offer greater flexibility to enterprise users. Businesses no longer wish to be locked into a single provider’s pricing structure or development roadmap; instead, they seek the ability to fine-tune models on their own proprietary data within secure environments. This shift has empowered a new wave of innovation where customization is valued over raw size, allowing emerging technologies like edge computing and federated learning to thrive. As consumer behaviors evolve, the demand for AI that is localized, culturally relevant, and cost-effective has become a primary market driver.

Moreover, the transition to open-source models has fundamentally altered the competitive landscape by lowering the financial barriers to entry for startups and smaller nations. New opportunities are arising in sectors such as agriculture, urban planning, and personalized medicine, where bespoke AI solutions are more effective than general-purpose tools. By providing the underlying framework for these applications, China is creating a massive ecosystem of developers who are already familiar with Chinese technical conventions and infrastructure. This grassroots adoption creates a powerful network effect that is difficult for proprietary competitors to dismantle, as the sheer volume of users ensures continuous improvement and troubleshooting of the open-source codebase.

Quantifying the Global South’s Adoption and Future Market Projections

Recent market data indicates that adoption rates for accessible AI frameworks are growing most rapidly in developing economies, where traditional enterprise licenses are often prohibitively expensive. The market share for open-source AI in Southeast Asia and Africa is projected to grow at an annual rate of nearly thirty percent from 2026 to 2028. These regions represent a significant portion of the future global workforce, and their reliance on specific technological stacks will dictate the flow of international trade and digital services. Performance indicators show that Chinese-led models frequently outperform their Western counterparts in localized linguistic tasks, further cementing their status as the preferred choice for non-English-speaking markets.

Forward-looking forecasts suggest that by the end of the current decade, the majority of the world’s AI-driven infrastructure will be built on foundations that are either fully open-source or derived from open-weight models. The proliferation of these systems is expected to trigger a secondary market for specialized hardware designed specifically to optimize these public architectures. As more countries integrate these tools into their national digital strategies, the demand for localized data centers and cloud services will skyrocket. This trajectory indicates that the centers of technological gravity are shifting toward regions that prioritize wide-scale distribution and collaborative development over exclusive ownership of intellectual property.

Navigating Structural Obstacles and Technical Bottlenecks

Despite the rapid expansion of open-source initiatives, the industry faces significant hurdles related to hardware availability and the quality of training data. Technical bottlenecks often arise when attempting to scale open-source models to match the logic and reasoning capabilities of their closed-source rivals, which benefit from specialized, high-bandwidth computing clusters. Furthermore, the global semiconductor supply chain remains a point of friction, as trade policies and export controls can limit the ability of certain regions to access the high-end chips necessary for training the most advanced iterations of these models. Strategies to overcome these issues include the development of more efficient training algorithms and the utilization of synthetic data to supplement real-world information.

Regulatory complexities also present a challenge, as different jurisdictions have vastly different requirements for AI safety, transparency, and copyright. Navigating this fragmented legal landscape requires a sophisticated approach to compliance that does not stifle the inherent speed of open-source collaboration. Many organizations are turning to automated auditing tools and standardized safety protocols to ensure their models meet international benchmarks without needing to undergo lengthy, manual review processes. Solving these structural obstacles is essential for maintaining public trust and ensuring that the benefits of artificial intelligence are distributed equitably across different socioeconomic strata.

The Regulatory Framework and Technological Sovereignty

The regulatory landscape is currently undergoing a period of intense scrutiny as nations strive to protect their digital borders while remaining competitive in the global economy. Significant laws concerning data residency and algorithmic accountability have forced developers to rethink how they distribute and maintain their models. For many governments, the concept of technological sovereignty—maintaining control over the core technologies that run their society—has become a top priority. This has led to the implementation of standards that favor open-source models, as they allow for greater transparency and national oversight than proprietary systems controlled by foreign corporations.

Compliance in this new era involves more than just adhering to privacy rules; it requires a proactive stance on security measures and the prevention of malicious use. Industry practices are shifting toward the adoption of robust watermarking for AI-generated content and the implementation of rigorous testing environments to identify potential biases or vulnerabilities. As standards become more uniform across major trading blocs, the ability to demonstrate a commitment to ethical AI development will become a key differentiator for market players. Those who can navigate these regulatory requirements while still providing high-performance, accessible technology will be best positioned to lead the market.

The Future Trajectory of AI as National Infrastructure

Artificial intelligence is rapidly evolving from a niche software category into a foundational element of national infrastructure, akin to power grids or transportation networks. Future growth areas are likely to center on the integration of AI into public services, where it can optimize everything from energy distribution to healthcare delivery. Emerging technologies such as quantum-enhanced machine learning and neuromorphic computing are expected to disrupt current paradigms, offering even greater efficiency and speed. As consumer preferences tilt toward privacy-first and locally hosted solutions, the demand for decentralized AI infrastructure will continue to grow, creating a fertile ground for innovation.

Global economic conditions will play a decisive role in the speed of this transformation, as investment flows are redirected toward technologies that offer the highest degree of resilience and scalability. Factors such as energy costs and the availability of technical talent will influence where the next major AI hubs are established. In this environment, the ability to foster a vibrant ecosystem of developers and researchers will be more important than any single technological breakthrough. Innovation will increasingly happen at the intersection of different disciplines, as AI is applied to solve complex global challenges like resource scarcity and climate change, making it an indispensable tool for future governance.

Final Assessment of China’s Open-Source AI Ambitions

The analysis indicates that China’s strategic focus on open-source artificial intelligence is challenging the long-standing dominance of proprietary Western systems. By prioritizing accessibility and scalability, Chinese firms are capturing significant influence in emerging markets and positioning their technology as a de facto standard for digital infrastructure. The findings suggest that the industry is moving toward a more decentralized model in which value resides in the ecosystem rather than in a single, locked-down product. For investors and policymakers, the ability to customize and localize AI tools is becoming a more important factor for long-term growth than raw benchmark scores.

The transition toward open-source foundations is establishing a new set of priorities for the global technology sector, emphasizing collaboration and transparency over isolation. Actionable next steps for market participants include developing specialized hardware to support these open architectures and creating more robust international standards for safety and ethics. Future considerations must address the ongoing tension between national security and the borderless nature of open-source development. Ultimately, the shift toward democratized AI offers a roadmap for a more inclusive digital future, in which the tools for innovation are available to any nation or organization willing to engage with the global community.