A developer sitting in a silent office at three in the morning realizes that the sophisticated large language model chosen for a flagship project is hallucinating pricing data for a high-value client. This moment of clarity highlights a growing realization across the global tech sector: the era of selecting artificial intelligence based on viral social media demonstrations has officially ended. The industry has moved into a more sober phase where the difference between a successful deployment and a public relations disaster rests on technical nuances that were ignored during the initial gold rush. It is no longer enough to have a model that can write poetry or summarize a meeting; modern requirements demand a level of precision, reliability, and cost-effectiveness that many popular systems simply cannot provide under pressure.

Selecting the right engine for an intelligent application is less about finding the “smartest” model and more about finding the one that fits the specific contours of a business problem. When a company integrates a model into its core stack, it is essentially hiring a digital workforce that must be managed, audited, and optimized. If the chosen model is too large, it drains the budget and creates intolerable latency; if it is too small, it fails to capture the complex reasoning required for high-stakes decision-making. The stakes are particularly high when these systems are granted the autonomy to interact with external databases or trigger financial transactions, turning a software choice into a significant operational risk.

This shift toward strategic procurement marks a maturation of the market, where technical teams now treat large language models as critical infrastructure rather than experimental novelties. The following analysis explores the multi-dimensional criteria necessary to evaluate these systems, moving beyond superficial performance metrics to address the hard realities of deployment, ethics, and long-term viability. As organizations move toward an “agentic” future where models act as semi-autonomous employees, the framework for selection must be as rigorous as any traditional supply-chain audit.

Beyond the Hype: Is Your AI Strategy Built for Performance or Just for Show?

The allure of a massive parameter count often blinds organizations to the practical realities of daily operation. While a model with hundreds of billions of parameters might perform exceptionally well on a standardized benchmark, it may be entirely unsuitable for a customer-facing chatbot that requires sub-second response times. Many enterprises have fallen into the trap of over-provisioning their AI capabilities, paying a premium for “intelligence” that never actually gets used in the context of their specific tasks. A system designed to categorize support tickets does not need to understand the nuances of 17th-century philosophy, yet many companies are using general-purpose behemoths for exactly these types of narrow applications.

There is a distinct difference between a model that is “performant” in a lab setting and one that is “reliable” in a production environment. Performance often refers to the peak capability of a model under ideal conditions, whereas reliability involves the model’s ability to maintain a consistent tone, follow safety guidelines, and avoid catastrophic failure over millions of requests. When the initial novelty wears off, stakeholders usually discover that a slightly less intelligent but more stable model provides significantly more value than a brilliant but temperamental one. This realization forces a pivot from chasing the highest leaderboard scores toward establishing a baseline of dependable, boring, and predictable software behavior.

Developing a strategy for show is easy; developing one for substance requires a deep dive into the hidden costs of intelligence. Every additional second of latency and every tenth of a cent per token adds up when a system is scaled across thousands of users. If the underlying strategy does not account for these compounding variables, the project will eventually collapse under its own weight, regardless of how impressive the initial pilot appeared to be. True performance in the modern era is defined by the equilibrium between computational cost, user experience, and the accuracy of the output, a three-way balance that few models strike without careful tuning.

From Novelty to Necessity: The High Stakes of Enterprise AI Integration

The transition of large language models from experimental curiosity to critical software infrastructure has changed the nature of IT procurement. In the past, software was largely deterministic; if a developer wrote a specific line of code, the machine executed it exactly as instructed every single time. Today, integrating an LLM introduces a level of probabilistic uncertainty more familiar from high-stakes commercial procurement, such as sourcing raw materials, where quality has to be verified rather than assumed. Companies are no longer just buying a tool; they are entering into a partnership with a dynamic system whose behavior can change based on the slightest shift in input or a subtle update from the provider.

Selecting a model in this environment is comparable to selecting a primary cloud vendor or a banking partner because the cost of switching is exceptionally high. Once a team has built its prompts, fine-tuned its data pipelines, and integrated specific API hooks, moving to a different model can take months of re-engineering and testing. This reality has moved the decision-making process out of the developer’s sandbox and into the boardroom, where long-term viability and vendor lock-in are primary concerns. The “magic” that once surrounded AI has been replaced by a need for rigorous technical evaluation and an understanding that these systems are subject to the same physical and economic constraints as any other piece of infrastructure.

The limitations of treating AI as a magical black box become apparent the moment a system fails in a way that the developers cannot explain. In an enterprise setting, “I don’t know why it said that” is an unacceptable answer to a regulatory body or a disgruntled customer. Therefore, the selection process must prioritize models that offer some degree of interpretability or at least a stable enough architecture that failure modes can be mapped and mitigated. The move from novelty to necessity means that AI must finally grow up, leaving behind its whimsical, unpredictable roots to become the reliable backbone of the next generation of digital services.

Core Evaluation Pillars: Balancing Power, Speed, and Technical Constraints

When evaluating the technical infrastructure of a potential model, the size and parameter count must be weighed against actual task requirements rather than just prestige. A massive model requires specialized hardware, and allocating enough RAM and GPU memory for it can become a chore that bogs down even the most well-funded DevOps teams. If a model cannot fit into the existing hardware footprint of a company, the hidden costs of upgrading infrastructure can quickly eclipse the benefits of the AI itself. Hardware compatibility is not a secondary concern; it is a hard ceiling that dictates which models are truly viable for a given organization.
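The hardware ceiling can be roughed out with back-of-the-envelope arithmetic before any procurement conversation. The sketch below multiplies parameter count by bytes per parameter to estimate the memory the weights alone occupy, then applies a headroom factor. The function names and the 1.2 overhead multiplier are illustrative assumptions, not measured values; real memory use depends on context length, batch size, and the serving stack.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed just to hold the weights, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def fits_on_hardware(
    num_params: float,
    bits_per_param: int,
    gpu_memory_gb: float,
    num_gpus: int = 1,
    overhead: float = 1.2,  # assumed headroom for KV cache and activations
) -> bool:
    """Rough check: do weights plus assumed overhead fit in total GPU memory?"""
    needed = weight_memory_gb(num_params, bits_per_param) * overhead
    return needed <= gpu_memory_gb * num_gpus


if __name__ == "__main__":
    # Hypothetical model sizes and precisions, checked against one 80 GB GPU.
    for params, bits in [(7e9, 16), (70e9, 16), (70e9, 4)]:
        gb = weight_memory_gb(params, bits)
        ok = fits_on_hardware(params, bits, gpu_memory_gb=80)
        print(f"{params / 1e9:.0f}B at {bits}-bit: {gb:.0f} GB of weights, fits: {ok}")
```

Even this crude estimate makes the trade-off visible: under these assumptions, a 70-billion-parameter model at 16-bit precision needs multiple accelerators, while a quantized or smaller model may fit on one.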

Latency benchmarks provide the next layer of scrutiny, specifically the Time to First Token (TTFT) and overall throughput. For interactive applications, the speed at which a model starts responding is often more important than the total time it takes to finish a long paragraph. Users perceive a system as “smart” when it reacts instantly, whereas a three-second delay before the first word appears can make even the most brilliant model feel broken. In API-driven environments, scalability and rate limits also play a crucial role, as a model that works perfectly for ten users might trigger aggressive throttling when ten thousand users try to access it simultaneously.
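Both numbers are straightforward to measure against any streaming interface. The following is a minimal sketch that assumes the provider's client exposes the response as an iterable of tokens or chunks; `measure_stream` and `StreamTiming` are hypothetical names introduced here, and the injectable `clock` parameter exists only so the measurement can be tested deterministically.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class StreamTiming:
    ttft_s: float   # seconds from request start to the first token
    total_s: float  # seconds from request start to the end of the stream
    tokens: int     # number of tokens (or chunks) received

    @property
    def tokens_per_second(self) -> float:
        """Overall throughput across the whole response."""
        return self.tokens / self.total_s if self.total_s > 0 else 0.0


def measure_stream(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> StreamTiming:
    """Consume a token stream and record TTFT and total duration."""
    start = clock()
    first_token_at = None
    count = 0
    for _ in stream:
        now = clock()
        if first_token_at is None:
            first_token_at = now  # the moment the user first sees output
        count += 1
    end = clock()
    ttft = first_token_at - start if first_token_at is not None else float("nan")
    return StreamTiming(ttft_s=ttft, total_s=end - start, tokens=count)
```

In practice the same measurement would be repeated across many requests and reported as percentiles, since a good median can hide a slow tail.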

The knowledge lifecycle of a model is another critical pillar, particularly regarding knowledge cutoff dates and the necessity of Retrieval-Augmented Generation (RAG). A model’s knowledge is frozen at the moment its training concludes, meaning it is effectively oblivious to any event that has occurred since that date. To bridge this gap, organizations often use RAG to retrieve current data and inject it into the prompt, but this adds another layer of complexity and latency. Furthermore, developers must decide between using a broad foundation model or investing in fine-tuning to specialize a model on domain-specific datasets. Fine-tuning allows for a level of expertise that general models cannot match, but it requires a high-quality, proprietary dataset that many companies struggle to curate.
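The RAG pattern itself is simple to illustrate, even though production systems are not. The sketch below ranks a handful of documents against a question and pastes the best matches into the prompt. It deliberately uses naive keyword overlap as the retriever, which is a stand-in assumption; real deployments typically use embedding-based vector search. All function names and the prompt wording are hypothetical.

```python
import re
from typing import List


def _words(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents sharing the most words with the query."""
    query_words = _words(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & _words(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Assemble a prompt that grounds the answer in retrieved context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )
```

The retrieval step runs before every model call, which is where the extra latency and complexity mentioned above come from.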

Modality and interaction dynamics represent the final technical hurdles, specifically the ability to process text, images, and complex document formats like PDFs. Verifying true multimodality is essential for modern workflows that require the AI to “see” a chart or “read” a handwritten invoice. Moreover, the choice between open-source and proprietary models introduces a philosophical and economic divide. Open-source and open-weight models provide transparency and privacy, allowing a company to run the model on its own servers without sending data to a third party. Proprietary models, by contrast, often offer superior ease of use and support, though they carry the risk of “model retirement,” where the provider shuts down an older version that a company’s entire workflow depends on.

Governance and Ethics: Mitigating Risk in a Transparent AI Era

The legal landscape surrounding training data has become a minefield for organizations that fail to perform due diligence on their chosen models. As copyright lawsuits proliferate, the importance of provenance audits—verifying exactly where the training data came from—has moved to the forefront of the selection process. Using a model trained on scraped, copyrighted material could expose a company to massive legal liabilities or force the sudden decommissioning of a product if a court rules against the AI provider. Consequently, intellectual property protection has become a standard clause in AI contracts, with many enterprises demanding indemnification to protect themselves from future litigation.

A more technical but equally pressing ethical concern is the risk of “model collapse” caused by the prevalence of synthetic data in training sets. As AI-generated content floods the internet, newer models are increasingly being trained on the output of older models rather than on human-created information. This creates a feedback loop that can lead to a loss of precision, the amplification of biases, and the eventual degradation of the model’s reasoning capabilities. Organizations must ask their providers about the percentage of synthetic data used in training to ensure they are not building their future on a foundation that is slowly eroding toward mediocrity.

Environmental responsibility is also emerging as a non-negotiable factor for modern corporations committed to sustainability targets. Every AI response consumes electricity, and often water for cooling data centers; multiplied across millions of requests, this adds up to a carbon footprint that is often difficult to track. Forward-thinking providers are now offering transparency reports that detail their energy usage and even allow queries to be batched and run when renewable energy is most available on the grid. Regulatory compliance adds a final layer of complexity, as regulations like GDPR and HIPAA impose strict requirements on how personal and health data are handled, and GDPR’s provisions on automated decision-making create pressure for a level of “explainability” that many older models were never designed to provide.

Implementation Framework: A Strategic Approach to Model Selection

The most successful AI implementations begin with the concept of right-sizing the solution, moving away from the “bigger is better” mentality that dominated the early years of the industry. Strategic teams now start by identifying the minimum level of intelligence required for a task and selecting the smallest, fastest model that can meet that threshold. This approach not only saves significant amounts of money but also improves the user experience by reducing latency. Economic modeling involves calculating the long-term financial viability of cost-per-token, ensuring that the project remains profitable even if the volume of requests increases by a hundredfold.
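Both halves of this approach, right-sizing and economic modeling, reduce to a few lines of arithmetic. The sketch below computes cost per request from per-million-token prices and then picks the cheapest candidate that clears a quality threshold. The `Candidate` fields, the prices, and the evaluation scores in the usage note are all hypothetical placeholders; real figures would come from a provider's price sheet and a team's own evaluation suite.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    name: str
    input_price_per_million: float   # currency units per 1M input tokens
    output_price_per_million: float  # currency units per 1M output tokens
    eval_score: float                # score on the team's own task evaluation


def cost_per_request(c: Candidate, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request given its input and output token counts."""
    return (
        input_tokens * c.input_price_per_million
        + output_tokens * c.output_price_per_million
    ) / 1_000_000


def monthly_cost(c: Candidate, requests: int, input_tokens: int, output_tokens: int) -> float:
    """Projected monthly spend at a given request volume."""
    return requests * cost_per_request(c, input_tokens, output_tokens)


def cheapest_adequate(
    candidates: Sequence[Candidate],
    min_score: float,
    input_tokens: int,
    output_tokens: int,
) -> Optional[Candidate]:
    """Right-sizing: the cheapest model whose score meets the threshold."""
    adequate = [c for c in candidates if c.eval_score >= min_score]
    if not adequate:
        return None
    return min(adequate, key=lambda c: cost_per_request(c, input_tokens, output_tokens))
```

With a made-up large model priced at 3.00 and 15.00 per million input and output tokens, a request of 1,000 input and 500 output tokens costs about 0.0105, so 100,000 requests a month cost about 1,050 and a hundredfold increase in volume costs about 105,000. Running the same projection for each candidate shows quickly whether the project survives its own success.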

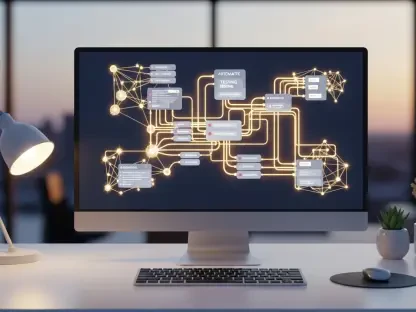

Preparing for an “agentic” future requires looking for models that support advanced features like tool use, function calling, and the Model Context Protocol (MCP). An agentic model does not just talk; it acts by searching the web, updating a CRM, or running a piece of code to solve a math problem. This transition from a chatbot to an agent requires a highly steerable model, one that can follow complex, multi-step instructions without getting distracted or stuck in a loop. Architects must also build “hooks” for human-in-the-loop moderation, ensuring that a person can intercept and correct an agent’s actions before they cause real-world consequences.
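A human-in-the-loop hook can be as simple as a gate between the model's requested tool call and its execution. The sketch below is one possible shape, not a description of any particular framework or of MCP itself: `ToolCall`, `ToolRunner`, and the `approve` callback are hypothetical names, and in a real system the callback might open a review ticket or page an operator rather than return immediately.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Set


@dataclass
class ToolCall:
    name: str                  # tool the model asked to invoke
    arguments: Dict[str, Any]  # keyword arguments proposed by the model


class ToolRunner:
    """Executes model-requested tool calls, pausing risky ones for approval."""

    def __init__(self, approve: Callable[[ToolCall], bool]) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}
        self._needs_approval: Set[str] = set()
        self._approve = approve  # human (or policy) decision hook

    def register(self, name: str, fn: Callable[..., Any], needs_approval: bool = False) -> None:
        self._tools[name] = fn
        if needs_approval:
            self._needs_approval.add(name)

    def run(self, call: ToolCall) -> Dict[str, Any]:
        if call.name not in self._tools:
            raise KeyError(f"unknown tool: {call.name}")
        # Intercept before execution so a rejection has no side effects.
        if call.name in self._needs_approval and not self._approve(call):
            return {"status": "rejected", "tool": call.name}
        result = self._tools[call.name](**call.arguments)
        return {"status": "ok", "tool": call.name, "result": result}
```

The design choice worth noting is that approval is attached to the tool, not to the prompt: read-only lookups run freely, while anything that moves money or modifies records waits for a decision.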

Qualitative testing remains the final, and perhaps most important, step in the selection process, as it allows teams to identify the unique quirks and biases of a specific model. Every model has a “personality” shaped by its training data; some are naturally more verbose, while others might be overly cautious or even sycophantic. Spending time in the “trenches” with a model reveals how it handles edge cases, whether it responds well to different prompting styles, and if its tone aligns with the brand’s identity. Only after a model has passed through this gauntlet of technical, ethical, and qualitative filters can it be considered ready for the high-stakes environment of modern enterprise integration.

The process of selecting a large language model has evolved from a simple technical decision into a complex strategic maneuver that can define the trajectory of an organization. Technical teams have learned to balance the allure of high parameter counts against the hard realities of hardware constraints and latency benchmarks. They recognize that a model’s knowledge cutoff and its ability to integrate with real-time data through RAG often matter more than its ability to perform well on abstract reasoning tests. Most importantly, the industry is moving toward a model of accountability, where the provenance of training data and the environmental impact of computation are scrutinized with the same intensity as the model’s accuracy. By shifting from a mindset of novelty to one of necessity, developers can build systems that are not only intelligent but also sustainable and legally sound. The era of “magic” AI is giving way to an era of engineering excellence, where the right model is defined by its fit for the job rather than its score on a leaderboard. In the end, the companies that thrive will be those that treat AI selection as a rigorous, multi-dimensional discipline rather than a race to the largest possible scale.