The rhythmic clicking of mechanical keyboards, once the defining heartbeat of software development, is increasingly giving way to the quiet, high-velocity output of autonomous coding agents that can scaffold entire application backends in a fraction of the time manual work requires. This shift marks a profound migration from manual code construction to high-level system orchestration. As specialized tools like Claude Code and Cursor reshape the software development lifecycle, the definition of developer expertise is being rewritten in real time. This analysis examines the market shifts currently underway, the emergence of the Architect-Validator model, and the strategies needed to maintain a cognitive edge in an increasingly automated landscape. The transition is not merely a change in tooling but a reordering of how human intellect interacts with machine logic to solve complex problems.

The Industrialization of Code Generation

Market Dynamics and Adoption Velocity

The software development lifecycle is undergoing a radical compression, with implementation timelines for some projects shrinking from months to days. Market reports suggest that the implementation phase, which historically consumed the majority of a project's resources, is no longer the primary bottleneck. Autonomous coding agents have moved beyond simple autocomplete, evolving into capable partners that can navigate complex file structures and maintain context across large repositories. This shift has opened a widening productivity gap between traditional coders and AI-augmented engineers, with some organizations reporting efficiency gains of two to ten times. The speed at which a concept reaches functional deployment has become the new benchmark for success in a hyper-competitive tech economy.

Industry surveys indicate that adoption of AI-native integrated development environments (IDEs) and large language models (LLMs) has reached critical mass in both enterprises and startups. Companies that once hesitated over security concerns are now deploying local or private model instances to safeguard intellectual property while still benefiting from automated code synthesis. This rapid adoption is driven by the need to keep pace with a market in which the cost of failure has plummeted: when the time required to build a prototype falls to a few hours, organizations can afford to test far more ideas, which leads to a more iterative, experimental approach to product development. The velocity of this transition suggests that the traditional slow-burn development cycle is becoming an artifact of the past.

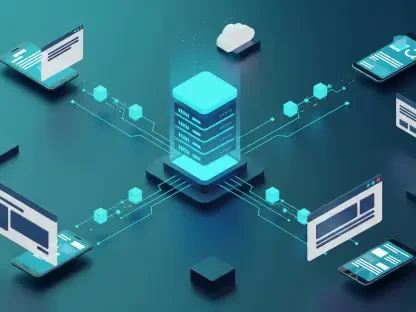

Practical Applications in the Modern Tech Stack

Modern engineering teams are increasingly delegating what Fred Brooks called accidental complexity (the difficulties of tooling and syntax rather than of the problem itself) to AI agents, so they can focus on the core logic and unique value of their software. This includes generating boilerplate code, writing comprehensive unit test suites, and executing complex framework migrations that once took weeks of manual labor. With the syntax-heavy portions of the stack automated, developers are free to concentrate on how services interconnect and on the overall user experience. As a result of this industrialization, a single engineer can now manage portions of a codebase that previously required an entire sub-team, which can flatten organizational hierarchies.

Legacy modernization has emerged as one of the most impactful real-world applications of these tools. Organizations are using AI to translate outdated, monolithic codebases into modern languages such as Go or Rust with surprising accuracy. The process involves more than line-by-line translation: AI agents can analyze the intent of the original code and suggest more efficient, concurrent patterns suited to modern cloud architectures. Such projects show that the value of AI is not limited to greenfield work; it is also a vital tool for paying down technical debt. The ability to modernize infrastructure rapidly lets companies overhaul their technological foundations without the catastrophic downtime or massive capital expenditure traditionally associated with such transitions.

The Shift to Upstream Value: Expert Perspectives

The Death of the Syntax Specialist

Industry leaders increasingly argue that a developer's primary value has moved upstream, away from the terminal and toward the initial problem statement. In this view, the era of the syntax specialist, whose chief asset is encyclopedic knowledge of a particular language's quirks, is effectively over. In its place, demand is growing for professionals who can articulate complex requirements precisely and clearly. The focus is now on the "what" and the "why" of software rather than the "how" of line-by-line execution. This migration of value suggests that the most successful engineers in the coming years will combine technical literacy with strong communication skills.

The ability to provide high-quality context is now the most critical skill in a developer’s repertoire. AI models are only as effective as the instructions and environmental data they are provided, making the act of prompting a sophisticated form of technical specification. To guide an AI effectively, a developer must be able to define functional boundaries, resiliency parameters, and operational constraints with absolute clarity. This requires a holistic view of the system that transcends individual files or functions. The expertise lies in the ability to bridge the gap between a vague business need and a concrete technical reality, a task that remains firmly within the human domain.
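To make the idea of prompting as specification more concrete, the sketch below captures functional boundaries, resiliency parameters, and operational constraints as a structured object and renders it as plain text for a coding agent. The `TaskSpec` class, its fields, and its `to_prompt` method are hypothetical illustrations, not the interface of Claude Code, Cursor, or any other tool; the point is only that writing the boundaries down explicitly leaves less for the model to guess.

```python
from dataclasses import dataclass, field


@dataclass
class TaskSpec:
    """Hypothetical structured specification handed to a coding agent."""
    goal: str
    # Functional boundaries: what the change must and must not touch.
    in_scope: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)
    # Operational constraints, e.g. dependency or latency rules.
    constraints: list[str] = field(default_factory=list)
    # Resiliency parameters, e.g. {"timeout_ms": 200, "max_retries": 3}.
    resiliency: dict[str, int] = field(default_factory=dict)

    def to_prompt(self) -> str:
        """Render the spec as plain text, skipping empty sections."""
        lines = [f"Goal: {self.goal}"]
        sections = [
            ("In scope", self.in_scope),
            ("Out of scope", self.out_of_scope),
            ("Constraints", self.constraints),
        ]
        for title, items in sections:
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        if self.resiliency:
            lines.append("Resiliency:")
            # Sort keys so the rendered prompt is deterministic.
            lines.extend(f"- {k} = {v}" for k, v in sorted(self.resiliency.items()))
        return "\n".join(lines)


spec = TaskSpec(
    goal="Add a /health endpoint to the orders service",
    in_scope=['Return HTTP 200 with JSON {"status": "ok"}'],
    out_of_scope=["Changes to the database schema"],
    constraints=["No new third-party dependencies"],
    resiliency={"timeout_ms": 200},
)
print(spec.to_prompt())
```

A plain dataclass keeps the example dependency-free; a real team might instead keep such specs in version-controlled YAML or Markdown alongside the code they govern.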

The Brooksian Framework: Essential vs. Accidental Complexity

To understand why AI cannot fully replace the human engineer, one must look to the conceptual framework Fred Brooks set out in his 1986 essay "No Silver Bullet." Brooks distinguished between essential complexity, the difficulty inherent in the problem itself, and accidental complexity, the difficulty that arises from the tools and processes used to build software rather than from the problem. AI is exceptionally adept at eliminating much of the accidental complexity, such as managing memory, handling syntax, or integrating disparate libraries. However, the essential complexity of modeling a real-world business process as a logical system remains a challenge that requires human judgment and conceptual modeling.

Expert consensus reinforces the idea that software engineering is, at its heart, the creation of a conceptual construct. While AI can generate the code that gives that construct form, it cannot independently determine the most logical way to represent a complex set of human requirements. The process of fashioning a conceptual model involves navigating trade-offs and understanding the long-term implications of architectural decisions. Because AI lacks a true understanding of the real-world context in which software operates, it remains a tool for implementation rather than a source of original architectural intent. The human role is thus preserved as the architect of the essence, while the machine handles the accidents of the medium.

The Rise of the Architect-Validator

The transition toward the Architect-Validator role marks a significant evolution in professional identity within the tech sector. In this model, the engineer functions as a high-level judge who prioritizes technical requirement writing and system design over manual coding. This role demands a rigorous approach to verification, as the developer must be able to identify subtle logic errors or security vulnerabilities in AI-generated output. The validator must possess enough foundational knowledge to know when the AI is deviating from best practices, even if the code appears to function on the surface. This requires a shift in mindset from a creator of code to a curator of technical integrity.
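As a hypothetical illustration of a flaw that "appears to function on the surface," the two lookup functions below return the same row for ordinary input. The first, however, splices user input into the SQL text and is open to injection; the second uses a parameterized query via Python's standard `sqlite3` module. The table, data, and function names are invented for this example.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with two illustrative users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Passes a happy-path test, but the input becomes part of the SQL text,
    # so crafted input can rewrite the WHERE clause.
    query = f"SELECT name, email FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Parameterized query: the driver binds `name` as data, never as SQL.
    query = "SELECT name, email FROM users WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


conn = make_db()
payload = "x' OR '1'='1"
print(find_user_unsafe(conn, payload))  # leaks every row
print(find_user_safe(conn, payload))    # no user has that literal name
```

Both versions pass a test that only looks up `"alice"`, which is exactly why a validator has to read the generated code rather than rely on a green test run.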

System design has become the ultimate differentiator in an environment where code is abundant and cheap. The Architect-Validator must focus on how the components of a system interact, ensuring that the overall structure is scalable, maintainable, and secure. This elevated perspective allows a more strategic approach to development, in which the engineer acts as the guardian of the project's long-term health. By focusing on high-level architecture, the developer keeps rapidly generated code cohesive and aligned with the organization's overarching goals. Even as the mechanical aspects of coding are automated, the need for professional accountability and technical foresight is greater than ever.

Future Implications: Navigating the AI Transition

The Threat of Cognitive Debt

As AI tools handle the heavy lifting of programming, a significant risk of mental atrophy has emerged within the engineering community. This phenomenon, often referred to as cognitive debt, occurs when a developer relies so heavily on automated suggestions that they lose the ability to reason through complex problems from first principles. Without the intentional practice of manual coding, the mental muscles required for deep logic and debugging can begin to weaken. To maintain a competitive edge, engineers must engage in regular strength training by working on foundational problems and staying deeply engaged with the underlying logic of the systems they manage.

The danger of cognitive debt is particularly acute during critical system failures where AI tools may not have sufficient context to provide a solution. In these high-stakes scenarios, the ability of a human engineer to dive into the codebase and manually diagnose an issue is the last line of defense. Maintaining foundational coding knowledge is therefore not just an academic exercise but a vital component of professional resilience. The most effective developers will be those who use AI to accelerate their work while simultaneously dedicating time to master new data models and languages. This balanced approach ensures that the human remains the master of the machine, rather than a passive observer of its output.

The Trust but Verify Mandate

The prevalence of what developers sometimes call "LLM gaslighting," in which a model confidently presents incorrect or suboptimal information, has made "trust but verify" a cornerstone of modern engineering. Because LLMs generate output from statistical patterns rather than logical proof, they are prone to producing code that looks flawless but contains hidden defects or security risks. Developers must approach every AI-generated block of code with healthy skepticism, treating it as a draft that requires thorough review and testing. This requirement that developers act as high-level quality-assurance judges is now a non-negotiable part of the job.

Verification skills are becoming as important as the ability to design the system in the first place. This involves not only running automated tests but also performing deep code reviews to ensure that the AI-generated logic aligns with the project’s architectural standards. The risk of blindly accepting AI output is the creation of a fragile system that is difficult to maintain and prone to unexpected failures. By taking a proactive approach to verification, developers can harness the speed of AI without sacrificing the quality or security of the final product. The ability to spot a hallucination or a suboptimal pattern in a sea of generated code is now a hallmark of a truly senior engineer.
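One lightweight verification technique is differential testing: run the generated function against a slow but obviously correct reference on many random inputs and report the first disagreement. In the sketch below, `generated_median` stands in for hypothetical AI output with a plausible-looking bug (it ignores the even-length case); `find_counterexample`, its `trials` and `seed` parameters, and the input ranges are illustrative assumptions, not any tool's API. Property-based testing libraries such as Hypothesis automate this pattern far more thoroughly.

```python
import random
from typing import Callable, Optional


def reference_median(xs: list[int]) -> float:
    """Obviously correct oracle: sort, then average the middle pair if needed."""
    s = sorted(xs)
    mid = len(s) // 2
    return float(s[mid]) if len(s) % 2 else (s[mid - 1] + s[mid]) / 2


def generated_median(xs: list[int]) -> float:
    """Stand-in for AI output: correct for odd lengths, wrong for most even ones."""
    s = sorted(xs)
    return float(s[len(s) // 2])


def find_counterexample(
    candidate: Callable[[list[int]], float],
    oracle: Callable[[list[int]], float],
    trials: int = 1000,
    seed: int = 0,
) -> Optional[list[int]]:
    """Return the first random input where candidate and oracle disagree, else None."""
    rng = random.Random(seed)  # fixed seed keeps any failure reproducible
    for _ in range(trials):
        xs = [rng.randint(-100, 100) for _ in range(rng.randint(1, 10))]
        if candidate(xs) != oracle(xs):
            return xs
    return None


print(find_counterexample(generated_median, reference_median))
```

The printed counterexample is always an even-length list, which points the reviewer straight at the missing branch; running the harness with the oracle against itself returns `None`.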

The Changing Entry Barrier

The junior developer role is undergoing a massive transformation, as newcomers are now required to master system design much earlier in their careers. Historically, junior engineers spent their early years performing the manual labor of coding, which provided a natural path toward understanding larger architectures. Today, that manual labor is largely automated, forcing juniors to leapfrog directly into roles that require high-level design and verification skills. This shift has raised the barrier to entry, as the market now demands a level of conceptual maturity that was previously reserved for more experienced professionals.

This evolution presents both a challenge and an opportunity for the next generation of engineers. While they may miss out on the traditional apprenticeship of manual coding, they have the chance to become architects of complex systems much sooner. Education and training programs are pivoting to emphasize system design, security, and prompt engineering from day one. The successful junior developer in this new era is one who can demonstrate a deep understanding of how software components fit together, even if they have not spent years writing every line of code by hand. This faster track to architectural responsibility is reshaping the career trajectory of the entire software engineering profession.

Embracing the New Engineering Paradigm

The software engineering profession is undergoing a fundamental restructuring as the emphasis moves from the mechanics of writing syntax to the higher-order tasks of architectural design and system verification. While AI is effectively eliminating the burden of accidental complexity, the essential complexity of defining real-world solutions remains a uniquely human endeavor. The industry is moving toward a model in which the engineer functions as an Architect-Validator, a role that demands not just technical skill but also a profound capacity for conceptual modeling and critical judgment. Those who navigate this change successfully can use AI as a massive force multiplier, reaching levels of productivity that were previously unimaginable.

Technical professionals must actively guard against the erosion of their foundational skills through continuous mental exercise and rigorous verification of automated output. Technical literacy matters more than ever, because the ability to critique and refine AI-generated work is the primary safeguard of software integrity. Barriers to entry for new developers are rising and shifting toward design thinking, which calls for a more holistic education in system dynamics. Ultimately, the future of the field belongs to those who recognize that while code has become a cheap commodity, the human ability to structure it into meaningful, resilient systems remains the ultimate source of value. Software engineering thus remains a disciplined craft anchored by human accountability and strategic oversight.