Anand Naidu is a seasoned development authority who bridges the gap between complex backend architecture and front-facing security implementation. With a career rooted in the nuances of various coding languages, he has observed the evolution of software delivery from simple repositories to the high-stakes world of AI model registries. His expertise is particularly vital now, as the industry grapples with the fallout of malicious artifacts infiltrating public AI spaces through sophisticated social engineering. This conversation explores the alarming trend of attackers manipulating trending algorithms, the technical deception of malicious loaders, and why our current security frameworks are struggling to keep pace with the rapid adoption of artificial intelligence.

How do attackers manipulate trending algorithms and engagement metrics like likes and downloads to deceive developers? What specific red flags in a model’s documentation or setup instructions should technical teams prioritize during their initial vetting process to avoid downloading malicious scripts?

The psychological weight of seeing a repository hit the number one trending spot on a platform like Hugging Face is immense; it creates an immediate, though false, sense of institutional trust. In the case of the Open-OSS/privacy-filter repository, attackers were able to manufacture legitimacy by generating 667 likes and a staggering 244,000 downloads in less than 18 hours. This is not organic growth; it is calculated, artificial inflation designed to exploit the “social proof” that busy developers rely on when choosing tools. When a model appears to be an official OpenAI release and mirrors the documentation of a legitimate project almost word-for-word, it becomes incredibly difficult to spot the deception at a glance.

Technical teams must move past the surface-level metrics and scrutinize the setup instructions with a skeptical eye. A massive red flag in this specific campaign was the instruction to run a “start.bat” file on Windows or “python loader.py” on Linux and macOS. Standard AI model implementations usually rely on well-known libraries like Transformers or Safetensors to load weights, rather than requiring the execution of arbitrary shell scripts or secondary Python loaders. If the README suddenly diverges from established patterns or asks for administrative-level execution to simply “initialize” a model, your team should treat that repository as a high-risk environment. The presence of a “loader.py” script that doesn’t clearly map to the open-source architecture of the model is often the first sign of an impending infection chain.
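
As a rough illustration of that first-pass vetting, the sketch below scans README text for the kinds of setup instructions described above. The pattern list and the function name `flag_setup_instructions` are assumptions made for this example, not an exhaustive or authoritative rule set, and a match is only a prompt for closer human review.

```python
import re

# Hypothetical patterns modeled on the red flags discussed above; extend as needed.
SUSPICIOUS_PATTERNS = {
    "batch launcher": re.compile(r"\b[\w.-]+\.bat\b", re.IGNORECASE),
    "secondary Python loader": re.compile(
        r"\bpython3?\s+[\w./-]*loader\.py\b", re.IGNORECASE
    ),
    "elevated execution": re.compile(
        r"\b(sudo|run as administrator)\b", re.IGNORECASE
    ),
}


def flag_setup_instructions(readme_text: str) -> list[str]:
    """Return the label of every suspicious pattern found in the README text."""
    return [
        label
        for label, pattern in SUSPICIOUS_PATTERNS.items()
        if pattern.search(readme_text)
    ]


if __name__ == "__main__":
    sample = (
        "Quick start\n"
        "  Windows: run start.bat\n"
        "  Linux/macOS: python loader.py\n"
    )
    for label in flag_setup_instructions(sample):
        print("review needed:", label)
```

A README that only asks for a standard library install and a normal model load should come back with an empty list; anything else goes to a reviewer before anyone runs it.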

When a malicious script uses third-party JSON hosting services for command-and-control instructions, why is it so difficult for standard network monitoring to flag? What specific steps must a security team take to verify that a Python loader isn’t masking a persistent infection chain on Windows systems?

Standard network monitoring often fails because it is looking for “loud” anomalies, such as connections to known-bad IP addresses or domains with no reputation. By using a public service like jsonkeeper.com to host command-and-control (C2) instructions, the attacker effectively hides their traffic in plain sight among thousands of legitimate API calls. To a firewall or a basic traffic analyzer, the request looks like a standard JSON fetch from a reputable hosting provider, making it nearly invisible. This technique allows the attacker to rotate their malicious payloads remotely without ever having to touch the code in the Hugging Face repository again, staying one step ahead of static file analysis.

To truly verify a suspicious Python loader, security teams need to look deep into the execution flow, specifically for base64-encoded strings and disabled security protocols. In this instance, the loader.py script deliberately disabled SSL verification to pull its remote instructions, which is a massive red flag for any production-grade software. On Windows systems, you must audit the interaction between Python and PowerShell; attackers frequently use PowerShell to download secondary batch files and establish persistence. You should specifically hunt for new scheduled tasks that mimic legitimate system processes. A common trick we saw was the creation of a task designed to look like a Microsoft Edge update, which is a classic “hide in the noise” tactic that ensures the infection survives a system reboot.
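
The static part of that review can be sketched in a few lines. The example below parses a loader with Python's `ast` module, without executing it, and reports calls that disable certificate verification, decode base64 blobs, or reference PowerShell. The function name `audit_loader_source` is hypothetical, and the `verify=False` keyword is only one common way of switching off verification; it is an assumption about form, not a description of the actual loader.py.

```python
import ast


def audit_loader_source(source: str) -> list[tuple[int, str]]:
    """Statically flag risky patterns in a Python loader without running it."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            callee = ast.unparse(node.func)
            # Keyword form used by several HTTP clients, e.g. get(url, verify=False).
            for kw in node.keywords:
                if (
                    kw.arg == "verify"
                    and isinstance(kw.value, ast.Constant)
                    and kw.value.value is False
                ):
                    findings.append(
                        (node.lineno, f"{callee}() disables certificate verification")
                    )
            if callee.endswith("_create_unverified_context"):
                findings.append(
                    (node.lineno, f"{callee}() builds an unverified TLS context")
                )
            if callee.endswith("b64decode"):
                findings.append((node.lineno, f"{callee}() decodes a base64 blob"))
        elif isinstance(node, ast.Constant) and isinstance(node.value, str):
            if "powershell" in node.value.lower():
                findings.append((node.lineno, "string literal references PowerShell"))
    return sorted(findings)


if __name__ == "__main__":
    sample = (
        "import base64, requests\n"
        "cfg = requests.get(url, verify=False).text\n"
        "exec(base64.b64decode(cfg))\n"
    )
    for lineno, message in audit_loader_source(sample):
        print(f"line {lineno}: {message}")
```

A clean result here proves nothing on its own, since obfuscated code can evade simple pattern checks; the point is to surface the obvious cases quickly before moving on to sandboxed execution.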

Why are traditional software composition analysis tools failing to detect malicious logic inside AI-specific artifacts like serialized model files? How should organizations transition toward an AI bill of materials to ensure continuous scanning of these non-traditional dependencies without slowing down development cycles?

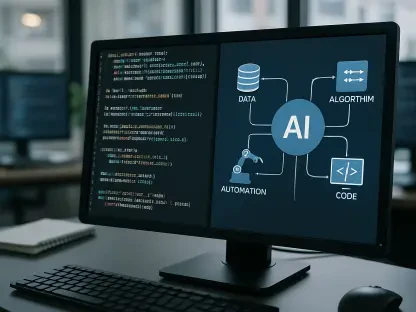

Traditional Software Composition Analysis (SCA) tools were built for a different era of development, one where we were primarily worried about known vulnerabilities in dependency manifests and library versions. These tools are essentially blind when it comes to the internal logic of AI-specific artifacts like Pickle-serialized model files. Because unpickling a file can execute arbitrary code, an attacker can hide a malicious payload inside the serialized model file, right alongside the weights. Traditional scanners see these files as large data blobs rather than executable risks, allowing malicious code to bypass platform-level security checks and land directly on a developer’s workstation.
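
To make the Pickle risk concrete, here is a minimal sketch that disassembles a pickle stream with the standard library's `pickletools.genops` and reports opcodes that import or invoke callables. Nothing is ever unpickled. The `RISKY_OPCODES` set, the `risky_opcodes` helper, and the `Demo` class are assumptions chosen for illustration.

```python
import pickle
import pickletools

# Opcodes that import callables or invoke them while a stream is being unpickled.
RISKY_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "INST", "OBJ",
    "NEWOBJ", "NEWOBJ_EX", "REDUCE", "BUILD",
}


def risky_opcodes(data: bytes) -> set[str]:
    """Disassemble the stream (no unpickling) and return the risky opcodes found."""
    seen = {opcode.name for opcode, _arg, _pos in pickletools.genops(data)}
    return seen & RISKY_OPCODES


class Demo:
    """Stand-in for a booby-trapped object: unpickling it would call print()."""

    def __reduce__(self):
        return (print, ("code ran during unpickling",))


if __name__ == "__main__":
    plain = pickle.dumps({"weights": [0.1, 0.2]})
    trapped = pickle.dumps(Demo())  # serializing is safe; loading would run print()
    print("plain data:", risky_opcodes(plain))
    print("trapped object:", risky_opcodes(trapped))
```

Legitimate pickled objects also use these opcodes, so a real scanner would go further and compare the imported names against an allowlist. Formats that store only tensors, such as Safetensors, avoid the problem by design.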

Moving forward, organizations must adopt an AI Bill of Materials (AIBOM) to provide visibility into these non-traditional dependencies. This transition is becoming a regulatory and security necessity, with industry forecasts suggesting that by 2027, roughly 60% of enterprises deploying agentic AI will require an AIBOM for continuous scanning. An AIBOM doesn’t just list the libraries used; it tracks the origin of the model, the specific versions of the weights, and the runtime behaviors expected from the model artifacts. To avoid slowing down development, this scanning must be integrated directly into the CI/CD pipeline, treating the AI model registry with the same level of governance as a private container registry or an internal NuGet feed.
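
What such a record might hold can be sketched as a small data structure. The field names below (`source`, `revision`, `weights_sha256`, `expected_network_hosts`) are hypothetical and do not follow any particular AIBOM standard; the idea is simply that a pipeline step can recompute the digest and compare it with the recorded one.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AIBOMEntry:
    """One model artifact as it might appear in an AI bill of materials."""

    name: str
    source: str             # where the artifact was obtained
    revision: str           # pinned version of the weights
    weights_sha256: str     # digest recorded when the artifact was approved
    weights_format: str     # e.g. "safetensors" or "pickle"
    expected_network_hosts: list[str] = field(default_factory=list)


def build_entry(name: str, source: str, revision: str,
                weights: bytes, weights_format: str) -> AIBOMEntry:
    """Create an entry, hashing the weight bytes so later scans can detect drift."""
    digest = hashlib.sha256(weights).hexdigest()
    return AIBOMEntry(name, source, revision, digest, weights_format)


def matches(entry: AIBOMEntry, weights: bytes) -> bool:
    """Re-hash the artifact in CI and compare it with the recorded digest."""
    return hashlib.sha256(weights).hexdigest() == entry.weights_sha256


if __name__ == "__main__":
    entry = build_entry("example-model", "internal-registry/example-model",
                        "rev-1", b"fake weight bytes", "safetensors")
    print(json.dumps(asdict(entry), indent=2))
    print("unchanged:", matches(entry, b"fake weight bytes"))
```

In a real pipeline the weights would be hashed from disk in chunks rather than held in memory, and the entry would be stored next to the build artifacts.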

Given that modern malware can bypass multi-factor authentication by stealing session cookies, what is the most effective protocol for handling a compromised workstation? Beyond just deleting malicious files, what steps ensure that hidden scheduled tasks and cloud credentials are truly secured?

The moment a developer executes a malicious file like the one found in the Open-OSS repository, the workstation must be considered a complete loss from a security perspective. Because the Rust-based infostealer targeted Chromium and Firefox-based browsers, it could siphon off session cookies that effectively bypass multi-factor authentication (MFA). If an attacker has your session cookie, they are “you” in the eyes of the cloud provider, regardless of whether you have a hardware key or a mobile authenticator. This means the protocol cannot simply be “delete the malware and change the password”; it has to be a complete reimaging of the system.

A “scorched earth” approach is the only way to be certain. You must reimage the machine entirely to wipe out persistent elements like the scheduled tasks designed to look like Edge updates. Beyond the local machine, the security team must invalidate every single active session associated with that user’s identity across all platforms, including SaaS tools, cloud consoles, and internal databases. You also need to rotate every credential that might have been stored in local configurations, such as FileZilla profiles, cryptocurrency wallets, or Discord storage. Finally, conduct a historical network hunt to see if any cloud credentials or host information were exfiltrated during the window of compromise, as those “stale” credentials can become backdoors months later.

How can enterprises establish governance at the registry layer to control model sources and runtime validation? What are the practical challenges of balancing developer speed with the need for a locked-down, approved versioning system for open-source AI models?

Governance at the registry layer requires moving away from a “wild west” approach where developers can clone any trending model into a corporate environment. Enterprises should establish a curated internal registry that acts as a buffer between public repositories like Hugging Face and the internal development teams. This internal registry should only host approved versions of models that have undergone static and dynamic analysis. By implementing runtime validation, organizations can monitor the behavior of the model during execution, flagging any unexpected calls to external domains or unauthorized attempts to access local system files that fall outside the model’s intended scope.
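
A minimal sketch of those two controls, under stated assumptions: the in-memory `REGISTRY`, the repository name `example-org/example-model`, the revision string, and the observed URL are all hypothetical, and a production registry would be a managed service rather than a dictionary. The first function admits only pinned, approved revisions; the second flags network destinations seen at runtime that the approved entry does not allow.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse


@dataclass(frozen=True)
class ApprovedModel:
    repo_id: str
    revision: str                  # exact pinned revision, never a moving branch
    allowed_hosts: frozenset[str]  # hosts the model may contact at runtime


# Hypothetical internal registry keyed by (repository, pinned revision).
REGISTRY = {
    ("example-org/example-model", "rev-a1b2c3"): ApprovedModel(
        "example-org/example-model", "rev-a1b2c3", frozenset()
    ),
}


def lookup(repo_id: str, revision: str) -> Optional[ApprovedModel]:
    """Return the approved entry, or None if this exact revision was never vetted."""
    return REGISTRY.get((repo_id, revision))


def unexpected_hosts(model: ApprovedModel, observed_urls: list[str]) -> set[str]:
    """Runtime validation: hosts contacted during execution that are not allowed."""
    seen = {urlparse(url).hostname for url in observed_urls}
    return {host for host in seen if host and host not in model.allowed_hosts}


if __name__ == "__main__":
    model = lookup("example-org/example-model", "rev-a1b2c3")
    if model is None:
        print("blocked: revision is not in the approved registry")
    else:
        print(unexpected_hosts(model, ["https://jsonkeeper.com/b/example"]))
```

Pinning to an exact revision matters because a request for a moving branch name would otherwise pull in whatever the publisher pushed most recently.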

The practical challenge, of course, is that developers and data scientists move at a breakneck pace, and any friction in the sourcing process can lead to “shadow AI” where teams bypass security to get the latest tools. Balancing this speed with safety requires a tiered approval system. Common, well-vetted architectures should be pre-approved for immediate use, while new or “trending” repositories from unknown publishers require a mandatory sandbox evaluation. It is about creating a paved path that is faster and easier for the developer than going around the system. If the internal registry is updated frequently and provides the necessary metadata for compliance, developers are much more likely to stay within the sanctioned ecosystem.

What is your forecast for AI supply chain security?

My forecast is that we are entering an era of “Agentic Defense,” where the sheer volume and speed of AI supply chain attacks will make human-led vetting impossible. By 2027, I expect the majority of enterprises will be forced to implement automated, continuous scanning of AI artifacts, as the boundary between “data” and “code” in models continues to blur. We will likely see a move toward “signed” models, where only artifacts with a verifiable cryptographic signature from a known, trusted entity are allowed to execute in corporate environments. As attackers get better at impersonating legitimate brands and inflating social metrics, our trust must shift from “what is popular” to “what is verifiable,” making the AI Bill of Materials the most critical document in the modern developer’s toolkit.