The sheer volume of data surging through global server farms has finally outpaced the capabilities of off-the-shelf networking hardware, forcing a total reinvention of how digital information travels across the planet. As enterprises push toward more complex artificial intelligence deployments and real-time global operations, the traditional “good enough” network has become a significant bottleneck. Amazon Web Services (AWS) has responded by abandoning the industry standard of buying third-party equipment, choosing instead to design every component of its networking stack from the ground up. This shift represents a transition from viewing the network as a collection of connected boxes to treating it as a single, massive, and programmable computer.

This evolution is fundamentally changing the relationship between businesses and their infrastructure. For decades, the network was a source of friction, defined by hardware limitations and the specific quirks of various equipment vendors. Today, the focus has shifted toward a seamless utility model where the underlying complexity is hidden, yet the performance is substantially higher. By taking full control of silicon, software, and physical transmission media, AWS is attempting to eliminate the “network tax” that has long slowed down innovation for large-scale digital enterprises.

The End of the “Good Enough” Network in the Age of Hyper-Scale Data

Modern businesses no longer operate on islands; they function within a hyper-connected ecosystem where even a millisecond of delay can equate to millions of dollars in lost revenue. The traditional approach to networking—using a patchwork of hardware from different manufacturers—simply cannot keep up with the demands of 2026-era workloads. When different devices run various versions of firmware, the result is a fragmented environment prone to unpredictable outages and security gaps. Enterprises have reached a tipping point where the standard, vendor-led networking model is no longer sufficient to support the massive data ingestion required by modern analytics.

Moreover, the complexity of managing these heterogeneous systems has become a drain on human capital. IT departments used to spend the majority of their time troubleshooting hardware incompatibilities or waiting for vendors to release critical security patches. By shifting away from this fragmented model, the industry is moving toward a standard of reliability that mirrors the power grid. This transition is not just about speed; it is about predictability. When a network is built on a unified foundation, every packet of data moves through a known path with a predictable latency, allowing developers to build more ambitious applications without fearing the “noisy neighbor” effect or unexpected throughput drops.

From Fragmented Hardware to a Unified Silicon Foundation

The most drastic change in this new architecture is the move toward a single, custom-designed silicon brain that powers every layer of the network. In the past, a cloud provider might use one type of chip for the core of the network and an entirely different one for the edges where it connects to the internet. AWS has disrupted this by implementing a custom application-specific integrated circuit (ASIC) that serves as a universal foundation. This hardware uniformity means that whether a data packet is moving between two servers in the same rack or across an entire continent, it is processed by the same logic with the same efficiency.

Coupled with this custom silicon is a specialized operating system known as NetOS. Unlike general-purpose networking software, this Linux-based system is tuned specifically for the custom chips it runs on. This tight integration between hardware and software allows for global-scale updates that were previously impossible. If a new cyber threat emerges, a single software push can update millions of devices across the global fleet simultaneously. This level of agility removes the reliance on third-party hardware vendors, who often take months to certify and release critical patches. For the enterprise customer, this means a more resilient environment that adapts to threats and performance requirements in real time.

Engineering the Invisible Utility Through Vertical Integration and Custom ASICs

Vertical integration is the secret weapon that allows a cloud giant to treat networking as an invisible utility. By designing its own chips, AWS can optimize for specific enterprise needs—such as power efficiency and high-density port configurations—that generic chipmakers often overlook. This control allows for the creation of networking fabrics that are not only faster but also significantly more reliable. When the same entity designs the hardware, writes the software, and manages the deployment, the traditional “finger-pointing” that occurs during a system failure is eliminated, leading to faster recovery times and higher uptime.

Furthermore, this engineering philosophy extends to the very way data is prioritized within the wires. Custom silicon allows for sophisticated traffic management that can distinguish between a low-priority background backup and a high-priority financial transaction. By embedding these capabilities directly into the ASIC, the network can make routing decisions in nanoseconds without burdening the central processor. This creates a “frictionless” experience for the user, where the network simply works in the background, scaling automatically as demand spikes. The end goal is an infrastructure that feels like a natural extension of the server itself rather than a separate, complex entity to be managed.
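The prioritization described above can be pictured with a toy strict-priority scheduler. This is only a software sketch of the general idea, not a description of how the AWS ASIC works; the class numbers and packet names are made up for illustration.

```python
import heapq
from itertools import count


class StrictPriorityScheduler:
    """Toy strict-priority queue: a lower class number is always served first."""

    def __init__(self):
        self._heap = []
        self._seq = count()  # tie-breaker that keeps FIFO order within a class

    def enqueue(self, traffic_class, packet):
        heapq.heappush(self._heap, (traffic_class, next(self._seq), packet))

    def dequeue(self):
        """Return the next packet to send, or None if the queue is empty."""
        if not self._heap:
            return None
        _, _, packet = heapq.heappop(self._heap)
        return packet


def drain(scheduler):
    """Dequeue everything and return the packets in transmission order."""
    sent = []
    while (packet := scheduler.dequeue()) is not None:
        sent.append(packet)
    return sent


# Hypothetical classes: 0 = financial transaction, 2 = background backup.
demo = StrictPriorityScheduler()
demo.enqueue(2, "backup-1")
demo.enqueue(0, "trade-1")
demo.enqueue(2, "backup-2")
demo.enqueue(0, "trade-2")
demo_order = drain(demo)
```

In this sketch the two trades leave before either backup packet, even though a backup packet arrived first. Real hardware typically adds safeguards so that low-priority traffic is not starved indefinitely.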

The Staggering Physicality of the Cloud: Hollow-Core Fiber and 100Tbps Switching

While much of the innovation happens in code and silicon, the physical reality of the network is equally impressive. AWS has deployed switches that currently handle 51.2 terabits per second, and the near-term roadmap includes a jump to 102.4Tbps capacity. To move this much data, the industry is shifting toward 1.6Tbps port speeds. All of this bandwidth feeds the 20 million kilometers of fiber-optic cable that form the backbone of the global cloud. However, the most significant leap isn’t just in the speed of the switch, but in the medium through which the light travels.
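To put those figures in proportion, a quick calculation shows how many 1.6Tbps ports each switch generation could drive. It assumes, purely for illustration, that the full switching capacity is exposed as ports of one uniform speed; the article does not state actual port counts.

```python
def max_ports(switch_capacity_gbps, port_speed_gbps):
    """Number of full-speed ports a switch could drive at line rate."""
    return switch_capacity_gbps // port_speed_gbps


PORT_SPEED_GBPS = 1_600            # 1.6 Tbps
CURRENT_SWITCH_GBPS = 51_200       # 51.2 Tbps
NEXT_SWITCH_GBPS = 102_400         # 102.4 Tbps

current_ports = max_ports(CURRENT_SWITCH_GBPS, PORT_SPEED_GBPS)
next_ports = max_ports(NEXT_SWITCH_GBPS, PORT_SPEED_GBPS)
```

Under that assumption, the current generation works out to 32 ports at 1.6Tbps and the next generation to 64.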

The introduction of hollow-core fiber-optic technology represents a fundamental change in the transmission medium. Traditional fiber sends light through solid glass, where it travels at only about two-thirds of its speed in a vacuum. Hollow-core fiber, as the name suggests, allows light to travel through an air-filled center, where it moves at close to its vacuum speed. This reduces latency by approximately 30 percent, a staggering improvement in a world where microseconds determine the success of high-frequency trading or industrial automation. This physical upgrade allows data centers to be spaced farther apart while still behaving as if they are in the same building, giving enterprises more geographic flexibility for their critical data.
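The roughly 30 percent figure can be checked with a back-of-the-envelope propagation-delay calculation. The refractive indices below are assumptions (about 1.47 for solid silica fiber and about 1.0003 for an air-filled core), and the sketch ignores switching, amplification, and routing overhead.

```python
C_VACUUM_KM_PER_S = 299_792.458   # speed of light in a vacuum

SOLID_CORE_INDEX = 1.47     # assumed typical index for solid silica fiber
HOLLOW_CORE_INDEX = 1.0003  # assumed index for an air-filled core


def one_way_delay_us(distance_km, refractive_index):
    """Propagation delay in microseconds over a fiber of the given length."""
    speed_km_per_s = C_VACUUM_KM_PER_S / refractive_index
    return distance_km / speed_km_per_s * 1e6


def compare(distance_km):
    """Return (solid delay, hollow delay, fractional latency reduction)."""
    solid = one_way_delay_us(distance_km, SOLID_CORE_INDEX)
    hollow = one_way_delay_us(distance_km, HOLLOW_CORE_INDEX)
    return solid, hollow, 1 - hollow / solid


solid_us, hollow_us, reduction = compare(100)  # a hypothetical 100 km span
```

With these assumed indices, a 100 km span takes about 490 microseconds one way in solid glass and about 334 microseconds in hollow-core fiber, a reduction of roughly 32 percent, in line with the approximate figure quoted above.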

Powering the AI Gold Rush with UltraCluster Topology and Microsecond Precision

The explosion of generative AI has placed unprecedented stress on networking infrastructure. Training a large language model requires thousands of GPUs to work in perfect synchronization, which is only possible if the network connecting them is nearly instantaneous. AWS has addressed this through a specialized “UltraCluster” topology. This design minimizes the number of “hops” a data packet must take between servers, ensuring that the heavy computational load of AI training isn’t slowed down by network congestion. Without this specialized layout, the cost and time required to train modern AI models would be prohibitively high for most companies.
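The effect of removing network tiers on hop count can be illustrated with a small graph search. The two topologies below are generic textbook layouts chosen for illustration (a three-tier tree and a two-tier leaf-spine); they are not the actual UltraCluster design, and all node names are hypothetical.

```python
from collections import deque


def undirected(edges):
    """Build an adjacency map from a list of bidirectional links."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def hops(graph, src, dst):
    """Number of links on the shortest path, found by breadth-first search."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None  # unreachable


# Hypothetical three-tier tree: server - access - aggregation - core.
three_tier = undirected([
    ("s1", "acc1"), ("s2", "acc2"),
    ("acc1", "agg1"), ("acc2", "agg2"),
    ("agg1", "core"), ("agg2", "core"),
])

# Hypothetical two-tier leaf-spine fabric.
leaf_spine = undirected([
    ("s1", "leaf1"), ("s2", "leaf2"),
    ("leaf1", "spine"), ("leaf2", "spine"),
])

three_tier_hops = hops(three_tier, "s1", "s2")
leaf_spine_hops = hops(leaf_spine, "s1", "s2")
```

In this toy comparison the worst-case path between two servers drops from six links to four. Each hop removed takes one more device's queueing and forwarding delay out of every exchange between GPUs.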

Beyond raw speed, the network has also gained microsecond-level time precision. By synchronizing the internal clocks of every device across the global network, AWS enables “globally consistent transactions.” Previously, achieving this level of synchronization required expensive, specialized hardware located on-premises. Now, a developer can build a distributed database that keeps its data consistent across multiple continents, because the network itself provides a high-precision time signal that lets every node agree on the order of writes. This capability is a game-changer for industries like global finance and complex supply chain management, where the order of events must be captured reliably.
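Why clock precision matters for ordering can be shown with timestamps that carry an error bound. This is a generic interval-comparison sketch, not the mechanism AWS uses; the `Stamp` type and the error values are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stamp:
    t_us: float      # local clock reading, in microseconds
    error_us: float  # assumed bound on how wrong that clock may be

    @property
    def earliest(self):
        return self.t_us - self.error_us

    @property
    def latest(self):
        return self.t_us + self.error_us


def order(a, b):
    """Order two events only when their uncertainty intervals do not overlap."""
    if a.latest < b.earliest:
        return "a-before-b"
    if b.latest < a.earliest:
        return "b-before-a"
    return "ambiguous"


# Two events 20 microseconds apart, recorded on different machines.
precise = order(Stamp(1_000, 5), Stamp(1_020, 5))          # microsecond-level clocks
coarse = order(Stamp(1_000, 1_000), Stamp(1_020, 1_000))   # millisecond-level clocks
```

With a 5-microsecond error bound the two events can be ordered with confidence; with a 1-millisecond bound the same pair is ambiguous, and a database would have to wait or coordinate before committing.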

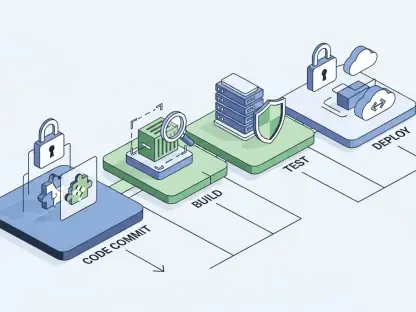

Navigating the Strategic Shift From Infrastructure Maintenance to Workload Optimization

The most significant takeaway for enterprise leaders is that the era of managing “the box” is over. As networking becomes more automated and integrated, the role of the IT professional must evolve from a hardware specialist to a strategic architect. Rather than worrying about port configurations or firmware versions, teams are now free to focus on workload optimization. This involves analyzing which specific applications require the ultra-low latency of hollow-core fiber and which can operate on more standard, cost-effective tiers. Success in this environment requires a deep understanding of how to align business goals with these new technical capabilities.

However, this high-performance world also demands a new approach to security and cost management. While the network is more resilient, the responsibility for how data is moved and stored still rests with the enterprise. Organizations are encouraged to re-evaluate their operational models to ensure they can fully exploit the benefits of 100Tbps switching without falling into the trap of vendor lock-in. Developers are starting to build applications that are “network-aware,” utilizing the microsecond precision and massive bandwidth to create services that were once thought impossible. Moving forward, the focus is on leveraging this invisible utility to drive innovation rather than simply maintaining the status quo of the digital plumbing.