The traditional barrier between envisioning a spatial experience and deploying one is shrinking as Meta’s Immersive Web SDK (IWSDK) integrates agentic workflows. By shifting much of the work from hand-written syntax to AI-driven intent, the framework changes how virtual reality content is built. Instead of wrestling with low-level logic, developers orchestrate high-level systems through an ecosystem designed for speed and precision.

Evolution of Autonomous Workflows in Immersive Environments

This transition is more than a routine update; it changes the working model for spatial computing. While previous iterations required exhaustive knowledge of WebGL or specialized libraries, the new IWSDK uses autonomous agents to handle the foundational scaffolding. This enables a move toward automated creation in which the tooling takes the spatial context of a request into account.

The emergence of these workflows signals a move away from the manual-first era. By abstracting the complexities of environmental rendering and sensor integration, the system lets creators focus on narrative and user experience. This evolution is consistent with the broader industry trend of opening high-end technology to a wider range of designers.

Technical Pillars of the Agentic WebXR Ecosystem

AI-Assisted Agentic Coding Workflows

The core of this system lies in its deep integration with tools like Claude Code and GitHub Copilot. Unlike standard autocomplete features, these agents work within specific spatial constraints, allowing them to automate physics setup and hand-tracking logic with minimal human intervention. This focus is intended to keep the generated code suited to the demands of head-mounted displays.

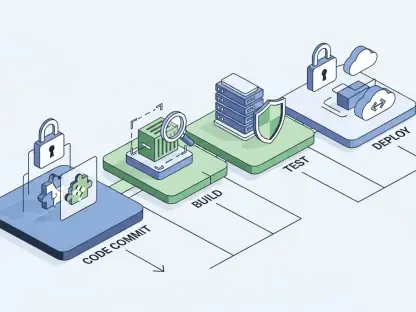

The Closed-Loop System for Validation and Testing

What sets this framework apart is its “closed-loop” architecture. The AI does not just write code and walk away; it validates the output within the runtime environment. By identifying bugs and remediating logic errors autonomously, often before a human sees the build, the system aims for a level of functional stability that is difficult to maintain at such high development speeds.
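
The closed-loop idea can be sketched in a few lines. The TypeScript below only illustrates a generate, validate, repair cycle; it is not IWSDK's actual implementation. `closedLoop`, `GenerateFn`, `ValidateFn`, and the `maxAttempts` budget are hypothetical names, and the generator and validator are passed in as plain functions standing in for the AI agent and the runtime check.

```typescript
// Hypothetical types for illustration; none of these names come from IWSDK.
type ValidationResult = { ok: boolean; errors: string[] };
type GenerateFn = (prompt: string, feedback: string[]) => string;
type ValidateFn = (code: string) => ValidationResult;

interface LoopOutcome {
  code: string;
  attempts: number;
  passed: boolean;
}

// Generate code, validate it, and feed the errors back into the next
// attempt until validation passes or the attempt budget runs out.
function closedLoop(
  prompt: string,
  generate: GenerateFn,
  validate: ValidateFn,
  maxAttempts = 3,
): LoopOutcome {
  let feedback: string[] = [];
  let code = "";
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    code = generate(prompt, feedback);
    const result = validate(code);
    if (result.ok) {
      return { code, attempts: attempt, passed: true };
    }
    feedback = result.errors; // the next attempt sees what failed
  }
  return { code, attempts: maxAttempts, passed: false };
}
```

The attempt budget matters in a design like this: without it, a request the agent cannot satisfy would loop indefinitely instead of being handed back to a human.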

Current Trends in Rapid Prototyping and Open-Source Growth

The recent push toward rapid deployment cycles has been fueled by the open-source nature of the IWSDK. With the SDK released under the MIT license, the community has rapidly expanded the library of pre-validated components. This collective effort reduces the “cold start” problem for new projects, allowing teams to iterate on complex mechanics in hours rather than months.

Moreover, the shift toward open frameworks has lowered the entry barrier for independent creators. By moving away from proprietary, locked-down engines, the industry is seeing a surge in experimental content. This grassroots innovation is vital for discovering new interaction metaphors that traditional studios might overlook in favor of safe, established patterns.

Real-World Implementations and Strategic Deployments

The practical potential of this technology is best illustrated by the “Project Flowerbed” gardening demo. Recreating a complex VR environment in only fifteen hours served as a wake-up call for the industry, suggesting that agentic workflows can stand in for tens of thousands of lines of hand-written code. This efficiency could let smaller teams produce polished, high-end interactions on a shoestring budget.

Strategically, browser-based VR offers a significant advantage by bypassing traditional app stores. Direct URL distribution allows for instant updates and access across any hardware with a WebXR-capable browser. This low-friction entry point is essential for scaling the user base and keeping immersive content as accessible as a standard website.
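
Opening a URL on arbitrary hardware means the page has to work out what the visiting device can do. The sketch below shows one way to make that decision using the WebXR Device API's `isSessionSupported` call. The `XRSystemLike` interface, the `pickEntryMode` function, and the `"flat-fallback"` label are assumptions made for illustration, not part of IWSDK.

```typescript
// Minimal structural type covering the single WebXR call used here.
// In a browser, the object to pass in is `navigator.xr` (may be undefined).
interface XRSystemLike {
  isSessionSupported(mode: string): Promise<boolean>;
}

type EntryMode = "immersive-vr" | "flat-fallback";

// Decide how to present the experience when a visitor opens the URL.
async function pickEntryMode(xr: XRSystemLike | undefined): Promise<EntryMode> {
  if (!xr) {
    return "flat-fallback"; // this browser exposes no WebXR at all
  }
  try {
    const supported = await xr.isSessionSupported("immersive-vr");
    return supported ? "immersive-vr" : "flat-fallback";
  } catch {
    return "flat-fallback"; // e.g. the query was blocked by a permissions policy
  }
}
```

Falling back to a flat, non-immersive view rather than showing an error keeps the same link usable on phones and desktops, which is the point of URL-based distribution.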

Technical Hurdles and Market Constraints

Despite these leaps, technical hurdles remain, particularly around performance consistency across diverse hardware. AI-generated spatial logic can be resource-heavy, requiring developers to step back into granular engineering roles to optimize frame rates. Finding the right balance between high-level abstraction and low-level performance tuning is the current frontier for the platform.
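
Frame-rate tuning starts with a simple calculation: at a refresh rate of f Hz, each frame has 1000 / f milliseconds to finish. The sketch below checks a run of measured frame durations against that budget. `frameBudgetMs`, `summarizeFrames`, and `FrameReport` are hypothetical helper names, and the sample numbers in the usage note are invented for illustration, not measurements.

```typescript
// Time available per frame, in milliseconds, at a given refresh rate.
function frameBudgetMs(refreshHz: number): number {
  return 1000 / refreshHz;
}

interface FrameReport {
  budgetMs: number;
  worstMs: number;
  overBudgetRatio: number; // share of frames that exceeded the budget
}

// Summarize a run of per-frame durations (in ms) against the budget.
function summarizeFrames(durationsMs: number[], refreshHz: number): FrameReport {
  const budgetMs = frameBudgetMs(refreshHz);
  if (durationsMs.length === 0) {
    return { budgetMs, worstMs: 0, overBudgetRatio: 0 };
  }
  const overBudget = durationsMs.filter((d) => d > budgetMs).length;
  return {
    budgetMs,
    worstMs: Math.max(...durationsMs),
    overBudgetRatio: overBudget / durationsMs.length,
  };
}
```

For example, `summarizeFrames([10, 12, 20, 11], 90)` reports a budget of roughly 11.1 ms, a worst frame of 20 ms, and half the frames over budget, which is the kind of signal that sends a developer back to hand-tune generated logic.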

On the market side, there is a tension between automated ease and creative control. While the agents are excellent at standard tasks, they can struggle with unconventional physics. For specialized use cases, the need for manual oversight remains a bottleneck that the community is still working to address through better prompting and refined training models.

The Future of Browser-Based Spatial Computing

Looking ahead, the scaling of content for the million-plus monthly WebXR users will depend on natural-language creation. We are moving toward a future where a simple spoken description can generate a fully functional room with interactive objects. This democratization will likely lead to a massive influx of user-generated environments, similar to the early days of the 2D web.

The long-term impact on the metaverse could be a shift from static platforms to dynamic, instantly generated worlds. As these agentic tools become more refined, the distinction between a “user” and a “developer” will continue to blur. This shift suggests a more fluid digital landscape where environments are built and modified in real time based on the needs of the participants.

Final Assessment of Agentic WebXR Frameworks

The updated IWSDK marks a clear turning point for immersive web development, narrowing the gap between complexity and accessibility. By automating the most tedious aspects of the development lifecycle, the framework frees developers to focus on genuine innovation. Early results point to a substantial increase in efficiency that has already begun to reshape how developers approach spatial projects.

Moving forward, stakeholders should prioritize integrating these agentic tools into existing pipelines to stay competitive. The focus must now shift toward refining the precision of AI-generated logic and exploring how these automated workflows can be used to create more inclusive, accessible virtual spaces. The foundation has been laid; the next phase will be defined by how creatively we use this newfound speed.