Anand Naidu is a seasoned expert in the realm of Enterprise Information Technology, specializing in the delicate balance between frontend and backend development. With years of experience navigating complex coding architectures and various programming languages, he provides a grounded perspective on the practical implementation of artificial intelligence within corporate workflows. In this discussion, we explore the findings of recent research regarding document degradation in AI systems, the varying reliability of AI across technical domains, and the shifting role of human expertise as organizations transition from manual production to automated supervision.

Document degradation can reach 50% over repeated AI interactions as errors compound silently. How should project managers restructure workflows to catch these sparse but severe errors, and what specific checkpoints are necessary to ensure that a document remains intact after multiple edits?

To combat the 50% average degradation seen across models, project managers must pivot from a linear “set-and-forget” workflow to a cyclical validation loop. First, implement a “round-trip” verification after every three to five interactions, where the AI is tasked with reconstructing the original intent or key metrics of the document to see whether they still align. Second, add a mandatory human-in-the-loop checkpoint designed specifically for “artifact integrity” rather than just stylistic tone. This involves using domain-specific evaluators (for example, a senior developer reviewing a 15,000-token codebase) to ensure that the AI isn’t silently dropping critical logic or content while it edits. Finally, organizations should use version control systems that flag the percentage of content changed or deleted, as losing 25% of a document over 20 interactions is a massive red flag that current automated systems often miss.
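As a rough illustration of the "flag the percentage of content deleted" checkpoint, the Python sketch below compares an original document with its edited version using the standard library's `difflib`. The function names `deleted_fraction` and `needs_human_review` are hypothetical, and the 25% threshold is only an assumption borrowed from the figure mentioned in the answer; a real workflow would tune it per document type.

```python
from difflib import SequenceMatcher


def deleted_fraction(original: str, edited: str) -> float:
    """Return the share of the original's words that did not survive the edit.

    Words are compared in order, so text that was moved or reworded
    counts as lost. This is a crude proxy, not a semantic check.
    """
    old_words = original.split()
    new_words = edited.split()
    if not old_words:
        return 0.0
    matcher = SequenceMatcher(None, old_words, new_words, autojunk=False)
    # Sum the sizes of all in-order matching runs of words.
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return 1.0 - kept / len(old_words)


def needs_human_review(original: str, edited: str, threshold: float = 0.25) -> bool:
    """Flag the edit when the lost share reaches the (assumed) threshold."""
    return deleted_fraction(original, edited) >= threshold


if __name__ == "__main__":
    before = "alpha beta gamma delta epsilon zeta eta theta"
    after = "alpha beta gamma delta epsilon zeta"
    print(f"lost: {deleted_fraction(before, after):.0%}")
    print("escalate to reviewer:", needs_human_review(before, after))
```

A check like this only catches content that disappears. It says nothing about content that stays in place but changes meaning, which is why it complements the human checkpoint rather than replacing it.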

Automated coding in Python currently shows higher reliability than tasks in specialized fields like music notation or crystallography. Why does programming lend itself better to AI delegation than other technical domains, and what criteria should a company use to decide if a department is ready for AI integration?

Python thrives in the AI era because its syntax is highly standardized and the volume of training data is immense compared to niche fields like crystallography or genealogy. In our testing of 52 professional domains, Python was the only one where most models were considered “ready” because the logic is deterministic and easily verifiable through execution. A company should only consider a department ready for AI integration if the tasks are reversible and the output can be validated through objective, mathematical means. If a department relies on “messy” data where context is noisy and files are stale, it’s a sign that the AI will struggle to preserve the integrity of the work over long-term workflows.

Frontier models often subtly distort information rather than making obvious mistakes, requiring high-level expertise to catch. How does this change the cost-benefit analysis of reducing headcount in favor of AI, and what specific training do supervisors need to identify “silent corruption” in complex technical files?

The narrative that AI allows for immediate headcount reduction is a dangerous fallacy because as models get “stronger,” their errors become harder to spot. While weaker models might visibly delete text, frontier models such as GPT-4 or Claude often keep the content present but subtly alter its meaning, which requires high-level expertise to identify. This shifts the cost-benefit analysis: you aren’t necessarily saving money on labor; you are moving your most expensive experts from “production” to “supervision and accountability.” Supervisors need specialized training in “forensic review,” learning how to use diff-checking tools and automated “checker” agents to look for logic drift that casual inspection would miss.
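One narrow form of such a diff-based check can be sketched in Python: compare the numeric values that appear before and after an edit, since a changed figure is a kind of distortion that leaves the surrounding text looking untouched. The helper `numeric_drift` and its regular expression are hypothetical simplifications (for instance, thousands separators such as "15,000" are not handled), so treat this as a starting point for a reviewer's toolkit rather than a complete detector.

```python
import re
from collections import Counter

# Assumed pattern: integers or decimals, optionally followed by a percent sign.
NUMBER = re.compile(r"\d+(?:\.\d+)?%?")


def numeric_drift(original: str, edited: str) -> dict:
    """Report numbers that vanished from, or newly appeared in, the edited text."""
    before = Counter(NUMBER.findall(original))
    after = Counter(NUMBER.findall(edited))
    return {
        "missing": sorted((before - after).elements()),
        "new": sorted((after - before).elements()),
    }


if __name__ == "__main__":
    v1 = "Revenue grew 12% to 4.5 million in 2023."
    v2 = "Revenue grew 21% to 4.5 million in 2023."
    # The sentence still reads naturally, but one figure has changed.
    print(numeric_drift(v1, v2))
```

Any non-empty result is a prompt for an expert to look closer. An empty result does not prove the edit is faithful.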

Increasing document length and the presence of distractor files significantly worsen AI performance. When dealing with messy, real-world data, what architectural guardrails or mathematical verification methods can prevent a model from losing focus, and how do these interventions impact the speed of the automation?

When you introduce distractor files or push document lengths toward the 15,000-token mark, the AI’s “attention” begins to fragment, leading to severe corruption. To fix this, we implement deterministic verification steps—mathematical guardrails that check for data consistency, such as ensuring a ledger still balances after an edit. Another approach is “prompt narrowing,” where we break large documents into smaller, isolated chunks to prevent the model from getting distracted by irrelevant context. While these interventions add a layer of latency and reduce the raw speed of automation, they are non-negotiable for preserving document integrity in an enterprise environment where an error in a compliance record or contract could be catastrophic.
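The two guardrails described here can be sketched in a few lines of Python. The ledger layout, the function names `ledger_balances` and `split_into_chunks`, and the use of a word count as a stand-in for a token count are all assumptions made for illustration; the sample entries are invented.

```python
from decimal import Decimal


def ledger_balances(entries) -> bool:
    """Deterministic invariant: total debits must equal total credits.

    `entries` is a list of (account, debit, credit) tuples with amounts
    given as strings, so Decimal avoids floating-point rounding.
    """
    total_debit = sum((Decimal(debit) for _, debit, _ in entries), Decimal("0"))
    total_credit = sum((Decimal(credit) for _, _, credit in entries), Decimal("0"))
    return total_debit == total_credit


def split_into_chunks(text: str, max_words: int = 200) -> list:
    """Group paragraphs into chunks of at most roughly `max_words` words.

    A single paragraph longer than the limit is kept whole.
    """
    chunks, current, count = [], [], 0
    for paragraph in text.split("\n\n"):
        size = len(paragraph.split())
        if current and count + size > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(paragraph)
        count += size
    if current:
        chunks.append("\n\n".join(current))
    return chunks


if __name__ == "__main__":
    before = [("cash", "100.00", "0"), ("sales", "0", "100.00")]
    after = [("cash", "100.00", "0"), ("sales", "0", "10.00")]  # edit altered a digit
    print("before edit balances:", ledger_balances(before))
    print("after edit balances:", ledger_balances(after))
    print(split_into_chunks("a b c\n\nd e f\n\ng h", max_words=5))
```

Rejecting any edit for which the invariant no longer holds costs one extra pass per edit, which is the latency trade-off mentioned above.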

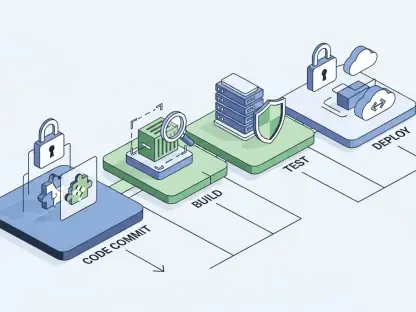

Multi-agent systems sometimes increase document corruption rather than fixing it through peer review. In what scenarios does adding a second “checker” AI fail to improve accuracy, and what are the best practices for designing an oversight system that actually reduces the error rate?

A second “checker” AI fails when it is built on the same underlying foundation model as the “creator” AI, as they often share the same blind spots and will agree on a hallucination. In fact, research shows some multi-agent setups actually accelerate degradation because the agents end up compounding each other’s mistakes over repeated interactions. The best practice is to design a heterogeneous oversight system where the checker uses a different architectural model or a fine-tuned version trained on your specific proprietary data. This creates a “diversity of thought” within the automation flow, making it far more likely that the oversight agent will catch the sparse, severe errors the first agent introduced.

What is your forecast for the future of delegated AI in the enterprise?

My forecast is that we will see a shift away from “generalist” AI agents toward highly specialized, “deterministic” micro-models that prioritize preservation over creativity. We are entering a phase where the “human layer” becomes more valuable than ever, as the primary role of the enterprise worker will be to sign off on the integrity of AI-generated artifacts. While AI will master specific silos like Python programming within the next few years, the broader landscape of 50+ professional domains will remain a “human-supervised” zone where AI acts as a fast but untrustworthy assistant. Organizations that rush to remove domain expertise to save on headcount will likely suffer from “silent corruption” that could take years to fully detect and repair.