The sheer velocity of modern software delivery depends heavily on the largely unseen work of thousands of anonymous contributors whose code sits at the heart of nearly every enterprise application. This shared body of work lets companies ship features at a pace that was unthinkable a decade ago, but it also creates a large, often invisible attack surface that most organizations are only beginning to understand. As a result, the software “supply chain”, the path code takes from a public repository to a production server, has become a front line for sophisticated attacks. This article explores the mechanics of these risks and provides a framework for securing the pipelines that deliver modern software.

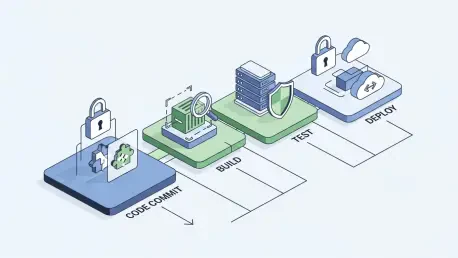

The objective here is to demystify open source governance by addressing the most pressing questions facing DevOps and security teams today. Readers will learn how vulnerabilities enter the system and, more importantly, how to build robust “gates” that stop compromised code before it reaches end users. By examining where development speed and defensive rigor intersect, this guide offers a roadmap from reactive patching to proactive, systemic resilience.

Key Questions and Strategic Concepts

Why Has the Open Source Supply Chain Become a Primary Target for Cyberattacks?

The pivot toward supply chain attacks is driven by a simple calculation of return on investment for the adversary. Rather than attempting to breach a single well-defended enterprise, attackers target the upstream components that hundreds or thousands of companies use simultaneously. By compromising a single popular library on a registry like npm or PyPI, a malicious actor can achieve a massive “one-to-many” impact, potentially gaining access to internal environments across diverse industries with a single exploit.

This vulnerability is compounded by the “set it and forget it” mentality that often plagues dependency management. Many projects rely on libraries that have not seen a security update in years, yet these components remain deeply embedded in critical business logic. The lack of visibility into these nested, or “transitive,” dependencies means that a developer might think they are using a secure tool, unaware that it rests upon a foundation of unmaintained and vulnerable code.
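The visibility gap is easy to see in miniature. The sketch below walks a dependency graph breadth-first and records how many levels below the application each package sits. The graph and all package names are hypothetical; a real tool would build the graph from a lock file or the package manager's metadata.

```python
from collections import deque

# Hypothetical dependency graph: each package maps to its direct dependencies.
# A real tool would read this from a lock file or package-manager metadata.
DEPENDENCY_GRAPH: dict[str, list[str]] = {
    "my-app": ["web-framework", "http-client"],
    "web-framework": ["template-engine", "http-client"],
    "http-client": ["url-parser"],
    "template-engine": ["markup-escape"],
    "url-parser": [],
    "markup-escape": [],
}


def transitive_dependencies(root: str, graph: dict[str, list[str]]) -> dict[str, int]:
    """Map every package reachable from `root` to its shortest depth.

    Depth 1 is a direct dependency; depth 2 or more is transitive.
    """
    depths: dict[str, int] = {}
    queue = deque([(root, 0)])
    while queue:
        package, depth = queue.popleft()
        for dep in graph.get(package, []):
            # Breadth-first order reaches each package first at its shortest depth.
            if dep != root and dep not in depths:
                depths[dep] = depth + 1
                queue.append((dep, depth + 1))
    return depths


if __name__ == "__main__":
    for name, depth in sorted(transitive_dependencies("my-app", DEPENDENCY_GRAPH).items()):
        kind = "direct" if depth == 1 else "transitive"
        print(f"{name}: depth {depth} ({kind})")
```

In this toy graph, `markup-escape` never appears in `my-app`'s own manifest, yet it ships with the application three levels down. Components at that depth are the ones most likely to go unpatched.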

What Is the Difference Between Inherited Vulnerabilities and Active Supply Chain Injections?

Security professionals generally categorize supply chain risks into two distinct buckets: known weaknesses and deliberate sabotage. Inherited vulnerabilities are the “known unknowns,” consisting of publicly documented flaws (CVEs) in legitimate software. These are often the result of unintentional coding errors that attackers exploit using automated scanners. While dangerous, they are predictable and can be managed through consistent patching and version updates, provided the organization has the inventory tools to find them.

In contrast, supply chain injections represent a much more insidious “unknown unknown.” They occur when an attacker deliberately inserts malicious code into a package, for example through typosquatting (registering a name similar to a popular package) or by hijacking a maintainer’s account. These attacks are particularly difficult to detect because the code is often technically “valid” and matches no published CVE, so traditional vulnerability scanners do not flag it. The malicious logic might be hidden in an installation script that exfiltrates environment variables or opens a backdoor the moment the package is installed in a build environment.
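One common heuristic for spotting typosquatting candidates is edit distance: a new package whose name is one or two character edits away from a popular one deserves a closer look. The sketch below is illustrative only. The `POPULAR_PACKAGES` list and the distance threshold are assumptions, the name normalization is simplified, and edit distance alone will not catch every naming trick (for example, names that append a plausible suffix to a real package).

```python
from typing import Optional

# Hypothetical list of popular package names worth protecting.
POPULAR_PACKAGES = ["requests", "numpy", "pandas", "django", "flask"]


def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution
            ))
        previous = current
    return previous[-1]


def possible_typosquat(candidate: str, known: list[str], max_distance: int = 2) -> Optional[str]:
    """Return the popular package `candidate` may be imitating, or None.

    An exact match is treated as legitimate; a near miss is suspicious.
    """
    name = candidate.lower().replace("_", "-")  # simplified normalization
    for target in known:
        distance = levenshtein(name, target)
        if 0 < distance <= max_distance:
            return target
    return None
```

With this list, `possible_typosquat("reqeusts", POPULAR_PACKAGES)` returns `"requests"`, while the exact name `"requests"` returns `None`.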

How Can Organizations Defend Against Dependency Confusion and Namespace Hijacking?

Dependency confusion is a specific structural flaw in which a build system is tricked into pulling a malicious public package instead of a legitimate private one. It typically arises when an organization's internal library shares its name with a package that an attacker has published on a public registry, often with an inflated version number. If the build tool consults both private and public registries and simply selects the highest version, it will resolve to the attacker’s public package. To prevent this, teams must move toward a zero-trust model for package resolution, using internal mirrors and strictly defined namespaces.
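The toy resolver below contrasts the two behaviors. The package names, version numbers, and the `INTERNAL_NAMES` set are hypothetical, and real ecosystems implement scoping through registry or repository-manager configuration (npm scopes, for example) rather than through code like this. The sketch only shows why “highest version wins” across mixed sources is dangerous.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    version: tuple[int, ...]
    source: str  # "internal" or "public"


# Hypothetical index contents: an attacker has published the internal
# package name on the public registry with an inflated version number.
AVAILABLE = [
    Candidate("acme-billing", (1, 4, 2), "internal"),
    Candidate("acme-billing", (99, 0, 0), "public"),
    Candidate("requests", (2, 31, 0), "public"),
]

INTERNAL_NAMES = {"acme-billing"}  # hypothetical: names the organization owns


def resolve_naive(name: str) -> Candidate:
    """Vulnerable behavior: pick the highest version from any source."""
    matches = [c for c in AVAILABLE if c.name == name]
    return max(matches, key=lambda c: c.version)


def resolve_scoped(name: str) -> Candidate:
    """Safer behavior: internal names may only come from the internal source."""
    matches = [c for c in AVAILABLE if c.name == name]
    if name in INTERNAL_NAMES:
        matches = [c for c in matches if c.source == "internal"]
        if not matches:
            # Fail closed instead of falling back to the public registry.
            raise LookupError(f"{name} is internal but missing from the internal server")
    return max(matches, key=lambda c: c.version)
```

`resolve_naive("acme-billing")` selects the public `99.0.0` upload, whereas `resolve_scoped` returns the internal `1.4.2` build and would raise an error, rather than fall back to the public registry, if the internal copy were missing.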

Effective defense requires eliminating implicit trust in public registries. By routing all external requests through a private repository manager, organizations can enforce strict scoping rules that ensure internal packages are always sourced from internal servers. Developers should also commit “lock files” that pin every dependency to a specific, verified version and cryptographic hash. Then, if a package on a public registry is tampered with, the build fails on the hash mismatch rather than ingesting the modified, malicious code.
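The guarantee a hash-pinned lock file provides comes down to a simple comparison, sketched below. This is a minimal illustration, not any package manager's implementation: the digest recorded when a dependency was reviewed is compared with the digest of whatever is downloaded later, and any difference stops the build.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest (the format pip's --hash option uses)."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_sha256: str) -> None:
    """Fail closed: raise unless the downloaded bytes match the pinned hash."""
    actual = sha256_hex(data)
    if actual != pinned_sha256.lower():
        raise ValueError(f"hash mismatch: expected {pinned_sha256}, got {actual}")


if __name__ == "__main__":
    vetted = b"contents of the package archive as originally reviewed"
    pinned = sha256_hex(vetted)  # recorded in the lock file at review time

    verify_artifact(vetted, pinned)  # same bytes: passes silently

    tampered = vetted + b"\nimport os  # injected payload"
    try:
        verify_artifact(tampered, pinned)
    except ValueError as err:
        print("build stopped:", err)
```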

What Role Does the Software Bill of Materials (SBOM) Play in Modern Pipeline Security?

A Software Bill of Materials acts as an ingredient list for an application, providing a machine-readable inventory of every library, plugin, and transitive dependency used in a build. When a zero-day is disclosed, a queryable store of SBOMs lets security teams quickly identify every affected service across their infrastructure without manually scanning thousands of individual repositories. This can turn a chaotic, multi-week search into a targeted response measured in minutes or hours, significantly shrinking the window of opportunity for an attacker.
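To illustrate the incident-response use case, the sketch below searches a set of SBOMs for a vulnerable component. The service names, package names, and versions are hypothetical, and the documents keep only a few fields of the CycloneDX JSON format (`bomFormat`, `specVersion`, `components`, with each component's `name` and `version`). A production query would also handle nested components, match on package URLs (`purl`), and compare version ranges rather than an explicit list.

```python
import json

# Hypothetical SBOMs for two services, trimmed to a few CycloneDX JSON fields.
SBOMS: dict[str, dict] = {
    "checkout-service": json.loads("""
    {
      "bomFormat": "CycloneDX",
      "specVersion": "1.5",
      "components": [
        {"type": "library", "name": "example-parser", "version": "3.2.0"},
        {"type": "library", "name": "example-logger", "version": "1.0.4"}
      ]
    }"""),
    "search-service": json.loads("""
    {
      "bomFormat": "CycloneDX",
      "specVersion": "1.5",
      "components": [
        {"type": "library", "name": "example-parser", "version": "3.3.1"}
      ]
    }"""),
}


def affected_services(sboms: dict[str, dict], package: str, vulnerable: set[str]) -> dict[str, str]:
    """Return {service: version} for every service shipping a vulnerable version."""
    hits: dict[str, str] = {}
    for service, bom in sboms.items():
        for component in bom.get("components", []):
            if component.get("name") == package and component.get("version") in vulnerable:
                hits[service] = component["version"]
    return hits


if __name__ == "__main__":
    # Suppose an advisory names example-parser 3.2.0 and 3.2.1 as affected.
    print(affected_services(SBOMS, "example-parser", {"3.2.0", "3.2.1"}))
    # → {'checkout-service': '3.2.0'}
```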

Beyond incident response, the SBOM serves as a critical governance tool that enables policy enforcement at the build gate. By integrating SBOM generation into the CI/CD pipeline, organizations can automatically reject builds that include unauthorized licenses, abandoned projects, or components from high-risk geographic regions. This provides a continuous audit trail that moves beyond a static snapshot, ensuring that the security posture of an application is verified every time a single line of code is changed or a new dependency is introduced.
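A build gate built on SBOM data can be as simple as a script that exits with a nonzero status when a policy is violated. The sketch below checks only licenses, reading the CycloneDX `licenses[].license.id` field. The denied-license set is a hypothetical policy chosen for illustration, and checks for abandoned projects or other criteria would be added in the same way.

```python
import sys

# Hypothetical policy: SPDX license ids this organization does not permit.
DENIED_LICENSES = {"AGPL-3.0-only"}


def policy_violations(bom: dict, denied: set[str]) -> list[str]:
    """List components whose declared licenses break the policy."""
    violations: list[str] = []
    for component in bom.get("components", []):
        label = f"{component.get('name')}@{component.get('version')}"
        ids = {
            entry["license"]["id"]
            for entry in component.get("licenses", [])
            if "id" in entry.get("license", {})
        }
        if not ids:
            # Fail closed: an undeclared license is treated as a violation.
            violations.append(f"{label}: no SPDX license id declared")
        for license_id in sorted(ids & denied):
            violations.append(f"{label}: license {license_id} is not permitted")
    return violations


def gate(bom: dict, denied: set[str]) -> int:
    """Print violations to stderr and return a process exit code."""
    violations = policy_violations(bom, denied)
    for line in violations:
        print(f"POLICY VIOLATION {line}", file=sys.stderr)
    return 1 if violations else 0
```

In a CI job, the script would end with `sys.exit(gate(bom, DENIED_LICENSES))`, so any violation fails the pipeline instead of merely producing a report.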

How Should Build Environments Be Hardened to Limit the Impact of Malicious Scripts?

The environment in which software is compiled is often overlooked as a potential vector for data theft. Many malicious packages use “post-install” scripts to scan the local machine for secrets, cloud provider credentials, or SSH keys. To mitigate this risk, build processes should be executed in ephemeral, isolated environments—such as short-lived containers—that are stripped of all unnecessary permissions. If a build script attempts to make an unauthorized network connection to an external command-and-control server, a properly hardened environment will block the egress traffic.
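Stripping secrets from the build environment is one concrete piece of this hardening. The sketch below runs a build command with an allowlisted set of environment variables, so an install script cannot read tokens or cloud credentials that happen to be set on the build agent. The variable allowlist is an assumption, and this step does not block egress traffic; that still requires container isolation and network policy.

```python
import os
import subprocess

# Hypothetical allowlist: the only variables this build step is assumed to need.
ALLOWED_ENV_VARS = {"PATH", "HOME", "LANG", "TMPDIR"}


def scrubbed_env(source: dict[str, str], allowed: set[str]) -> dict[str, str]:
    """Copy only allowlisted variables; tokens and cloud keys are dropped."""
    return {key: value for key, value in source.items() if key in allowed}


def run_build_step(cmd: list[str]) -> subprocess.CompletedProcess:
    """Run one build command with a minimal environment.

    This limits what an install script can read from the environment; it does
    not block network egress or file access, which need container and network
    controls.
    """
    return subprocess.run(
        cmd,
        env=scrubbed_env(dict(os.environ), ALLOWED_ENV_VARS),
        check=True,
        capture_output=True,
        text=True,
    )
```

For example, `run_build_step(["npm", "ci"])` would run the install with only those allowlisted variables visible (whichever of them are set on the agent).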

Furthermore, implementing “least privilege” for build agents is non-negotiable in a high-security DevOps culture. Build servers should not have broad access to production environments or sensitive internal databases. By restricting network access to only the specific registries and endpoints required for the build, organizations can create a “sandbox” that contains any potential infection. This behavioral containment ensures that even if a malicious package is accidentally ingested, its ability to cause systemic damage or exfiltrate sensitive data is severely curtailed.
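Network restrictions for build agents are normally enforced outside the build process, by a firewall, egress proxy, or container network policy, because code running inside the build could bypass an in-process check. The sketch below only illustrates the decision such a proxy might make; the mirror hostname is hypothetical. Matching exact hostnames, rather than prefixes or substrings, avoids lookalike hosts such as `mirror.internal.example.com.attacker.example`.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: the internal package mirror and nothing else.
ALLOWED_HOSTS = {"mirror.internal.example.com"}


def egress_allowed(url: str, allowed_hosts: set[str]) -> bool:
    """Allow HTTPS requests only to exactly matching allowlisted hostnames."""
    parts = urlsplit(url)
    host = parts.hostname or ""  # urlsplit lowercases the hostname and drops the port
    return parts.scheme == "https" and host in allowed_hosts
```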

Why Is Monitoring Maintainer Behavior and Repository Metadata Crucial for Trust?

The human element of open source is both its greatest strength and a significant vulnerability. A sudden change in the ownership of a popular project or a surge in code commits from a previously inactive maintainer can be a warning sign of account takeover or a “protestware” event. Security teams must look beyond the code itself and monitor the health and reputation of the projects they rely on. Metrics such as the frequency of updates, the number of active contributors, and the age of the project provide essential context for assessing risk.

Modern governance tools now incorporate “reputation scoring” that flags packages exhibiting suspicious lifecycle signals. For example, a package that is less than a week old but has seen a massive spike in downloads might be part of a coordinated typosquatting campaign. By setting policy gates that require a minimum level of project maturity or a certain number of trusted maintainers, organizations can prevent “bleeding edge” but unverified code from entering their ecosystem. This shift from purely technical scanning to behavioral analysis represents the next evolution in supply chain defense.
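A reputation gate can be sketched as a function that turns project metadata into a list of warning signals. The fields mirror the metrics mentioned above, but every threshold below (seven days, 10,000 weekly downloads, two maintainers, two years without a release) is an illustrative assumption rather than an industry standard, and a real tool would pull this metadata from the registry.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PackageMetadata:
    name: str
    first_published: date
    last_release: date
    maintainers: int
    downloads_last_week: int


def risk_signals(meta: PackageMetadata, today: date) -> list[str]:
    """Return reasons to hold a package for review; an empty list means no flags.

    All thresholds are illustrative assumptions.
    """
    signals: list[str] = []
    age_days = (today - meta.first_published).days
    if age_days < 7:
        signals.append(f"published {age_days} days ago")
        if meta.downloads_last_week > 10_000:
            signals.append("download spike on a brand-new package")
    if meta.maintainers < 2:
        signals.append("fewer than two maintainers")
    if (today - meta.last_release).days > 730:
        signals.append("no release in over two years")
    return signals
```

One reasonable design is to hold any package with a non-empty signal list for human review rather than reject it outright, which keeps false positives from permanently blocking legitimate new projects.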

Summary of Strategic Controls

The complexity of the open source landscape necessitates a multi-layered approach that addresses the entire lifecycle of a dependency. Central to this strategy is the move toward “shifting left,” where security checks are integrated into the developer’s local environment and the initial intake phase of the pipeline. By enforcing strict version pinning and cryptographic verification, organizations can ensure that the code they tested yesterday is exactly the same code they deploy tomorrow. This eliminates the uncertainty created by dynamic versioning and protects against the silent replacement of legitimate libraries with tampered versions.
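In a Python project, strict pinning with cryptographic verification usually means a requirements file in which every entry uses `==` and carries a `--hash` option, which pip enforces when run with `--require-hashes`. The linter below is a simplified, hypothetical check for that convention; it does not implement pip's full requirement grammar, so entries with environment markers or direct URLs may be misreported.

```python
import re

# name, optional [extras], "==", exact version with no wildcards or ranges
PIN_PATTERN = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[A-Za-z0-9._,-]+\])?==[^\s=<>!~*,;]+$")
HASH_PATTERN = re.compile(r"--hash=sha256:[0-9a-f]{64}")


def unpinned_requirements(text: str) -> list[str]:
    """Report requirement lines that are not exact pins with a sha256 hash."""
    joined = text.replace("\\\n", " ")  # merge backslash-continued lines
    problems: list[str] = []
    for raw in joined.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", "-")):
            continue  # skip blanks, comments, and option lines such as --index-url
        spec = line.split()[0]
        if not PIN_PATTERN.match(spec):
            problems.append(f"{spec}: not pinned to an exact version")
        elif not HASH_PATTERN.search(line):
            problems.append(f"{spec}: missing sha256 hash")
    return problems
```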

Visibility remains the cornerstone of effective governance. Maintaining a queryable, real-time inventory through SBOMs and automated dependency mapping allows for a rapid response to emerging threats. However, visibility must be paired with enforcement; a system that merely reports vulnerabilities without the power to block a compromised build is insufficient for modern requirements. The most resilient organizations are those that combine automated scanning with hardened, isolated build environments and behavioral monitoring to catch the anomalies that traditional tools frequently miss.

Future Considerations and Actionable Steps

As organizations look toward the future, the focus must shift from merely discovering vulnerabilities to building an architecture resilient enough to survive the compromise of any single component. “Software transparency” is likely to become an industry standard, with vendors expected to provide detailed provenance data for every piece of code they deliver. DevOps teams should begin by auditing their current dependency intake paths, moving away from direct public registry access in favor of managed internal mirrors. This single change provides the control point needed to implement every subsequent security measure.

Moving forward, the integration of artificial intelligence into security tooling will likely provide the ability to detect malicious code patterns that are currently invisible to human reviewers. However, the most effective immediate action a team can take is the implementation of “hard gates” in the CI/CD pipeline that prevent any unvetted or high-risk component from moving toward production. By treating every external library as a piece of untrusted code, organizations can foster a culture of rigorous verification. This disciplined approach ensures that the undeniable benefits of the open source ecosystem are not outweighed by the risks inherent in its collaborative and distributed nature.