Artificial Intelligence (AI) stands at the forefront of technological innovation, propelling advancements in machine learning, data analytics, and real-time decision-making. Despite its revolutionary potential, maintaining AI data centers introduces significant challenges primarily due to their high power consumption. This exploration delves into the fundamental factors that drive the energy demands of AI data centers and examines the innovative solutions being pursued to alleviate these challenges.

AI workloads are inherently demanding, particularly those involving deep learning and generative AI models. Training large models such as GPT-4, whose parameter counts are reported to reach into the trillions, runs on large clusters of high-performance GPUs and TPUs and consumes substantial power. Sustaining these intensive computational tasks is a primary driver of the energy demands of AI data centers. Furthermore, models must be retrained and fine-tuned on an ongoing basis; even when these runs are optimized for efficiency, maintaining and running sophisticated models at scale carries considerable energy costs.

The specialized hardware deployed in AI data centers, including GPUs, TPUs, and FPGAs, is designed for massively parallel processing. Its high core density and reliance on high-speed memory such as HBM (high-bandwidth memory) result in significant energy usage. The hardware’s capacity for performing complex AI tasks efficiently underscores the challenge of managing power demands without compromising performance. Moreover, the continual inference and training cycles required by AI workloads further amplify power requirements. Because these cycles depend on constant high-bandwidth memory access, they raise energy consumption further, illustrating the pressing need for power-efficient hardware in AI operations.

The Power-Hungry Nature of AI Computations

Understanding the power-hungry nature of AI computations requires a closer look at how AI workloads operate. Deep learning and generative models are among the most computationally intensive workloads in use today: training a complex model like GPT-4, whose parameter count is reported to reach into the trillions, demands immense computational power. The high-performance GPUs and TPUs essential for handling these massive computations contribute significantly to the energy consumption of AI data centers. These processors can execute trillions of operations per second and draw correspondingly large amounts of power, making them a major factor in AI data center energy consumption.

In addition to the initial training stages, AI computations involve sustained power consumption due to the continuous retraining and fine-tuning required to maintain model accuracy and relevance. These recurring cycles are optimized for performance but remain exceedingly energy-intensive, which illustrates the demanding nature of AI operations at scale. The sustained energy input these processes require is a fundamental aspect of the power consumption challenges faced by AI data centers.
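To make the relationship between initial training and recurring fine-tuning concrete, the following is a minimal back-of-the-envelope sketch in Python. It assumes energy is simply accelerator count times average power draw times run time; the function name `run_energy_kwh` and every number in it are hypothetical placeholders for illustration, not measurements of any real model or data center.

```python
def run_energy_kwh(num_accelerators, avg_power_watts, hours):
    """Rough IT energy of one run in kWh: devices x average watts x hours.

    Ignores cooling, networking, and storage overhead (a simplification).
    """
    if num_accelerators < 0 or avg_power_watts < 0 or hours < 0:
        raise ValueError("inputs must be non-negative")
    return num_accelerators * avg_power_watts * hours / 1000.0


# Hypothetical initial training run: 1,000 accelerators at 500 W for 30 days.
initial_kwh = run_energy_kwh(1000, 500, 24 * 30)

# Hypothetical fine-tuning run: 100 accelerators at 500 W for one day.
fine_tune_kwh = run_energy_kwh(100, 500, 24)

# Twelve fine-tuning runs per year on top of the initial training.
fine_tunes_per_year = 12
total_kwh = initial_kwh + fine_tunes_per_year * fine_tune_kwh

print(f"initial training:  {initial_kwh:,.0f} kWh")
print(f"one fine-tune run: {fine_tune_kwh:,.0f} kWh")
print(f"first-year total:  {total_kwh:,.0f} kWh")
```

Even in this simplified model, the recurring runs add to the total every year the model stays in service, which is why retraining and fine-tuning matter alongside the one-time training cost.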

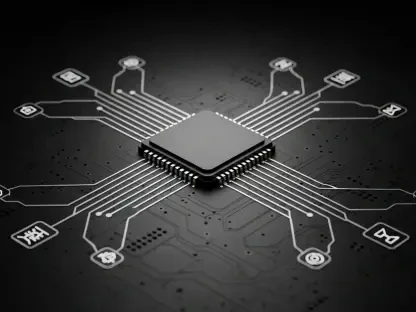

Energy-Intensive Hardware Requirements

The hardware implemented in AI data centers is a major contributor to their energy consumption issues. GPUs, TPUs, and FPGAs, renowned for their parallel processing capabilities, are specifically designed to manage the intricate tasks associated with AI efficiently. This efficiency is, however, paired with significant energy requirements. The ambitious engineering of these components, characterized by increased core density and the necessity for high-speed memory like HBM, results in substantial power usage. These hardware elements, while indispensable for AI functionality, present a formidable challenge in curbing energy demands without undermining the desired performance.

The constant cycles of inference and training mandated by AI workloads further exacerbate power consumption. These processes demand continuous high-bandwidth memory access, which propels energy consumption upward. Addressing this facet of AI data center operations emphasizes the significance of developing and deploying power-efficient AI hardware solutions. The balance between maintaining high-performance capabilities and managing energy consumption continues to be a pivotal focus within the realm of AI data center technology.

Advanced Cooling Systems and Their Impact

AI data centers generate substantial heat due to the intensity of their operations, necessitating advanced cooling strategies to sustain performance and prevent hardware failures. Traditional air cooling often cannot remove the heat that dense AI systems produce, prompting the adoption of advanced cooling technologies such as liquid cooling and direct-to-chip techniques. These methods are effective at controlling temperature, but the cooling infrastructure itself accounts for a significant portion of the total energy consumption of AI data centers.

The integration of cooling technologies requires meticulous optimization of HVAC systems within data centers. Efficient heat management is paramount, as the energy used in cooling AI hardware forms a considerable part of overall power demands. Employing liquid cooling systems, including immersion cooling where servers are submerged in cooling fluids, highlights the advancements aimed at tackling the heat generated by AI operations. These cooling processes are essential for maintaining the functional integrity of AI data centers, underscoring the enduring need for innovation in this aspect of energy management.
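One common way to quantify how much cooling and other overhead add to a facility's power demands is Power Usage Effectiveness (PUE), the ratio of total facility energy to the energy used by IT equipment alone. The article does not name this metric, so the sketch below is an illustrative addition: the function names and all figures are hypothetical, chosen only to show the arithmetic.

```python
def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy / IT equipment energy.

    A value of 1.0 would mean no overhead at all; real facilities are above 1.0.
    """
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def cooling_share(cooling_kwh, total_facility_kwh):
    """Fraction of total facility energy spent on cooling."""
    if total_facility_kwh <= 0:
        raise ValueError("total facility energy must be positive")
    return cooling_kwh / total_facility_kwh


# Hypothetical monthly figures for one facility (illustrative only).
it_kwh = 1000.0       # servers, accelerators, storage, network
cooling_kwh = 300.0   # chillers, pumps, fans
other_kwh = 100.0     # lighting, power conversion losses, etc.
total_kwh = it_kwh + cooling_kwh + other_kwh

print(f"PUE: {pue(total_kwh, it_kwh):.2f}")
print(f"cooling share of total: {cooling_share(cooling_kwh, total_kwh):.1%}")
```

Under these assumed numbers, a more efficient cooling design shows up directly as a lower `cooling_kwh`, and therefore a PUE closer to 1.0.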

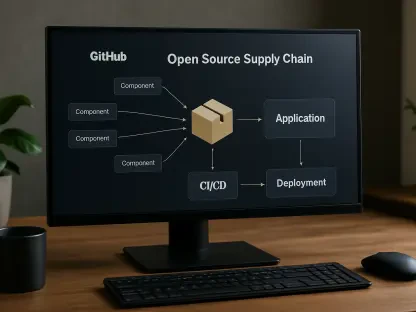

The Role of Data Movement and Storage

Another critical factor contributing to the high energy consumption of AI data centers is the role of continuous data movement and extensive storage capacities. AI applications necessitate the transfer of vast amounts of data from storage units to processing units, a process inherently demanding significant power. The constant movement and access to petabytes of data further exacerbate the already high energy demands, highlighting the intricate relationship between data-centric operations and power consumption challenges within AI environments.

The relentless requirement for extensive data storage and movement is a cornerstone of AI functionality, and addressing the energy impact of these processes remains a central concern. Efficiently managing data movement involves balancing operational needs with power usage, necessitating innovative solutions capable of optimizing these critical activities. This aspect of AI data center operations underscores the broader energy challenges faced by the industry and the importance of achieving sustainable practices in data management.
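The cost of data movement can be approximated, very roughly, as the volume of data moved multiplied by an energy cost per bit for the path it travels. The sketch below assumes that simple linear model; the function name, the per-bit energy figure, and the dataset size are all hypothetical placeholders, and real values vary widely with the memory, interconnect, and storage technology involved.

```python
JOULES_PER_KWH = 3.6e6  # 1 kWh = 3.6 million joules


def transfer_energy_kwh(bytes_moved, picojoules_per_bit):
    """Energy in kWh to move `bytes_moved` bytes at a given cost per bit.

    Linear model: ignores idle power, protocol overhead, and retries.
    """
    if bytes_moved < 0 or picojoules_per_bit < 0:
        raise ValueError("inputs must be non-negative")
    joules = bytes_moved * 8 * picojoules_per_bit * 1e-12
    return joules / JOULES_PER_KWH


# Hypothetical workload: a 1 PB dataset read from storage once per epoch.
dataset_bytes = 10**15
assumed_pj_per_bit = 10.0  # placeholder value, not a measured figure
epochs = 100

per_pass = transfer_energy_kwh(dataset_bytes, assumed_pj_per_bit)
print(f"one pass over the dataset: {per_pass:.4f} kWh")
print(f"{epochs} passes:            {per_pass * epochs:.4f} kWh")
```

The point of the model is the scaling rather than the absolute figures: energy grows in proportion to both the amount of data and the number of times it is moved, so reducing repeated transfers (for example through caching or placing data closer to the processors) reduces energy in direct proportion.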

Innovative Solutions and Future Prospects

Addressing the high power consumption challenges of AI data centers requires a multipronged approach, incorporating both immediate and long-term solutions. Innovations in hardware design, optimization of computational processes, and advancements in cooling technologies are crucial. Furthermore, leveraging renewable energy sources and implementing more efficient data movement and storage strategies will play a significant role in creating sustainable and energy-efficient AI data centers. The future prospects for managing AI’s power consumption challenges are promising, with continuous research and development paving the way for breakthrough solutions that balance performance with sustainability.