Software that senses intent, decides in milliseconds, and acts without human delay is shifting enterprise competition from who can ship features to who can orchestrate outcomes. The quiet revolution underneath is not a single model or clever widget but the integration fabric that turns raw data into decisions embedded in every transaction, screen, and workflow.

The Context: From Features to Decision Systems

Enterprises spent years bolting recommendation engines and chat interfaces onto legacy stacks, yet the center of gravity in software barely moved. Most business systems still run on fixed logic: gather inputs, apply rules, return outputs. That structure falters when user intent evolves second by second and operations span countless micro-events across channels. If 88 percent of companies report using AI in at least one function, but only about a quarter scale it across the organization, the message is blunt: isolated features do not add up to adaptive systems.

This review examines enterprise AI integration as a technology category, not as a collection of tools. The focus is the end-to-end pipeline that connects streaming data, feature engineering, model inference, and automated actions into a closed loop. Put simply, it evaluates how the stack senses, decides, and acts. The promise is twofold: real-time personalization that tunes experiences per session and predictive operations that compress the window between signal and response. The test is whether the architecture handles messy data, variable load, and constant change without crumbling.

What It Is: The Operating Layer for AI-Native Applications

Enterprise AI integration is best understood as an operating layer that standardizes the flow from data to models to decisions to actions. Rather than scattering models around the edge of apps, it centralizes the logic and pipes outcomes back into core workflows. The approach treats models as replaceable components and treats data contracts, feature consistency, and decision routing as first-class assets. It matters because the old pattern—write rules, schedule jobs, push dashboards—cannot handle environments where value is created within the user session or the transaction itself.

What makes this approach distinctive is that it demotes the algorithm to one piece of a managed supply chain. Feature definitions live in governed stores, inference runs behind latency budgets, and actions fire through APIs that sit inside business systems. The system’s strength is not a single breakthrough model but the disciplined repeatability of shipping predictions that stay accurate, traceable, and fast at scale. Competitors that focus on individual models or function-level widgets rarely reach this level of consistency across use cases.

How It Works: Data Contracts, Feature Stores, and Decision Loops

Under the hood, the integration layer starts with data ingestion across batch and stream. Batch brings depth for training and analytics; streams supply recency for event-driven decisions. Lake, warehouse, or lakehouse choices shape how teams balance flexibility, governance, and cost. What matters more than the label is a shared schema that survives change: when source fields evolve, the downstream features must not silently break. That is where data contracts and lineage tools create guardrails, ensuring each transformation is auditable and each feature has a clear provenance.
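
A data contract can be as small as a typed field list checked at ingestion. The sketch below shows one hypothetical shape for such a check; `FieldSpec`, `CLICK_EVENT_CONTRACT`, its field names, and `contract_violations` are illustrative assumptions rather than the interface of any particular contract or lineage tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    name: str
    dtype: type
    required: bool = True


# Hypothetical contract for a click event; the field names are illustrative.
CLICK_EVENT_CONTRACT = (
    FieldSpec("user_id", str),
    FieldSpec("item_id", str),
    FieldSpec("ts_ms", int),
    FieldSpec("referrer", str, required=False),
)


def contract_violations(record: dict, contract=CLICK_EVENT_CONTRACT) -> list[str]:
    """Return readable violations; an empty list means the record conforms."""
    problems = []
    for spec in contract:
        if spec.name not in record:
            if spec.required:
                problems.append(f"missing required field: {spec.name}")
            continue
        value = record[spec.name]
        if not isinstance(value, spec.dtype):
            problems.append(
                f"{spec.name}: expected {spec.dtype.__name__}, "
                f"got {type(value).__name__}"
            )
    # Fields the contract does not know about usually signal an upstream rename.
    known = {spec.name for spec in contract}
    for extra in sorted(set(record) - known):
        problems.append(f"unexpected field: {extra}")
    return problems
```

Reporting unknown fields as violations is a deliberate choice in this sketch: a renamed source column then surfaces as a pair of errors (one missing, one unexpected) instead of a silently empty feature.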

Feature stores sit at the center. They encode inputs like lifetime value, session velocity, or device risk as named assets that training and serving share. The payoff is twofold: training and inference read the same definitions, which reduces training/serving skew, and teams stop rebuilding features in parallel silos. The inference path then retrieves features from low-latency stores, calls model endpoints, and feeds a decision layer that ranks items or selects actions based on business objectives. By the time the result hits the API that renders a page or triggers an operations update, the loop has moved from raw event to system response in tens to low hundreds of milliseconds.
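
The core idea of shared definitions can be shown without a real feature store. In the sketch below, a plain dictionary stands in for the store, and both the training path and the serving path call the same registered function. The registry, the decorator, the two feature formulas, and the context keys are all assumptions made for illustration.

```python
from typing import Callable

# A dictionary stands in for a feature store: one name, one definition.
FEATURES: dict[str, Callable[[dict], float]] = {}


def feature(name: str):
    """Register a function as the single definition of a named feature."""
    def register(fn):
        FEATURES[name] = fn
        return fn
    return register


@feature("session_velocity")
def session_velocity(ctx: dict) -> float:
    # Events per minute in the current session (illustrative definition).
    minutes = max(ctx["session_seconds"] / 60.0, 1.0 / 60.0)
    return ctx["event_count"] / minutes


@feature("avg_order_value")
def avg_order_value(ctx: dict) -> float:
    orders = ctx["order_totals"]
    return sum(orders) / len(orders) if orders else 0.0


def build_vector(ctx: dict, names: list[str]) -> list[float]:
    return [FEATURES[n](ctx) for n in names]


def training_rows(history: list[dict], names: list[str]) -> list[list[float]]:
    # Offline path: replay historical contexts through the shared definitions.
    return [build_vector(ctx, names) for ctx in history]


def serving_vector(ctx: dict, names: list[str]) -> list[float]:
    # Online path: same definitions, so the same context yields the same vector.
    return build_vector(ctx, names)
```

Because both paths go through `build_vector`, a change to a feature formula reaches training and serving together, which is the property that limits training/serving skew.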

Why It Matters: Limits of Fixed Logic and Batch-First Architectures

As customer expectations converged on personalization as a baseline, rule-based experiences started to feel brittle. When ranking stays static for hours, intent drifts and conversion drops. In operations, analytics that arrive after the fact help explain misses but cannot prevent them. The advantage of integrated AI is not novelty; it is timing. Detecting a purchase signal within a session and changing the sequence of content or pricing can alter the outcome. Spotting fraud while the transaction is still open can avert losses outright.

The shift is also economic. Maintenance of sprawling rules grows expensive and fragile over time, and dashboards that require manual action introduce latency costs hidden as churn, stockouts, or chargebacks. Integrated AI compresses these gaps by turning recurring decisions into software. That reframes the ROI conversation from model accuracy to throughput of correct decisions per unit time, plus the reliability of those decisions under load and drift.

Real-Time Personalization: Architecture and Performance

Session-level personalization depends on a tight pipeline: event capture, stream processing, state storage, model inference, decision ranking, and delivery via APIs. Each hop has a latency budget, often totaling 50–150 ms end to end. Stream processors compute windowed features such as “items viewed in the last 60 seconds,” while state stores cache per-user context. Models score candidates and a decision layer applies business rules—inventory thresholds, margin targets, compliance constraints—to produce the final ranking. Reliability patterns like idempotent event handling and circuit breakers keep the system steady when traffic surges.
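
A windowed feature such as "items viewed in the last 60 seconds" reduces to a per-user queue of timestamps with eviction. The following is a minimal in-memory sketch, not a stream-processor API; it assumes timestamps arrive in order for each user, and the class and method names are hypothetical.

```python
from collections import deque


class SlidingWindowCounter:
    """Count events per user inside a trailing time window."""

    def __init__(self, window_seconds: float = 60.0):
        self.window_seconds = window_seconds
        self._timestamps: dict[str, deque] = {}

    def record(self, user_id: str, ts: float) -> None:
        # Assumes events for a given user arrive in timestamp order.
        self._timestamps.setdefault(user_id, deque()).append(ts)

    def count(self, user_id: str, now: float) -> int:
        q = self._timestamps.get(user_id)
        if q is None:
            return 0
        cutoff = now - self.window_seconds
        # Evict everything at or before the cutoff; the window is (cutoff, now].
        while q and q[0] <= cutoff:
            q.popleft()
        return len(q)
```

A production stream job would also need to handle out-of-order events and to persist state across restarts, both of which this sketch leaves out.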

Performance is not just speed; it is the stability of speed. Spikes during campaigns or shopping holidays create backpressure on brokers and stream jobs. Architectures that partition topics and scale consumers horizontally tend to maintain predictable latency without dropping events. When this is done right, the measurable outcomes show up in engagement curves: higher click-through in early session steps, better add-to-cart rates, and tighter conversion funnels. Where teams miss is usually not model quality but stale signals or inconsistent features between offline training and online serving.
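
Horizontal scaling of consumers usually rests on a stable mapping from event key to partition, so that all events for one user land on the same consumer and keep their order. The sketch below illustrates that mapping with a hash from the standard library; the function name is an assumption, and real brokers ship their own partitioners.

```python
import hashlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a hash that is stable across processes."""
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    # Python's built-in hash() is salted per process for strings, so a
    # cryptographic digest is used here purely for stability.
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions
```

Keying by user keeps per-session state local to one consumer, at the price of hot partitions when a few keys dominate traffic.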

Embedded Predictive Analytics: From Dashboards to Actions

The other half of the equation moves analytics into operational systems. Training pipelines produce models for demand, churn, risk, or maintenance. Inference APIs run inside decision points—point of sale, transaction risk, replenishment plans—so actions occur automatically. A churn probability triggers a targeted retention offer before the user leaves; a risk score changes the authorization flow during checkout; a forecast adjusts safety stock before a shipping wave. The impact lies in orchestration: predictions surface where they matter, without human routing.

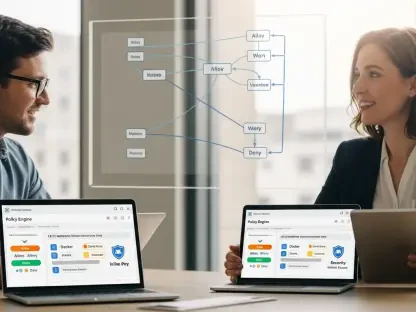

A key differentiator here is commitment to prescriptive logic. Predictive outputs by themselves are diagnostic; business value compounds when the system chooses a next-best action and executes it under constraints. That requires policy layers that encode thresholds, trade-offs, and regulatory rules. It also requires feedback loops to learn from outcomes. Programs that stop at prediction often celebrate charts while core metrics stagnate. Programs that connect prediction to gated actions tend to show inventory turns improving, fraud losses dropping, and retention climbing in observable increments.
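
The step from prediction to prescription can be sketched as a small policy function that gates on a threshold, filters by constraints, and then picks an action. Everything named below is hypothetical: the `Action` fields, the threshold and budget defaults, and the rule of preferring the highest expected uplift.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Action:
    name: str
    expected_uplift: float   # assumed model estimate of retention gain
    cost: float              # cost of the offer, in currency units
    requires_consent: bool = False


def next_best_action(
    churn_prob: float,
    actions: list[Action],
    *,
    min_churn_prob: float = 0.3,
    budget: float = 10.0,
    has_consent: bool = False,
) -> Optional[Action]:
    """Pick the best eligible action, or None when no action should fire."""
    # Threshold gate: do nothing for users who are unlikely to churn.
    if churn_prob < min_churn_prob:
        return None
    # Constraint filter: budget cap and a consent rule.
    eligible = [
        a for a in actions
        if a.cost <= budget and (has_consent or not a.requires_consent)
    ]
    # Highest uplift wins; a lower cost breaks ties.
    return max(eligible, key=lambda a: (a.expected_uplift, -a.cost), default=None)
```

Keeping thresholds and constraints outside the model means a policy change, such as a new consent rule, does not require retraining.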

The Infrastructure Question: Why Architecture Decides Success

Many AI programs stall at rollout because the target stack was never built for real-time inference and constant retraining. Once traffic gets real, data volumes swell, request rates spike, and models age faster than expected. The winning pattern splits responsibilities across five layers: data, AI/ML, orchestration, application, and experience. Data manages ingestion and governance; AI/ML handles training and serving; orchestration schedules jobs and pipelines; application exposes microservices behind gateways; experience brings the results to users in ways that do not block interactions.

Deployment choices follow control and latency needs. Cloud-native stacks offer elasticity and speed of iteration, while hybrid setups keep sensitive data in controlled zones without sacrificing burst capacity. Edge deployments move inference to where data originates, reducing jitter and enabling cases like visual inspection or IoT monitoring. None of these choices is universally best; what distinguishes mature programs is consistency of SLOs across them, plus the ability to route workloads based on cost, latency, and legal constraints without rewriting the entire app.
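
Routing by cost, latency, and legal constraints can be expressed as a filter followed by a minimum. The sketch below assumes each deployment target advertises a p99 latency, a unit cost, and the regions where it processes data; the `Target` type and the example numbers used with it are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Target:
    name: str
    p99_latency_ms: float
    cost_per_1k_calls: float
    regions: frozenset  # regions where this target processes data


def route(
    targets: list[Target],
    *,
    max_latency_ms: float,
    allowed_regions: frozenset,
) -> Optional[Target]:
    """Return the cheapest target that meets the latency and residency rules."""
    eligible = [
        t for t in targets
        if t.p99_latency_ms <= max_latency_ms
        # Every region the target uses must be permitted for this workload.
        and t.regions <= allowed_regions
    ]
    return min(eligible, key=lambda t: t.cost_per_1k_calls, default=None)
```

Because the routing rule lives outside the application code, the same workload can move between cloud, hybrid, and edge targets by changing inputs rather than rewriting the app.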

MLOps: Keeping Models Accurate, Traceable, and Live

MLOps is the reliability backbone of integrated AI. Versioning maintains lineage of data, code, and models. CI/CD tests models for performance and safety, automates canaries, and enables rollback without firefighting. Monitoring tracks live accuracy, data drift, and infrastructure health. Retraining pipelines pull in fresh labels, sweep hyperparameters, and redeploy validated candidates. Together, these practices prevent the slow decay that undermines trust after a celebrated launch.
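
Data drift monitoring needs a concrete statistic. The population stability index (PSI) is one common choice and is used here only as an example, since the text does not prescribe a metric. The bucket edges, the `eps` floor, and the function name are assumptions of this sketch.

```python
import math


def psi(expected: list[float], actual: list[float],
        edges: list[float], eps: float = 1e-6) -> float:
    """Population stability index between a baseline and a live sample.

    `edges` are sorted bucket boundaries; values below the first edge fall in
    bucket 0, values at or above the last edge fall in the final bucket.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")

    def shares(values: list[float]) -> list[float]:
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v >= e for e in edges)] += 1
        # Floor each share at eps so empty buckets do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A frequently quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift worth an alert. That threshold is a convention to be tuned per feature, not a guarantee.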

The cultural shift may be the harder part. Data scientists accustomed to notebooks must align with software engineering rhythms. Product teams must treat model changes as feature releases with user impact. Compliance teams must get audit trails that explain why a decision happened, who accessed which data, and what version of the model was in use at that time. Where these norms are institutionalized, the half-life of model value extends and incidents become manageable exceptions rather than chronic pain.

Performance Benchmarks: What Good Looks Like

Strong programs establish clear SLOs around freshness, latency, and stability. Freshness defines how recently features reflect user behavior or system state. Latency targets govern the end-to-end time from event to delivered decision. Stability sets error budgets for dropped events, failed inferences, and degraded ranking. In retail personalization, achieving sub-150 ms inference with feature freshness under five seconds can move funnel metrics by double-digit percentages. In fraud prevention, cutting model response to tens of milliseconds reduces false declines without opening the door to fraud rings that exploit timing gaps.
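
Latency and stability SLOs come down to two pieces of arithmetic: a tail percentile compared against a budget, and a count of failures compared against an allowance. The sketch below uses a nearest-rank percentile and expresses the allowance in failures per million requests to stay in integer math. The function names and default values are assumptions, with the 150 ms default taken from the retail figure above.

```python
import math


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    if not values:
        raise ValueError("values must be non-empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_slo_met(latencies_ms: list[float],
                    budget_ms: float = 150, pct: int = 99) -> bool:
    # The SLO holds when the chosen tail percentile fits inside the budget.
    return percentile(latencies_ms, pct) <= budget_ms


def error_budget_remaining(total_requests: int, failed_requests: int,
                           allowed_failures_per_million: int) -> int:
    """Failures still permitted in this window; negative means the budget is spent."""
    allowed = total_requests * allowed_failures_per_million // 1_000_000
    return allowed - failed_requests
```

Tracking the tail rather than the mean matters here because a small share of slow requests can break a session experience while leaving the average untouched.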

Numbers like “88 percent adopted AI” or “only a quarter scaled successfully” are not decorative; they underscore the chasm between pilots and production. The adoption figure signals that most enterprises have models somewhere, which raises baseline customer expectations. The low scaling figure signals that integration, not experimentation, determines business impact. For teams weighing investment, the interpretation is simple: spend less on novel models and more on the connective tissue that keeps okay models useful, fast, and governed under real load.

Differentiation: Why This Integration Approach Beats Alternatives

Several alternatives compete for mindshare. One is the feature-first path: add point models to existing apps. It is easy to start but tends to create brittle dependencies and duplicated logic, especially when features diverge between training and serving. Another is the monolithic suite: buy an all-in-one platform that promises magic. Suites often centralize control but struggle with domain nuance and can lock teams into rigid patterns that age poorly. A third is the LLM-only mindset: swap structured ML for a general-purpose model with retrieval and prompts. LLMs shine at unstructured tasks but stumble on deterministic, high-volume, low-latency decisions without careful guardrails.

An integration-first approach differs by treating models as replaceable parts behind durable data contracts and decision APIs. It combines structured ML for ranking, scoring, and forecasting with LLM-driven retrieval and summarization where unstructured input dominates. It also prioritizes event-driven design, which keeps personalization loops live and predictive actions timely. This balance gives enterprises control over cost, latency, and explainability while still benefiting from the rapid evolution of model ecosystems.

Market Moves: Real-Time Decisioning Becomes Default

The market has shifted decisively toward real-time decisioning in critical paths. E-commerce and media treat session-level ranking as table stakes; banking scores risk while the transaction is still in flight; logistics tunes capacity allocation while orders pour in. LLMs augment this landscape by enriching context: retrieval-augmented search sifts documents, summarization condenses signals, and natural-language interfaces expose decision tools to operators without drowning them in UI complexity. Agentic patterns, once speculative, now automate back-office steps and even controlled front-office tasks with careful supervision.

Convergence amplifies these shifts. AI plus IoT produces predictive control in physical environments, moving from monitoring to actuation. AI plus edge reduces latency where network conditions are poor or intermittent. AI embedded in SaaS systems brings intelligent defaults to horizontal tools, though the deepest value still accrues where domain data and action pathways are unique. The result is a bifurcated market: generic intelligence commodifies fast, while integration that captures proprietary signals and routes high-stakes actions sustains durable advantage.

Where Results Show Up: Revenue, Cost, and Risk

Measurable impact concentrates in three buckets. On revenue, dynamic pricing, next-best-action, and adaptive merchandising translate richer context into higher conversion and basket size. On cost, process automation, demand and supply planning, and maintenance optimization remove latency and variance from routine decisions, freeing capital and labor. On risk, near-real-time fraud and anomaly detection, credit scoring, and policy compliance prevent losses and regulatory exposure before they compound. The common thread is not the domain but the loop: data becomes features, features feed models, models drive actions.

Sector specifics refine the picture. Retail depends on short windows of attention and volatile inventory; integration must balance margin protection with relevance. BFSI places a premium on explainability and audit; models that score by the millisecond still need crisp decision logs. Healthcare requires careful governance of data access and clinical safety; decision support augments rather than replaces clinicians. Logistics and media juggle fast-moving capacity and content; real-time feedback loops change outcomes within a session or a shift. Program maturity tracks how consistently these sectors maintain loops under stress.

The Catch: Integration Is Harder Than Modeling

Strategy failure often starts with picking use cases by trend rather than target metrics. Teams launch experiments with vague goals, then struggle to fund scale-up. Data readiness is the next sinkhole: fragmented sources, unstable APIs, and late quality checks produce brittle features. Real-time constraints expose batch-first assumptions in pipelines, making experiences feel stale. Lifecycle neglect turns into performance drift that no one catches until KPIs slide. Over-reliance on generic models misses domain nuance. Weak compliance and explainability block deployment in regulated flows.

The mitigations are precise: prioritize by ROI and implementation complexity; enforce shared schemas and data contracts; adopt event-driven design where timeliness matters; instrument observability by default from data pipelines through inference services; require decision logs with versioned context for audit; and budget for MLOps as a core platform, not a side project. None of this is glamorous, but it separates programs that glide into production from those that stay in demo mode indefinitely.

Security, Compliance, and Explainability: Guardrails for Trust

Trust does not emerge from accuracy alone. Sensitive domains require proof of control. Lineage systems track how fields were derived; access control restricts who can see which data and when; encryption protects data at rest and in transit; tokenization limits exposure in lower-trust zones. For models, governance frameworks log input features, prediction outputs, thresholds applied, and the exact versions of data and code in play. When an auditor asks why a credit limit was reduced, the system must reconstruct the decision path without guesswork.
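
A decision log that can answer the auditor's question needs the inputs, the score, the threshold, and the versions in one record. The sketch below is one possible shape: the `DecisionRecord` fields and version strings are hypothetical, and the SHA-256 checksum only detects accidental corruption or naive edits, since anyone able to rewrite the log could also recompute the hash.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    model_version: str
    feature_set_version: str
    features: dict
    score: float
    threshold: float
    outcome: str


def to_log_line(record: DecisionRecord) -> str:
    """Serialize the record with a checksum over a canonical JSON payload."""
    payload = json.dumps(asdict(record), sort_keys=True)
    digest = hashlib.sha256(payload.encode("utf-8")).hexdigest()
    return json.dumps({"payload": payload, "sha256": digest})


def verify_log_line(line: str) -> bool:
    entry = json.loads(line)
    expected = hashlib.sha256(entry["payload"].encode("utf-8")).hexdigest()
    return expected == entry["sha256"]


def explain(line: str) -> str:
    """Reconstruct a one-line rationale from the stored record alone."""
    rec = json.loads(json.loads(line)["payload"])
    relation = ">=" if rec["score"] >= rec["threshold"] else "<"
    return (
        f"{rec['decision_id']}: {rec['outcome']} because score {rec['score']} "
        f"{relation} threshold {rec['threshold']} "
        f"(model {rec['model_version']}, features {rec['feature_set_version']})"
    )
```

The useful property is that `explain` reads nothing but the log line, so the rationale stays reproducible even after the live model and feature definitions have moved on.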

Explainability tools close the understanding gap between complex models and human oversight. Feature attribution explains which signals influenced a score; counterfactual analysis shows what would have changed the outcome. Combined with challenge processes and human-in-the-loop escalation, these capabilities turn black-box perceptions into accountable practice. They also reduce internal friction: legal, risk, and compliance teams push programs forward rather than blocking them at the eleventh hour.
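
Both ideas are easiest to see on a linear score, where they have closed forms. The sketch below assumes a score of the form bias plus a weighted sum of features, which is a simplification chosen for clarity; non-linear models need model-agnostic attribution methods instead. All names and numbers used with it are illustrative.

```python
from typing import Optional


def linear_score(weights: dict, bias: float, x: dict) -> float:
    return bias + sum(weights[k] * x[k] for k in weights)


def attributions(weights: dict, x: dict, baseline: dict) -> dict:
    """Per-feature contribution to the score relative to a baseline input."""
    return {k: weights[k] * (x[k] - baseline[k]) for k in weights}


def single_feature_counterfactual(
    weights: dict, bias: float, x: dict, feature: str, threshold: float
) -> Optional[float]:
    """Value of `feature` at which the score equals the threshold.

    All other features are held fixed. Returns None when the feature has
    zero weight and therefore cannot move the score.
    """
    w = weights[feature]
    if w == 0:
        return None
    rest = bias + sum(weights[k] * x[k] for k in weights if k != feature)
    return (threshold - rest) / w
```

For a declined applicant, the counterfactual reads as a plain statement: holding everything else fixed, the decision would flip once this one input crosses the returned value.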

Data Quality and Feature Consistency: The Hidden Performance Lever

Many teams chase model architectures while ignoring the unglamorous work of data quality. In reality, freshness, completeness, and schema consistency amplify or blunt every other investment. Unified identifiers align user, device, and account views across systems. Deduplication and normalization prevent artificial inflation or suppression of signals. Label integrity—especially in fraud, churn, or defects—determines upper bounds on performance; noisy labels hide true relationships and mislead tuning.

Feature stores enforce consistency across teams and time. A feature such as “90-day purchase frequency” must mean the same thing in training and in production, next quarter and next year. When definitions drift silently, models degrade and A/B results wobble without explanation. Organizations that treat features as versioned products, with owners and SLAs, report fewer regressions, faster onboarding of new use cases, and a measurable drop in firefighting.

LLMs in the Stack: Where They Fit and Where They Do Not

Large language models have transformed unstructured workflows: document search, summarization, code suggestions, and conversational interfaces. In enterprise AI integration, their edge is retrieval-augmented generation that grounds outputs in authoritative records. This boosts discovery, support, and knowledge management while reducing the hallucination risk of freeform, ungrounded answers. LLMs also act as orchestration glue, translating natural-language intents into structured actions across services.

However, LLMs are not drop-in replacements for ranking, scoring, or fast risk decisions. Token-by-token generation is expensive, and its latency is too variable for sub-50 ms targets. Deterministic constraints around compliance or margin can be hard to enforce through prompts alone. The pragmatic pattern blends LLMs for understanding and context with structured ML for crisp, low-latency decisions, all within the same integration fabric. That hybrid keeps cost, speed, and governance in balance.

Reliability Patterns: Designing for Failure, Not From It

Resilient systems assume components will fail. Idempotent event consumers handle duplicates without corrupting state. Dead-letter queues capture poison messages for inspection rather than crashing pipelines. Circuit breakers and rate limiters protect inference services from thundering herds. Shadow deployments mirror traffic to new models to measure impact before release. Blue-green or canary rollouts cut risk during upgrades. These are table stakes for critical decision paths, not nice-to-haves.
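
Idempotent consumption and dead-lettering fit together in a few lines. In the sketch below, an in-memory set stands in for the durable deduplication store a real deployment would need. The class name, the `id` field on events, and the retry count are assumptions, and the handler is expected to be safe to retry.

```python
from typing import Callable


class IdempotentConsumer:
    """Process each event id at most once; park repeated failures in a DLQ."""

    def __init__(self, handler: Callable[[dict], None], max_attempts: int = 3):
        self.handler = handler
        self.max_attempts = max_attempts
        self.seen: set = set()          # stand-in for a durable dedup store
        self.dead_letters: list = []    # stand-in for a dead-letter queue

    def consume(self, event: dict) -> str:
        event_id = event["id"]
        if event_id in self.seen:
            return "duplicate"          # redelivery: skip without side effects
        last_error = None
        for _ in range(self.max_attempts):
            try:
                self.handler(event)
            except Exception as exc:    # broad on purpose: any failure retries
                last_error = exc
                continue
            self.seen.add(event_id)
            return "processed"
        # Poison message: keep it for inspection instead of blocking the stream.
        self.dead_letters.append((event, repr(last_error)))
        return "dead_lettered"
```

Marking an event as seen only after the handler succeeds is the choice that matters: a crash mid-handler leads to a retry rather than a lost event.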

Observability must be layered. Data quality dashboards catch schema shifts and missing fields. Model monitors track prediction distributions, accuracy proxies, and drift signals. Infrastructure telemetry watches queue depths, tail latencies, and error rates. Correlating these layers shortens incident resolution and reveals systemic bottlenecks that single dashboards hide. Teams that invest here spend less time guessing and more time improving.

Cost Management: Economics of Real-Time AI

Real-time systems can get expensive if left to sprawl. Event volumes grow, feature stores expand, and inference calls multiply. Cost-aware architectures rightsize feature granularity, cache aggressively for hot paths, and batch low-criticality decisions where immediacy adds little value. Mixed precision serving and model distillation cut inference costs without gutting accuracy. Edge placement reduces bandwidth spend for sensor-heavy use cases. Importantly, cost metrics must sit beside business metrics: dollars per incremental conversion, per fraudulent dollar blocked, or per inventory dollar freed.
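
Putting cost beside business metrics can start with a single ratio. The sketch below estimates dollars per incremental conversion from a treated group and a control group, assuming a clean randomized split; the function name and the figures used with it are invented for illustration.

```python
from typing import Optional


def cost_per_incremental_conversion(
    serving_cost: float,
    treated_sessions: int,
    treated_conversions: int,
    control_sessions: int,
    control_conversions: int,
) -> Optional[float]:
    """Serving cost divided by conversions gained over the control baseline.

    Returns None when the treatment shows no lift, since the ratio is then
    undefined or misleading.
    """
    if control_sessions <= 0:
        raise ValueError("control_sessions must be positive")
    # Conversions the treated group would have produced at the control rate.
    baseline = control_conversions * treated_sessions / control_sessions
    incremental = treated_conversions - baseline
    if incremental <= 0:
        return None
    return serving_cost / incremental
```

With 100,000 treated sessions converting 3,500 times, a control group of 50,000 sessions converting 1,500 times, and 2,000 dollars of serving cost, the baseline is 3,000 conversions, the lift is 500, and the result is 4 dollars per incremental conversion.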

The other lever is consolidation. Many enterprises run multiple parallel stacks for adjacent use cases, each with its own pipelines and stores. Integrating around common contracts and governance reduces duplication. The result is less variance in behavior, fewer bespoke maintenance burdens, and a cleaner path to adding new models without reshaping foundations every time.

Implementation Experience: What Scales Beyond Pilots

Programs that moved beyond pilots shared a pattern. They began with high-impact, low-effort use cases tightly bound to measurable outcomes, such as in-transaction risk scoring or session-level ranking on high-traffic surfaces. They validated data readiness before modeling, fixing identifiers and schemas early. They set latency budgets and designed systems backward from them. They shipped with MLOps in place, not as a later add-on. And they produced decision logs the compliance team could read without translation.

In contrast, programs that stalled skipped early alignment on metrics, chose fashionable use cases with fuzzy economics, and underestimated the engineering load of real time. They over-indexed on off-the-shelf models without domain tuning and discovered late that explainability requirements blocked deployment. The lesson repeated across sectors: integration excellence, not model novelty, determined velocity and impact.

Competitive Landscape: Build, Buy, or Integrate

Enterprises face a classic choice. Building everything grants control but eats time and talent; buying monolithic platforms accelerates starts but dead-ends in rigidity. The integration stance takes a middle path: assemble best-of-breed components behind durable contracts. Feature stores, stream processors, inference servers, and LLM providers can be swapped as needs evolve, while business logic and data definitions remain stable. This reduces vendor lock-in and lets teams ride improvements in underlying technologies without disruptive rewrites.

Whether this approach beats the alternatives depends on constraints. Regulated industries value auditable lineage and policy layers that monoliths often oversimplify. High-scale consumer apps need tailored performance envelopes that one-size-fits-all platforms rarely hit. Organizations with diverse lines of business benefit from shared primitives rather than duplicated feature engineering per team. In short, the integration approach optimizes for long-term agility under governance, which is where most enterprise complexity lives.

Risk Management: Avoiding Failure Modes

Even with the right architecture, risk accumulates without discipline. Silent data drift gradually erodes prediction quality. Shadow dependencies in upstream systems cause cascading errors. Human overrides that bypass logging turn into audit gaps. Unpatched models embed biases that go unnoticed until customer complaints land. Mitigation requires policy, not hope: mandatory monitors for key features, automatic alerts for schema shifts, required sign-offs for model changes, and periodic bias and robustness tests scoped to high-stakes decisions.

A final risk is cultural. If teams treat AI as magic, they forgive sloppy practices until a public failure forces overcorrection. If teams treat AI as infrastructure, they demand the same rigor applied to payments, safety, or availability. The latter mindset keeps programs pointed at business value while preventing the operational debt that kills momentum.

The Verdict: System-Level AI, Not Feature Dust

The review supports a clear thesis: enterprise AI integration works when the product is the decision loop, not the model. The distinctive strength of this approach is its focus on feature consistency, event-driven design, and MLOps discipline that keeps models accurate, explainable, and fast under real load. Against the alternatives (feature bolt-ons, monolithic suites, or LLM-only bets), it provides a balanced path that preserves agility and governance. The main trade-off is upfront engineering intensity; teams have to invest in data contracts, observability, and policy layers before headline features appear. For organizations willing to treat AI as core infrastructure, the payoff shows up in engagement, margins, and risk reduction that persist beyond the first release.

Looking ahead, a few actionable steps stand out. Start with a use case that moves a revenue, cost, or risk metric within a quarter. Establish shared schemas and a feature store before attempting real-time loops. Define latency budgets and build backward from them. Pair structured ML for rankings and scores with LLMs for unstructured understanding, connected through retrieval. Instrument decision logs from day one to satisfy compliance without drama. With these moves, enterprises can turn scattered pilots into durable systems and shift competition from shipping features to orchestrating outcomes reliably at scale.