A hush fell across boardrooms when a single metric cut through the hype: most new code at a tech giant already came from AI, and the remaining human work had shifted from typing lines to steering systems, approving outputs, and setting policy with production-grade discipline.

From Copilots to Managed Agency: Why This Transition Matters Now

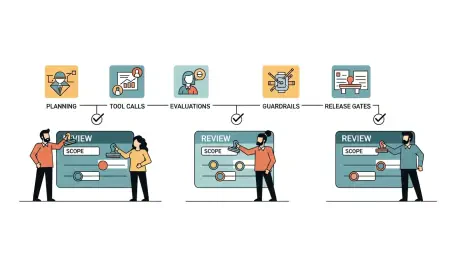

The center of gravity has moved from assistive tab-completion to autonomous, orchestrated agents that execute end-to-end tasks under human oversight. This spans software engineering, DevOps, marketing, sales enablement, and support, where agents plan, call tools, and submit changes while reviewers control quality and scope.

The significance is twofold: cycle times compress materially and quality improves via governance, redefining engineering toward architecture and validation. Ecosystems coalesce around Google (Gemini, Vertex AI), Microsoft (GitHub Copilot, Azure AI), Nvidia (platforms and tooling), Salesforce (Einstein), and Meta’s internal adoption push. Core enablers include large language models, function calling, vector search and RAG, multi-agent orchestration, evaluation pipelines, and guardrails. A layered market is forming across platforms, MLOps/LLMOps, agent frameworks, security/governance, and services—shaped by data protection rules, AI risk standards, and software supply chain controls.

The New Production Stack for Agents: Trends and Trajectory

Work Reimagined: Productivity, Roles, and the Rise of Orchestrated Autonomy

Google reports that roughly 75% of its new code is AI-generated and then approved by humans, and that a recent migration finished six times faster with a hybrid human–agent team. Beyond engineering, marketing teams generate thousands of variants, cutting turnaround time by about 70% and lifting conversion by about 20%.

Roles evolve as engineers move from manual coding to system design, architecture, and validation—closer to product engineering and solution architecture. Leadership pressure mounts as Nvidia calls for pervasive AI, Microsoft and Salesforce embed agents, and Meta links performance to usage. Operating models codify runbooks, centralized guardrails, human-in-the-loop checkpoints, and measurable outcomes, marking a cultural shift from experiments to accountable, transparent adoption.

By the Numbers and What Comes Next

Early indicators show durable throughput gains, high AI code shares, and faster go-to-market performance. Enterprises prioritize high-volume, lower-risk tasks first, then expand once controls prove reliable.

Cost curves improve as tool spend is offset by shorter cycles and by fewer defects, thanks to tests and policy checks. In the near term, agentization broadens from coding to testing, migration, documentation, and marketing ops; in the medium term, multi-agent systems coordinate across CI/CD and campaign orchestration. KPIs to track include acceptance rate, rework and defect rates, lead time, change failure rate, mean time to recovery (MTTR), and policy adherence.
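As a rough illustration, three of these KPIs can be computed from simple change records. The sketch below is a minimal example, not a reference implementation: the `AgentChange` record, its fields, and the sample data are hypothetical, and real pipelines would pull these values from review and incident tooling.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import List, Optional


@dataclass
class AgentChange:
    """Hypothetical record of one agent-proposed change."""
    accepted: bool                               # reviewer approved the proposal
    deployed: bool = False                       # change reached production
    caused_failure: bool = False                 # deployment led to an incident
    time_to_restore: Optional[timedelta] = None  # set only for failed changes


def acceptance_rate(changes: List[AgentChange]) -> float:
    """Share of agent proposals that reviewers accepted."""
    return sum(c.accepted for c in changes) / len(changes) if changes else 0.0


def change_failure_rate(changes: List[AgentChange]) -> float:
    """Share of deployed changes that caused a production failure."""
    deployed = [c for c in changes if c.deployed]
    if not deployed:
        return 0.0
    return sum(c.caused_failure for c in deployed) / len(deployed)


def mttr(changes: List[AgentChange]) -> Optional[timedelta]:
    """Mean time to restore service across failed changes, if any."""
    durations = [
        c.time_to_restore
        for c in changes
        if c.caused_failure and c.time_to_restore is not None
    ]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


# Illustrative data only: three accepted and deployed changes, one rejected.
sample_changes = [
    AgentChange(accepted=True, deployed=True),
    AgentChange(accepted=True, deployed=True, caused_failure=True,
                time_to_restore=timedelta(minutes=30)),
    AgentChange(accepted=True, deployed=True, caused_failure=True,
                time_to_restore=timedelta(minutes=90)),
    AgentChange(accepted=False),
]

print(acceptance_rate(sample_changes))      # 0.75
print(change_failure_rate(sample_changes))  # 2 of 3 deployed changes failed
print(mttr(sample_changes))                 # 1:00:00
```

Tracking acceptance separately from change failure rate matters here: a high acceptance rate paired with a rising failure rate would suggest reviewers are approving too quickly rather than that agent output is improving.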

Hard Problems in the Critical Path—and Playbooks to Solve Them

Safety risks persist: destructive edits, data leakage, prompt injection, and tool misuse. Technical hurdles include reliable planning, long-horizon tasks, non-determinism, version drift, and evaluating complex outputs.

Playbooks mitigate these gaps with risk-tiered human gates, policy-based guardrails on tools and scope, sandboxes and canary releases, automated evaluations and continuous monitoring, plus disciplined change management with training, documentation, and clear RACI for agent operations.
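One way to picture a risk-tiered human gate combined with a tool and scope guardrail is the sketch below. Everything in it is an assumption made for illustration: the tool names, the protected path prefixes, the tier rules, and the decision labels are hypothetical and would be defined by each organization's own policy.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class ProposedAction:
    tool: str                  # tool the agent wants to call
    target: str                # path or resource the call would touch
    destructive: bool = False  # deletes or overwrites existing state


# Hypothetical policy: the only tools an agent may call, and protected scopes.
ALLOWED_TOOLS = {"read_file", "write_file", "run_tests", "delete_file"}
PROTECTED_PREFIXES = ("prod/", "secrets/")


def classify(action: ProposedAction) -> RiskTier:
    """Assign a risk tier from the action's scope and effect."""
    if action.target.startswith(PROTECTED_PREFIXES):
        return RiskTier.HIGH
    if action.destructive or action.tool == "write_file":
        return RiskTier.MEDIUM
    return RiskTier.LOW


def gate(action: ProposedAction) -> str:
    """Return the checkpoint an action must pass before it runs."""
    if action.tool not in ALLOWED_TOOLS:
        return "deny"               # guardrail: tool is outside the allowlist
    tier = classify(action)
    if tier == RiskTier.HIGH:
        return "two_person_review"  # strictest human gate
    if tier == RiskTier.MEDIUM:
        return "human_review"
    return "auto_approve"           # low risk: proceed, but still log it


print(gate(ProposedAction("run_tests", "src/app")))                # auto_approve
print(gate(ProposedAction("write_file", "src/app.py")))            # human_review
print(gate(ProposedAction("delete_file", "prod/db", True)))        # two_person_review
print(gate(ProposedAction("shell", "src/")))                       # deny
```

The allowlist check runs before tiering so that an unapproved tool is rejected outright instead of being routed to a reviewer, which keeps human attention on actions that policy actually permits.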

Governance and Compliance as Enablers, Not Handbrakes

Regulatory signals—from EU-style risk tiers and NIST-aligned frameworks to sectoral rules and global privacy laws—favor governed approaches. Enterprise standards now require lineage, audit trails, RBAC/ABAC, segregation of duties, and secure-by-default practices.

Supply chain integrity matures via SBOMs, signed artifacts, policy as code, and protected builds for agent outputs. Security posture hinges on secrets hygiene, least-privilege tool access, scanning of generated code, and incident playbooks for agent misbehavior, backed by explainability artifacts, evaluation evidence, and periodic reviews.

Where the Market Is Headed: Platformization, Autonomy Levels, and New Moats

Emerging capabilities—multi-agent planning, workflow graphs, self-correction via evals, synthetic data, and on-device inference—raise autonomy while improving privacy and latency. Vertical agent platforms, model-native IDEs/DevOps, and integrated marketing ops agents could disrupt incumbents.

Customers favor measurable ROI, transparency, and control, with tools that slot into existing stacks. Economics reflect rapid cost/performance gains, GPU supply dynamics, and consolidation around platforms with governance built in. Talent demand tilts toward AI platform engineers, evaluators, and governance leads, with upskilling paths for engineers and marketers.

Strategic Takeaways and an Actionable Path Forward

Google’s governed agentic model accelerated delivery, elevated human roles, and signaled a move from pilots to disciplined operations. Early actions included targeting controllable tasks like migrations, test generation, documentation, and creative variants; establishing approvals, audit logs, policy boundaries, and rollbacks; building platform primitives such as tool registries, identity and permissions, observability, and evaluations; redesigning incentives for architecture-first engineering and reviewer excellence; measuring acceptance, quality, cycle time, incidents, and impact; and scaling autonomy gradually with regular safety reviews and stakeholder training.