A New Pace of Software Risk

An obscure configuration bug that once languished in a backlog for months can now be chained with a permissive log parser and an overlooked API edge case to yield a working exploit in a single afternoon. That is both the promise and the problem of putting frontier AI to work on production software, not as a static scanner but as a directed collaborator with tools, context, and a mandate to prove every claim. The shift has redrawn the boundaries of what counts as “low risk” and pushed security programs to value speed of remediation over the comfort of deterministic scoring.

This review examines AI-driven vulnerability management as exercised in large, heterogeneous codebases by Broadcom’s Infrastructure Software Group. The group’s findings moved from “impressive but not groundbreaking” to materially consequential once the approach was reframed. Rather than measuring a model like another linter, the team built an operating environment that mirrored how senior researchers work: explicit threat models, component context, live systems for testing, and a hard requirement for proof of exploitability. The result was not just more findings; it was a different class of findings that traditional tooling tends to miss.

What Is Being Reviewed

AI-driven vulnerability management here refers to frontier language models directed by structured workflows to discover, validate, and help remediate exploitable flaws in production-grade software. Unlike traditional static analysis, which flags patterns without context, this approach treats the model as a hypothesis engine that reasons over architecture, privileges, and data flows. The output is evaluated not by rule matches but by evidence: a proof-of-concept path and an actionable fix.

The distinction matters because static tools optimize for precision at the pattern level, while attackers optimize for impact at the system level. By embedding system knowledge—who calls what, where trust boundaries lie, which components run with which permissions—the AI stops behaving like a noise-prone scanner and starts functioning like a fast, tireless analyst. It asks, “Given this threat model, what path from input to impact is plausible, and how can it be demonstrated?”

How It Works: Context, Tools, and Direction

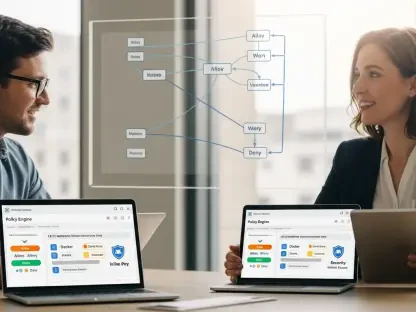

Three ingredients enable the jump in utility. First, explicit context changes the questions the model asks. Feeding threat models, dependency graphs, and deployment topologies lets the AI reason about trust transitions and data lifecycles, elevating analysis from line-level defects to end-to-end risk. Second, controlled access to build, runtime, and test environments enables the model to compile, run, and probe assumptions. This closes the loop between hypothesis and observation, producing findings that come with transcripts, traces, and minimal PoCs.

Third, discipline in direction keeps the system reliable. Structured prompts, iterative task decomposition, and gated validation steps reduce drift and make results reproducible. Findings are treated as unproven until a PoC triggers the bug and a candidate patch eliminates it without breaking tests. That adversarial posture aligns the AI’s incentives with production realities: fewer speculative tickets, more fixes that ship.
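The proof requirement described above can be sketched as a small state machine. This is a minimal illustration, not the group's actual tooling: the names `Finding`, `Status`, and `run_proof_gate`, and the two callbacks, are hypothetical stand-ins for whatever harness runs the PoC and the test suite.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Status(Enum):
    UNPROVEN = "unproven"            # default: a claim without evidence
    EXPLOITABLE = "exploitable"      # PoC triggered on the unpatched build
    FIX_VALIDATED = "fix_validated"  # patch closes the PoC and tests pass
    FIX_REJECTED = "fix_rejected"    # patch fails to close the PoC or breaks tests


@dataclass
class Finding:
    title: str
    status: Status = Status.UNPROVEN


def run_proof_gate(
    finding: Finding,
    poc_triggers: Callable[[bool], bool],  # hypothetical: arg is "patched build?"
    tests_pass: Callable[[bool], bool],    # hypothetical: arg is "patched build?"
) -> Finding:
    """Advance a finding only as far as the evidence allows."""
    # Gate 1: the PoC must actually trigger on the unpatched build.
    if not poc_triggers(False):
        return finding  # stays UNPROVEN, so no ticket is filed
    finding.status = Status.EXPLOITABLE

    # Gate 2: the candidate patch must stop the PoC without breaking tests.
    if poc_triggers(True) or not tests_pass(True):
        finding.status = Status.FIX_REJECTED
    else:
        finding.status = Status.FIX_VALIDATED
    return finding
```

In a real pipeline the callbacks would build and exercise isolated environments; here they are plain functions so the gating logic can be read on its own.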

Performance and Results

Under this methodology, performance changed in kind rather than in degree. The most notable capability was combinational reasoning. Models stitched together benign-looking conditions (a lax serializer, a non-obvious type coercion, a permissive rate limiter) into a coherent exploit chain. Each issue scored “low” in isolation; combined, they produced lateral movement or data exfiltration. This is where machine speed matters: the model can traverse vast code paths and configuration surfaces to find alignments that humans overlook under time pressure.
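One way to picture chain-aware reasoning is as a path search over individually low-severity findings. The sketch below is a deliberately simplified assumption, not a description of how the models reason: each finding is an edge that moves an attacker from one hypothetical state to another, and a breadth-first search looks for a route from untrusted input to impact. The state names and the `find_chain` helper are illustrative only.

```python
from collections import deque
from typing import Optional

# Each tuple is (state before, state after, finding name).
# States and finding names are hypothetical examples.
LOW_SEVERITY_FINDINGS = [
    ("untrusted_input", "deserialized_object", "lax serializer"),
    ("deserialized_object", "privileged_call", "non-obvious type coercion"),
    ("privileged_call", "bulk_data_read", "permissive rate limiter"),
]


def find_chain(edges, source: str, target: str) -> Optional[list]:
    """Return the shortest list of findings linking source to target, or None."""
    graph = {}
    for src, dst, name in edges:
        graph.setdefault(src, []).append((dst, name))

    queue = deque([(source, [])])
    seen = {source}
    while queue:
        state, path = queue.popleft()
        if state == target:
            return path
        for nxt, name in graph.get(state, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [name]))
    return None  # no chain: the findings stay isolated


chain = find_chain(LOW_SEVERITY_FINDINGS, "untrusted_input", "bulk_data_read")
```

Removing any single edge breaks the chain in this toy example, which is one reason a fix for a "low" finding can close a high-impact path.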

Equally important, the system delivered evidence, not just alerts. Findings arrived with working PoCs, proposed patches, and regression tests that demonstrated both exploit closure and functional preservation. That end-to-end arc lowered organizational friction: security engineering could send high-confidence fixes to product teams, and release managers could cut risk with less debate over severity semantics.

Differentiation: Why This and Not Competitors

Compared with legacy SAST/DAST, the differentiator is not raw detection volume but validated, chain-aware impact. Static tools excel at identifying known-weak patterns; they struggle to combine them into attack paths that reflect real deployments. Fuzzers find crashes, often deep and surprising, but need extensive harnessing and rarely map neatly to privilege boundaries. Bug bounties and pen tests surface creative chains, yet they are episodic and human-limited.

Frontier models, when supplied with system context and environment access, occupy the middle ground: broad code coverage with reasoning that spans components, plus the ability to generate and refine PoCs quickly. Benchmarks like CTFs understate this advantage because they withhold the very elements—tooling and operational context—that make the approach powerful in production. In short, the uniqueness lies less in the model itself and more in the disciplined wrapper that turns reasoning into shipping-quality security work.

What the Numbers Mean for Operations

While this review avoided raw counts, the directional signal was clear: more findings surfaced faster, and a higher share translated into fixes accepted by engineering. For users, that means two things. First, vulnerability backlogs will swell as latent issues become visible. Second, the window between disclosure and reliable exploitation will shrink because the same tools that find chains can weaponize them. As a result, competitive advantage moves from who can prioritize most precisely to who can patch most rapidly without breaking releases.

Interpreted for leadership, these results argue for different KPIs. Mean time to remediation becomes the headline metric, flanked by automated test coverage for security changes and rollback success rates. Triage still matters, but its role shifts from ranking to routing: deciding where to deploy scarce engineering time when speed is the dominant constraint.
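As a rough sketch of how mean time to remediation might be instrumented, the function below averages the interval between discovery and shipped fix over closed findings. The record layout (a pair of timestamps, with `None` for unfixed items) is an assumption for illustration; real programs will define start and stop events according to their own process.

```python
from datetime import datetime, timedelta
from typing import Optional


def mean_time_to_remediation(records) -> Optional[timedelta]:
    """Average (fixed - found) over remediated findings.

    records: iterable of (found_at, fixed_at) pairs; fixed_at is None
    while a finding is still open. Open findings are excluded here,
    which flatters the metric if the backlog is growing.
    """
    durations = [fixed - found for found, fixed in records if fixed is not None]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


# Hypothetical sample data.
sample = [
    (datetime(2025, 1, 1), datetime(2025, 1, 3)),  # fixed in 2 days
    (datetime(2025, 1, 2), datetime(2025, 1, 6)),  # fixed in 4 days
    (datetime(2025, 1, 5), None),                  # still open
]
mttr = mean_time_to_remediation(sample)
```

Because open findings drop out of the average, it is worth reporting the count or age of unremediated items alongside the headline number.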

Trade-Offs, Risks, and Limits

The approach is not without friction. Model reliability varies by task; poorly scoped prompts or ambiguous threat models yield plausible but wrong paths. Environment access must be tightly isolated to avoid introducing new risk, and replayable validation requires careful snapshotting of builds, data, and configs. Evaluation fidelity can degrade if test environments diverge from production in subtle ways, producing false comfort.
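One inexpensive guard against test and production divergence is to diff configuration snapshots before trusting a validation run. The following is a minimal sketch assuming each environment's settings can be flattened into a dictionary; the `config_drift` helper and the example keys are hypothetical.

```python
_MISSING = "<missing>"  # sentinel for keys present in only one environment


def config_drift(test_env: dict, prod_env: dict) -> dict:
    """Map each differing key to its (test value, production value) pair."""
    drift = {}
    for key in sorted(set(test_env) | set(prod_env)):
        test_value = test_env.get(key, _MISSING)
        prod_value = prod_env.get(key, _MISSING)
        if test_value != prod_value:
            drift[key] = (test_value, prod_value)
    return drift


# Hypothetical snapshots.
drift = config_drift(
    {"tls_min_version": "1.3", "debug": True},
    {"tls_min_version": "1.3", "debug": False, "waf": "on"},
)
```

A non-empty result does not prove a finding is invalid, but it flags which assumptions need rechecking before a PoC result is treated as representative.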

Organizationally, many change processes still assume quarterly patches and manual approval boards. That cadence collides with AI-driven discovery. Fragmented tooling and siloed ownership slow validated fixes, while skill gaps in prompt engineering and adversarial validation reduce returns. In the ecosystem at large, thinly maintained dependencies and opaque transitive trees propagate disruption: a fix in an upstream library forces cascades of version bumps and integration tests downstream.
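The downstream cascade from an upstream fix can be estimated by walking the dependency graph in reverse. This sketch assumes the transitive tree is already available as a mapping from each package to its direct dependencies; the package names and the `downstream_of` helper are made up for illustration.

```python
def downstream_of(deps: dict, fixed: str) -> set:
    """Return every package that directly or transitively depends on `fixed`."""
    # Invert the graph: dependency -> packages that require it.
    dependants = {}
    for package, requires in deps.items():
        for requirement in requires:
            dependants.setdefault(requirement, set()).add(package)

    affected, stack = set(), [fixed]
    while stack:
        current = stack.pop()
        for package in dependants.get(current, ()):
            if package not in affected:
                affected.add(package)
                stack.append(package)
    return affected


# Hypothetical dependency map: package -> direct dependencies.
deps = {
    "app": ["web"],
    "web": ["parser"],
    "cli": ["parser"],
    "parser": [],
    "metrics": [],
}
impacted = downstream_of(deps, "parser")
```

Each package in the result needs a version bump and an integration test run, which is the cascade cost the paragraph above describes.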

Market Trajectory and Competitive Pressure

As vendors and adversaries deploy similar techniques, the market will experience higher issue volumes and compressed exploit timelines. Expect an early, orderly wave of fixes from well-resourced platforms, followed by turbulence across long-tail open source where maintainers lack automation. Over time, the ecosystem is likely to settle into a steady state of persistent disclosure and short weaponization windows, making operational velocity the decisive KPI.

Competitively, products that embed disciplined AI—context ingestion, environment harnessing, proof requirements—will outpace those adding “AI” as a scanner mode. The difference will show up in customer outcomes: fewer false positives, faster patch acceptance, and clearer audit trails. Buyers should probe vendors on proof workflows, not just model benchmarks.

Who Benefits Now

Enterprises and public sector teams with mature CI/CD can adopt this method fastest: wire the AI into build artifacts, ephemeral test stacks, and telemetry; set proof gates; automate approvals for validated fixes. Organizations with legacy change management can still benefit by focusing on vendor update ingestion: standard emergency pathways, pre-approved rollback plans, and MTTR targets. Open-source maintainers gain leverage if platform partners provide shared infrastructure for validation and regression testing, converting ad hoc heroics into repeatable process.

Broadcom’s contributions sit in enablement: integrating frontier AI across discovery, exploit validation, patch creation, and regression, while investing in partnerships and open standards that diffuse disciplined practices throughout supply chains. The emphasis on adversarial proof and end-to-end integration is a practical template others can copy.

Verdict and Next Moves

This technology changed how vulnerability work got done by turning large models into system-level analysts bound by evidence. Its uniqueness did not rest on flashy demos but on a sober methodology: threat models for direction, environment access for truth, and adversarial proof for credibility. The payoff was a surge in exploitable, chain-aware findings and a cleaner handoff to engineering, which in turn made patch velocity—not static severity—the metric that mattered.

For teams planning next steps, the highest-leverage moves are to modernize change pipelines around security fixes, instrument MTTR as a top-line KPI, and adopt disciplined AI with explicit proof requirements. Where internal capacity is thin, the practical route is consolidation: fewer platforms, standardized workflows, and partnerships that bring shared validation infrastructure. Taken together, these actions position defenders to meet AI-driven offense on equal footing, not by scoring findings better but by shipping safe fixes faster.