The persistent struggle to transform a clever digital experiment into a reliable corporate workhorse has left many executive suites questioning the massive capital investments funneled into large language models over the last several development cycles. While the initial wave of enthusiasm focused on the novelty of generative responses, the current landscape demands more than just sophisticated conversation. Organizations are now seeking systems that can navigate complex operational workflows, manage internal resources, and execute transactions without constant manual intervention. This shift marks the beginning of a transition from passive assistants to active agents capable of driving measurable business value.

The Fragile Bridge Between Artificial Intelligence Prototypes and Production Reality

Most enterprises are currently trapped in a cycle of pilot purgatory where promising experiments fail to scale because they lack the structural integrity required for the corporate world. While a chatbot that answers basic internal questions is a manageable novelty, an autonomous agent capable of adjusting compute resources or accessing sensitive financial records introduces risks that traditional software frameworks are not equipped to handle. The gap between a successful demonstration and a production-ready system is often wider than anticipated, primarily due to the unpredictable nature of unstructured data and the lack of rigorous guardrails.

Teradata’s recent strategic shift suggests that the era of experimental projects is ending, replaced by a demand for systems that do not just talk, but act safely and predictably at scale. Moving a project into a live environment requires more than just a powerful model; it necessitates a foundation that treats artificial intelligence as a core operational component rather than a standalone feature. Without this shift in perspective, many companies find their innovation efforts stalling as they realize that the tools used to build a prototype are rarely sufficient to sustain a global enterprise deployment.

The Scaling Crisis: Why Generative AI Hits a Wall in the Enterprise

The transition from a simple large language model to a functioning agent is fraught with hurdles that often stall digital transformation efforts. Organizations frequently struggle with fragmented data silos that prevent models from accessing the context needed to make informed decisions. Furthermore, the skyrocketing costs of specialized compute and the looming threat of hallucinations in high-stakes environments create a barrier to entry that few can overcome. When a system provides an incorrect answer in a casual setting, it is a minor inconvenience; when it makes an error in a regulated financial report, the consequences are severe.

Traditional security protocols are often too rigid or too porous for the fluid nature of these new workflows. This creates a bottleneck where innovation is stifled by the very governance intended to protect the business, making it nearly impossible to move beyond proof-of-concept projects without a unified operational layer. Moreover, the lack of transparency in how many models arrive at their conclusions makes it difficult for compliance officers to approve widespread use. Consequently, the initial speed of development often grinds to a halt as soon as the project encounters the realities of corporate risk management and cost optimization.

Inside the Autonomous Knowledge Platform: A Unified Ecosystem for Agency

Teradata’s flagship Autonomous Knowledge Platform addresses these bottlenecks by consolidating disparate tools into a single, cohesive environment designed for backend operational tasks. The AI Studio provides a dedicated workspace where developers build, test, and deploy complex workflows without switching between incompatible third-party platforms. This integration reduces the friction typically associated with moving code from a sandbox to a production server, ensuring that the logic used during development remains intact during deployment.

Beyond the development tools, specialized agents known as Tera and Tera Agents handle the heavy lifting of infrastructure management. These agents handle performance tuning and compute sizing and monitor FinOps telemetry to keep cloud costs under control, moving beyond basic interfaces to become active participants in system maintenance. By allowing enterprises to store data once and access it across all functions through a connected foundation, the platform ensures that agents work from a single source of truth. This centralized approach reduces errors and improves traceability, which is essential for any organization looking to scale its operations.

Bounded Autonomy: The Strategic Differentiator in a Crowded Market

While competitors often focus on the speed of deployment, the concept of bounded autonomy prioritizes rigorous governance over open-ended agency. This is achieved through the Enterprise MCP, or Governed Context Interface, a control plane that enforces role-based access and schema validation for every interaction. By maintaining a human-in-the-loop requirement for high-risk actions, the platform provides a safety net that is vital for highly regulated sectors like banking and healthcare. This ensures that while the agent has the power to suggest and prepare actions, the final authority remains with a qualified professional.
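To make the idea of bounded autonomy concrete, the following is a minimal sketch of a control-plane check that combines role-based access, schema validation, and a human approval gate. It is not Teradata's implementation or API: the `REGISTRY` contents, the `evaluate` function, the role names, and the action names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    NEEDS_APPROVAL = "needs_approval"


@dataclass(frozen=True)
class ActionSpec:
    """Hypothetical description of one action an agent may request."""
    allowed_roles: frozenset  # roles permitted to request the action
    schema: dict              # parameter name -> required Python type
    high_risk: bool           # True if a human must sign off first


# Hypothetical registry; action and role names are illustrative only.
REGISTRY = {
    "read_usage_report": ActionSpec(
        frozenset({"analyst", "operator"}), {"month": str}, False
    ),
    "resize_compute": ActionSpec(
        frozenset({"operator"}), {"cluster": str, "nodes": int}, True
    ),
}


def evaluate(role, action, params, approved_by=None):
    """Return a Decision for a single agent request."""
    spec = REGISTRY.get(action)
    if spec is None:
        return Decision.DENY  # unknown actions are never executed
    if role not in spec.allowed_roles:
        return Decision.DENY  # role-based access check
    if set(params) != set(spec.schema):
        return Decision.DENY  # missing or unexpected parameters
    for name, expected in spec.schema.items():
        if type(params[name]) is not expected:
            return Decision.DENY  # parameter has the wrong type
    if spec.high_risk and approved_by is None:
        return Decision.NEEDS_APPROVAL  # human-in-the-loop gate
    return Decision.ALLOW
```

In this sketch an operator asking to resize a cluster receives `NEEDS_APPROVAL` until a named reviewer is supplied through `approved_by`, while an analyst making the same request is denied outright. The agent can prepare the action, but it cannot complete a high-risk one on its own.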

This approach transforms artificial intelligence from a potential liability into a governed corporate asset. Every decision an agent makes is recorded, providing a complete audit trail that can be reviewed by internal teams or external regulators. Unlike black-box systems that offer little insight into their internal logic, a governed framework allows for the fine-grained control of what information is shared and what actions are permitted. This transparency builds the trust necessary for leadership teams to authorize the use of autonomous systems in business-critical processes.
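One common way to make such an audit trail reviewable is to chain entries together with hashes so that later tampering becomes detectable. The sketch below illustrates that general technique only. The article does not say how the platform stores its records, and the `AuditTrail` class and its field names are assumptions made for this example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body):
    """Hash an entry body deterministically (keys sorted before encoding)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class AuditTrail:
    """Hypothetical append-only log of agent decisions."""

    def __init__(self):
        self._entries = []

    def record(self, agent, action, decision):
        """Append one decision, linking it to the previous entry's hash."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        body = {
            "agent": agent,
            "action": action,
            "decision": decision,
            "prev": prev_hash,
        }
        entry = dict(body, hash=_digest(body))
        self._entries.append(entry)
        return entry

    def verify(self):
        """Return True only if no recorded entry has been altered."""
        prev_hash = GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Because each entry includes the hash of the one before it, editing an earlier record changes its digest and causes `verify()` to fail. This gives internal teams or external regulators a simple consistency check to run before relying on the log.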

A Roadmap for Implementing Governance-First AI Agents

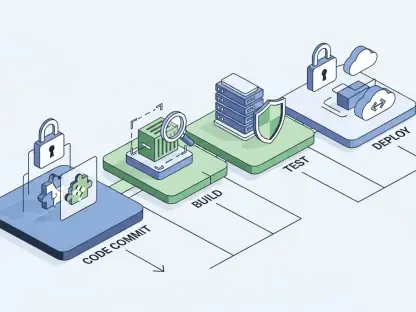

To move from pilots to agents, enterprises can adopt a structured framework that prioritizes security and operational efficiency. The first step is to establish a governed context, using a centralized interface to define strict permissions before any deployment. Once these boundaries are set, organizations operationalize performance tuning by deploying agents specifically to monitor infrastructure. This keeps growth from producing unmanageable cloud expenditures, because the agents themselves manage the resources they require to function.

The implementation process also involves multi-modal orchestration to maintain consistency across hybrid environments. With specialized orchestration tools, businesses can ensure that their systems perform reliably whether they run on-premises or in the cloud. Finally, human oversight is integrated as a mandatory checkpoint for sensitive tasks, aligning autonomous actions with organizational policies. Together, these steps move the industry toward a future where agents are not just experimental tools but essential components of the modern corporate infrastructure.