The State of DevOps AI Agents: Scope, Stakes, and Where They Fit Now

Production teams keep asking a hard-edged question with immediate budget consequences: can an autonomous DevOps agent safely observe live systems, decide on a course of action, and execute changes without human intervention, improving speed and resilience rather than adding a new failure mode? The answer hinges on a clear definition. Unlike chatbots or coding copilots, which only advise, an autonomous agent perceives telemetry across logs, metrics, traces, CI output, and cluster events, reasons over that context, and acts through APIs, scripts, and runbooks, sometimes learning from feedback loops.

Autonomy matters because it compresses reaction time and scales coverage across sprawling estates, yet the same power amplifies risk. The most credible value appears across the plan, code, build, test, deploy, operate, secure, and optimize stages of the delivery lifecycle, with the most traction in incident response and SRE assistance, code and pipeline intelligence, infrastructure posture and policy compliance, and FinOps with capacity planning. Under the hood, large language models, retrieval, tool-use APIs, event streams, vector stores, embeddings, policy engines, and observability platforms enable these behaviors. Hyperscalers, DevOps platforms, incident tooling vendors, infrastructure and policy providers, and agent observability stacks all jockey for position. The common thread in production is toil reduction and faster time-to-insight, kept safe by guardrailed autonomy and risk-managed change.

Momentum and Market Signals: What’s Shaping Adoption and Where It’s Heading

Adoption has tilted toward assistive patterns first, then autonomy where the blast radius is small and the playbook is known. Claims of broad “self-healing” have given way to assisted remediation with human approval, as teams prefer bounded, read-heavy deployments that aggregate context over risky write paths.

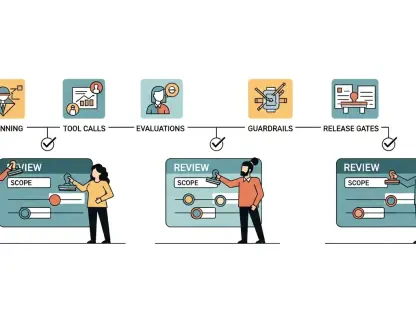

Engineering leaders now insist on agent observability (reasoning traces, action logs, and confidence scoring), paired with an instrumentation-first mindset: clean telemetry, SLOs, and policy-as-code before any agent gets credentials. Integration depth beats model novelty: adapters for CI/CD, Kubernetes, cloud APIs, and ITSM matter more than the latest model. Enterprises also demand explainability, auditability, RBAC, and approvals. Early success patterns center on automated incident triage and impact scoping, pull request summarization with CI failure triage, and cost or configuration anomaly detection validated against policy.

Trends Moving the Needle: Assistive First, Autonomy Where Safe

The most visible shifts are pragmatic. Teams deploy agents to read, correlate, and summarize, then route suggested actions for sign-off. This preserves human judgment while shrinking the time to understand an alert or interpret a failing pipeline, and it limits the fallout from occasional misreads.

At the same time, vendor roadmaps emphasize integration and control: event bus ingestion, policy gates, confidence thresholds, and approval workflows. As a result, autonomy shows up in rehearsed steps—like safe rollbacks or pod restarts—where runbooks are deterministic and the environment is well-instrumented.
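To make the idea of confidence thresholds and approval workflows concrete, here is a minimal sketch of a gate that decides whether a proposed action runs automatically, goes to a human, or is dropped. Everything in it is an illustrative assumption rather than any vendor's API: the `ProposedAction` and `Decision` types, the `REHEARSED_ACTIONS` allowlist, and the threshold values are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"
    NEEDS_APPROVAL = "needs_approval"
    REJECT = "reject"


# Hypothetical allowlist of rehearsed, deterministic runbook steps.
REHEARSED_ACTIONS = {"rollback_deployment", "restart_pod"}


@dataclass(frozen=True)
class ProposedAction:
    name: str
    environment: str
    confidence: float  # agent-reported score in [0.0, 1.0]


def gate(action: ProposedAction,
         auto_threshold: float = 0.9,
         min_confidence: float = 0.5) -> Decision:
    """Route a proposed action based on confidence and the allowlist."""
    if not 0.0 <= action.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    # Too uncertain to be worth a reviewer's time.
    if action.confidence < min_confidence:
        return Decision.REJECT
    # Only rehearsed steps with high confidence may skip approval.
    if action.name in REHEARSED_ACTIONS and action.confidence >= auto_threshold:
        return Decision.AUTO_EXECUTE
    # Everything else is routed to a human for sign-off.
    return Decision.NEEDS_APPROVAL
```

Under these assumptions, a high-confidence `restart_pod` would run unattended, while an unfamiliar action with the same score would still wait for sign-off. The design choice is that autonomy is granted per action, not per agent.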

Data Points and Outlook: Adoption Curves, ROI, and Forecasts

Penetration sits between pilots and targeted production in mid-to-large engineering groups, with leaders tracking mean time to acknowledge (MTTA), mean time to understand (MTTU), change failure rate, rework from misdiagnosis, and false positive or negative rates in anomaly detection. ROI clusters around toil minutes shaved per incident, reviewer time saved on PRs, earlier misconfiguration detection, and containment of cost spikes.
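The metrics named above are simple ratios and averages, and a short sketch may help teams agree on definitions before comparing numbers. The `Incident` record and its field names are hypothetical, and measuring MTTU from alert time to an agreed diagnosis is an assumption made here, since the term has no single standard definition.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Sequence


@dataclass(frozen=True)
class Incident:
    alerted_at: datetime
    acknowledged_at: datetime
    understood_at: datetime  # assumed: when responders agreed on a diagnosis


def mtta_seconds(incidents: Sequence[Incident]) -> float:
    """Mean time from alert to acknowledgement, in seconds."""
    return mean([(i.acknowledged_at - i.alerted_at).total_seconds()
                 for i in incidents])


def mttu_seconds(incidents: Sequence[Incident]) -> float:
    """Mean time from alert to diagnosis, in seconds (assumed definition)."""
    return mean([(i.understood_at - i.alerted_at).total_seconds()
                 for i in incidents])


def change_failure_rate(failed_changes: int, total_changes: int) -> float:
    """Share of production changes that caused a failure."""
    if total_changes <= 0:
        raise ValueError("total_changes must be positive")
    return failed_changes / total_changes


def false_positive_rate(false_positives: int, true_negatives: int) -> float:
    """FP / (FP + TN): how often normal behavior is flagged as anomalous."""
    total = false_positives + true_negatives
    if total == 0:
        raise ValueError("no negative samples")
    return false_positives / total


def false_negative_rate(false_negatives: int, true_positives: int) -> float:
    """FN / (FN + TP): how often a real anomaly is missed."""
    total = false_negatives + true_positives
    if total == 0:
        raise ValueError("no positive samples")
    return false_negatives / total
```

Computing these before and after an agent rollout, on the same incident population, gives a like-for-like comparison.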

The near-term outlook points to gradual expansion of safe autonomy in rehearsed failure modes, and slower gains across heterogeneous, legacy-heavy estates. Consolidation is likely around platforms offering robust policy guardrails, audit trails, and native integrations. Under budget scrutiny, buyers favor measurable, low-risk improvements over moonshots.

Reality Check: Technical, Organizational, and Integration Hurdles

Technical limits remain stubborn: agents falter in novel, cascading failures, and can hallucinate or exhibit overconfidence. Tool-use reliability, idempotency, and rollback safety require painstaking engineering. Polyglot code, hybrid infrastructure, and partial or stale context also degrade decision quality and shrink the set of safe actions.

Organizational friction compounds the challenge. Change management policies, approval latency, unclear ownership, and discomfort with automated actions slow rollouts. Skill gaps in prompt management, policy tuning, and failure rehearsal further hamper outcomes, while inconsistent telemetry, missing runbooks, fragmented APIs, and snowflake environments raise integration costs. Mitigations include bounded scopes, explicit guardrails, safe defaults, circuit breakers, human-in-the-loop gates, chaos drills for agent failure modes, canarying agent actions, and agent SLOs with continuous evaluation and counterfactual tests.
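One of the mitigations listed above, a circuit breaker around agent actions, can be sketched in a few lines. This is an illustration under stated assumptions, not a reference implementation: the class name, the failure limit, and the cooldown are hypothetical, and a production version would also need persistence and alerting.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised when agent actions are paused after repeated failures."""


class ActionCircuitBreaker:
    def __init__(self,
                 max_failures: int = 3,
                 cooldown_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.cooldown_seconds = cooldown_seconds
        self._clock = clock  # injectable so the breaker can be tested
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, action: Callable[[], object]) -> object:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.cooldown_seconds:
                raise CircuitOpenError("agent actions paused; escalate to a human")
            # Cooldown elapsed: allow one trial call (half-open state).
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = action()
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()  # trip the breaker
            raise
        self._failures = 0  # any success resets the failure count
        return result
```

Wrapping every write-path tool call in `breaker.call(...)` means a misbehaving agent stops itself after a few consecutive failures instead of retrying indefinitely.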

Compliance and Control: The Rules Shaping Safe Autonomy

Regulatory and standards frameworks set the floor for acceptable risk. EU AI Act risk tiers and the NIST AI RMF shape risk treatment, while SOC 2, ISO 27001, PCI DSS, SOX change controls, GDPR or CCPA, and HIPAA drive auditing, data handling, and segregation of duties. Enterprises respond with tight RBAC, least-privilege agent credentials, approval workflows, and explicit control gates.

Auditability has become table stakes: decision logs, data lineage, model and version provenance, and reproducible action plans must be retained. Security hardening includes secrets management, constrained sandboxes, controlled egress, and, increasingly, signed action plans. Policy-as-code alignment via OPA, Conftest, or Checkov enforces preflight checks, while operational safeguards—incident backout plans, kill switches, anomaly thresholds, and rate limits—contain mistakes before they propagate.
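As one way to picture a signed action plan, the sketch below signs a canonical JSON form of a plan with HMAC-SHA256 and verifies it before execution. The scheme is an assumption chosen for brevity; real deployments may prefer asymmetric signatures. The plan fields and the key are hypothetical, and in practice the key would come from a secrets manager rather than source code.

```python
import hashlib
import hmac
import json


def canonical_bytes(plan: dict) -> bytes:
    """Serialize the plan deterministically so equal plans sign equally."""
    return json.dumps(plan, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_plan(plan: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 signature over the canonical plan."""
    return hmac.new(key, canonical_bytes(plan), hashlib.sha256).hexdigest()


def verify_plan(plan: dict, signature: str, key: bytes) -> bool:
    """Constant-time check that the plan was not altered after approval."""
    return hmac.compare_digest(sign_plan(plan, key), signature)


# Hypothetical plan approved by a reviewer, then checked by the executor.
plan = {"action": "restart_pod", "target": "checkout-7f9c", "max_retries": 1}
key = b"example-key-for-illustration-only"
signature = sign_plan(plan, key)
```

An executor that refuses any plan failing `verify_plan` ensures that what runs is exactly what was approved, which also gives auditors a reproducible artifact to retain.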

The Road Ahead: From Toil-Slashing Assistants to Trusted, Bounded Autonomy

Emerging capabilities point to tool-augmented reasoning, longer-horizon plans broken into verifiable steps, stack-specific fine-tuning, and simulation-based validation. Likely disruptors include unified observability-action fabrics, policy-driven planners, and closed-loop runbooks with confidence-aware gates that meter autonomy to match uncertainty.

Buyer preferences continue to evolve toward explainable agents with strong SLAs, transparent evaluations, and integration-native offerings. Growth appears strongest in triage, PR intelligence, CI reliability analytics, FinOps alerts with contextual root-cause hints, and pre-approved remediations for rehearsed incidents like cache flushes, pod restarts, and targeted rollbacks. Progress still depends on better telemetry hygiene, standardized incident ontologies, shared benchmarks, and vendor-neutral interfaces, while cost pressure, cloud complexity, and tightening compliance requirements push teams toward measured autonomy.

Bottom Line and Playbook: When to Trust, Where to Start, and How to Scale

Three conclusions stand out. Agents deliver today in bounded, read-dominant tasks with low risk; fully autonomous remediation at scale remains overstated; and production readiness is a systems property that blends scope, observability, policy, and testing rather than a single model feature. Trust is earned through transparency, repeatability, and restraint.

Practical steps follow a simple arc. Start with incident triage, PR summarization, or cost and configuration anomaly detection backed by policy checks. Instrument the agent from day one, capturing context, reasoning, confidence, and actions. Codify ownership, confidence thresholds, and approvals for production-changing steps. Rehearse ambiguous scenarios and partial outages in staging, and expand autonomy only after the agent has shown repeatable correctness on the exact scenario, with guardrails intact. Teams that invested in instrumentation, policy frameworks, and deep integrations over flashier models saw steadier gains and contained risk.
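Instrumenting the agent from day one can start as small as one structured log line per decision. The sketch below shows a possible record shape covering context, reasoning, confidence, and action; the `AgentDecisionRecord` type and every field name are hypothetical and would need to match a team's own logging schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class AgentDecisionRecord:
    incident_id: str
    context_refs: List[str]        # pointers to the logs, traces, or PRs consulted
    reasoning_summary: str         # short rationale the agent reported
    confidence: float              # agent-reported score in [0.0, 1.0]
    proposed_action: str
    approved_by: Optional[str] = None  # None until a human signs off
    recorded_at: str = field(default_factory=_utc_now)


def to_log_line(record: AgentDecisionRecord) -> str:
    """Serialize one decision as a single JSON line for the audit log."""
    return json.dumps(asdict(record), sort_keys=True)


record = AgentDecisionRecord(
    incident_id="INC-0001",
    context_refs=["trace:example", "alert:example"],
    reasoning_summary="Error rate rose after the latest deploy.",
    confidence=0.82,
    proposed_action="rollback_deployment",
)
line = to_log_line(record)
```

Because each line is self-describing JSON, the same records can feed dashboards, audits, and later evaluation of how often the agent's confident proposals turned out to be right.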