The relentless pursuit of high-end silicon has reached a point where the simple addition of more processing power no longer guarantees a proportional increase in performance for large-scale artificial intelligence deployments. For many enterprises, the strategy for scaling artificial intelligence has relied on an expensive brute force approach: simply throwing more high-end silicon at the problem. However, global retailers and tech giants are discovering a frustrating paradox where doubling their GPU count often fails to resolve latency spikes during peak traffic. This disconnect suggests that the industry is hitting a structural wall rather than a hardware one. The reality is that the standard monolithic approach to serving large language models is fundamentally misaligned with the way these models actually process data. Purchasing more NVIDIA #00s is a hollow victory if those chips spend the majority of their operational life acting as overqualified memory readers rather than high-performance compute engines.

The true challenge lies in the internal mechanics of transformer-based models, which dictate how information moves through a cluster. When a system is under heavy load, the traditional method of processing requests in a single, unified stream causes a conflict between different types of mathematical operations. This conflict is what prevents the hardware from reaching its advertised potential, regardless of how much capital is invested in the data center. Furthermore, as the complexity of user prompts increases, the strain on these monolithic systems becomes more evident, leading to a situation where the most advanced chips in the world are effectively being throttled by their own architectural configuration.

The Invisible Waste in Modern AI Infrastructure

The current GPU shortage is frequently framed as a supply-chain issue, but a closer look at system profiling suggests it is equally a problem of massive architectural inefficiency. Traditional inference setups treat large language model tasks as a single, uniform workload, but this masks a deep-seated structural imbalance. When a 70-billion-parameter model is integrated into a high-stakes environment like a search engine, the hardware is forced to oscillate between two wildly different operational modes. This leads to a bimodal utilization pattern that standard monitoring dashboards—which typically report misleading average percentages—fail to capture, leaving millions of dollars in compute power effectively stranded.

These misleading metrics often give a false sense of security to infrastructure managers. A dashboard might show 50% or 60% utilization, which seems acceptable on the surface, but this figure is a mathematical abstraction that hides periods of total saturation followed by periods of relative dormancy. In practice, the system is never running at a consistent, efficient pace. This uneven distribution of work not only wastes electricity but also necessitates the purchase of more hardware than should be required to handle the actual request volume. By failing to look beneath the surface of average utilization, organizations continue to invest in a cycle of waste that serves neither the developer nor the end user.

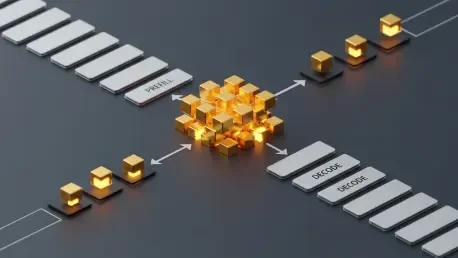

Decoding the Bimodal Workload: Why Monolithic Serving Fails

The core of the efficiency crisis lies in the two distinct phases of large language model inference: prefill and decode. The prefill phase is a compute-intensive sprint where the model processes a user’s prompt in parallel, pushing tensor cores to nearly 100% utilization. In contrast, the token generation or decode phase is a sequential, memory-bound marathon that accounts for the vast majority of the request time but leaves the GPU’s compute cores largely idle. By forcing both tasks onto the same hardware, monolithic systems create a bottleneck where new prefills interrupt ongoing decodes, causing the stuttering text output familiar to many AI users and preventing the cluster from ever reaching a steady state of optimal performance.

This interruption is more than just a minor annoyance for the user; it represents a fundamental clash in resource management. When a new request enters a system that is already busy generating tokens, the GPU must suddenly switch gears to handle the high-compute prefill task. This context switching forces existing tasks to wait, leading to increased inter-token latency. Because the hardware cannot be optimized for both dense matrix multiplication and high-bandwidth memory reading simultaneously, it inevitably compromises on both. This structural flaw ensures that as long as the workloads remain interleaved, the system will remain locked in a cycle of inefficiency that no amount of additional silicon can fully resolve.

Evidence from the Field: Reclaiming Compute Power with Disaggregation

Industry leaders like Meta, LinkedIn, and Perplexity have already transitioned away from monolithic serving toward a disaggregated inference architecture. This shift involves splitting GPU resources into specialized pools—one optimized for high-bandwidth prompt processing and another for memory-heavy token generation. Data from real-world implementations shows that this reorganization can effectively double the efficiency of an existing cluster. For instance, a global retailer was able to jump from 30% to over 70% bandwidth utilization while flattening inter-token latency. These results are supported by a rapidly maturing ecosystem, including NVIDIA’s Dynamo framework and open-source engines like vLLM and SGLang, which now offer native support for split-pool serving.

The success of these implementations proves that the problem is not the capacity of the chips, but rather how they are organized. By dedicating specific GPUs to the prefill stage, these organizations ensure that the heavy compute work is handled by hardware configured for maximum throughput. Meanwhile, the decode pool can focus entirely on the memory-intensive task of token generation without being interrupted by new incoming prompts. This division of labor allows each part of the system to operate at its highest possible efficiency. As a result, companies have reported that they can handle twice the traffic with the same number of GPUs, fundamentally changing the economics of running large-scale AI services.

A Framework for Implementation: Moving Beyond Average Utilization

Transitioning to disaggregated inference requires a strategic move from a hardware-centric to an architecture-centric mindset. Organizations must first instrument their serving layers to move beyond average metrics and identify the specific saturation points of their prefill and decode cycles. A practical implementation strategy involves deploying a specialized routing layer and high-speed networking, such as Remote Direct Memory Access (RDMA), to transfer key-value caches between specialized compute and memory pools. While this approach yields the highest return on investment for large-scale deployments with long prompt sequences, it offers a clear path for any enterprise to stop overpaying for idle silicon and unlock the true potential of current hardware investments.

The technical requirement for this shift involves a more sophisticated orchestration layer than what is found in standard deployments. Engineers had to ensure that the transfer of the key-value cache between nodes happened fast enough to avoid introducing new latency. High-speed networking became the backbone of this strategy, acting as the bridge that allowed the compute pool to hand off its finished work to the memory pool seamlessly. Despite the initial complexity of setting up such a system, the long-term benefits in terms of cost reduction and performance stability made it a necessary evolution. Companies that successfully navigated this transition found themselves in a much stronger position to scale their AI offerings without the constant need for massive capital outlays.

The transition to disaggregated inference represented a fundamental change in how the industry approached the scaling of artificial intelligence. Organizations moved toward a more nuanced understanding of workload requirements, effectively doubling their capacity by simply reorganizing their existing assets. This shift moved the focus away from the raw count of GPUs and toward the efficiency of the software architecture that managed them. By separating the prefill and decode stages, enterprises achieved a level of performance that was previously thought to be impossible without significant hardware upgrades. The implementation of specialized pools and high-speed networking solved the persistent issues of latency and core idleness. This architectural evolution proved that the key to unlocking the power of modern silicon lay in how the work was distributed rather than the sheer volume of chips in the rack. Looking ahead, this model set a new standard for sustainable and cost-effective AI growth.