Rising training bills, tighter carbon caps, and stubborn accuracy plateaus are colliding, pushing PyTorch teams to seek optimizations that deliver real gains without ripping up proven architectures. This how-to guide shows how to evaluate, integrate, and benchmark Perforated AI’s dendrite-inspired extension in PyTorch to target accuracy, size, speed, and energy simultaneously. The goal is simple: run a controlled pilot that tests whether artificial dendrites improve cost-accuracy tradeoffs without architectural rewrites.

The guide matters because the technology now sits inside the PyTorch Ecosystem, which signals compatibility, visibility, and baseline quality. However, community verification still decides whether the approach holds across tasks and workloads. By following the steps below, practitioners can generate reproducible evidence, compare fairly against strong baselines, and decide whether dendritic optimization earns a place in production.

From PyTorch Landscape to Production: Why Dendritic Optimization Matters Now

Ecosystem acceptance places the extension in full view of teams that prize reliability and maintainability. Listing in the Landscape acts as a trust accelerant: discoverability improves, compatibility expectations rise, and integration risks appear lower. Moreover, it reduces the coordination cost of getting buy-in inside larger organizations.

Perforated AI positions dendrite-inspired components as a way to bend the familiar accuracy-versus-cost curve. Rather than pushing for deeper networks or bespoke kernels, the pitch is to insert artificial dendrites that reshape signal processing within existing modules. That framing resonates with today’s production realities: budgets are stressed, schedules are fixed, and energy targets tighten.

The Case for Dendritic Intelligence in a PyTorch World

PyTorch has become the standard path from prototype to production, thanks to its eager execution model, large community, and extension points. In that setting, neuroscience-inspired methods migrate from novelty to practicality as soon as they are pip-installable, debuggable, and observable with familiar tools.

Claims remain ambitious: reports cite up to 75% lower error, 90% fewer parameters, 97% compute savings, and about 10x greener runs. These are company-stated outcomes and require independent verification. The differentiator is the light integration story: minimal code changes, no wholesale rewrites, and a path to A/B tests that isolate the effect of dendrites from other tweaks.

Implementing Dendritic Optimization in Your PyTorch Workflow

Step 1: Define the Performance and Efficiency Targets That Matter

Start by stating the win condition: accuracy lift, parameter reduction, latency, memory headroom, or energy draw. Rank these targets and record current baselines to reduce confirmation bias when results arrive.

Tip: Anchor Goals to Baseline Metrics (top-1 error, throughput, watts, cost per epoch)

Step 2: Install and Sanity-Check the PyTorch Extension

Install the package via pip in a controlled environment and verify CUDA, cuDNN, and driver alignment. Run a quick import-and-forward pass test on a small tensor to ensure basic functionality.

Warning: Pin PyTorch and CUDA Versions to Avoid ABI Mismatches

Tip: Use a Fresh Virtual Environment to Isolate Dependencies

Step 3: Wrap Existing Models with Artificial Dendrites

Insert dendritic modules around stable components, keeping core topology intact. Favor seams that already tolerate variation, such as encoder blocks or attention layers.

Insight: Start With Modular Layers (e.g., encoder blocks) to Localize Differences

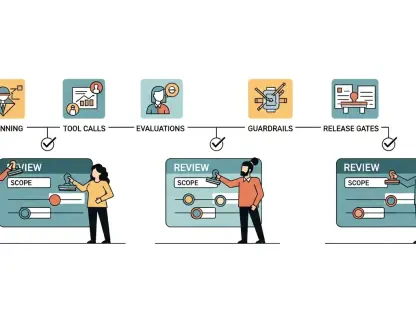

Tip: Keep a Togglable Flag to Switch Dendrites On/Off for A/B Testing

Step 4: Train With Dendritic Components Enabled

Begin with baseline hyperparameters to match training budgets. If loss curves diverge, sweep learning rate and weight decay before changing optimizers or schedules.

Tip: Begin With Baseline Hyperparameters; Then Sweep LR and Weight Decay

Watchout: Monitor Activation Scales to Preempt Gradient Pathologies

Step 5: Benchmark Accuracy, Compute, and Carbon Fairly

Match data, seeds, epochs, and augmentations between baseline and dendritic runs. Record FLOPs, wall-clock time, GPU utilization, and kWh to assess real efficiency.

Guidance: Use Standardized Suites (Model Zoo tasks, TorchBench) for Comparability

Tip: Track FLOPs, Wall-Clock, and kWh Using Consistent Tooling (e.g., CodeCarbon)

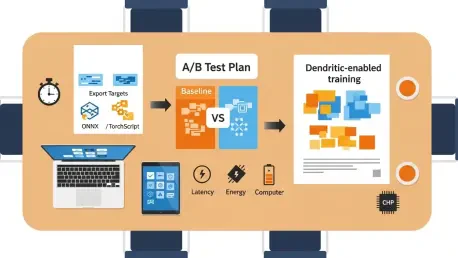

Step 6: Target Edge and Production Constraints

Quantize and prune with dendrites in place and validate export paths early. Confirm that kernels and graph transforms remain intact through ONNX or TorchScript.

Tip: Validate ONNX/TorchScript Export Paths Early

Insight: Co-Design Batch Size and Memory Footprint to Capture Parameter Savings

Step 7: Share Findings and Iterate With the Community

File issues, submit examples, and propose benchmarks that stress real bottlenecks. Reproducible configs help others replicate results and spot failure modes.

Tip: Submit Reproducible Configs to Encourage Third-Party Replication

Insight: Align With PyTorch Governance Norms to Speed Review and Adoption

Quick Recap of What to Do Next

Set explicit targets, fix baselines, and define success criteria across accuracy, size, speed, and energy. Install the extension in isolation, wrap models with a reversible switch, and train conservatively before tuning. Benchmark with matched budgets, capture compute and carbon, ready the export path, and share results for scrutiny.

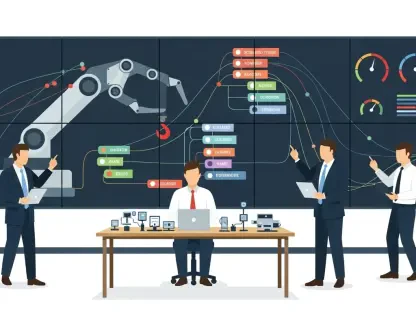

Beyond One Tool: Implications for ML Efficiency and Adoption

Bio-inspired drop-ins compete on practicality rather than novelty, which raises the bar on evidence. If strong results survive standardized audits, the demand for smaller, cheaper, greener models will drive adoption. Open questions remain about generality across tasks, compatibility with quantization and distillation, and resilience under distribution shifts.

What deserves attention next is not just accuracy curves but complete cost stacks: FLOPs, runtime, kWh, and dollars per unit quality. Clear reporting norms and third-party audits will clarify whether dendritic components become a staple or a niche tool.

Closing Thoughts and Next Steps

This guide outlined how to pilot dendritic optimization in PyTorch, framed the claims as unverified, and mapped a path to rigorous, budget-matched tests. The practical next move was to select one production-relevant model, run the A/B study with locked seeds and costs, and document end-to-end results. With those numbers in hand, scaling decisions became easier, vendor narratives were grounded, and the roadmap leaned on evidence rather than promise.