Anand Naidu has shipped products through crunch-time launches and long, quiet refactors, and he’s learned that scalability is not a late-stage patch—it’s a product decision made on day zero. In this conversation, he digs into architecture choices that prevent 13.5 hours a week from vanishing into technical debt, ties the true cost of downtime—ranging from $300,000 to over $5 million per hour—to concrete SLOs, and explains how modular architectures and early database design set teams up for growth. He walks through failure-mode simulations, pragmatic pathways from MVP to scale, and the guardrails that keep monoliths evolvable. Along the way, he shares how 0.1-second speed gains can lift conversions by 8.4% and how to sidestep the third‑party dependency trap that has disrupted 45% of organizations. The throughline is simple: treat flexibility, resilience, and capacity as features with roadmaps, budgets, and measurable payoffs.

Teams often trade speed to market for flexibility and resilience. When launch timelines compress, what early architecture decisions do you refuse to compromise on, and why? Can you share an anecdote where holding that line saved months later? What metrics help you justify the delay?

I don’t compromise on modular boundaries, database schema clarity, or basic observability. Those three decisions are like the foundation, plumbing, and circuit panel: cheap to get right early, punishing to retrofit. Once, under an aggressive launch date, we refused to flatten modules into a single deployable. We shipped with crisp interfaces and a schema designed to evolve, and that stubbornness spared us months of rework when usage compounded and one path needed to scale independently. The metrics that keep me honest are the 13.5 hours developers typically lose to technical debt each week, the 10x load scenario we simulate before launch, and the potential downtime cost of $300,000 to over $5 million per hour, which reframes a “small delay” as real risk reduction.

Developers spending 13.5 hours weekly on technical debt signals hidden costs. How do you quantify this burden upfront, translate it into roadmap trade-offs, and secure buy-in to pay it down? Walk us through a step-by-step approach that turned the tide on debt in production.

I price the 13.5 hours directly into burn: multiply it by team size and apply your blended hourly rate; suddenly “we’ll handle it later” becomes a visible budget line. Step one, instrument the hotspots—slow endpoints, failing tests, flaky deploys—and categorize debt by impact on reliability, performance, and delivery speed. Step two, make a debt quota in every sprint, not a someday project: for example, one module, one index, one flaky test eliminated each cycle. Step three, tie wins to outcomes your leadership feels—fewer pages, a 0.1-second response improvement that foreshadows conversion lifts, and avoided downtime costs that could run to hundreds of thousands per incident. We did this in production by carving a dedicated track, publishing weekly “hours reclaimed,” and mapping it to fewer rollbacks; within a few cycles the team felt the relief, and the roadmap breathed again.
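
The pricing step described above is simple arithmetic, and a minimal sketch makes it concrete. The function name, the team size of 8, and the blended rate of 100 per hour are hypothetical illustrations; only the 13.5 hours per week comes from the conversation.

```python
def weekly_debt_cost(team_size: int, blended_hourly_rate: float,
                     hours_lost_per_dev: float = 13.5) -> float:
    """Weekly cost of technical debt: hours lost x developers x hourly rate."""
    return hours_lost_per_dev * team_size * blended_hourly_rate


# Hypothetical inputs: 8 developers at a blended rate of 100 per hour.
weekly = weekly_debt_cost(team_size=8, blended_hourly_rate=100.0)
annual = weekly * 52  # assumes the loss stays constant across all 52 weeks
print(f"weekly: {weekly:,.0f}  annual: {annual:,.0f}")
```

Under those assumed inputs the weekly figure is 10,800, which is the kind of visible budget line the answer refers to.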

An hour of downtime can cost mid-size firms hundreds of thousands and large enterprises millions. How do you connect these stakes to concrete SLOs, capacity plans, and incident budgets? Share a real example of evolving SLAs as traffic scaled 10x.

I start with the dollar stakes: $300,000 for mid-sized firms and over $5 million for large ones per hour of downtime. That anchors SLOs in business language. Next, I define SLO error budgets that assume 10x traffic: if we expect an occasional burst, we set headroom and concurrency budgets to absorb it without paging chaos. In one system that grew 10x, we first set conservative SLAs and quickly hit them, then raised our bar as we modularized: a chatty module got isolated, and our SLO for that path tightened while others stayed looser. The incident budget funded rehearsals—failure drills and capacity tests—because we treated the drills as insurance against those seven-figure hours.
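
One way to tie an SLO to those dollar figures is to convert the availability target into an error budget and price it. The sketch below assumes a 30-day window and a 99.9% target, both hypothetical; the $300,000 per hour figure is the mid-size downtime cost cited above.

```python
def error_budget_minutes(slo_target: float, window_days: int = 30) -> float:
    """Minutes of allowed unavailability in the window for an availability SLO."""
    total_minutes = window_days * 24 * 60
    return total_minutes * (1.0 - slo_target)


def budget_exposure(slo_target: float, cost_per_hour: float,
                    window_days: int = 30) -> float:
    """Cost if the entire error budget were spent as full downtime."""
    hours = error_budget_minutes(slo_target, window_days) / 60
    return hours * cost_per_hour


# Hypothetical target of 99.9% priced at the cited $300,000 per hour.
minutes = error_budget_minutes(0.999)
exposure = budget_exposure(0.999, 300_000)
print(f"{minutes:.1f} budget minutes, {exposure:,.0f} exposure")
```

A 99.9% target over 30 days leaves roughly 43 minutes of budget, so tightening or loosening an SLO per path translates directly into a dollar exposure that leadership can weigh.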

Many apps run fine early, then stumble as usage compounds. What early load-testing and failure-mode simulations actually predict real-world growth? Describe the tooling, data you capture, thresholds you set, and how you translate findings into architecture changes.

Synthetic load tests only help if the scenarios mirror reality and include failure. I run ramp tests to 10x, then soak tests at that level, plus failure-mode drills: kill a dependency, inject latency, throttle a third-party. Data I capture includes p95 and p99 latency, error rates by module, saturation signals, and queue depths. My thresholds are simple: if p95 doubles under 10x, or if a third-party’s variability spills past our SLOs, we redesign—introducing queues, caches, or breaking out a module. The result is a punch list that reads like an architecture roadmap, not a test report.
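
The "p95 doubles under 10x" threshold can be checked mechanically once latency samples are collected. This is a minimal sketch using a nearest-rank percentile; the function names and sample data are hypothetical, and a real load-testing tool would normally supply the percentiles.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of latency samples."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def needs_redesign(baseline_ms, load_10x_ms, max_ratio=2.0):
    """True when p95 under 10x load is at least max_ratio times the baseline p95."""
    return percentile(load_10x_ms, 95) >= max_ratio * percentile(baseline_ms, 95)


# Hypothetical samples: latencies of 1..100 ms at baseline.
baseline = list(range(1, 101))
degraded = [x * 2 for x in baseline]    # p95 doubles under load
healthy = [x * 1.5 for x in baseline]   # p95 grows by half
print(needs_redesign(baseline, degraded), needs_redesign(baseline, healthy))
```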

With demand for custom apps growing fast, how do you avoid “build fast, replatform later” traps? Describe a pragmatic, staged architecture path from MVP to scale, including milestones for refactoring, re-segmentation of services, and data partitioning.

I accept the need for speed, then stage refactors as first-class milestones. Stage one: ship an MVP with modular seams, even inside a single deployable. Stage two: harden the database with the indexes you’ll need tomorrow, not after it hurts. Stage three: split high-churn or high-variability modules first, not everything at once. Stage four: introduce data partitioning criteria, by tenant or region, before tables swell. The custom app market is set to grow at a 9.4% CAGR, so I assume traffic and feature churn will rise; this staged path keeps us nimble without a risky replatform.

Sixty-one percent of organizations moved away from tightly coupled monoliths. When does a monolith still make sense, and what guardrails keep it evolvable? Outline a decision tree for modular boundaries, deployment units, and when to split.

A monolith makes sense when your team is small, your domain is still forming, and time-to-market trumps distribution complexity. Guardrails matter: create strict module boundaries in code, independent test suites, and separate data ownership even inside one database. My decision tree goes like this: if a module changes faster than the rest, or its load pattern differs materially, or its failure impact is outsized, it’s a split candidate. If not, keep it inside the monolith but quarantine it with clear interfaces. That way you join the 61% trend only when the signals are strong, not because of fashion.
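
The decision tree described above reduces to three signals per module. The sketch below encodes it directly; the class, field names, and example modules are hypothetical, and how each signal gets measured is left to the team.

```python
from dataclasses import dataclass


@dataclass
class ModuleSignals:
    name: str
    changes_faster_than_rest: bool
    load_pattern_differs: bool
    failure_impact_outsized: bool


def placement(module: ModuleSignals) -> str:
    """Any one strong signal makes the module a split candidate."""
    if (module.changes_faster_than_rest
            or module.load_pattern_differs
            or module.failure_impact_outsized):
        return "split candidate"
    return "keep in monolith behind a clear interface"


# Hypothetical modules for illustration.
search = ModuleSignals("search", False, True, False)
settings = ModuleSignals("settings", False, False, False)
print(placement(search))
print(placement(settings))
```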

Modular architecture promises independent scaling. How do you define module contracts, versioning rules, and observability to prevent hidden coupling? Share a case where module isolation cut latency or costs, with the specific performance metrics you tracked.

I write contracts as small, stable interfaces—explicit inputs, outputs, and error codes—then version them deliberately with deprecation windows. Observability is per module: logs, traces, and dashboards that make any coupling obvious. In one system, we isolated a chatty module with a cache and a queue; p95 latency stabilized under peak because the hot path no longer waited on slow work. The metrics we tracked were p95 response time on the boundary, error budget burn, and request fan-out; the moment fan-out dropped, costs and tail latency came down in tandem.
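
A contract of the kind described above (explicit inputs, outputs, error codes, and a deprecation window) might look like the following sketch. The pricing module, type names, and sunset date are all hypothetical; the point is only that each element of the contract is a named, versioned type.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class ErrorCode(Enum):
    INVALID_INPUT = "invalid_input"
    NOT_FOUND = "not_found"
    UNAVAILABLE = "unavailable"


@dataclass(frozen=True)
class PriceRequest:        # explicit input
    sku: str
    quantity: int


@dataclass(frozen=True)
class PriceResponse:       # explicit output: a value or an error code
    total_cents: Optional[int] = None
    error: Optional[ErrorCode] = None


@dataclass(frozen=True)
class ContractVersion:
    number: int
    sunset: Optional[date] = None  # None means the version is not deprecated

    def accepts(self, today: date) -> bool:
        """A deprecated version is still served until its sunset date passes."""
        return self.sunset is None or today <= self.sunset


V1 = ContractVersion(1, sunset=date(2030, 1, 31))  # hypothetical deprecation window
V2 = ContractVersion(2)
```

Frozen dataclasses keep the contract types immutable, so a caller cannot quietly mutate a request or response after it crosses the boundary.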

Early database choices shape long-term throughput and agility. How do you design schemas and indexes for today’s needs without boxing in tomorrow’s? Detail a migration-safe playbook: data modeling, indexing strategy, sharding criteria, and rollback plans.

I model data with change in mind: use stable keys, avoid overloading columns, and keep write paths simple. For indexes, I add the obvious ones upfront and plan secondary indexes for read-heavy patterns we know are coming, instead of waiting for pain under live traffic. Sharding criteria come from access patterns (tenant, geography, or chronological slices) so we can grow capacity without a messy rewrite. The rollback plan is non-negotiable: reversible migrations, shadow reads to validate new shapes, and dual writes when crossing boundaries so we can retreat gracefully if anomalies appear.

Teams often defer indexing and pay later under live traffic. What’s your step-by-step process for baseline indexing, query budgets, and performance regression gates in CI/CD? Include specific thresholds, dashboards, and alerting you rely on.

Step one: baseline indexes for primary lookups and joins we know we’ll use; ship them with the MVP. Step two: define query budgets (target p95 and p99 for read and write paths) and enforce them in CI/CD with regression tests. Step three: wire dashboards per module showing query time percentiles and slow query counts, and alert when budgets drift. My thresholds are practical: if p95 slips past its budget after a merge, the gate holds the release. It’s cheaper to pause a build than to bleed under production load, where an hour of downtime can cost $300,000 or more.
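
A regression gate of this kind can be a small script that compares measured percentiles against declared budgets. The sketch below is an assumption about how such a gate might look: the query path names and millisecond budgets are hypothetical, and the measurements would come from whatever benchmark step the pipeline already runs.

```python
# Hypothetical budgets in milliseconds, keyed by query path.
QUERY_BUDGETS_MS = {
    "orders.read": {"p95": 50.0, "p99": 120.0},
    "orders.write": {"p95": 80.0, "p99": 200.0},
}


def budget_violations(measured, budgets=QUERY_BUDGETS_MS):
    """List every budget that is exceeded or has no measurement."""
    violations = []
    for path, limits in budgets.items():
        for pct, limit in limits.items():
            value = measured.get(path, {}).get(pct)
            if value is None:
                violations.append(f"{path} {pct}: no measurement")
            elif value > limit:
                violations.append(f"{path} {pct}: {value}ms > {limit}ms")
    return violations


def gate(measured) -> int:
    """Return a process exit code: 0 passes the build, 1 holds the release."""
    problems = budget_violations(measured)
    for problem in problems:
        print("BUDGET EXCEEDED:", problem)
    return 1 if problems else 0
```

In a pipeline, the script would end with `sys.exit(gate(measured))` so that a non-zero exit code blocks the merge. Treating a missing measurement as a violation is a deliberate choice here: a path that silently drops out of the benchmark should not pass by default.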

Performance gains as small as 0.1 seconds can lift conversions. Which early design moves—caching strategy, parallel requests, asset optimization—deliver the highest ROI? Share a before-and-after story with page-speed metrics, infra costs, and developer effort.

I start with caching at the edges and parallelizing calls that don’t depend on each other. Then I squeeze assets—optimize images and cut unnecessary payloads. We once focused on a hot landing path: with parallel requests and a cache in front of a read-heavy module, we trimmed 0.1 seconds, which is meaningful because a 0.1-second improvement can drive an 8.4% retail conversion lift. Infra costs held steady because we reduced tail work; developer effort was a sprint, and the gains were immediate and felt—pages snapped instead of dragging.
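
Parallelizing independent calls is the easiest of these moves to illustrate. In the sketch below, `fetch` is a hypothetical stand-in for two backend calls that do not depend on each other, with `asyncio.sleep` simulating 50 ms of latency each; `asyncio.gather` runs them concurrently and returns results in argument order.

```python
import asyncio
import time


async def fetch(name: str, delay_s: float) -> str:
    """Stand-in for an independent backend call (hypothetical)."""
    await asyncio.sleep(delay_s)
    return name


async def load_sequential() -> list:
    profile = await fetch("profile", 0.05)
    offers = await fetch("offers", 0.05)
    return [profile, offers]


async def load_parallel() -> list:
    # Both calls start immediately; total time is roughly the slower of the two.
    return list(await asyncio.gather(fetch("profile", 0.05),
                                     fetch("offers", 0.05)))


def timed(coro_fn):
    """Run a coroutine function to completion and return (result, seconds)."""
    start = time.perf_counter()
    result = asyncio.run(coro_fn())
    return result, time.perf_counter() - start


seq_result, seq_s = timed(load_sequential)
par_result, par_s = timed(load_parallel)
print(f"sequential {seq_s:.3f}s, parallel {par_s:.3f}s")
```

The sequential version waits for the sum of the two latencies, while the parallel version waits for about the longer one. This only applies when neither call needs the other's result.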

Third-party services can impose rate limits, price hikes, and variable latency. How do you architect integration boundaries, fallbacks, and egress controls to avoid lock-in? Provide a war story, the mitigations you used, and the measurable resilience outcomes.

I wrap every third-party service in a thin module that owns retries, timeouts, and graceful degradation. I also budget for an exit: feature flags to cut traffic, circuit breakers to contain blast radius, and egress controls to curb runaway costs. We once relied on a vendor that introduced throttles and latency spikes; because it sat behind a module, we added a cache and a queue, reduced synchronous calls, and toggled non-critical features off when limits approached. The outcome was calm dashboards during turbulence and no downtime, a far cry from the 45% of organizations that have felt third‑party disruptions.
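
A thin wrapper of this kind might be sketched as follows. Everything here is hypothetical: `VendorClient`, its parameters, and the thresholds are illustrative, and the sketch assumes the injected `call` enforces its own timeout by raising an exception. Retries are immediate, with no backoff, to keep the example short.

```python
import time


class VendorClient:
    """Owns retries, a simple circuit breaker, and a fallback for one vendor."""

    def __init__(self, call, fallback, max_retries=2, failure_threshold=3,
                 reset_after_s=30.0, clock=time.monotonic):
        self.call = call              # assumed to raise on timeout or error
        self.fallback = fallback      # degraded response, e.g. cached data
        self.max_retries = max_retries
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def _breaker_open(self) -> bool:
        if self.opened_at is None:
            return False
        if self.clock() - self.opened_at >= self.reset_after_s:
            # Cool-down elapsed: close the breaker and let traffic through again.
            self.opened_at = None
            self.failures = 0
            return False
        return True

    def request(self, payload):
        if self._breaker_open():
            return self.fallback(payload)   # do not touch the vendor at all
        for _ in range(self.max_retries + 1):
            try:
                result = self.call(payload)
            except Exception:
                continue                    # retry immediately
            self.failures = 0
            return result
        # Every attempt failed: count it and open the breaker at the threshold.
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()
        return self.fallback(payload)
```

Because the rest of the application only talks to the wrapper, swapping the vendor or changing the fallback touches one module. Injecting the clock also makes the breaker's timing testable without sleeping.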

Many failures trace back to unchallenged early assumptions. What pre-mortem questions do you ask—10x load, feature churn, vendor exits—and how do the answers change your architecture? Offer a checklist and one example where it averted a costly rework.

My pre-mortem is blunt: What breaks at 10x? What feature forces a data model change? What if a vendor exits or shifts pricing? The checklist covers capacity headroom, schema evolution paths, third-party kill switches, and observability gaps. On one project, asking the 10x question surfaced a hotspot we modularized early; when growth came, we scaled that slice without touching the rest. That single decision spared us the months of frantic rewrites I’ve seen elsewhere.

When migrating from an experimental stack to production, schema incompatibilities can bite. How do you de-risk platform changes with shadow traffic, dual writes, and reversible migrations? Walk through the exact steps, tooling, and success criteria you use.

I route shadow traffic to the new path first, comparing responses silently to catch mismatches. Then I introduce dual writes so both schemas stay in sync while we validate integrity and performance. Migrations are reversible by design: forward and backward scripts, checkpoints, and feature flags to control exposure. Success criteria are boring by intent: no data drift over a soak period at 10x test load, p95 parity or better, and clean dashboards. Only then do we cut over; if anything smells off, we roll back in seconds, not days.
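
The shadow-traffic step can be sketched as a comparison harness: callers are always served by the old path, while the new path runs silently and any difference is recorded. The function and the two example paths below are hypothetical, and a production version would run the shadow call off the request's critical path.

```python
def shadow_compare(requests, old_path, new_path):
    """Serve from old_path; run new_path silently and record mismatches."""
    responses, mismatches = [], []
    for req in requests:
        primary = old_path(req)
        responses.append(primary)   # callers only ever see the old path
        try:
            candidate = new_path(req)
        except Exception as exc:
            # A crash in the new path is a mismatch, never a user-facing error.
            mismatches.append((req, primary, repr(exc)))
            continue
        if candidate != primary:
            mismatches.append((req, primary, candidate))
    return responses, mismatches


# Hypothetical paths: the new one disagrees on a single input.
def old_path(x):
    return x * 2


def new_path(x):
    return 7 if x == 3 else x * 2


responses, mismatches = shadow_compare([1, 2, 3], old_path, new_path)
print(responses, mismatches)
```

An empty mismatch list over a soak period corresponds to the "no data drift" success criterion described above.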

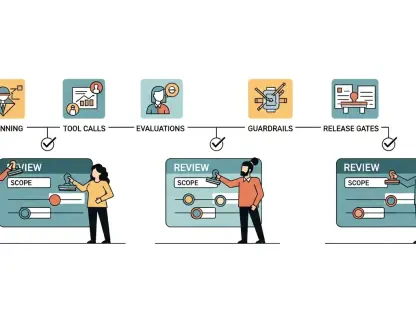

As teams scale, how do you align product, design, and engineering around scalability as a product decision, not a post-launch chore? Describe rituals, artifacts, and incentives that keep long-term capacity and reliability visible in sprint planning.

We put scalability on the roadmap with names, dates, and budgets, not as a footnote. Rituals include pre-mortems, load-test reviews, and reliability demos where we walk through failure drills. Artifacts are modular maps, SLO dashboards per path, and capacity plans tied to the 10x scenario. Incentives matter: we celebrate wins like a 0.1-second speed-up or a clean drill as real product outcomes, the same way we’d toast a feature launch. When everyone sees that uptime avoids $300,000 to over $5 million an hour in potential losses, priorities align quickly.

What is your forecast for web application scalability?

I see teams internalizing that scalability is a feature set—flexibility, resilience, and capacity—designed from day one, not a patch. With demand for custom apps rising at a 9.4% clip, the winners will pair modular boundaries with thoughtful data design and a ruthless focus on performance where a 0.1-second edge can yield an 8.4% lift. Third‑party turbulence isn’t going away—45% have felt it—so wrappers, fallbacks, and exit paths will be standard kit. And the old divide between launch speed and scalability will fade as more teams accept that the real cost of ignoring it isn’t abstract—it can be $300,000 to over $5 million an hour, which concentrates the mind and shapes better architecture choices.