From Push-to-Prod to Proof: Why Modern Teams Redesign the Path to Production

Release nerves have been eating roadmaps for years, and practitioners from product shops to platform teams keep reporting the same pattern: pipelines that look fast on paper collapse under manual reviews, fragile tests, and last-minute heroics that turn every deploy into a gamble. The consensus that emerged across interviews and postmortems is blunt—speed without trust invites chaos, while trust without flow stalls progress; the real target is predictable outcomes verified by evidence at each step.

Voices from high-change environments describe a shift from approval checklists to proof-driven automation. Instead of betting on a single “go/no-go” gate, they distribute checks across the path: small commits, layered tests, and production-grade verification. Some leaders note that this redesign does not reduce scrutiny; it simply moves scrutiny earlier and extends it past release, replacing ceremony with continuous, observable proof.

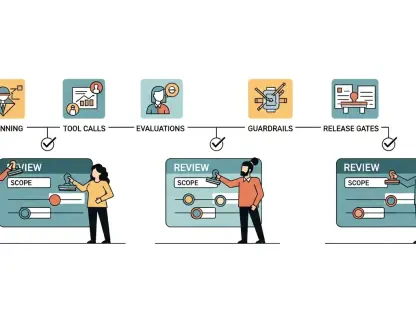

Engineering a Pipeline that Earns Trust at Every Step

Practitioners align on one principle: a pipeline is a product, not a script. Teams that treat it this way codify rules, measure reliability, and evolve it intentionally. However, their methods vary based on domain. Regulated environments lean on immutable artifacts and audit-ready logs, while consumer products emphasize progressive exposure and fast rollback.

Disagreements surface over how strict gates should be. Operations-leaning voices push for hard stops on any flake to protect stability, whereas product-leaning voices argue for tight but resilient gates that auto-retry known flakes and isolate noisy checks. Most land on a pragmatic middle ground—preserve hard stops for correctness while engineering the suite so healthy builds move quickly.

Shrink the Batch, Lower the Stakes: Trunk-Based Flow that Favors Steady, Verifiable Change

Engineering managers repeatedly endorse trunk-based development for one reason: small deltas quiet drama. Short-lived branches and bite-sized pull requests reduce cognitive load, make code review sharper, and keep merge conflicts trivial. This cadence produces a dependable rhythm where each step feels routine rather than risky.

Skeptics worry that frequent merges amplify exposure. Delivery veterans counter that exposure correlates with unknowns, not frequency; tiny changes carry fewer unknowns and yield clearer signals when something breaks. The shared tip: keep pull requests narrow, enforce pre-merge checks, and align on the rule that main stays releasable.

Tests that Accelerate, Not Obstruct: Layered Gates that Mirror Real Use and Stop Bad Builds Cold

Testing leaders agree that speed comes from design, not from skipping checks. They layer tests by cost and intent—fast, deterministic unit tests for logic; integration tests for contract confidence; and a lean set of end-to-end paths for user-critical journeys. The suite’s purpose is to accelerate safe flow, so slow or flaky tests get refactored or retired.

Some argue for heavy end-to-end coverage to model reality; others warn that such breadth collapses under maintenance. The roundup insight: use contract tests to shoulder cross-service truth, keep E2E small but meaningful, and treat any failure as a stop unless the flake is automatically quarantined and tracked to closure.
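The stop-unless-quarantined rule can be sketched as a small decision function. This is a minimal illustration, not any particular CI system's API: `CheckResult`, `gate_decision`, and the test names are hypothetical, and the quarantine list is assumed to be maintained elsewhere.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set, Tuple


@dataclass(frozen=True)
class CheckResult:
    """One test outcome reported by the pipeline (hypothetical shape)."""
    name: str
    passed: bool


def gate_decision(
    results: Iterable[CheckResult], quarantined: Set[str]
) -> Tuple[bool, List[str], List[str]]:
    """Return (allow, blocking, to_track).

    Any failure blocks the build unless the test is on the quarantine list.
    Quarantined failures do not block, but they are returned so they can be
    tracked to closure rather than silently ignored.
    """
    blocking: List[str] = []
    to_track: List[str] = []
    for result in results:
        if result.passed:
            continue
        if result.name in quarantined:
            to_track.append(result.name)
        else:
            blocking.append(result.name)
    return (not blocking, blocking, to_track)


# Example run: one real failure and one quarantined flake.
results = [
    CheckResult("unit::pricing", True),
    CheckResult("e2e::checkout", False),
    CheckResult("e2e::search_flaky", False),
]
allow, blocking, to_track = gate_decision(results, {"e2e::search_flaky"})
```

Returning the quarantined failures separately keeps the gate strict while leaving a visible backlog of flakes to fix.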

Turn Real Traffic into Evidence: Blue-Green, Canaries, and Feature Flags that Control Exposure

Release engineers report that progressive delivery translates uncertainty into measurable, reversible steps. Blue-green swaps reduce cutover risk by validating the new environment under production conditions, then flipping traffic once it proves healthy. Canary releases expose a small share of traffic to the new version and check it against tight health thresholds before scaling out.
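A canary rollout of this kind might be sketched as a loop over traffic percentages that halts at the first unhealthy step. The step sizes, the error-rate threshold, and the `error_rate_at` callback are all assumptions for illustration; in practice the callback would stand in for a query against a metrics system.

```python
from typing import Callable, Sequence, Tuple


def run_canary(
    steps: Sequence[int],
    error_rate_at: Callable[[int], float],
    max_error_rate: float,
) -> Tuple[bool, int]:
    """Walk the traffic percentages in order.

    Returns (promoted, last_healthy_percent). The rollout stops at the first
    step whose observed error rate exceeds the threshold, so the caller knows
    which exposure level was last known to be healthy.
    """
    last_healthy = 0
    for percent in steps:
        # error_rate_at is a hypothetical hook: shift traffic, wait, measure.
        if error_rate_at(percent) > max_error_rate:
            return False, last_healthy
        last_healthy = percent
    return True, last_healthy


# Example with canned observations instead of live metrics.
observed = {1: 0.001, 10: 0.002, 50: 0.030, 100: 0.001}
promoted, last_healthy = run_canary([1, 10, 50, 100], observed.__getitem__, 0.01)
```

Here the 50% step breaches the 1% error threshold, so the rollout is not promoted and 10% is reported as the last healthy exposure.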

Feature flag advocates highlight a different edge: decoupling deployment from release. By shipping code dark, teams can enable features for slices of users, markets, or sessions, and switch off a misbehaving feature instantly without a rollback. Across sources, the pattern is complementary: use blue-green for infrastructure-grade confidence, canaries for systemic health, and flags for behavior-level control.
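One common way to enable a feature for a slice of users is deterministic bucketing, sketched below. The flag name, the `bucket` and `is_enabled` helpers, and the kill-switch parameter are hypothetical; a real flag service would add targeting rules and persistence.

```python
import hashlib


def bucket(flag: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def is_enabled(
    flag: str, user_id: str, rollout_percent: int, kill_switch: bool = False
) -> bool:
    """Decide whether a user sees the feature.

    The kill switch wins over everything, which is what lets a team disable
    behavior without redeploying. Otherwise the user is enabled when their
    bucket falls below the rollout percentage.
    """
    if kill_switch:
        return False
    return bucket(flag, user_id) < rollout_percent


# Example: the same user gets the same answer on every call.
first = is_enabled("new_checkout", "user-42", rollout_percent=25)
second = is_enabled("new_checkout", "user-42", rollout_percent=25)
```

Because the bucket depends only on the flag and user, raising the percentage only adds users; nobody who already has the feature loses it mid-rollout.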

After Release Is Still the Pipeline: Observe, Alert Well, and Roll Back without Drama

Operators caution that “done at deploy” is a trap. High-performing teams extend the pipeline into production with dashboards, SLO-driven alerts, and runbooks wired into the delivery flow. Post-deploy checks are codified gates, not ad hoc glances, and promotion depends on live indicators meeting predeclared thresholds.
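A codified post-deploy gate could look like the sketch below, which compares live indicators against thresholds declared ahead of time. The metric names and limits in `THRESHOLDS` are illustrative assumptions, not recommended values, and the metrics dictionary stands in for whatever the monitoring system reports.

```python
from typing import Dict, List, Tuple

# Hypothetical predeclared thresholds: (kind, limit) per indicator.
THRESHOLDS: Dict[str, Tuple[str, float]] = {
    "error_rate": ("max", 0.01),
    "p95_latency_ms": ("max", 400.0),
    "availability": ("min", 0.999),
}


def evaluate_promotion(
    metrics: Dict[str, float], thresholds: Dict[str, Tuple[str, float]]
) -> List[str]:
    """Return a list of violations; an empty list means promotion may proceed.

    A missing indicator counts as a violation, so the gate fails closed
    rather than promoting on incomplete evidence.
    """
    violations: List[str] = []
    for name, (kind, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            violations.append(f"{name}: missing")
        elif kind == "max" and value > limit:
            violations.append(f"{name}: {value} above max {limit}")
        elif kind == "min" and value < limit:
            violations.append(f"{name}: {value} below min {limit}")
    return violations


# Example: a healthy release produces no violations.
healthy = {"error_rate": 0.002, "p95_latency_ms": 350.0, "availability": 0.9995}
problems = evaluate_promotion(healthy, THRESHOLDS)
```

Keeping the thresholds in data rather than in someone's head is what turns an ad hoc glance into a gate that behaves the same on every release.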

On rollback, opinions converge tightly: make reversion fast and boring. Blue-green provides instant switchbacks, flags disable problematic behavior, and versioned configs remove surprises. The recurring tip is to practice rollbacks the way disaster recovery used to be drilled—muscle memory beats theory when incidents hit.

What to Do Next: Concrete Patterns, Guardrails, and Habits that Make Releases Boring

Across case studies, next steps cluster into three moves. First, adopt trunk-based habits with small, reviewable changes and a releasable main. Second, enforce layered, fast tests as mandatory gates, measuring flake rates and paying down the worst offenders weekly. Third, add progressive delivery—start with flags, then add canaries or blue-green as architecture allows.
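Measuring flake rates requires a definition. The sketch below assumes one reasonable choice: a test is flaky on a commit if it both passed and failed on that same commit, for example across retries. The run-record shape and the `flake_rates` and `worst_offenders` helpers are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Run = Tuple[str, str, bool]  # (test_name, commit_sha, passed)


def flake_rates(runs: Iterable[Run]) -> Dict[str, float]:
    """Share of commits on which each test showed both a pass and a fail."""
    outcomes = defaultdict(set)
    for test, sha, passed in runs:
        outcomes[(test, sha)].add(passed)

    commits_seen: Dict[str, int] = defaultdict(int)
    commits_flaky: Dict[str, int] = defaultdict(int)
    for (test, _sha), seen in outcomes.items():
        commits_seen[test] += 1
        if len(seen) == 2:  # both True and False observed on one commit
            commits_flaky[test] += 1
    return {test: commits_flaky[test] / commits_seen[test] for test in commits_seen}


def worst_offenders(runs: Iterable[Run], limit: int = 3) -> List[Tuple[str, float]]:
    """Flaky tests ranked by rate, highest first, ties broken by name."""
    flaky = [(test, rate) for test, rate in flake_rates(runs).items() if rate > 0]
    return sorted(flaky, key=lambda item: (-item[1], item[0]))[:limit]


# Example: test "a" flips on commit c1; test "b" fails consistently on c2.
history = [
    ("a", "c1", True), ("a", "c1", False), ("a", "c2", True),
    ("b", "c1", True), ("b", "c2", False),
]
weekly_targets = worst_offenders(history)
```

Under this definition a test that fails consistently on a commit is treated as a genuine failure, not a flake, so it never appears on the pay-down list.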

Guardrails complete the picture. Teams standardize build metadata, artifact immutability, and environment parity; they codify promotion criteria, alert thresholds, and playbooks for roll forward or back. The standout advice is cultural: forbid “just this once” exceptions and routinize the path so every change walks the same corridor to production.
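Artifact immutability is often enforced by recording a content digest at build time and refusing to promote anything that does not match it. The following is a minimal sketch under that assumption; `BuildRecord`, its fields, and the helper names are hypothetical, and real pipelines typically lean on their artifact registry for this.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildRecord:
    """Build metadata captured once, when the artifact is produced."""
    commit: str
    digest: str


def artifact_digest(data: bytes) -> str:
    """Content-addressed identifier for an artifact's bytes."""
    return "sha256:" + hashlib.sha256(data).hexdigest()


def record_build(commit: str, artifact: bytes) -> BuildRecord:
    """Create the metadata record at build time."""
    return BuildRecord(commit=commit, digest=artifact_digest(artifact))


def can_promote(record: BuildRecord, candidate: bytes) -> bool:
    """Allow promotion only if the candidate is byte-identical to the build."""
    return artifact_digest(candidate) == record.digest


# Example: the same bytes promote; a rebuilt or altered artifact does not.
record = record_build("abc123", b"release-bundle-v1")
same_ok = can_promote(record, b"release-bundle-v1")
changed_ok = can_promote(record, b"release-bundle-v1-patched")
```

Checking the digest at each promotion step means every environment runs exactly what was tested, which is the practical content of environment parity for the artifact itself.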

Routine Beats Heroics: The Lasting Payoff of Predictable Deployment and Where It Leads Next

Leaders describe a measurable payoff once predictability takes root: engineers stop hovering over deploys, changes ship daily instead of weekly, and incident response sharpens because alerts map to real user impact. With risk spread across many small gates, product planning stabilizes and context switching drops.

The roundup closes with practical direction. Teams that succeed invest in tiny batches, strict but fast tests, progressive exposure, and production observability wired into the pipeline. Documented rollback mechanics, SLO-based alerts, and regular game days turn releases into non-events. For deeper study, sources point to playbooks on trunk-based workflows, guides on test suite design economics, and handbooks for blue-green deployment, canary strategy, and flag governance. Collectively, these steps convert deployment from a cliff-edge decision into a steady accumulation of proof.