Boardrooms are betting that an AI engineer can ship production code reliably, safely, and at scale, and that wager now underpins Cognition AI’s bid to raise a round that could value the company near $25 billion. The catalyst is Devin, an autonomous software developer that doesn’t just autocomplete lines but runs the whole job: it scopes requirements, plans work, writes code, provisions environments, executes tests, and validates outputs before handing artifacts to humans for sign‑off.

Context and Stakes

This shift matters because software delivery has long been constrained by context switching and handoffs. Traditional assistants speed typing; Devin targets throughput and quality by owning the lifecycle inside a contained workspace. Investors are paying for that difference: a valuation move from roughly $10.2 billion to near $25 billion signals belief that autonomy, not assistance, will drive the next enterprise productivity curve.

Moreover, early traction with Microsoft, Dell Technologies, and Cisco Systems suggests the product fits where the stakes are highest. These buyers don’t reward clever demos; they reward repeatability under governance. Framed that way, Devin is a bet on reliability economics: fewer escaped defects, tighter feedback loops, and auditable changes.

How Devin Works

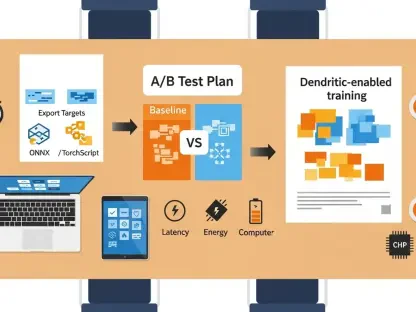

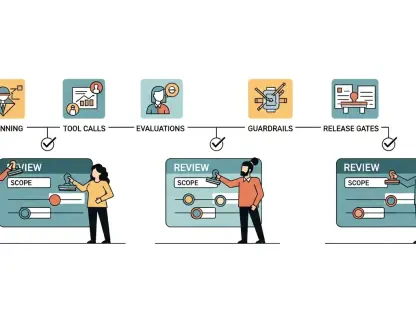

Devin begins by translating an issue or spec into a multi‑step plan, then decomposes tasks into units it can execute and verify. Planning is not a prompt trick; it is a workflow contract that binds code generation to testable checkpoints, allowing rollback and revision without losing state. That discipline reduces rework compared with snippet tools that lack memory of prior decisions.
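To make the idea of a plan bound to testable checkpoints concrete, here is a minimal sketch in Python. It is not Cognition's implementation, which has not been published in this form; the `Step` and `run_plan` names, the snapshot-based rollback, and the retry limit are all assumptions chosen for illustration.

```python
import copy
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    # Hypothetical unit of work: an action plus the check that verifies it.
    name: str
    apply: Callable[[dict], None]   # mutates the workspace state
    check: Callable[[dict], bool]   # testable checkpoint for this step


def run_plan(steps, state, max_attempts=2):
    """Run steps in order; restore the pre-step snapshot when a check fails."""
    log = []
    for step in steps:
        for attempt in range(1, max_attempts + 1):
            snapshot = copy.deepcopy(state)  # checkpoint before the step
            step.apply(state)
            if step.check(state):
                log.append(f"{step.name}: ok (attempt {attempt})")
                break
            # Roll back only this step; work from earlier steps is kept.
            state.clear()
            state.update(snapshot)
            log.append(f"{step.name}: failed, rolled back (attempt {attempt})")
        else:
            log.append(f"{step.name}: gave up")
            break
    return state, log


# Illustrative run: the second step fails once, is rolled back, then succeeds.
calls = {"n": 0}

def add_handler(state):
    calls["n"] += 1
    state["handler"] = "broken" if calls["n"] == 1 else "ok"

steps = [
    Step("add schema", lambda s: s.update(schema="v1"), lambda s: s.get("schema") == "v1"),
    Step("add handler", add_handler, lambda s: s.get("handler") == "ok"),
]
final_state, log = run_plan(steps, {})
for line in log:
    print(line)
```

The point of the sketch is the pairing: every step carries its own check, and a failed check undoes only that step, so earlier verified work survives a revision.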

Its self-contained environment—editor, terminal, package management, and sandboxed services—creates a reproducible build space. Because the toolchain lives with the agent, runs are traceable: commands, diffs, logs, and test results form an audit trail that can be plugged into CI/CD and change management, which eases compliance and incident review.
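One way such an audit trail could be made tamper-evident is to chain each record to the previous one by hash. The sketch below assumes that design; the article's sources do not say how Devin stores its logs, and the record fields (`seq`, `kind`, `payload`, `prev`, `hash`) and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(body):
    # Canonical JSON so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(trail, kind, payload):
    """Append a record whose hash covers its content and the previous hash."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    body = {"seq": len(trail), "kind": kind, "payload": payload, "prev": prev_hash}
    record = {**body, "hash": _digest(body)}
    trail.append(record)
    return record


def verify_trail(trail):
    """Return True only if every record is intact and correctly linked."""
    prev_hash = GENESIS
    for i, record in enumerate(trail):
        body = {k: record[k] for k in ("seq", "kind", "payload", "prev")}
        if record["seq"] != i or record["prev"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


# Illustrative entries for one agent run (all values are made up).
trail = []
append_record(trail, "command", {"argv": ["pytest", "-q"]})
append_record(trail, "diff", {"file": "app/routes.py", "added": 12, "removed": 3})
append_record(trail, "test_result", {"passed": 41, "failed": 0})
print(verify_trail(trail))
```

Because each hash depends on the one before it, editing any earlier entry after the fact causes verification to fail, which is the property a change-management or incident review would care about.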

Security, Testing, and Enterprise Fit

Security is treated as a first‑class constraint, not an afterthought. Dependency hygiene, vulnerability scans, and policy checks run inline, and least‑privilege defaults restrict what Devin can touch. That model aligns with regulated teams that must prove not just that work was done, but that it was done under policy.
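A least-privilege default can be pictured as a deny-by-default gate in front of every action the agent attempts. The following is a sketch under that assumption, not a description of Devin's actual policy engine; the `Policy` fields, the action dictionary shape, and the example allowlists are hypothetical.

```python
import posixpath
import shlex
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Policy:
    writable_globs: tuple    # hypothetical: paths the agent may modify
    allowed_commands: tuple  # hypothetical: executables the agent may run


def check_action(policy, action):
    """Return (allowed, reason). Anything not explicitly allowed is denied."""
    if action.get("type") == "write":
        # Normalise first so "src/../.env" cannot slip past a "src/*" rule.
        path = posixpath.normpath(action["path"])
        if any(fnmatchcase(path, glob) for glob in policy.writable_globs):
            return True, f"write allowed: {path}"
        return False, f"write outside allowlist: {path}"
    if action.get("type") == "run":
        argv = shlex.split(action["command"])
        if argv and argv[0] in policy.allowed_commands:
            return True, f"command allowed: {argv[0]}"
        return False, f"command not in allowlist: {action['command']}"
    return False, f"unknown action type: {action.get('type')}"


policy = Policy(writable_globs=("src/*", "tests/*"), allowed_commands=("pytest", "git"))

print(check_action(policy, {"type": "write", "path": "src/api/routes.py"}))
print(check_action(policy, {"type": "write", "path": "src/../.env"}))
print(check_action(policy, {"type": "run", "command": "pytest -q tests"}))
print(check_action(policy, {"type": "run", "command": "curl https://example.com"}))
```

Returning a reason alongside the decision matters for regulated teams: the denial itself becomes evidence that the work ran under policy.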

Continuous testing is the other pillar. Unit and integration suites are generated and expanded as tasks proceed; failed assertions trigger repair loops. Organizations track reliability through escape rates and flaky‑test suppression, turning agent behavior into metrics that can be governed like any other service.
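As a rough illustration of how such metrics might be computed, the sketch below assumes two common definitions that the article does not spell out: escape rate as the share of all known defects that were found in production, and a flaky test as one that both passes and fails on the same commit. The function names and the sample numbers are invented for the example and are not Devin results.

```python
from collections import defaultdict


def escape_rate(caught_before_release, found_in_production):
    """Share of all known defects that escaped to production (0.0 if none known)."""
    total = caught_before_release + found_in_production
    return found_in_production / total if total else 0.0


def flaky_tests(runs):
    """runs: iterable of (commit, test_name, passed).

    A test is flagged when the same commit has both a pass and a fail for it.
    """
    outcomes = defaultdict(set)
    for commit, test, passed in runs:
        outcomes[(commit, test)].add(bool(passed))
    return sorted({test for (_, test), seen in outcomes.items() if len(seen) == 2})


# Made-up inputs for illustration only.
rate = escape_rate(caught_before_release=47, found_in_production=3)
runs = [
    ("abc123", "test_login", True),
    ("abc123", "test_login", False),   # same commit, different outcome
    ("abc123", "test_checkout", True),
    ("def456", "test_checkout", False),  # different commit, so not flagged
]
print(f"escape rate: {rate:.2%}")
print("flaky:", flaky_tests(runs))
```

Tracked per repository or per agent run, numbers like these are what let a team govern agent output with the same dashboards it already uses for services.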

Performance and Differentiation

What distinguishes Devin from rival offerings by OpenAI or Anthropic is not raw model prowess but the operational envelope: end‑to‑end ownership with built‑in planning, environment control, and supervision hooks. That combination converts probabilistic code generation into a managed process that enterprises can reason about. The upside is higher feature throughput and dependable refactoring; the trade‑off is heavier setup and the need for curated repos, style guides, and access policies.

In practice, Devin has shown strength on greenfield features, bug triage, API integrations, and large‑scale test generation. It is less automatic on sprawling multi‑repo systems where ambiguous specs and long dependency chains amplify error and context loss. Here, human supervisors still set scope and course‑correct, which tempers fully hands‑off narratives.

Market Signal

The prospective $25 billion valuation, if closed, would validate demand for tools framed as digital colleagues rather than keyboards on steroids. It also raises the bar: reliability, testing rigor, and security are now table stakes, not marketing lines. Competitors will match capabilities; the durable moat will be governance, ecosystem fit, and total cost across licenses, infra, and oversight time.

Verdict and What Comes Next

Devin offers a credible pathway from code assistance to production autonomy by fusing planning, contained execution, and measurable QA into a single loop. The approach favors enterprises that value auditability and policy compliance over raw generative flash, and it exposes real constraints in messy, evolving codebases. The next steps are clear: deepen long‑context reasoning, harden policy‑as‑code integration, and tune domain variants for sectors like fintech and health, all while proving unit economics at scale. On balance, Devin reads as a leading indicator of where developer tools are heading, and a worthwhile bet for teams ready to supervise autonomy rather than type faster.